The intersection of XR and Artificial Intelligence is a hot topic, and this is the start of a 17-part Voices of VR podcast series where I’ve interviewed different immersive artists and XR developers over the past four months about how they’re integrating AI into their workflow in creating virtual and augmented reality projects.

I’m starting the series off with Evo Heyning, who has done a deep dive into dozens of generative AI programs and wrote an entire book called Promptcraft: Guidebook for Generative Media in Creative Work that explores our relationship to generative media. I had a chance to talk to her about her book at AWE, where we talked about her journey into generative AI, her work in virtual worlds and the Open Metaverse Interoperability Group, and her various projects that live at the intersection of immersive media and AI.

Here is a list of the 17 episodes in this series on the intersection of XR and AI:

- #1253: XR & AI Series Kickoff with Evo Heyning on a Promptcraft Guide to Generative Media

- #1254: Using AI to Upskill Creative Sovereignty with XR Artist Violeta Ayala

- #1255: Using GPT Chatbots to Bootstrap Social VR Spaces with “Quantum Bar” Demo

- #1256: Using GPT for Conversational Interface for Escape Room VR Game “The Unclaimed Masterpiece”

- #1257: Talk on Preliminary Thoughts on AI: History, Ethics, and Conceptual Frames

- #1258: Using XR & AI to Reclaim and Preserve Indigenous Languages with Michael Running Wolf

- #1259: AWE Panel on the Intersection of AI and the Metaverse

- #1260: Using ChatGPT for XR Education and Persistent Virtual Assistant via AR Headsets

- #1261: Using ChatGPT for Rapid Prototyping of Tilt Five AR Applications with CEO Jeri Ellsworth

- #1262: Using AI in AR Filters & ChatGPT for Business Planning with Educator Don Allen Stevenson III

- #1263: MeetWol AI Agent with Niantic, Overbeast AR App, & Speculative Architecture Essays with Keiichi Matsuda

- #1264: Inworld.ai for Dynamic NPC Characters with Knowledge, Memory, & Robust Narrative Controls

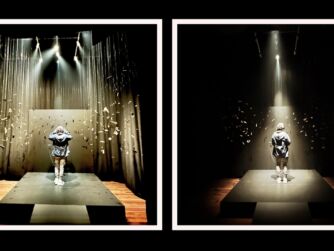

- #1265: Integrating Generative AI into Live Theatre Performance in WebXR with OnBoardXR

- #1266: Converting Dance into Multi-Channel Generative AI Performance at 30FPS with “Kinetic Diffusion”

- #1267: Frontiers of XR & AI Integrations with ONX Studio Technical Directors & Sensorium Co-Founders

- #1268: Survey of Open Metaverse Technologies & AI Workflows by Adrian Biedrzycki

- #1269: Three XR & AI Projects: “Sex, Desire, and Data Show,” “Chomsky vs Chomsky,” and “Future Rites”

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality

Rough Transcript

[00:00:05.412] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR podcast. It's a podcast that looks at the future of spatial computing. You can support the podcast at patreon.com slash Voices of VR. So I'm going to be starting a 17-part series looking at the intersection of XR and artificial intelligence. So over the past four months, I've been going to a number of different festivals and conferences, and a hot, hot topic has been this intersection between AI, generative AI, and XR, the metaverse, virtual reality, augmented reality. So this is a 17-part series. I'm going to be talking to a lot of artists and creators and developers. I have a talk that I gave at San Jose State University. There's a panel discussion I was on about the intersection of AI and the metaverse at Augmented World Expo. And generally, it's just trying to do a broad survey of what's happening with this intersection of XR and AI. I'm going to be starting with a conversation that I had with Evo Heyning. She's been an interactive producer for the last 20 years in gaming, live streaming, and the 3D web. She actually wrote a book called Promptcraft. She did a deep dive into all the different generative AI systems and likens it to this magical spell casting where you have to come up with the right semantics, the right words to use your language to be able to manifest whatever you're thinking about. There's a lot of parallels with these more magical and alchemical traditions, and we're going to be doing a deep dive into how she starts to think about these generative AI systems and also how she's been working with the Open Metaverse Interoperability Group and this intersection between world building and generative AI in her own practice. So, that's what we're covering on today's episode of the Voices of VR podcast. So, this interview with Evo happened on Friday, June 2nd, 2023 at the Augmented World Expo in Santa Clara, California. So, with that, let's go ahead and dive right in.

[00:01:58.631] Evo Heyning: My name is Evo Heyning. I have been an interactive media producer for 25 years in gaming, in live streaming, and in the 3D web. And I am currently working for a company called Chapter on an AI film project. I wrote a book called Promptcraft, which is specifically about our relationship to generative media and how we use these tools in our creative work. And I'm also developing my own fictional worlds and a new band called Oracles, which dropped our first single this week.

[00:02:33.972] Kent Bye: Awesome. Well, maybe you could give a bit more context as to your background and your journey into all these intersections.

[00:02:40.137] Evo Heyning: Sure. So I've always been a storyteller, a coder, and an artist. And I started showing my art when I was five, coding when I was six, and figuring out how to use those tools to solve problems in my community when I was seven, eight, nine years old. And so for the last now 40 years, it's been how do we bring our creative life and our experiments together in a way that delights people, is maybe asking a good question, or helps people explore a new space. Maybe bring something to light that was in the dark prior. I'm very interested in our relationships, not only with each other, but with all of life itself. And so figuring out how to encode aspects of biophilia and mutual respect into everything we do, whether that's a generative media project or an app or an AR or a VR experience as well.

[00:03:44.420] Kent Bye: Great. And I've met you through the VR community, and so I know you've been involved with virtual worlds for a long, long time. So maybe you could start there with how you got involved in virtual worlds and then eventually into VR.

[00:03:54.826] Evo Heyning: Sure, so in 2004 I started exploring Second Life as a media producer. I was looking for a place to begin building my worlds and to figure out where I could potentially make social programmable places where we could come and play together. And so I built a world called Manor Meta, which was a meditation space. And then I began building social impact spaces in Second Life in 2005. And as a part of that, I was working with the team working on what was then called the Metaverse Roadmap. And so that was looking at the next 20 years of AR, VR, digital twins, and live streaming as well. And so I began exploring how to use all of those tools to create better social impact programs, to create better communities, to, for example, move an initiative forward that might redesign the public commons in ways that work better for marginalized communities or create an opportunity for people to connect that they didn't have before. From virtual worlds, I then went into AR, specifically for solving problems in the ecosystem, and into VR for storytelling, but also for changing our relationship to how we see ourselves as part of the environment as active creators together. So what that means is choosing to build together in virtual spaces sometimes informs how we build together in physical spaces too. We see this in augmented reality, for example, when we're playing, let's say, in a Niantic game like Pokémon Go, that also affects how we play with each other once we turn off our phones as well. And so it's also touching on the dynamics of interactivity related to consent and ethics and mutual respect of each other. For me, I've always seen these tools as ways to bring down walls and include people in a conversation, in a process, in an opportunity to experiment and play that maybe wasn't afforded to them in the past. And so that might look like engaging with kids in a refugee camp halfway around the world.
Or it might look like spending time with people who have a very different perspective or experience, whatever that is, so that we can learn from them and then hopefully collaborate and do something interesting together. That moved into the Metaverse. And so for the last five years, I have worked on WebXR experience design and production. And that's storytelling, making stories like Wild Cacao, which was a journey to go see where chocolate comes from in the Amazon. You get to meet the women who harvest chocolate and you get to see how they live. That's an educational WebXR project. Now we're using generative tools. So those generative tools are adding to the layers of the story, but still maintain what is fundamentally human about stories. They help us connect to each other. They help us understand each other's lives and hopefully build on each other's experience, right? So when we're thinking about how we use our generative tools to enhance how we engage in interactive storytelling, let's call AR, VR, all of this work different ways we share and transmit our lessons. We are telling stories together around digital fires. So if you think about the digital fire just being an ephemeral place that could exist anywhere, when you add generative tools to the mix, it becomes a bit more like the late night mystical story craft that you can imagine of many centuries ago, as people would have gathered around the fire all night long and told stories in the smoke, and you could see the stories emerging out of the smoke as the storyteller would weave you into it. That sense of deeply participatory storytelling that was happening around the fire centuries ago is now happening again, but in a new way, as all of the other participants around the fire can then add their visions to the smoke. And it is all smoke, it's all ephemeral, but it is this shared reality that we are crafting in real time.
It's the leap from passive media to interactive media to deeply participatory media, the type of media that we create together because we want to create meaning together.

[00:08:33.632] Kent Bye: Yeah, I know Alex McDowell, and when he's given talks, he talks about the printing press as this turning point where storytelling and media production became really linearized in a way, where there's canonical versions of a story that were printed and then repeated in a way that was not changing. It was more static at that point. Whereas before, he paints this picture of these more tribal ways of, you know, if you think about myths and mythology, the stories were sometimes never really written down, but they were repeated and they were changed and adapted. And you can think about that as people were sitting around the campfire, maybe there would be people playing music to be able to augment or contributing what was happening in someone's lives. And so there's a way that the origins of some of these oral storytelling traditions had this more participatory element. And then you have the linearization of the media, and now we're, in some ways, having a return to all that. It sounds like you're invoking that with what's happening with these new immersive media. So you've been involved with both WebXR, the Open Metaverse Initiative, as well as thinking about, on the onset of the pandemic, all these different ways of doing virtual gatherings. As an event producer, you're producing these virtual events. So I'd love to hear a little bit about what happened with you during the pandemic before we get into more of the other AI stuff. Because I feel like I saw you diving deep into a lot of these different initiatives, like the Open Metaverse Initiative, and thinking about these deeper standards, and really trying to come up with the core foundational protocols and standards that are going to facilitate this vision that you just spoke about here, but working at a really low, deep level to provide those foundations for the technology to flourish in this open context. So I'd love to hear a little bit more about that journey for you.

[00:10:08.320] Evo Heyning: So if you're thinking of these technologies in service of human connection, the pandemic presented a unique challenge of isolation for so many of us. And at the same time, I, as an event producer, understanding both live streaming and all of these platforms, recognized that we needed lots of folks like me who could basically create the third space in the digital environment so that conferences, festivals, premieres, all of the things that we were doing could still exist. I worked over the course of 2020 and 2021 on about 500 hours of live streaming shows that included 12 conferences, many festivals, many, as we said, premieres, summits, even events like MetaTraversal, and a group that we formed in 2021, which is the Open Metaverse Interoperability Group. And OMI, or Open Metaverse Interoperability, was specifically, and is specifically, a community focused on open source collaboration, specifically on what are the pieces of the puzzle we need to bring together so that we can move together effectively across an open metaverse. That means collaborative platforms where we can actually work together, but that's also event platforms where we can meet and share our stories together. And those two platforms don't always exist in the same space. So over the course of 2020, the focus then was focused on how do we bring people together? How do we help them have a rich experience that fills the gap, right? Because many people were feeling not just isolated, but going through some pretty heavy things in that process, right? So we needed to form a digital support system very quickly. And what that looked like was using 30, 40, 50 different platforms to try and bring people together in very ad hoc ways. In IEEE's VR conference, we brought that together in three weeks using Mozilla Hubs. 
We also used Twitch and other live streaming platforms, but we used Hubs specifically because it was an open source solution that let us solve a problem for thousands of researchers very quickly. So in three weeks, we moved a conference from physical to digital so that everyone could participate and share their research. By 2021, we realized that the problems of interoperability in the sense of not being able to go and share our work from one platform to another, or to have a single identity that could move from one platform to another, was creating too much friction and was hindering collaboration. And so the movement toward an open metaverse needed to happen in part so that humans could work together better. Instead of being stuck in a walled garden where all of the work was then bound to the walled garden and could not be brought out, we needed an approach that allowed for portability, not just of the self, but portability of the collaborative work, portability of the social graph in the community, so that we felt more completely ourselves and could then create spaces to work. Because by 2021, virtual work was the work. That was the one place we were able to do our work. And so very quickly, we had to understand what are the affordances of all of these platforms? Where can we get our work done effectively? And then how do we bring this all together into something that the general public can then participate in? So by 2022, we began experimenting with, for example, portals between XR and Metaverse worlds. What are portals? They are basically doorways that allow us to move across the 3D open web the same way we might just use a link on the 2D web. We understand that a link connects us from one experience to another. There is no visual language yet for what a doorway between one 3D world and another 3D world is supposed to look like. And so in the absence of a standard, there is vast experimentation.
What that looks like is some platforms have doorways, some have portals that look like something out of the game Portal, some use elevators and other vehicles. But the goal is the experience of being able to move from one world to another independent of the corporate platform that might be owned or the open source tools that might be brought together inside of that experience. Figuring out how to make this work for humans has always been the goal. What that's led to is the formation of larger spaces like Metaverse Standards Forum, where experimentation can move from small group adoption in these open source communities into wider adoption and working with larger companies. All of the many companies that make up the AR, VR, and XR industry participate in Metaverse Standards Forum, whether it's on asset interoperability, avatar movement, how we move our scenes and our experiences across worlds, because those are pain points that we all share. Every creator understands the pain of losing an entire world's worth of work, right? Sometimes thousands of hours of work can be lost when a server goes down or when a world is sunset. And so it's about removing those barriers to participation at the end of the day, because we are all interested, I believe, in humans being fundamentally curious and experimental as beings. We like to try new things, but we also like to try our tools and see what they can do. And we are now in a stage where we can take our tools and our friends effectively from one world to the next and do what is good in this world, let's say an event world, and then go into a 3D design world, design a 3D asset, take that 3D asset into a third world, and then create a theatrical experience for a larger group of people. That type of portability was not possible even a year ago. So where we've come from 2020 to 2023 is in using the virtual environment effectively, not just for collaboration, but for actual portability of the self,
and creating something that begins to reflect the kind of metaverse we want in the sense of open spaces, not just bound to a corporate firewall or a walled garden, but something that is as simple as going to a URL on the web and that is accessible for all ages and all people all around the world, regardless of what background they might be from or what type of headset or device they might be in.

[00:16:54.551] Kent Bye: So I've been very familiar with a lot of the work that you've been doing as a community leader in the space and the open metaverse. And we've crossed paths in these virtual environments many different times over the last couple of years, especially during the pandemic. But there's also been this whole other strand of generative AI that you've gone a deep, deep dive into. And maybe you could help me understand what your journey into that world has been like, because you've written a whole book about it. And where did that journey begin for you to start to explore these different aspects of generative AI?

[00:17:23.921] Evo Heyning: So I talked about in Second Life, I built a space called Manor Meta. And Manor started as a script about our relationships with many AI. I wrote that script in 2005 as a speculative fiction series for families and kids to be able to be in this moment that we're in now, trying to understand a world where hundreds of different agents of a sort, intelligent or otherwise, might be influencing our day-to-day lives and building more intuitive relationships than we have ever experienced before. What that does to us as individuals and collectively as society changes our sense of autonomy, interconnectedness. And so I began exploring back in 2005 in Second Life what those ideas could look like. Obviously, AI tools have developed quite a bit in the last 20 years. We weren't able to bring any sort of real scripting into Second Life back in the day. But by 2014, 2015, we were able to use chatbots in our XR experiences or in VR. And so the experimentation moved into the languages of collaboration. And certainly in the chatbot era over the last 10 years, we've refined the language of what does it mean to ethically engage together, for example? What does it mean to involve an agent in our work as a collaborator? Not just as an informer, as a customer service bot, but as a collaborator, as like a pair programming associate in some work. So I stopped after 2022 and I dove deep into the sort of new crop of generative tools. During the pandemic, I was a part of the Buckminster Fuller Institute Design Science Studio. And within that work, we set about to create worlds that work for 100% of human life, and not just human life, but 100% of life, period. And what we saw was that we had an amazing array of new tools on the horizon, and this was Wombo and these DeepDream kind of things two or three years ago, but that there was going to be this upswell of tools,
combined with sort of a lack of ethical engagement on how to use them effectively to build more interesting or more beautiful, better worlds. And so at the Design Science Studio, we began exploring the language of biophilia, the language of symbiosis, the language of biomimicry. And how can we train our tools now, tools like Midjourney, to recognize what is good design based on what we've learned from biomimicry? Because we can build more effective tools that learn resilience because we've learned resilience from nature. So how do we take the lessons of nature and bring them into AI? That was a big part of the research I was doing in 2020, 2021, 22. So I dove deep into tools like Midjourney, got very hooked as many people do, but I also began to see that there was sort of a secret language to it. And as I explored now hundreds of tools, I began to refine what can be a human approach to dealing with what can feel like a sort of a secret art or a magic art. Because for many, AI has been narratively exposed as a magic wand, right? We understand as technologists that it's not a magic wand, but the general public almost sees AI as magic. So figuring out from the language of magic, the language of spellcasting, we understand in all of our stories about spellcasting that every syllable matters, right? If you say a spell wrong, something bad happens. This is the work of promptcraft: understanding why every syllable matters, understanding the language and engineering it. That is what a prompt engineer does. They are mixing data science and computer science with language engineering, right? So the work I'm doing today at Chapter working on AI film projects, AI storytelling projects specifically, is looking at the nuances of language and how we relate to those things and then how each of those nuances then creates new worlds. Because we might want a world that is resilient or anti-fragile, but anti-fragile means something different to one AI than it does to another.
And if we're thinking about what these words mean, we don't necessarily have a shared or a consensual agreement on what that is. So the work ahead for prompt engineers and for everyone in the generative space is to begin to unpack those nuances of language together, to have these conversations, and Promptcraft, the book, specifically starts that ball rolling, but it's designed as the first in a series of four books. There are other books coming that are specific to language and that are specific to world building. Because a year ago, the text-to-3D prompting tools didn't exist in public, right? The text-to-video tools didn't exist in public, even a year ago. Today, you can prompt a whole world. You can prompt it to Unreal, you can prompt a Skybox, you can prompt characters, you can prompt 3D assets. And so we are stepping into something that is life-changing for creators. If a creator has always worked in 2D and is all of a sudden being told that the world is 3D, that changes the way that they have to think about design. And so there are whole new languages of design related to ethics and consent, related to interactivity and risk and privacy. All of those things we are figuring out together. My role in this community is to be in inquiry with the other people in this community, because the best thing we can do is ask even the tough questions together in public. I don't have all the answers. I don't know that most of us do. But if we can't ask the questions, then we're doomed, right? And so I tend to be a very pro-social creator because I want to believe in our human capacity to come together and at least have the conversation. Even if we don't have a shared reality at the end of the conversation, we have at least pulled it apart. We've been transparent about our process and the discoveries inside of it. So obviously ethics in AI and generative work is a big conversation right now.
I've given probably 50 talks in the last three months and this is on everyone's sort of top of mind. And one of the level setting things I often will speak about is that much of the last 50 years, across all media types, has created a very poor training set for our relationship with the broad landscape of AI and generative tools. We have mostly told dystopian stories about AI, AGI specifically, coming to do destructive things to humans in one form or another. And we have almost no positive stories of symbiotic relationships with these intelligent agents. The movie Big Hero 6 is one of the few examples you can point to from the last 20 years of a relatively positive relationship with a bot.

[00:25:03.634] Kent Bye: At least in the American media. In Japan, that's different.

[00:25:06.076] Evo Heyning: Exactly. Right. So in Japan, today, the relationship between humans and bots is a much more friendly, pro-social thing. Here in America, we tend to be relatively afraid of anything that purports to be AI, because what we recognize as AI is HAL, or the Matrix, or something that typically ends up in human destruction. We have had this sort of gap in our storytelling and partially, I mean, I pitched Manor as a story in Hollywood almost 20 years ago. No one wanted to buy a pro-social story about our relationships with AI 20 years ago because we couldn't see that future yet. Now we're in it. And so we all have to create that together and we have to choose to create that together by every prompt, every choice we make in our creative life.

[00:25:58.946] Kent Bye: And so take me back to the first moments when you had an opportunity to either hear about some of these generative AI tools. I know that OpenAI has been working on things like GPT-3. And then there's been a lot of research and different stuff of zero-shot text captions and images. But with DALL-E, I've talked to a number of people who had early access for a couple of years. And so when did you first hear buzz that this was a possibility? And when did you first get your hands on some of these different tools?

[00:26:26.327] Evo Heyning: I began exploring chatbots early on when I was working at USC in 2008. So those were specifically language chatbot type models. For the art generators, 2019, 2020, we began to see more of these kinds of tools like DeepDream and Wombo. That was where I particularly was playing because I was looking at pattern recognition across the data set. I still am friends with people who work at OpenAI and other organizations. And so we had conversations about, even prior to the pandemic, where these tools would be going and that there would become a point at which the public would be engaged. Where that public would be engaged, the needle kept moving. And the pandemic changed how we moved that needle in part, along with corporate culture and capitalism changed that, right? Like OpenAI was originally an open source endeavor. And now it's not. I don't think anyone would really call that any sort of open source exactly. How we experiment with these tools is often dependent on each individual becoming in relationship with each individual tool. That has been the case for the last five years. I don't think that's going to be the case in the future. We can't manage a relationship with a thousand tools and there are over a thousand generative tools and many more coming. As we begin to think about the scalability of that, we see now more and more people going in through communities like Hugging Face to go and figure out which tool might be right for them or, you know, starting in an open source way, maybe exploring Stable Diffusion and Stability tools or going into any of the sort of free tools to experiment for the first time. I specifically chose to build a relationship with about 15 different companies because I'm writing, as we mentioned, a new album. And my new album, we began with exploring 15 different large language models for writing beat poetry, not just lyrics, but specifically beat poetry.
And from there, we began to extrapolate what would be the unique voice of each large language model and how it writes differently than the other tools. And my new band, we specifically started from that lyrical place. But in the prompt craft, we started from the questions we were holding, right? So what is the nature of reality? How do we move across these worlds together? What is extended reality? And how is that going to change our relationship to ourselves and each other? The questions of symbiosis and what is an artifice, all of these things started the ball, and then we wrote the music and the music videos, and now the rest of the band project has come together, but from inquiry, from a place of asking tough questions together first.

[00:29:36.280] Kent Bye: I played around a little bit with ChatGPT, and I was messing around with Stable Diffusion a little bit. It was very helpful to see other websites. So I can go and see, oh, here's something like an image that I think is close to what I want. And they allow you to copy the prompt and then start to tweak it from there. Midjourney started on Discord so that you had this by-default open sharing of these prompts, so that you would get this communal learning process where you have all these GPUs running in the background, but you could see what other people were doing, and then later they added the more private aspects if people didn't want to have everything be shared. But I thought that was a really interesting and brilliant move to create this communal aspect of trying to, in some ways like you said, there's this alchemy or magical spellcasting where you have to add different phrases, and there's a lot of catchphrases that people would add, and just as a thing like this would be like the little magic dust like, you know, trending on ArtStation or something like that. So people would add these things, but there was also this trying to be very specific of how to fine-tune all these things. So I'd love to hear your process for understanding how the prompt craft of a different tool may be different than others, like finding its unique character. Like, do you just run, like, thousands of prompts? Because there's also a seed randomness to it, so there's kind of like an aspect where every single prompt that you have is going to have a certain amount of difference to it, unless you set the seed to be consistent, so then you can start to compare them. So I'd love to hear what your process is to start to learn how to speak the language of these different engines and fine-tune your magical spells or your prompt craft that you do in order to translate your intention and your imagination into an actual image through the AI through language.

[00:31:11.782] Evo Heyning: Sure. So one of the things I love about Midjourney on Discord, for example, is that you can be prompting in public with others. And so when I'm often starting a discovery process, I will be in a public space on Discord and Midjourney or other places because I enjoy the jazz, the riffing off of other people. For example, the Manor Meta world is a crystalline world. It is an intelligent crystalline world. And every time I prompt for that world, other people pick up the words I'm using, for example, faceted or prismatic, and start to use them too. And so we start to notice these almost like memes forming within the prompt craft. What that led to was development of a word, Crystal Core, specifically to talk about what is that genre of crystalline worlds. The word Crystal Core didn't mean anything in Midjourney until we all chose to give it meaning. And now, if you prompt for Crystal Core, all of these worlds that are crystalline will pop up, right?

[00:32:18.483] Kent Bye: Is that because it's able to take feedback from people?

[00:32:22.312] Evo Heyning: Yes, and it's starting to recognize the pattern, but there's also something happening on the back end at Midjourney to say, okay, if you see the word core and you see this, you know that this is going to create this type of world. That is an emergent behavior that's happening in real time with other human beings in a way that we haven't really seen before in other aspects of web development. Maybe IRC, but otherwise you didn't really see it happening that often. So often when I'm starting a process, I'll do it in public, either in a public channel or in a collaborative channel with other creators, and then we'll start to riff. Whenever a new version of a tool comes out, for example, with Midjourney, I will prompt all the emojis, because I want to see how it's interpreting what is a somewhat complex concept. An emoji is not necessarily a simple concept. It doesn't always mean the same thing to everyone. And sometimes there are multiple meanings in an emoji. And every time there's a new version of Midjourney, emojis mean something new or become more surreal or more photorealistic or something along those lines. And so I go through about a day-long process every time a new version of a tool that I'm using comes out. I might prompt it a thousand times, and that might include all the emojis, and that might include all of my favorite snippets or keywords that I want to use over and over again. Let's say I like a prismatic ray, but I want to understand what prismatic does in this that looks different than that. And within our team, sometimes we create data sets. We've created training models based on this. We might even use a shared tool. We use Miro, for example. And we'll organize our data sets and say, this prompt means this. This prompt plus this prompt means that. And that's how we begin together to map the relationships between thousands of ideas and keywords.
Now, the same word in Midjourney doesn't mean the same thing in Stable Diffusion. And you then have to repeat that process over and over again. Or you have to find someone who's already done the work. And this is why we typically lean on other communities of AI artists and the AI arts community broadly. AI creators who are working with generative tools tend to be very transparent about aspects of the work. They may not be sharing the specific prompts that they're using, but they may be sharing specific tips, like using a native language. If you're trying to get an image of someone who is from a specific ethnicity, prompt in their language. That is something I wouldn't have necessarily thought to do, but because of picking that up from another creator, I've now been able to create thousands of images that are more authentic and potentially unique to that particular ethnicity. This is where refinement across an industry matters, because this only happens when thousands of people give feedback to the tool creator, to Runway, to DALL-E, to Midjourney, to say, hey, this works and this does not work. And we need help going from here to here to realize our vision. And so we're seeing this happen right now, for example, between Runway Beta 1 and Runway Gen 2. Everyone I know who's using Gen 2 is fascinated by it, but cannot figure out how to prompt to it yet. So it comes across as almost a dark art in the magical realm, right? Compare that to these more language-centered things, where we can go to Lexica and just copy and paste a prompt from someone else's art. When you're thinking about emergent tools in video and in world building, we don't know the rules yet. We don't know the syntax. We don't know the magic language. We don't have the spells. There's no book of spells. We're going to have to write that book together.

[00:36:23.170] Kent Bye: Yeah, I've noticed that there is some different resources of, say, set a seed for an image, prompt it with this default image, and then have different styles. So just to understand all the different styles and art styles of the different artists. And so there's a certain amount of art history of being aware of existing artists in a certain style that may be applied, and so there's other resources that I've seen. But yeah, this whole dimension of style seems to be another modifier to have that. So I don't know if that's a part of your own process of knowing what those artists that are in the corpus that has already been trained that you could invoke a specific artist to be able to then change the complete style and vibe of the outcome of a prompt.

[00:36:58.625] Evo Heyning: Yeah, there are specific elements to a prompt that are relatively standard, no matter what tool you're in, and that includes describing what you want to see, and including style, but also including potentially perspective. So, style words: in art history, we understand Art Nouveau, Art Deco, these are art styles, right? And there are thousands of different types of art styles. This is why an existing visual artist will generally be very successful in a new generative art tool, because they have a language for what they're trying to create. In literary styles, this also exists, right? So the literary language is sometimes a little bit more limited, but you can still find those style words. You generally, in any prompt, have to include a description. I often tell writers that they are well-equipped to be great prompt engineers, because if you've ever written a script, you understand that every scene starts with a description, and that is a prompt. That description right there is giving you perspective and it's giving you tone. The only word it's missing is that art style word usually. But that is a prompt. And so screenwriters, for example, are phenomenal at prompt engineering once trained and given the tools to do that, because they have language that maybe others haven't developed. So I want to encourage creators to not necessarily think of generative tools as being separate from them, or being something that is for others and not for them, because much of this narrative, especially in the West, has been set up as an us versus them mentality, and that is not beneficial to anyone. It is a "yes, and" scenario here. We are all building on each other's expertise. And with our tools, yes, we obviously need to mitigate any risk. We need to address privacy issues. We need to address consent issues. We don't want to actively rip off other creators and artists.
And so when I'm teaching prompt craft, I encourage artists or creators to not use other people's names in their prompts. You don't have to use other people's IP. And especially if you're trying to build original voice, original work, you don't want to be derivative. So keep other people's names out of your prompt craft. That's a form of what I would consider slightly lazy craft. It's a great way to get started, but it isn't necessarily a great way to develop a sense of a unique voice or a unique perspective. It's also stepping on the toes of other creators in some way that feels offensive and a little bit aggressive. So in comedy, if you steal a joke from another comedian, all the other comedians know, and they look at you and they kind of give the side eye to you because they know you did that. In visual art right now, that's a touchy subject, right? Some artists clearly don't want to be put in any data set. And I believe just yesterday, Mozilla awarded a team for responsible AI specifically to create a tool so that artists can protect their work so that it cannot be trained into a data set. There are both opt-in and opt-out solutions coming for participating or not participating in these ecosystems, and creators individually have to come together and figure out how to make those choices. Sometimes it's not going to be the same for each individual person in a music project. I understand Daft Punk broke up because of something like this. So there are some interesting stories coming and maybe divergent paths forming where people are exploring and experimenting but finding that it takes them in different directions.

[00:40:56.492] Kent Bye: Well, this is a hot topic in terms of the data provenance and what's the data set of how things are being trained. There was a moment on stage here at AWE when I was on a panel talking about the intersection of AI and the metaverse. It was moderated by Amy LaMeyer, with Tony Parisi. But Alvin Graylin and I were sort of going back and forth on a certain number of issues. And there was a moment when Alvin said, we need to be able to just set some of the negative aspects to the side and look at what the real utility of some of these optics are. And I guess in the moment, I was pushing back because we also need to look at being in right relationship to, let's say, the hundreds of hours and millions of hours of labor that ChatGPT or these generative AI systems are kind of scraping from the web, agnostic to copyright to a certain degree. And there's people like Kirby Ferguson, who has a whole series, Everything Is a Remix, and there's the argument of fair use and transforming that. And then there's lawsuits that are saying, actually, there are certain aspects that are stored in a diffusion model in a way that's kind of equivalent to being compressed but still can be preserved and recreated. And so there's this existing legal debate that's happening right now as to whether or not it's fair use and transformative or whether or not it's actually kind of stealing data and having it directly appropriated into these. And so I feel like I was pushing back to Alvin, but I also can see his point, where I think it's great to be able to see what the potentials of these tools are when you are playing with something like Stable Diffusion or Midjourney, where we don't actually know the provenance of all the data that they were trained on, or if it was in right relationship to copyright or whatnot.
But at the same time, part of my orientation is to advocate for being in right relationship and consent and being able to create these data sets that have people who are openly consenting to creating this commons-based vision of this future where we do have this ability to be able to do that. Because I want something like Stable Diffusion to exist, but I also don't know how much they just scraped off the web and all the data. So I don't know, as somebody who's deep into these generative AI systems, how do you reckon with that ethical dilemma of whether or not some of these systems work based upon some of the stolen labor of stuff that may not have been consented to, with some of the copyrighted material that may be included in some of these data sets and these models?

[00:43:05.226] Evo Heyning: I personally choose to start by creating from my own art. And that's a very different path than I would say most people engage in. But as a creator for 40 years, I began from a place of, yes, these things are not separate from each other. I think you are making a tremendous point about the deep interconnectedness of these things, right? The utility and the tools are not separate from the society which they are enacted upon and are transforming in real time. And there aren't great easy answers right now when it comes to things like fair use, because fair use laws are going to need to be rewritten. Unfortunately, we have very outdated laws about everything related to not just copyright, but obviously the web and interactivity as well. When it comes to how I approach publishing and creating with all of these tools, I tend to be a relatively open source creator. I've been publishing using Creative Commons since the very first days, and I currently publish most of my work using Creative Commons, especially on a Remix license, because I believe that throughout the creative life of humankind, we have always informed each other. We have always improved each other along the way, right? I began painting when I was five years old, but honestly, I learned by painting, by going to museums and looking at Georgia O'Keeffe paintings and trying to emulate the artists that I was seeking to be like, like Georgia O'Keeffe or like Hilma af Klint, right? These artists inspire me greatly, but I don't want to copy their work. I want to learn from it and grow from it. And so when I'm using a generative tool, I am using it as a pattern recognition tool in order to figure out how I can learn what is a beautiful approach to something that I can't solve on my own, something that no human could do. But that means I make ethical choices, for example, not to use names of living artists in my prompt craft, right?
Unless an artist comes to me and says, I want you to prompt on me. And then I will, right? But otherwise, I don't engage in that. I also don't engage in copying. And I do create portraits for folks. But I do it when commissioned. I don't do it without consent.

[00:45:38.440] Kent Bye: When you say copying, do you mean like going to a website where someone shared their prompt? Or what do you mean by copying?

[00:45:43.488] Evo Heyning: For example, there are folks who create all types of media, books and things, but they'll do so by prompting the name of an existing celebrity and then making a digital version of that celebrity, which to me feels icky. If I were that celebrity, it wouldn't feel great to me, so I don't engage in that process. Other creators choose to engage in that process, I don't. Is it right or wrong for that creator to engage in the process of using a celebrity's likeness for their own art? That's something for the courts to decide. I don't think we're going to get there anytime soon, however. When I'm teaching prompt craft, I encourage people to be as original as possible. And that includes even when writing a prompt, right? And so, yes, copy and paste as you're learning, but then go out and write your own prompts and don't just copy and paste from 20 other artists that you saw that you happen to like, right? Think about your own vision. Do a little writing on your own and give your own vision some language. And that's the creative process, right? Creativity isn't in copying and pasting. Creativity is in learning from all of these influences and then creating something new.

[00:46:59.763] Kent Bye: You had mentioned that you wrote the first book of a four-book series, and you also mentioned teaching. So you've been teaching workshops on this. And so maybe to start with, people can come and take a workshop with you. What is the agenda for what they will learn if they come to a workshop? And then we can talk about your book series after that.

[00:47:16.076] Evo Heyning: Sure. I began teaching PromptCraft as a workshop in the fall, starting with image generation. So we did three rounds. Image generation one starts with an introduction to PromptCraft, and we go through both Stable Diffusion and Midjourney as a comparison in that first class. In the second workshop on image generation, we go through how to create training sets, how to build your own world starting with the basic building blocks for it. So what are transformers, and how do we go from an idea to something that can be reproduced over and over again? Starting in late June, I'll be adding courses specifically on language work. So that is all of these large language models, and specifically how to recognize which tools might be right for which type of job, and also how to begin to elicit the kinds of responses you're looking for. For example, most large language models are terrible at humor, but some of them are okay. Right? Which ones are okay? Some of them cite their sources. Bing does, for example. Is it correct? It's better than all the others, so, you know, should you be using Bing for your scientific research? Maybe LLMs are not for scientific research in that way, right? We've already seen that over-reliance on generative tools, especially language models, to do things that they shouldn't be doing creates misinformation, disinformation, right? Sort of fundamental disagreements about what is truth itself. And that's where we see fundamental breakdowns starting to occur, especially as people anthropomorphize these tools on top, which creates all sorts of weird, I would say consciousness gaps almost, like we are maybe giving too much power and control to certain types of AI that shouldn't be transmitted to these tools. And we maybe need to pull back and think of them in a little bit more utilitarian way, in the sense of, okay, a tool is as good as the person using it. So if you would like to be a good person using your tools, what does that mean?
What are the choices you have to make? For me, that means I use this tool and not that tool. I choose to prompt this way and not that way. I choose to publish Creative Commons. I don't stick a watermark with my name on things generally, because obviously, for all of the creators who came before me, that wasn't a solution, right? We've seen that watermarks show up in all of these other generative tools. So the process of what we called ownership prior to the generative stage is probably not going to work moving forward when we think about what is media and what is ownership of media.

[00:50:16.661] Kent Bye: And you mentioned you have the first book of Promptcraft, one of four. What are the second, third, and fourth books going to be about?

[00:50:22.993] Evo Heyning: So I'm working on a recipe book, and the recipe book is specifically about language and giving people things that they can take into their own work. It's very sort of cut and paste. The biggest book that I'm working toward, and I would love to interview you at some point, Kent, is specifically on world building. And so thinking about XR and AI together from the creator's perspective, when you're building a world, you often have to create thousands of assets, right? 3D things, skyboxes, avatars, little objects, right? An XR developer might be in dev for a year or two just building all of those little pieces. And so the generative workflow changes the nature of how we do that, right? We're already seeing this in Blender. We're seeing this using Blockade Labs. We're seeing this with all the things that NVIDIA is putting out as well. And so as creators begin to learn that they can basically spawn a world overnight that would have taken them a year to do prior, I think we're going to see WebXR and the vastness of the metaverse begin to unfold. If you think about where we are now, it's sort of like 1993 and the web days, where we still kind of knew where all the websites were. And that's not going to be the case a year or two from now. So the decisions we're making about searchability and indexing, about interoperability, all of those things will impact the future of WebXR, the open metaverse, and how we engage in things like augmented reality.

[00:51:57.006] Kent Bye: What was the fourth book then?

[00:51:59.468] Evo Heyning: With my band, Auricles, we've written about 200 pages of lyrics in order to develop the first album. In that process, we explored 15 different large language models and wanted to understand not just the uniqueness of their voices, but maybe where they considered themselves on the musical spectrum. And so the book covers that whole exploration and experiment of the Auricles' first album, which is called Deeper Learning. The first single that's out now is called We Are All Stardust. And specifically, we go through the songs that will end up on the album, but we also go through the 200 pages of lyrics it took to get to those. Because there is this misconception that you just type in a prompt, you get what you need, and you go. And that is not the case with generative tools. Generally the first one, two, three times is not useful in terms of an actual final output. So much of the work in generative media is curatorial and editorial, right? It is critical thinking about choosing which of the hundred or thousand options you are actually going to use. And so while it does reduce the amount of time it takes to get the first part of the work done in concept development, it adds to the time when you're thinking about curatorial and editorial, especially in media teams. This is a huge leap, and most of us aren't really thinking about what that creates in terms of a cognitive load. In the book, specifically for Deeper Learning, the Auricles album, we go through, for example, what worked in humor, what worked in lyrical quality, what also felt too anthropomorphic to use on the album, what felt weird. One of the large language models I tested is Bloom, which is an open source science model based on scientific literature for the last 50 years. The very first time I queried Bloom, it came out as gay. I don't know why. I thought that was an absolutely fascinating behavior. What would make a large language model do that?
I had asked it to define the word weirdones, all one word: W-E-I-R-D-O-N-E-S. I gave it a fictional word to see what it would come up with. And it came out as gay. And then it said, I'm gay and that's okay. And then it went into all the wonderful things it does because it's weird. And what it was doing was pulling from social science research in the 60s and 70s on queer culture. Right? So while I didn't use that first set of lyrics, I then took those lyrics, because they were very stream of consciousness, into a different chat tool and was able to bring a song forward from what Bloom gave us. Even though Bloom didn't give us a song, and gave us a weird mishmash of human experience of what it meant to be weird, we were able to get a song out of it.

[00:55:01.882] Kent Bye: Wow. Wow. So you've really been at the zeitgeist, riding the tidal wave of all of these intersections, and I really appreciate you taking the time to share all this. I guess to start to wrap up, I'd love to hear what you think are some of the ultimate potentials of this intersection of XR and AI, all these generative tools, world building, and prompt craft, and what they might be able to enable.

[00:55:25.055] Evo Heyning: I look forward to being able to walk into a 3D space, speak it into existence, and invite my friends over and have a beautiful experience. And then if we choose to close the door on that experience and it disappears, that's great. And if we choose to leave that as a persistent world that people can come back to for years to come, that's also great. But it's about having the freedom to have that experience with my colleagues so that we can not just create a single experience in real time, but on an ongoing basis participate, collaborate, play, and experiment around the things that really matter to us. To me, that's things like climate action and how we use these tools effectively to solve the biggest problems we're facing, right? I live in California. We have fires and storms and all sorts of things that are fundamentally threatening lives. We can use XR effectively to address, for example, fire safety. We can use AI, for example, to address fire safety. So now is the time for all of those tools to come together so that my first responder has them all in his helmet when it's time to come to the fire.

[00:56:38.629] Kent Bye: Yeah, the way you articulated that first part reminded me very much of Star Trek and the holodeck, of just going into the holodeck and kind of invoking different scenes. And I think we're actually getting pretty close to being able to have those different types of holodeck experiences. I don't know if you've made those parallels or other people discussing that, but it feels like, of the science fiction vision of that, that we're actually getting pretty close to that.

[00:56:59.655] Evo Heyning: To me, that's part of the joyful next step. Like, we're starting to see this with Lovelace, for example. What they're doing, I think, is phenomenal, being able to generally prompt to Unreal. I love the idea of being able to prompt a science lab that is a functional experimental zone where I can go meet my teen nieces and nephews and go do a science experiment together, right? The things that are actually quite hard right now but don't need to be. Right? We have all these things. We have all these resources. It is really just in how we bring them together, we make them interoperable, and we make them accessible. Appropriately accessible.

[00:57:39.312] Kent Bye: Yeah, and just to invoke the Ready Player One with the Oasis, they have this big, vast metaverse of educational experiences, but yet education has been the most underfunded of all these different experiences. And I think with the generative AI tools, it sounds like from what you're saying is that we'll be able to maybe close that gap and have a lot more of those different types of educational experiences as well.

[00:57:59.009] Evo Heyning: That's right. Did you see Picard, by the way? The last season of Picard, the holodeck is primarily used for therapy. It's used to bring father and son together, to basically be the therapy zone. And I thought it was fascinating that they basically, of all the things a holodeck can do, chose to use it narratively as the place for safe therapy.

[00:58:22.667] Kent Bye: Awesome. Is there anything else that's left unsaid that you'd like to say to the broader immersive community?

[00:58:26.407] Evo Heyning: Check out the new album. It's at Auricles AI.

[00:58:28.917] Kent Bye: The whole album is launched and everything?

[00:58:32.002] Evo Heyning: Well, we're dropping singles every two weeks, and that's Auricles as in the shape of the ear, which is A-U-R-I-C-L-E-S. And my bandmate Will Henshall and I actually met 10 years ago through Singularity University and through Wisdom 2.0. We traveled in similar circles seeking deep insight. And so that is what brought us together to create this album. He's won Grammys and tons of awards for his work over the last 30 years in music, so I'm really excited for you to hear it.

[00:59:01.396] Kent Bye: You've got Promptcraft that people can check out on Amazon and other places to get books. And where can people find out more information about any upcoming workshops you may be having?

[00:59:08.941] Evo Heyning: My website is evo.ist. My design lab, RealityCraft, is based in Oakland, California. I am right near Pixar. If you are ever in the East Bay, drop by.

[00:59:20.989] Kent Bye: Awesome. Well, thank you so much.

[00:59:22.550] Evo Heyning: Thank you, Kent.

[00:59:23.920] Kent Bye: So that was Evo Heyning. She's been an interactive media producer for the last 20 years, working on gaming, live streaming, and the 3D web. And she wrote a book called Promptcraft, looking at our relationship to generative media, and she's developing fictional worlds and also a band called Auricles. So I have a number of takeaways about this interview. First of all, I really appreciated the way that Evo was thinking about generative AI in terms of crafting the right semantics to subtly influence and modulate these images within generative AI. It's a new mode that we have for being able to use language and semantics to interface with computing. And yeah, just a lot of deep dives into the way that she's trying to understand the nature of these generative AI systems. And this is an interview that I would send to people just to get them up to speed in trying to understand what's happening with these generative AI systems and some general strategies. So she's also been working in the 3D web and doing a lot of stuff with the Open Metaverse Interoperability Group and thinking about how the metaverse is going to be interfacing with these generative AI systems. And just generally how we're going to be moving into this world of the holodeck, where you start to speak out what you want and then have that translated into an immersive experience. And so I think we're going to start to see that at Venice Immersive, where you get to see some of these very early projects that are starting to deliberately take these different prompts and create an ad hoc experience that is different for every person that goes through it. So I'm actually having a conversation with Matthew Niederhauser, one of the directors of Tulpamancer, which is going to be at Venice Immersive. So yeah, I'm excited to dive more into this whole series of looking at the intersection between XR and AI.
I think generally a lot of people are pretty optimistic about what's possible, and they're trying to find these aspects of utility. There's certainly still a lot of ethical questions, I think, that get into the data provenance. And Evo said that, you know, in her own practice, she tries to avoid using the names of living artists within her own prompt craft practices. So, yeah, just thinking about the future, as we move forward, trying to use models that have complete consent. You know, for her own work, she's putting a lot of it out as Creative Commons and just trying to live into this future where you have these commons-based values, where people are collaboratively and collectively trying to contribute different aspects of our cultural heritage into these different models, and then to leverage that and to be able to create new things. I think that's kind of the spirit of fair use and trying to provide a foundation for other people to stand upon as they do their own creative works. And she's doing that within her own practice and exploring all these different dimensions within her four-part series, Promptcraft. And she's got her first book out, and she's also got her band named Auricles, and she's developing these different fictional worlds and doing lots of things around world building and how you can start to use semantics to be able to build out entire immersive worlds. So, that's all I have for today, and I just wanted to thank you for listening to the Voices of VR podcast. And if you enjoyed the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, and so I do rely upon donations from people like yourself in order to continue to bring you this coverage. So you can become a member and donate today at patreon.com slash voicesofvr. Thanks for listening.