Adrian Biedrzycki (aka Avaer) won the Poly Awards’ WebXR Developer of the Year in 2021, and he has been working on an open metaverse technology stack for many years now. He’s the co-founder of Webaverse, the Moemate virtual assistant, and the M3 (Metaverse Makers) hacker collective. I had a chance to catch up with Biedrzycki for a survey of what’s catching his attention across the open and closed metaverse platforms, the state of XR interoperability, and the various ways that he’s been exploring the potentials and perils of AI as a hardcore WebXR developer and metaverse hacker.

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality


Rough Transcript

[00:00:05.452] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR Podcast. It's a podcast that looks at the future of spatial computing. You can support the podcast at patreon.com/voicesofvr. So this is episode number 16 of 17 in a series looking at the intersection between XR and artificial intelligence. Today's episode is with a developer who goes by the name of Avaer, Adrian Biedrzycki, and he does a lot of WebXR development. In fact, he won the Poly Awards WebXR Developer of the Year back in 2021. He's working on a lot of different projects like Webaverse and Moemate. He's been at the forefront of all these different open metaverse technologies, he's very concerned about interoperability standards, and he tried to embody them within the work that he did with Webaverse. He's also been doing a deep dive into artificial intelligence and virtual beings: he developed a whole app called Moemate, which gives you an embodied AI agent on your desktop that you're able to engage with. So Adrian dives deep into some of the different frontiers of what's happening in artificial intelligence and how he's been using different AI tools for his coding, but also thinking deeply about the future of the metaverse and how all these technologies are going to continue to grow, expand, and get a lot better over time. He's trying to embrace these different technologies and use them in a lot of different ways. So I was very curious to hear how, over the last number of years, he's been integrating all these different AI tools into his own personal workflow, and also where he thinks things might be headed in the future.
So we do a lot of technical deep dives, surveying what's happening across these different platforms and some of the trends in interoperability that he's paying attention to, with lots of references to what's happening in the broader ecosystem. So that's what we're covering on today's episode of the Voices of VR podcast. This interview with Adrian happened on Thursday, August 10th, 2023. So with that, let's go ahead and dive right in.

[00:02:04.807] Adrian Biedrzycki: I'm Adrian Biedrzycki. I go by Avaer; it's kind of my hacker handle on the internets. I've been doing XR stuff for a really long time, so long that I feel like XR has been redefined many times in that span. Back in my day, I got started with XR when I was at a startup called Webflow. I was doing web design work, creating web design tools, which was actually a really innovative thing at the time because the web wasn't even a cool thing back then. But my gateway drug into all of this was WebVR, which basically let me take all of my JavaScript and web design skills and apply them to graphics programming, something I'd always wanted to do ever since I was a kid. So WebVR allowed me to take all the skills that I learned in web development and create virtual experiences out of them. And as a hacker, I was always probing the limits of what you can do with something like that. One of my first ambitious projects, right when I decided that I was going to do WebVR full-time instead of just JavaScript development for this startup, was taking an emulator system for all the games that I loved to play as a kid, things like Nintendo 64 and PlayStation. I thought, why don't I try getting that 3D aspect into a headset using JavaScript technology? That was so many crazy ideas in one, because you're emulating a really old system on a really new system that was never designed for 3D in the first place, not to mention VR. In fact, I couldn't even do what I wanted to do using the regular web browser. So I decided to reinvent the entire WebVR stack on my own using a technology called Node.js, which is really just the JavaScript parts of a web browser.
I re-implemented the entire graphics stack, and I was actually able to walk right into the 3D worlds that I grew up in as a kid: Nintendo 64 games like Ocarina of Time and Star Fox, and even the PlayStation games. To do that, I had to deeply hack the cores of these emulator systems and plug them into JavaScript. That taught me a lot about how to do things in VR, how to make them work in the first place, and how not to do them. That was my gateway drug, and that's when I decided, you know what, I'm going to start pushing some of these ideas and see where we can take them. Back then the metaverse was not a thing at all. It wasn't called WebXR yet; it was just called WebVR. Augmented reality was barely a thing; it didn't even really work on your phone. But one thing led to another, and I was able to convince enough people. I had enough of an audience with some of the hacks I was doing at the time (back then we were doing everything in Slack) that investors wanted to take a chance on me and fund some of the projects I was doing. The next crazy milestone was when I realized that whatever I was doing either started to have more of an impact on the rest of the world, or I was just working on the right things. I'm not sure which of the two it is. But basically, Mark Zuckerberg eventually hopped on stage and renamed his entire company to Meta, which surprised a lot of people, including me at the time. And then the next thing was the whole crypto cycle, where these ideas got mixed in with open source development, and the open metaverse started to become a thing: not only were we going to develop these systems ourselves, but we might be able to financially incentivize people to help us.
So yeah, I feel like I was a major proponent of that kind of idea. We were also able to sell some NFTs based on that, or maybe even invent NFTs; it really just depends on how you define it, because we were doing this long before it was cool. We were promoting these ideas to people, and I remember it really blew my mind the first time somebody came up to me and pitched me the idea of, hey, there are these digital objects, and you can use blockchain technology to develop them in an open source way where they're publicly funded goods, but ownership is still tracked on the blockchain. People were pitching me these ideas, and I'm like, yeah, I know, dude. I've been doing this since 2016. I'm glad that we're all doing it now. But at some point, that narrative slipped away from me, and this thing warped itself into a beast that I couldn't control anymore. It got mixed up with ideas that I wasn't necessarily on board with, like, let's destroy the environment and just build this on top of Bitcoin or something. To me, there were always technical solutions available that we weren't using. We could have just used Ethereum from the beginning, with proof of stake, which eventually did happen, but it was this weird battle of narratives that I wasn't really able to win. But I've basically been doing XR stuff the whole time and trying to promote doing these things in an open way, in a privacy-respecting way: developing open source software that you could deploy on your own machines if you wanted to run digital worlds that are multiplayer, extensible, and buildable with a community. And it seems like I was so spot on with that idea that it kind of took over the world, especially during the pandemic when people were stuck indoors.
So I put a lot of pressure on myself and my team to deliver on a lot of the things we were promising. And I feel like a lot of us also got swept away by the finances of it all. For the first time in my life, even though I didn't take any money out of the company (in fact, I might be the biggest loser in the metaverse space in terms of how much money I've put in versus how much I've gained), it felt like the narrative was with us, that we would actually be able to financially incentivize people to come help us work on this stuff. All of this was a crazy mind blow to me. As a first-time business owner trying to understand how you run a startup, how you provide value to users, how you close value loops, it was just so many things at once, on top of trying to invent all these systems and deploy them at scale to a community. It was just so much, and I think I got completely overwhelmed by the pandemic. You'd think that somebody like me, who really just likes coding indoors all day, would love the idea of, okay, now everybody's indoors and we can all communicate through these digital platforms. But I actually think the pandemic traumatized me more than a lot of other people, because my only human interaction was running around outside every now and then, seeing people's faces, doing parkour through the streets, and that was enough for me. The pandemic cut me off from being able to do that, so the isolation definitely got to me as well, and maybe changed my mental health for the worse.

[00:09:03.545] Kent Bye: Yeah, maybe you could give a bit more context about your background in computer science and math. What was it about the open web that was so much more alluring than running things in closed walled gardens, like VRChat, as an example? So yeah, I'd love to hear more about your background and why the open web versus the closed walled garden model.

[00:09:24.841] Adrian Biedrzycki: Sure. So I do have a degree, a Bachelor of Math and Computer Science from the University of Waterloo. I also spent a bit of time at the University of Victoria because I was chasing a girl at the time. But yeah, I've always been interested in distributed systems and systems that are easily hackable. I'm a hacker at heart. With any system I come across, I want to think, how can I add to this? How can I decompose this? How can I make it better? The web is the perfect vehicle for doing that, and I think it's never been better, because now we have WebAssembly, where basically any programming language can run inside the browser. It seems the web has completely taken over both the client side and the server side, because now a lot of applications are simply Cloudflare Workers that you deploy as WebAssembly blobs or JavaScript blobs, and they're doing server-side work. So the idea of being able to program the entire system through one language, something like JavaScript or something that's webby, has always been super appealing to me, because it means any part of the system is accessible to me. I can change it, I can improve it, I can add to it. I still find that fascinating to this day. I still feel like the web in general is a technology that's probably going to win over the long term for the kinds of systems that run our day-to-day lives. You probably have 10 web browsers running on your computer right now and you don't even know it, from Discord, which is just a JavaScript-based platform, to a regular web browser where you probably have a hundred tabs, and there are probably seven others as well. And the cool thing is that all the software we've built on one of these platforms is easily transplantable to another one.
So it's a very incestuous culture where you're just npm installing modules into each of these projects and composing them in new and unique ways. And they can all talk to each other using standard protocols like HTTP. I think it's a beautiful way to build software, and I think that's been proven by how all these things have developed.

[00:11:17.708] Kent Bye: Yeah, I'd love to get your take on WebGPU. I had a chance to talk to Brandon Jones, and it sounds like it's a transition from WebGL to a new graphics approach and a new shader language. My understanding was that Three.js had very specific ties into WebGL, whereas Babylon.js was more abstracted. Brandon's take was that, even with the switch to more direct access to the GPU, he sees the web in general as always being a little bit behind native apps in terms of performance. But it feels like over time, that gap is closing. I'd love to hear your take on where WebGPU starts to fit into all the different stuff that you've been doing on the web.

[00:12:03.624] Adrian Biedrzycki: Sure. I have not gotten a chance to do much WebGPU work myself, and that's mainly because Three.js has traditionally been built on top of WebGL, which is the precursor. Although it has been relatively abstracted, the problem is that a lot of the shaders need to be translated over. That means there's a bit of a transition period where we'll need to stop relying on custom shaders so much and move to, for example, the Three.js node system, which I think does support WebGPU now. It's just a different way of programming. It's more Blueprints-based, similar to how you do it in Blender, Unreal, or Unity, where you have a shader graph. If you build your shaders that way, they can be transpiled dynamically into something like WebGPU, and you get a lot of performance benefits out of that. That said, I think what we've been able to do on the web with just Three.js, without relying on WebGPU, has proven that it's not necessarily that big of a deal. And there are tons of engines transpiling to the web now. It used to be that both Unreal and Unity were transpiling to the web. They've kind of sidelined that for now, but I think it's probably going to come back relatively soon once WebGPU becomes widely deployed and widely used. So if you're just using one of these engines, you're going to get the benefits of deploying on any platform and leveraging WebGPU technology. It's super exciting. I think most of the correct design decisions have been made in WebGPU, because it's very similar in design to Vulkan, Metal, and the other native graphics APIs underlying these GPU drivers. Yeah, it's totally happening. I can't wait to say goodbye to WebGL and its state machine and just have all my code run much faster. Yeah.

[00:13:48.594] Kent Bye: Well, we first met face to face back at the Decentralized Web Camp in 2019. I remember we had a conversation that we recorded where you were doing a lot of brainstorming around how to make it financially viable to do work on the open web. I feel like there are two things that have been holding back open web development. Number one is that Apple hasn't shipped a WebXR implementation for either mobile Safari or the desktop. But also, the finances are completely different: you can ship an app, sell it, and perhaps make a sustainable, viable living, but on the web you're giving away everything for free. The ad models haven't quite been there, and there has been the crypto world, but I feel like there have been mixed results when I look at the different communities out there, from Decentraland or CryptoVoxels or any of the other crypto-based platforms. When I think about these types of metaverse platforms and what it takes to really make them sustainable and viable, I'd love to hear some of your thoughts, since you have been thinking about this for a number of years and have even done some experiments with different models on that front. So yeah, I'd love to hear your take on how to make it as a sustainable WebXR developer.

[00:14:58.520] Adrian Biedrzycki: Sure. I think fundamentally there are two problems here, and they both come down to money. One is distribution. You'd think that developing on the web and deploying your application there would solve the distribution problem, since basically anybody can access it from any device. The problem is that there's a lot of infrastructure invested in, for example, app stores, like what Meta is doing and what Apple is doing, and in the financial services they provide to somebody who's deploying on their platforms, which is why they take a cut. Part of that is the advertising they do for the applications you develop. Part of it is protection against scams, taking credit cards, and taking payments, which is ironically still kind of an unsolved problem on the web: how do you take secure payments from within a headset? It's getting a little bit better now, but that's always been kind of a second-class citizen, I think because of the financial motivations behind taking payments that way. When you're deploying to one of these app stores, the platform does take a cut, and they also exert some amount of editorial control over the content you're deploying, which is for a good reason: they want to promote a certain idea of how businesses should work. So there's always been this impedance mismatch between deploying on the web and how the financial infrastructure, and even the developer infrastructure, has been built. For example, with Unity you can usually press one button and it ships directly to the store. You don't have that on the web because there's no financial incentive for doing that.
But there's also the technological evolution side of this. Even in the metaverse right now, which isn't as hot as it was, there's so much money looping through the developer ecosystem to make the experience of, for example, shipping to one of these stores so good. You don't have that on the web, because there's no financial incentive to improve those systems. So if something is kind of buggy, it can take months before the request gets through to the browser team that's actually responsible for making it work better, and it's generally not a priority because it's not part of the core value loop of whatever that business is delivering. So there's not much incentive to build out this infrastructure. Although I'm impressed by how well the WebXR developer community has done despite all of this. There are now live advertising networks with some number of users, where you can put billboards inside your WebXR app and hop across WebXR experiences. There are real businesses being built on top of that now, which is fascinating. I'm not sure the crypto part of that has played out so well, because I feel like a lot of people who invested in these crypto projects didn't necessarily have their heart fully in it when push came to shove. For example, you might lose all of your money because crypto is going down, so maybe you're just going to sell your token and abandon your ideals, or you're no longer going to support the project. Whereas if people were shipping to these closed platforms, these closed app stores, they had a kind of financial security where it's like, okay, we'll have at least a sustainable user base here.
They're paying a subscription or they're paying for my application, and I know that I'm going to get a paycheck. So it's always been this weird financialization and incentive engineering problem that I think is still unsolved with crypto to this day. I still think there are very few crypto-powered products out there with a closed value loop, rather than ones just living off the fumes of VC or crypto investment.

[00:18:35.796] Kent Bye: Yeah, when I did a bit of an analysis of some of these platforms like Decentraland or CryptoVoxels, there's this concept called preferential attachment, and it showed up as 8% of all the owners owning over half the land and 20% of all the owners owning over two thirds of all the land. So you have a small handful of people who get in early and end up with a disproportionate amount of the land. I feel like land ownership has worked pretty well in an application like Second Life, but in terms of the crypto metaverse scene, I just haven't found it personally as compelling, and there hasn't really been a critical mass of community engagement. But if I look at something like VRChat or even Rec Room and some of these other metaverse platforms, or even Fortnite or Minecraft or Roblox, you have much more engagement with these more closed walled garden systems. So yeah, I'd love to hear some of your reflections on these different metaverse platforms, what kind of design inspiration you may take from them, and whether you're engaged with any of them outside of your WebXR work.
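To make the land-concentration figures above concrete, here is a minimal Python sketch that measures what share of all parcels the top N percent of owners hold. It is a hypothetical illustration, not the method behind those numbers: the `top_share` helper and the toy holdings are invented, and the code only measures concentration rather than modeling the preferential attachment process that produces it.

```python
def top_share(holdings: list[int], top_percent: int) -> float:
    """Return the fraction of all parcels held by the top `top_percent`% of owners.

    `holdings` lists how many parcels each owner holds (hypothetical input format).
    """
    if not holdings or sum(holdings) <= 0:
        raise ValueError("need at least one owner holding land")
    if not 1 <= top_percent <= 100:
        raise ValueError("top_percent must be between 1 and 100")
    ranked = sorted(holdings, reverse=True)
    # Ceiling of n * top_percent / 100 with integer math, so 8% of 25 owners is 2 owners.
    k = -(-len(ranked) * top_percent // 100)
    return sum(ranked[:k]) / sum(ranked)


# Toy data (invented): two large early holders plus 23 owners with 2 parcels each.
toy = [40, 30] + [2] * 23
print(f"top 8%:  {top_share(toy, 8):.0%}")
print(f"top 20%: {top_share(toy, 20):.0%}")
```

With this toy data, the top 8% of owners (2 of 25) hold about 60% of the parcels; measuring a real platform would require its actual ownership records.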

[00:19:38.625] Adrian Biedrzycki: Sure. Lately I've expanded a lot into these other platforms and checked out what people have been doing, and I've been blown away, honestly. Not only with VRChat and what you can do now with the latest Virtual Market, where you can have pseudo-metaverse experiences with worlds that react to your own avatar. We met a bouncer, a VRChat bouncer, who was an AI analyzing your avatar, and it said, you know what, your avatar is too high-poly, so you can't enter this nightclub because it's for high-performance Quest users only. I thought that was really cool. Also, something like VRChat can basically run WebAssembly now, so theoretically it will be possible to compile virtually any language and run it on a platform like VRChat. There is the problem that almost everything is controlled by these centralized platforms. But it's a double-edged thing, because that does provide the stability that a lot of developers want out of these platforms. I was genuinely surprised to hear this feedback, but I can completely understand it: a lot of people are in favor of the anti-cheat systems that, for example, VRChat is implementing, because they don't want their content, quote unquote, stolen or downloaded. They have their own personal avatars and they want to make sure that other people aren't taking them and doing nefarious things with them or repurposing them for commercial purposes, which is a totally real concern. Epic's doing really amazing things with Unreal Engine. At this point, it's probably the best game engine out there for pretty much everything from virtual production to AAA games, and it's been used in almost every industry.
And what they're doing with UEFN and Verse is also really cool: they took the kernel of the best parts of the game engine and opened it up to pseudo-scripting for newbies, for kids who want to make games in a way that won't break the fundamentals of the engine. There are so many ways you can get game development wrong, in terms of resource constraints, creating infinite loops, and so on. But Verse as a language is so well designed for creating these game loop experiences that you can't do things so completely wrong that it creates a terrible game. So I'm really excited about their design approach, even though it's even more locked down than something like VRChat, because they're not going to allow WebAssembly at all. It's more about scripting entities in the world, and I don't know if they'll ever be able to do networking with it. But it's really cool that a lot of people are coming up on programmable systems in games that people get a lot of value out of. Roblox is also doing amazing things, I think. It's a bit too financialized; it has a bit too much of a flavor of crypto for me, where probably the first game you enter in Roblox is going to ask for your money 10 times before you get to any amount of fun. But on the other hand, a lot of kids are growing up with these games and they're being paid to become developers, which is something I was not afforded. Programming was not cool when I was growing up. But as a kid now, you can be the cool guy developing these Roblox games, and they're popular. To a kid, having 10 users on the playground means you're king. Just hearing the stories of people growing up doing that is super inspiring to me. They're also doing a lot of really cool stuff with AI in general, and what Roblox can do these days is just mind-blowing.
People have been developing FPS games and completely rewriting the core of the engine to create utterly new experiences in Roblox, leveraging the user base, the platform, and, for example, the financial infrastructure that they have developed and delivered to users. So you can develop your games inside Roblox, but it is a very opinionated, very closed platform in its own right. I think a lot of these platforms are doing a lot of things right, but then it all comes back to the open metaverse: how do you connect all these things together? It feels like every one of these platforms is doing everything in its power to limit interoperability across platforms, and you have to drag them kicking and screaming to support interoperable standards for things that could interrupt their own value ecosystems, where you could take a piece of content and use it somewhere other than that one platform. Although things have gotten slightly better, because I think people have realized the value of interoperable assets, at least GLB. And I'm as surprised as anybody, but VRM as a standard for interoperable avatars completely took off. Back when we were promoting this, it was a crazy, weird Japanese thing, where VRoid Studio from Pixiv basically created the standard for avatars, but it's super expressive and super good. And I think the rest of the world in many cases realized that being able to take your identity, to take your avatar across these platforms, is super important, probably more important than keeping these platforms locked down. Now Decentraland is even going to support VRMs, HTC Viverse supports VRMs, and basically all the platforms have settled on it as a standard. So I think that's a huge win, and I'm looking forward to more of those things.
But then you have things like NVIDIA Omniverse. It feels like a lot of the culture has settled on VRM and glTF and these more webby standards, but then you have this completely separate camp, which is mostly NVIDIA and maybe a bit of Apple because of the Pixar connection, pushing a different standard called USD. It has a lot of the same parts as GLB, but it has more of a real-time production flavor, where you're transmitting only the parts of the model that are useful. But it's a completely incompatible standard with glTF, or at least it's something where you have to double the work. So maybe the world is also bifurcating into two different camps that are going to be hard to make talk to each other. But a lot of people, I think, are really bullish on USD because Apple Vision Pro is coming out, and because Omniverse is 100% a USD platform that's being heavily used in industry, so it's probably going to be a thing and we can't ignore it. Then it's a matter of how we bridge the gap between these two asset formats that have fundamentally different ideas of what an asset is. I think we're going to solve it, but that's always been one of the fundamental challenges: how do you get incentive alignment across these organizations? When you add a feature, why would you add it for some other asset format that isn't your native one? It's always a hard sell.
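Since GLB and VRM come up here as the interoperability success story, here is a minimal Python sketch of how a tool might tell whether a GLB file is a VRM avatar: it parses the 12-byte GLB header and the JSON chunk, then checks `extensionsUsed`. It assumes the glTF 2.0 binary layout and that VRM 0.x files declare the `VRM` extension while VRM 1.0 files declare `VRMC_vrm`; the function names are hypothetical, and neither the binary chunk nor the VRM schema itself is validated.

```python
import json
import struct
from typing import Optional

GLB_MAGIC = 0x46546C67   # the ASCII bytes "glTF" read as a little-endian uint32
CHUNK_JSON = 0x4E4F534A  # the ASCII bytes "JSON" read as a little-endian uint32


def read_glb_json(data: bytes) -> dict:
    """Parse the glTF JSON chunk out of a GLB (binary glTF 2.0) byte string."""
    if len(data) < 20:
        raise ValueError("too short to be a GLB file")
    magic, version, length = struct.unpack_from("<III", data, 0)
    if magic != GLB_MAGIC or version != 2:
        raise ValueError("not a glTF 2.0 GLB file")
    if length > len(data):
        raise ValueError("file is truncated")
    chunk_length, chunk_type = struct.unpack_from("<II", data, 12)
    if chunk_type != CHUNK_JSON:
        raise ValueError("first chunk must be the JSON chunk")
    # The JSON chunk may be padded with trailing spaces, which json.loads ignores.
    return json.loads(data[20:20 + chunk_length].decode("utf-8"))


def vrm_version(gltf: dict) -> Optional[str]:
    """Guess the VRM major version from the extensions a glTF document declares."""
    used = set(gltf.get("extensionsUsed", []))
    if "VRMC_vrm" in used:
        return "1.0"
    if "VRM" in used:
        return "0.x"
    return None  # a plain glTF/GLB asset, not a VRM avatar


def make_glb(doc: dict) -> bytes:
    """Build a JSON-only GLB in memory (hypothetical helper for trying the parser)."""
    body = json.dumps(doc).encode("utf-8")
    body += b" " * (-len(body) % 4)  # pad the JSON chunk to a 4-byte boundary
    header = struct.pack("<III", GLB_MAGIC, 2, 12 + 8 + len(body))
    return header + struct.pack("<II", len(body), CHUNK_JSON) + body


avatar = make_glb({"asset": {"version": "2.0"}, "extensionsUsed": ["VRMC_vrm"]})
print(vrm_version(read_glb_json(avatar)))
```

A real importer would go on to read the BIN chunk and the VRM humanoid bone mapping; this sketch stops at identifying the format.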

[00:25:46.782] Kent Bye: I know that the Metaverse Standards Forum has a number of different working groups, and one of them is looking at the intersection between USD and glTF. In terms of game engines, you have Omniverse, you have the web-based engines, and you have Unreal Engine and Unity. You mentioned transpiling down to the web, but even when it gets transpiled to the web, it's a blob that has to be downloaded all at once. We mentioned earlier that you see a lot of potential on the web, and I'd love to hear you elaborate on your relationship to the various communities of hackers and Unity developers, because I feel like there's a part of the open web where you have the capacity to pull in things that are more information-based, like all the Node.js and other open source libraries available on GitHub, which aren't always easy to bring into some of these game engines. You mentioned that Unity has some support for WebAssembly. But I've found, at least, that when you're compiling these down to different platforms, sometimes the external libraries don't get carried over correctly for every platform. So even if you get it to work on one platform, that doesn't mean it's going to work on all of them. So I'd love to hear about some of your explorations of the interoperability ethos, or mixing and mashing these different programs from the open web ecosystem.

[00:27:11.463] Adrian Biedrzycki: Sure. My friend Jin, who is the co-founder of Webaverse, my main metaverse startup, runs... well, we also co-founded another organization called M3, or Metaverse Makers. It's kind of an underground hacker crew, and he's been running it without me for the last two years, basically carrying the torch. I think he's done an excellent job of cultivating a community of hackers who are constantly playing around with these technologies. And we're not talking about just web; we're talking about Unreal developers and Unity developers who are making their own games. The way these technologies are coming together in the hacker community, I feel, is around these weird standards and protocols that we keep seeing pop up, which are extremely useful. For example, one of them is VMC, or OSC in VRChat, which VRChat now supports natively for streaming data from an avatar inside the world out to your local machine. This allows you to take the capture of your avatar's motions and feed it through a WebSocket to some VR animation app or a green screen app that you have running outside of VRChat. Or maybe you're just capturing data for a replay later if you're doing virtual production. There are so many little examples of hacks like that, which a lot of people don't know about, but they're super useful, and people are using these technologies in unforeseen ways that I think are genuinely being leveraged by actual production companies now. They don't talk about their internal tech stacks, but they're using things like VMC and OSC to do all their data capture and all their streaming, for example on Twitch if you're a VTuber. This is actively being used; it's just not talked about very much.
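To give a feel for what these OSC streams look like on the wire, here is a minimal Python sketch that encodes a single OSC 1.0 message by hand; OSC messages are usually sent as UDP datagrams. The `/VMC/Ext/Bone/Pos` address carrying a bone name, a position, and a rotation quaternion reflects my understanding of the VMC protocol, and port 39539 is assumed to be the usual VMC receiver port; both are assumptions to check against the VMC protocol documentation, and the helper names are hypothetical. VRChat's built-in OSC uses its own addresses (such as `/avatar/parameters/...`), so the same encoder would apply with different addresses.

```python
import socket
import struct


def osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def encode_osc(address: str, *args) -> bytes:
    """Encode one OSC message with int32 (i), float32 (f), and string (s) arguments."""
    tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("booleans are not handled in this sketch")
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)  # big-endian 32-bit integer
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)  # big-endian 32-bit float
        elif isinstance(arg, str):
            tags += "s"
            payload += osc_string(arg)
        else:
            raise TypeError(f"unsupported OSC argument: {arg!r}")
    return osc_string(address) + osc_string(tags) + payload


def send_head_pose(host: str = "127.0.0.1", port: int = 39539) -> None:
    """Send one head-bone pose to a VMC-style receiver (assumed address and port)."""
    packet = encode_osc(
        "/VMC/Ext/Bone/Pos", "Head",
        0.0, 1.6, 0.0,       # position x, y, z (example values)
        0.0, 0.0, 0.0, 1.0,  # rotation quaternion x, y, z, w (identity)
    )
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
```

Calling `send_head_pose()` with a compatible receiver listening would deliver one pose; a real motion stream would send messages like this every frame for every tracked bone.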
One thing that I would like to see more of: somebody like Jin is, I think, one of the main posters on the Metaverse Standards Forum Discord, and it's kind of crazy, because he's not even running a company. We're talking about a Discord that has 2,400 paying members. But Jin is the one who's actually promoting interoperability across all these platforms, saying, hey guys, did you know that you don't have to do this? This is how we've been doing it in our hacker collective for the last five years, and it works really well. Maybe we should just adopt that rather than inventing our own things. And he's always telling me stories of how he feels like he's not being listened to. I think a lot of that comes down to the financial incentives as well. These companies are popping up left and right without even doing their research or understanding what's actually happening, because they feel like they have a cool idea about the metaverse and they want to implement it. So they Google whatever the hot thing is that some other company is selling, and it's all about money. And it's hard to find Jin's work, because he's an independent researcher; we're just in Discord, experimenting with these technologies. We often don't have time to write about them, or to hire a technical writer to promote our work. So it's this kind of weird thing where, if our hacker collective had a bit more clout or a bit more reach, we'd be able to do a lot more and convince these companies to make things a lot more interoperable, in a way that would genuinely, I think, help everybody. Because a lot of the developers of these tools that we're using are just hackers like ourselves. They're basically moonlighting on all this stuff, with their own little communities of people using it. And I think it would be awesome if more of a spotlight were shone on them.
These days, when we get a $10,000 grant to fund one of these projects, which by the way is almost nothing for a developer of one of these crazy tools that require so much specialized development knowledge to implement, that's life-changing, honestly, for some of these developers. And I think Jin's been a really awesome shining star in making those connections. And I feel like we're missing a lot more of that in the Metaverse Standards Forum.
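For readers who haven't worked with OSC: Open Sound Control messages are small binary packets, most often sent over UDP, made of an address pattern, a type-tag string, and big-endian arguments, with each string null-terminated and padded to a 4-byte boundary. The sketch below encodes and decodes a single-float message following that OSC 1.0 layout as I understand it. It is not VRChat's or any VMC tool's code; the address `/avatar/parameters/ExampleParam` is a hypothetical parameter name, and `send_osc_float` is a hypothetical helper whose host and port are placeholders to check against whichever application you're targeting.

```python
import socket
import struct


def _osc_pad(data: bytes) -> bytes:
    """Null-terminate, then pad with nulls to a multiple of 4 bytes (OSC string rule)."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_osc_float(address: str, value: float) -> bytes:
    """Encode an OSC message with one float32 argument: address, ",f" tag, big-endian float."""
    return _osc_pad(address.encode("ascii")) + _osc_pad(b",f") + struct.pack(">f", value)


def decode_osc_float(packet: bytes) -> tuple[str, float]:
    """Decode a message produced by encode_osc_float (one float argument only)."""
    addr_end = packet.index(b"\x00")
    address = packet[:addr_end].decode("ascii")
    offset = addr_end + 1 + (-(addr_end + 1) % 4)  # skip the address padding
    tag_end = packet.index(b"\x00", offset)
    if packet[offset:tag_end] != b",f":
        raise ValueError("expected exactly one float argument")
    offset = tag_end + 1 + (-(tag_end + 1) % 4)  # skip the type-tag padding
    (value,) = struct.unpack(">f", packet[offset:offset + 4])
    return address, value


def send_osc_float(host: str, port: int, address: str, value: float) -> None:
    """Send one OSC message over UDP (hypothetical helper; host/port are placeholders)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(encode_osc_float(address, value), (host, port))
```

Because every field is 4-byte aligned, a receiver can walk a packet without knowing string lengths in advance, which is part of why such a small protocol is easy to bolt onto apps running outside VRChat.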

[00:30:57.938] Kent Bye: Yeah, well, Jin took home the WebXR Developer of the Year, and you actually took home that same award, the Poly Awards' WebXR Developer of the Year, the year before. And speaking of M3, I happened to be looking on Twitter, I think I clicked on a link, and I happened to be at the very first gathering of M3, when you were still trying to decide what the name of your group was going to be. So that kind of speaks to the ability the open web affords to send out a link and pop quickly into a world. But you were one of the winners of the Poly Awards' WebXR Developer of the Year, which I think, ironically, was during a year when the main project you were committing to wasn't even launched yet. There were enough people in the community who knew how much you were contributing to a variety of different open source projects. So yeah, I'd love to hear about some of these projects that you see as sleeper projects in WebXR, either that you worked on or that you're really excited about.

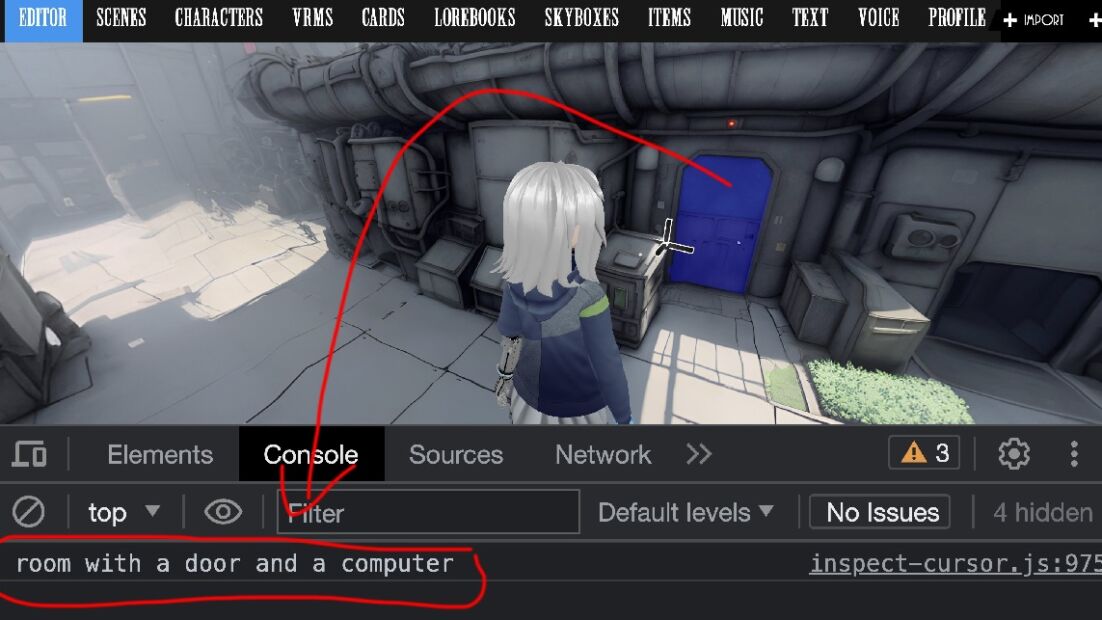

[00:31:52.693] Adrian Biedrzycki: Sure. I think a lot of this happened during the pandemic, which was kind of a dark period of my life. But I've literally committed code every single day for, I think, the last 20 years. So I've been developing this stuff basically daily. I think a reason that I fell off the whole WebXR thing a little bit was that I didn't see a financial path forward that I could inspire other people to follow, where you could actually make money using WebXR. We talked a bit about this. Crypto, I felt, was a possible vehicle where we could fund things and maybe pay developers to work on these projects. But yeah, I remember there was one turning point during the pandemic when GPT-3 first came out. And I asked it, basically, okay, so I understand the metaverse is probably happening. These technologies are going somewhere. Can you explain to me how I could, for example, start a business based on all this stuff? And it was actually GPT-3 that convinced me that we should probably do an NFT drop. Its reasoning, and this was completely correct reasoning, by the way, was that crypto has a lot of investment right now through Bitcoin and so on, with a lot of believers in these open visions, but not so many products at the time. Even in the early pandemic days, there really was nothing except these coins and these ideas. So it said, you know what, why don't you try to build a virtual world where you have a token registry and people can create a marketplace for these digital assets? And we can use NFTs, for example, to financially incentivize people to build on these more open platforms, where you're open sourcing your code and your content, but you also have a way to actually make a living. You're selling to users, and they're able to buy these digital artifacts, which have digital embodiments in the virtual worlds that we're building.
That also allows for incentive engineering, where if these tokens are traded on a marketplace, the developer of the platform on which these tokens are hosted can, for example, take a cut of the transaction that's happening there, which is what a lot of app stores are doing as well with their own token economics. And it seems to work out really well for them. So basically, GPT-3 spelled out the business plan that we should pitch to investors about NFTs. This was before NFTs were even a thing. And that got us into the room with essentially Andreessen Horowitz and all the big investors, who basically agreed, like, yeah, that actually sounds like a good idea. And to this day, I think there's a core kernel there that makes a lot of sense. The thing that I didn't predict was that once the money came in, things would be completely different, because the incentives would not be aligned. Especially if the money is really just coming from the outside, where it's not necessarily people who believe in the idea; it's people who think that they can make more and more money, which is what this whole thing kind of snowballed into. But yeah, once we did our NFT drop, that did allow us to hire developers and build out the experiences a little bit more. But my focus, for better or for worse, became a lot more centered around AI, because I was blown away by this AI system. It wasn't cool at the time. Nobody actually believed me that GPT-3 could even write a joke. I was basically pitching this to people like, hey guys, you've got to pay attention to this, but nobody was listening. I was just blown away by the fact that this AI engine was able to spell out a business plan. I don't know what I'm doing in terms of business, but it was able to spell out a business plan that we could actually execute on. We could get people paid.
And it was absolutely life-changing for myself and a lot of other people. And I just realized that, OK, if this is what it can do for a newbie like me, AI is completely going to take over the rest of the world, and I need to spread this message. This actually helped us a lot in terms of the storytelling we were doing with our NFTs, where the AI also spelled out the story that we should tell in terms of how to inspire people to create content in this way, where you can attach stories to your NFTs. And it's probably the stories that you tell around them that are going to get them sold, because people like media, and people like to be attached to the things they buy. They like merch. And I'm all for that as well. I think anybody who's producing content should probably sell merch. It's probably the best way to actually make money, inspire community, and keep your brand alive. So one of the things that really stuck with me was GPT-3's ability to tell those kinds of stories and to ideate and generate ideas on top of whatever kernel you have in your mind. So I started exploring a lot of the other aspects of AI at the time, which were even less cool than GPT-3. So that's things like the generative image models. Back then it was Google doing their Dream Studio or whatever. I don't think it was called Dream Studio [it was DeepDream], but it was like these AIs are dreaming, and you had dogs with a whole bunch of eyeballs, and that was the best thing you could do, long before Stable Diffusion. But I just found that super fascinating. I thought, you know what? Just a couple of iterations on this technology and we're going to have something that's game-changing. We're going to have something that can be a creative partner for ourselves. I also fed that into GPT-3, and it told me, yeah, you should probably pay attention to this. Keep up on that research. And I did.
I spent essentially most of that year not only trying to manage this business and inspire a community of creators, but also trying to keep abreast of all the latest AI research, which I knew was going to be super important for the future of development. I knew it was going to take over, and I think history has completely proven out that that is exactly what I should have been doing. A lot of interesting experiments came out of that which never saw the light of day, because they weren't really productizable at the time, or at least didn't make sense with the rest of what we were trying to build. But I think they were super important in informing our work and making sure that we were building towards the future. It's just really hard to do that when you're also trying to ship a product to users. But I'll give you an example. I got obsessed with the idea of, okay, if GPT-3 can tell me the story and inspire me to go build it, maybe we can automate this entire process. Maybe if I have an idea for a story, I can just write it into a GPT engine and then hook it up programmatically to, for example, an image generator and an audio generator. Maybe we can start producing TV shows that are completely automatic. Maybe the future of entertainment isn't necessarily that I'm hopping on Netflix and watching whatever the latest show is. Maybe it's that I use prompt engineering to type in the thing that I want and the rest of the system builds it for me. It turns out that over the last one or two years, that went from a pipe dream, a crazy idea, to something that's literally happening right now in Hollywood. And yeah, so right now I'm sitting on a pile of technology that is actually doing that, where you can create your own characters, your own scenes, your own dialogues, and your own narratives, enter them into the system, and it produces the entire thing.
And you can even walk into your own custom TV shows in your headset these days, because we take, for example, the images from Stable Diffusion and do a depth pass on top of them, where the AI figures out, okay, this is an image, what's the Z layer on top of all this? You can map that onto a mesh. And then from that, you can do image segmentation, for example, to figure out where the floor is and what the normal of the floor is, what the scale of the image is, and what is in the image. When you segment, it's like, here's a tree, here's a door. And just using that technology, you can create 3D comic panels with 3D characters walking around in them, which are also essentially user-editable, because with tools like Stable Diffusion you can in-paint parts of the image. So, for example, if you segment the door out, you can clip it out and tell Stable Diffusion, hey, I don't want this door to be closed, I want it to be open, and then it'll do that in-painting for you. And if you pre-compile a scene like this, then you can generate 3D worlds semantically, almost programmatically and automatically, without any user input. Or at least the user input is just, here's my idea, here's my inspiration, which can be a really long prompt. It can be super hyper-specific. But even if you're not an artist, you can generate these story worlds, and you can basically live in them. This is all technology that exists now, and I'm excited to ship it. I haven't seen enough applications of it yet. It feels like people are just starting to understand the true power of generative AI and what it can mean for the production of WebXR experiences.
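The depth-to-mesh and floor-normal steps described here can be sketched with a pinhole camera model: a pixel (u, v) with depth Z unprojects to X = (u - cx) * Z / fx and Y = (v - cy) * Z / fy, neighboring pixels are stitched into triangles, and a floor normal falls out of a least-squares plane fit over the pixels a segmentation model labeled as floor. This is a minimal pure-Python sketch under stated assumptions: the intrinsics `fx, fy, cx, cy` are guessed (a generated image has no real camera), the depth map is assumed to be metric already (many monocular depth models output relative or inverse depth that would need converting first), and `depth_to_mesh` and `fit_floor_normal` are hypothetical names, not the pipeline discussed in the interview.

```python
import math


def depth_to_mesh(depth, fx, fy, cx, cy):
    """Unproject a depth map (a list of rows of metric depths) into a grid mesh.

    Pinhole model: X = (u - cx) * Z / fx, Y = (v - cy) * Z / fy, with image
    rows (v) growing downward, so camera-space +Y points down.
    Returns (vertices, triangles); each 2x2 block of pixels yields two triangles.
    """
    h, w = len(depth), len(depth[0])
    vertices = []
    for v in range(h):
        for u in range(w):
            z = depth[v][u]
            vertices.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    triangles = []
    for v in range(h - 1):
        for u in range(w - 1):
            i = v * w + u  # index of this cell's top-left vertex
            triangles.append((i, i + w, i + 1))
            triangles.append((i + 1, i + w, i + w + 1))
    return vertices, triangles


def _det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_floor_normal(points):
    """Fit y = a*x + b*z + c to floor points (x, y, z) by least squares.

    Solves the 3x3 normal equations with Cramer's rule and returns the unit
    normal pointing "up" in camera space, i.e. toward -Y.
    """
    n = len(points)
    sx = sum(p[0] for p in points)
    sy = sum(p[1] for p in points)
    sz = sum(p[2] for p in points)
    sxx = sum(p[0] * p[0] for p in points)
    szz = sum(p[2] * p[2] for p in points)
    sxz = sum(p[0] * p[2] for p in points)
    sxy = sum(p[0] * p[1] for p in points)
    szy = sum(p[2] * p[1] for p in points)
    m = [[sxx, sxz, sx], [sxz, szz, sz], [sx, sz, n]]
    rhs = [sxy, szy, sy]
    d = _det3(m)
    if abs(d) < 1e-12:
        raise ValueError("floor points are degenerate (too few or collinear)")

    def solve(k):
        # Cramer's rule: replace column k of m with the right-hand side.
        mk = [[rhs[r] if col == k else m[r][col] for col in range(3)] for r in range(3)]
        return _det3(mk) / d

    a, b = solve(0), solve(1)
    # The plane a*x - y + b*z + c = 0 has gradient (a, -1, b); normalize it.
    length = math.sqrt(a * a + 1.0 + b * b)
    return (a / length, -1.0 / length, b / length)
```

Knowing the floor normal is what lets a scene place characters upright and at a plausible scale on top of an otherwise flat generated image; a production system would likely use a robust fit (for example RANSAC) to tolerate segmentation errors, which this sketch does not attempt.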

[00:40:17.429] Kent Bye: Yeah, Edward Saatchi of Fable Studio, I think they may have even changed the name of their company to The Simulation, where they were using this kind of generative AI to do South Park episodes where you could enter yourself as a character. And I think it's worth mentioning that right now we're in the midst of a strike with the Writers Guild of America, as well as the Screen Actors Guild, where AI is at the center of a lot of these debates: to what degree can you have AI assistance, and to what degree will the producers be able to do what some of them want to do, which is to say, you sign up for one day of work, and we have your image and can use you forever. So I feel like AI is at the center of these conversations with the creatives. And from your perspective as a hacker, I can see how you're very excited. But as a consumer of different stories, I've just recently watched all of The Wire on HBO and watched Succession. These are by writers who are writing stories where I don't think AI is going to be able to match the depth of storytelling that's there. So I see that AI is going to be a part of this holodeck future where you do have, like, hey, give me an experience that's like this. But I guess I'm a little bit more skeptical that it's going to produce really deeply meaningful stories, and there has to be at least some dimension of: where's the data coming from? Is the data in right relationship? Is it something that you are using without consent? And especially if the data that you're using without consent is produced by labor, then you're going to be displacing that labor at the same time. So I feel like there are all these deeper ethical issues, where I agree that there are a lot of exciting possibilities, but there are still so many ethical questions.
And I'm a little bit more skeptical about the limits of, say, the stochastic parrots, where it's just probabilistically predicting what the next word is going to be without any deeper understanding or knowledge representation. Maybe AI will get to the point where we have genuine artificial general intelligence, but right now it feels like superficial parroting of the data it's been trained on, and there are lots of different gaps and holes in that. So those are all the different caveats that I'd throw into the mix, whilst I'm still optimistic that there is going to be some use of this technology, especially in the early phases of brainstorming conceptual art for different scenes, or maybe AIs that you have this kind of Socratic interaction with, like you had with GPT-3, while also realizing that there are a lot of limits to what these things do and do not know, and what they are and are not yet able to do.

[00:42:46.684] Adrian Biedrzycki: Yeah, there's definitely a lot of that. And I empathize with any creative whose job is being displaced by AI, because honestly, I think programmers are among the first to go as well. 90% of my code at this point is generated with Copilot. And I feel like if I were looking for a job right now, it would be a much harder sell than it was, for example, three years ago, because people who don't necessarily have any computer skills can produce compelling software without me, whereas before that was completely impossible. So I can totally understand the plight of anybody who feels like they're being displaced by AI. But I 100% agree with you: there's no way that AI can touch the quality of top-tier writing, certainly not yet. But I think a lot of that is just a numbers game. The human mind just has way more neurons and way more complexity than any of these language models to date, and I think that definitely comes out in the quality of what they produce. That said, I would be lying if I said I didn't think it was just a matter of time before it comes for everything. So if I were a writer, or a creative in a different industry, I think what I would be doing is embracing these AI tools to empower myself more and more, rather than trying to fight against them. The efforts to stop this technology from existing or to slow down its development are really just not going to work. There's too much value and too much possibility tied up in it. It really just takes one person not being on board with slowing things down. For example, maybe that's China, right? If the US decides to do a moratorium and stop AI development for six months, China's not going to. So that really just means that the United States becomes six months behind the technology that China is producing.
Then there's this whole weird economic thing that happens on top of that. I don't know what to say. I also think there are a lot of interesting developments happening right now where agent simulations are being used to, for example, simulate artists and writers, actually simulating their thought process in the writers' room, and then using that as an iterative feedback mechanism on top of the stories that you're writing. People are just getting started with this, but it has some compelling results: if you actually simulate, for example, 10 people in a writers' room for 10 hours debating the merits of a story, evaluating whether it's good, critiquing it, and rewriting the plot holes, you get much more compelling results. And there was actually an interesting research result which surprised me, but I guess it makes a lot of sense, which is that GPT-4 now has human-level criticism and evaluation of creative works across the board. So if you were to take a creative work, put it in front of one of these AI systems, and ask it to evaluate it, it would give you essentially the same evaluation that a human would give you. And once you can do something like that, you can close the loop with the AI, where you have a critic agent on top of a generative agent. You have somebody in the writers' room who's saying, hey, this story sucks for this and this reason. And then you can feed that back to the rest of the agents in your AI writers' room, and they rewrite the story to address that feedback. And it tends to work really well. And I think a combination of those more human-centric approaches, which try to mimic human creativity, with the improvement of these AI models over time, imbuing them with more and more information and making fewer mistakes, fewer hallucinations, making them more grounded, is really going to come for everybody's jobs.
So if I were a writer, for example, I would be looking into how I can become a better writer with one of these tools, using the best tools for the job, and maybe differentiating myself that way. Because I just don't think we can stop it at this point.
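The critic-on-top-of-generator loop described above reduces to a simple control structure: draft, critique, revise, and repeat until the critic approves or a revision budget runs out. The sketch below assumes two hypothetical callables standing in for model calls, `generate` and `critique`; it does not assume any particular LLM API, and it collapses the multi-agent writers' room into a single critic for brevity. The toy stand-ins exist only to show the control flow.

```python
from typing import Callable, Optional, Tuple


def refine_with_critic(
    prompt: str,
    generate: Callable[[str, Optional[str], Optional[str]], str],
    critique: Callable[[str], Tuple[bool, str]],
    max_revisions: int = 3,
) -> Tuple[str, int]:
    """Draft, then alternate critique and revision until approved or out of budget.

    generate(prompt, previous_draft, feedback) returns a new draft (previous_draft
    and feedback are None for the first draft). critique(draft) returns
    (approved, feedback). Returns (final_draft, revisions_made); if the budget
    runs out, the last revision is returned without a final review.
    """
    draft = generate(prompt, None, None)
    revisions = 0
    while revisions < max_revisions:
        approved, feedback = critique(draft)
        if approved:
            break
        draft = generate(prompt, draft, feedback)
        revisions += 1
    return draft, revisions


# Toy stand-ins for model calls (hypothetical), only to exercise the loop.
def toy_generate(prompt, previous, feedback):
    return (previous or prompt) + "!"


def toy_critique(draft):
    enough = draft.count("!") >= 2
    return enough, "" if enough else "needs more energy"
```

The revision budget is the important design choice: a critic that never approves would otherwise keep the loop, and the inference bill, running indefinitely.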

[00:46:30.049] Kent Bye: Yeah. And you have companies like Valve with Steam, where they have taken a strong stand against crypto, so there are no crypto integrations in any of the games that they're distributing on Steam. And with AI-generated assets, they came down saying, we don't want any games that have these AI-generated assets, which I can understand in the case of, like, shovelware, now that it's more open to get games onto Steam. But I feel like there's going to be a limit to having these complete bans, because I can see a use of it where it's perhaps improving creativity. But I think the other dimension there is whether there's any intellectual property that you may be unknowingly integrating into your work through it. So yeah, all these discussions are happening right now and are, I think, part of the larger discourse, especially when it comes to distributing these different types of VR or immersive experiences. But I did want to give you a chance to talk about some of the other projects that you had passed along, some of the cool sleeper projects, whether it's from Hyperfy or Polygonal Mind or Vipe.io, Webaverse or Moemate.

[00:47:33.846] Adrian Biedrzycki: Oh, sure. Yeah, I think a lot of the spirit of the crypto community, despite the fact that everybody's down in the market, is actually being kept alive in these little pockets. So I think Hyperfy by Ash Connell is actually doing a lot of the things that I wanted to inspire people to do with Webaverse, which is just to create this web-based system where people can build anything and upload it. And these days they're having float parades with vehicles that are created in-world with these tools. It's super amazing. Jin's actually a super huge fan of it as well. Then you have a bunch of cool VRM-based communities, like the Vipe folks from Polygonal Mind, where they're creating these awesome avatars that are actually super cheap to generate. You just go on their site and you pay. Well, it's certainly less than you'd pay for a commission, but you can generate a cool avatar, roll the stats, and mint it on the blockchain. And then you get an amazing VRM file that you can load into one of these worlds, or you can just download it yourself, play with it in Blender, or hack it. A lot of people are doing that and just customizing the shit out of it. And I think that's a very consumer-friendly way of using NFTs, for example, because on the one hand, you do have this digital asset, but it's also relatively cheap. Part of the reason is just that the market is the way it is; they probably couldn't get away with charging much more. But I think they're delivering a lot of value, and I think it's very fair.

[00:48:53.063] Kent Bye: So Polygonal Mind, I know, has done a number of different free avatars. You said that they're behind Vipe.io?

[00:48:59.548] Adrian Biedrzycki: Yeah, so it's the same guys. I think they took a lot of the learnings from, for example, the 100 free avatar collection that they did for VRChat. That's still, I think, one of the main sets of avatars that people are using inside VRChat. They took a lot of those lessons and managed to create a really compelling user-based platform for avatars. And I think they're also opening it up to third-party creators, where you can upload your own avatars and sell them on the marketplace, kind of doing what Valve is doing for video games, but for avatars. Animations too. There's a new company called Heat that has an interoperable animation marketplace for these avatars. It's really cool. You can browse for any animation that you can imagine, and if you're an animator, you can upload your own animation there and sell it. And even some of the stuff that I tried to seed with Webaverse and Moemate is happening right now. Moemate was basically this thing where I said, okay, we have a lot of this AI technology. Can we just put it on your screen and let it read what you're doing, using OCR, segmentation, a lot of the things I was talking about, and have an agent that can understand what's happening? You can talk to it in real time, it'll give you feedback, it has memory subsystems, and it has a module system that you can update. Yeah, there are a lot of really cool projects that I think are touching on some of these open metaverse ideas. On my end, I'm still set on building this open, free simulation that I feel needs to exist and still doesn't, because a lot of the projects I'm talking about, although they have open aspects, aren't fully open. With Vipe, for example, you can download your assets quite easily, but the platform itself is not open source. If you wanted to build your own thing, you couldn't.
Same with something like Hyperfy: completely closed source. It's awesome, and although you can export your stuff, there is platform lock-in, and there are very good business reasons for doing that. Because I think if it were open source, there would probably be absolutely no money coming in, because people would be weighing in their minds, okay, do I mint a world? Do I mint this NFT so that I can use this platform, or do I deploy it myself for free? And I think a lot of people, especially in the hacker community, would just decide to deploy for free, which ultimately means that this thing won't get developed at all. I'm still really bullish on somebody being able to build one of these platforms completely in the open, in a sustainable way, where people are running their own nodes. And maybe the path we can take is that it's full of AI agents or something, and you're paying for the inference costs, something like that. But I think there needs to be some sort of value interchange between the developers of one of these systems and its users. And AI is possibly one of the ways we can do that, because it's one of the rare cases where a lot of people do want to have all the intelligence available locally, but it's so expensive to run that it generally requires a cloud server, or it's too complicated to set up. So there is a value add that a company can provide, for example by providing the cloud services, while the client itself can be completely open source and hackable by anybody. So I've been doing a lot of research projects around that. In fact, these days I'm writing my first official, quote-unquote, research paper on all of this.
I'm calling it ChatWorld. I basically took it upon myself to learn LaTeX, however you pronounce it, to actually produce an AI research paper with the help of ChatGPT, throw together a lot of my ideas, inspire people, and maybe even release some of the open source stuff that I wasn't able to over the last couple of years, and at least show it to the world and see what they think.

[00:52:31.326] Kent Bye: Awesome. Well, finally, what do you think the ultimate potential of the open metaverse, WebXR, and the future of all these immersive technologies with AI might be, and what might it be able to enable?

[00:52:46.278] Adrian Biedrzycki: That's a tough question. I'd like to think that, if it's done in an ethical way, this is a substrate upon which we can build the next version of the internet. I still believe in the metaverse. I don't necessarily believe that it's going to be one company. I don't believe that it's going to be one thing. But I do think that, especially with things like Vision Pro and this culture permeating through the collective unconscious, people are going to need some platform where we can connect openly without having to worry about these financial incentives or about interoperability. I can just put on a headset and join anybody, anywhere, whether they're in Africa or here in Canada, or whichever hardware device they're on. I think that kind of needs to exist. I'm actually really bullish on AI helping us to develop that technology, because in one way, AI is also the universal translator. You can feed it any sort of data and tell it to translate it to a different format. So maybe that means translating literally between data formats like GLB and USD. Maybe that means translating between languages. Or maybe that just means that in the future, we'll be able to say, okay, there's this software platform that I want to interoperate with, but I have this headset, which has a different set of APIs; can you just write me the software that will make that run? And who knows, within the next five years that might actually be a thing that's within the hands of a developer. And maybe that's the metaverse. I'm actually super hopeful that this is still going to happen. It's just a matter of how you get from point A to point C.
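Any GLB-to-USD translation, whether hand-written or AI-generated, has to start by reading the GLB container. Per the glTF 2.0 specification, a GLB file is a 12-byte little-endian header (magic `glTF`, version 2, total length) followed by chunks, each prefixed with a length and a type: a JSON chunk describing the scene, and an optional BIN chunk holding buffer data. VRM avatars use this same container. The sketch below reads and writes only that container layer; the USD side is out of scope, and `read_glb` and `write_glb` are illustrative names, not functions from any library.

```python
import json
import struct

GLB_MAGIC = 0x46546C67  # b"glTF" read as a little-endian uint32
CHUNK_JSON = 0x4E4F534A  # b"JSON"
CHUNK_BIN = 0x004E4942  # b"BIN\0"


def read_glb(data: bytes) -> tuple[dict, bytes]:
    """Parse a binary glTF 2.0 (GLB) container into (json_document, bin_chunk)."""
    if len(data) < 12:
        raise ValueError("too short for a GLB header")
    magic, version, length = struct.unpack_from("<III", data, 0)
    if magic != GLB_MAGIC or version != 2:
        raise ValueError("not a glTF 2.0 GLB file")
    if length > len(data):
        raise ValueError("truncated GLB")
    offset, doc, binary = 12, None, b""
    while offset + 8 <= length:
        chunk_len, chunk_type = struct.unpack_from("<II", data, offset)
        chunk = data[offset + 8: offset + 8 + chunk_len]
        if chunk_type == CHUNK_JSON and doc is None:
            doc = json.loads(chunk.decode("utf-8"))  # trailing space padding is valid JSON whitespace
        elif chunk_type == CHUNK_BIN:
            binary = chunk
        offset += 8 + chunk_len  # chunk lengths are 4-byte aligned per the spec
    if doc is None:
        raise ValueError("missing JSON chunk")
    return doc, binary


def write_glb(doc: dict, binary: bytes = b"") -> bytes:
    """Build a GLB from a JSON document and an optional binary buffer."""
    js = json.dumps(doc, separators=(",", ":")).encode("utf-8")
    js += b" " * (-len(js) % 4)  # JSON chunk is padded with spaces
    out = struct.pack("<II", len(js), CHUNK_JSON) + js
    if binary:
        binary += b"\x00" * (-len(binary) % 4)  # BIN chunk is padded with zeros
        out += struct.pack("<II", len(binary), CHUNK_BIN) + binary
    return struct.pack("<III", GLB_MAGIC, 2, 12 + len(out)) + out
```

Once the JSON document is in hand, the real translation work (mapping glTF nodes, meshes, and materials onto USD prims) is where either a human or an AI assistant would spend most of its effort.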

[00:54:22.917] Kent Bye: Awesome. Is there anything else that's left unsaid that you'd like to say to the broader immersive community?

[00:54:28.060] Adrian Biedrzycki: Um, I'm looking for work. I don't know if any of these ideas are super interesting to people, but yeah, I'm definitely looking for work and looking to connect with people on Twitter. If you want to DM me anytime, I think my DMs are open. If they're not, I'll make sure that they are right after this podcast.

[00:54:46.829] Kent Bye: Awesome. Well, Adrian, aka Avaer, thanks so much for joining me to give a little bit of an update on your insights into the future of the open metaverse, WebXR, and the crypto integrations of all that, and also a little bit about what's happening with AI and how that's going to play into all of it. Lots of really fascinating potential futures as we're at this intersection of all these things coming together. So thanks again for taking the time to help break it all down.

Adrian Biedrzycki: Yeah, thanks for having me, Kent.

Kent Bye: So that was Adrian Biedrzycki. He goes by Avaer online, and he's a WebXR developer who's been working on open metaverse technology stacks and doing XR stuff for a long, long time. He's one of the co-founders of Webaverse and Moemate, and also of M3, the Metaverse Makers hacker collective, and he also happened to pick up the WebXR Developer of the Year award from the Poly Awards back in 2021. So I have a number of different takeaways from this interview. First of all, Adrian, or Avaer as I often refer to him from his online handle, is always looking at the frontiers of what's coming next with all these different immersive technologies, so it was quite fascinating to hear some of the different things he's been looking at and the different trends that he's seeing. He's on the side of AI taking over everything. I'm maybe a little bit more skeptical of how AI is going to play out, at least in the short term, but in the long term, I think what he's saying is that it's almost inevitable that AI is going to continue to get better and improve. And with that, I think we all have to reevaluate what we're doing in our jobs. Are we going to somehow be displaced, or are there going to be specific niches that we can each find where we can embrace these technologies and get ahead of the curve by being fluent in this new type of interaction? He uses Copilot, and he's been doing all sorts of dialectical explorations, getting business advice and help forming his business plan, and even developing Moemate, the large language model driven virtual assistant that sits by your side. So yeah, he's really all in and just seeing where this is all going to go in the future. Over time, he sees this becoming a ubiquitous technology that disrupts a lot of the different ways we sustain ourselves as businesses. It was also quite interesting to reflect upon the strained business models of working as an open WebXR developer. He's actually currently looking for work, so if you're in the open WebXR space, and especially if you're looking at interoperability, he's certainly open to finding some paid gigs to get involved with. But I think that's an issue that came up in previous conversations I recorded at the Decentralized Web Camp back in 2019 that haven't been published yet: how do you sustain yourself as you're working on the open web? Most web-based projects have at least had advertising models, but as we move into the spatial web and think about new business models, what are the different aspects of value exchange?
And so he's postulating that maybe in the future, with these AI agents and the cost of training and sustaining all of them, people may decide that some of these interactions and experiences are worth paying for. So, yeah, certainly interesting thoughts as we continue to move forward. He also did a whole exploration of the crypto space and got a little bit disillusioned in certain ways with the alignment between the people who were involved in some of these projects and the long-term support of these technologies. There were a bit of misaligned incentives, as he's saying: people weren't necessarily concerned with some of the more idealized aspects of building the open and interoperable metaverse. Folks in the crypto space were very much interested in short-term profits, which didn't always align with supporting the long-term sustainability of some of these approaches. So for me, these technologies are somewhat agnostic; it's more about the values that are driving them. And so if there's a deeper cultural shift where people are using these technologies to really sustain the development of these technology stacks, then at some point in the future, things may change. But right now, I'm still a little bit more bearish when it comes to this intersection between crypto and the open metaverse.
So it's certainly something that he's looked at, and he's pointing out some of the different projects that he gets excited by, as well as Jen, who has also been at the forefront of trying to create lots of cooperative and collaborative conversations around the open metaverse and interoperability, with Jen getting very involved with the Metaverse Standards Forum by the Khronos Group. So, yeah, as we continue to move forward, it'll be interesting to see how XR and AI continue to blend together, and you get a nice slice of a number of different projects that Adrian's paying attention to in the course of this conversation. So, that's all I have for today, and I just wanted to thank you for listening to the Voices of VR podcast. And if you enjoy the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, and so I do rely upon donations from people like yourself in order to continue to bring you this coverage. So you can become a member and donate today at patreon.com. Thanks for listening.