Matthew Niederhauser and John Fitzgerald are both co-founders of Sensorium as well as technical directors at ONX Studio. They have both been working on a number of immersive XR projects that incorporate the latest generative AI and large language model technologies. It’s a rapidly developing field, and they talk about their strategy of keeping up to date through the practice of making different creative technology and immersive storytelling projects.

Niederhauser spoke a bit about Tulpamancer, which has subsequently been announced as one of the 28 immersive stories in competition at Venice Immersive 2023. It uses AI to take input from each user in response to questions exploring their memories, and then uses generative AI to create a unique immersive VR experience that is specific to that person. “Ultimately, every interaction produces a unique work that is deleted at the conclusion of its viewing, left only to resonate in the minds of each participant.” I’ll be on site in Venice, and I’m really looking forward to checking out this experience and following up with Niederhauser and co-director Marc Da Costa.

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality

Rough Transcript

[00:00:05.452] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR Podcast. It's a podcast that looks at the future of spatial computing. You can support the podcast at patreon.com slash voicesofvr. So this is episode 15 of 17, looking at the intersection of XR and artificial intelligence. Today's episode is with the co-founders of Sensorium and the technical directors at ONX, both Matthew Niederhauser and John Fitzgerald. So during Tribeca, ONX Studio has a whole exhibition of different projects, and Matthew and John weren't showing anything publicly, but they were working on a number of different AI projects on the back end. I know John helped to advise one of the projects at Tribeca Immersive called In Search of Time. It was using generative AI, so he's working with a lot of the AI pipelines to be able to see how they can, you know, really fine-tune the different look and feel and their intention for what they were creating with that piece. And then Matthew Niederhauser has been doing a number of different experiments and prototypes of different generative AI explorations, looking at the ways in which language is either going to be interpreted or misinterpreted when it has this kind of poetic quality to it, and other different art installations that he was working on. He's actually got a piece that he mentions briefly here called Tulpamancer that's going to be premiering at Venice Immersive 2023. And I'm going to be actually hopping on a plane here on Sunday to go out to Venice to be able to see all the different immersive experiences. And I'll have a chance to actually see this project called Tulpamancer, which is a generative AI project. It takes a number of different prompts, and then it dynamically creates a whole entire immersive experience that's very specific to you and your memories that you're entering into the prompts.
So it's essentially like this sand painting-esque piece, like it's new and different for everybody that goes through it. And yeah, I'm very curious to see how that plays out when I see it there in Venice. So that's what we're covering on today's episode of the Voices of VR podcast. So this interview with Matthew and John happened on Saturday, June 10th, 2023 at the ONX Studio exhibition in New York City, New York. So with that, let's go ahead and dive right in.

[00:02:07.632] Matthew Niederhauser: I am Matthew Niederhauser. I am one of the co-founders of Sensorium, an experiential studio, and I am also a technical director at ONX, an XR accelerator and production and exhibition space in New York that tries to do all of the cool things when it comes to the broadest definition of XR.

[00:02:33.165] John Fitzgerald: I'm John Fitzgerald. I'm a co-founder of Sensorium, along with Matthew. And I'm also a technical director at ONX Studio, which is here in the Olympic Tower in New York.

[00:02:43.938] Kent Bye: Great. Love to hear a little bit more about each of your backgrounds and your journey into this space.

[00:02:49.274] Matthew Niederhauser: Personally, I have been taking computers apart for a very long time and been into digital imaging for decades. My entryway into this was through, eventually, photojournalism and eventually trying to tell stories in immersive mediums using camera-based capture. I started with 360, photogrammetry, and volumetric, and we did that through our studio, Sensorium. Had a lot of fun, did pieces at Sundance, Tribeca, IDFA. And at this point in time, we try to use cutting-edge hardware and software to create new experiences. And they can range from almost sculptural-like installations, interactive pieces in headset, to full-on proscenium-type performances. So we love to play with all the available toys out there for this type of creative practice. And yeah, that's our day-to-day. And also enabling other artists to create works here in New York at ONX.

[00:04:02.045] John Fitzgerald: My background is in filmmaking. I was working as a documentary filmmaker and experimental filmmaker. I did a lot of immersive projection-mapped installations and doing weird things with camera capture. And yeah, Matthew and I started talking about collaborating on larger format documentary projects together, and around that time we also started working in 360 video, volumetric, photogrammetry, kind of building our own camera systems and pipeline, playing with off-the-shelf technology, but making it look really fancy and look nice, with higher-quality resolutions. And yeah, that kind of led us to all the fun, immersive, interactive, sculptural art and video projects we're up to these days.

[00:04:48.921] Kent Bye: Yeah, well, I was here last year to see the ONX Studio exhibition, and then we had a chance to actually catch up at IDFA DocLab in Amsterdam after you had the whole ONX Studio with DocLab motion capture stage and all the different performances that you're helping out with. And here we are back at, you know, I'm here for Tribeca Immersive 2023, and the exhibition is happening again here at ONX Studio. And this year, a big theme that I see is a lot of AI-generated Stable Diffusion integrations. And I understand that, John, you were actually working as part of the creative input for one of the pieces that was at Tribeca Immersive called In Search of Time. And Matthew, you just showed me a book that you created with some generative AI. So I'd love to hear a little bit more context for, at what point did you start to explore some of these latest generative AI tools and start to explore what the potentials are for immersive media?

[00:05:38.688] John Fitzgerald: Yeah, I was really interested in some of the first text-to-image generators and capabilities that DALL-E and Midjourney released. We were kind of messing around with ChatGPT and what text AI possibilities were out there. The project with Matt and Pierre happened out of a conversation at dinner once. Pierre had been documenting his kids, just shooting things on his cell phone, and he had just arrived in New York, moved here with his family, and we were looking at getting a little bit deeper into the Stable Diffusion and Deforum pipelines, and this one process in particular, which is called WarpFusion, which allowed us to kind of generate layers of more complex imagery on top of images that had already been captured, using ControlNet. But I guess the real interesting thing was, you know, when we started experimenting, we were really playing with this idea of memories, like how you position your memories of what's happened in the world in relationship to other people. And we were looking at the personal exploration that he'd been filming as he'd just moved to New York. So I was excited to help him produce that project, and it helped solidify a few of the elements of the pipeline to make the film come together.

[00:06:57.390] Matthew Niederhauser: I mean, I've always been waiting to talk to computers my entire life, fluently and in person. But my first real encounter with this type of technology was actually in high school. I used to code in a language called Lisp, which was an early recursive programming language for building neural networks. In my mind, I was working on battle bots and responsive little small tinker toys. And I've been watching this space pretty thoroughly for a while, and I started collaborating with an old friend of mine from college named Marc Da Costa to essentially start coming up with ideas for immersive projects that utilized machine learning tools. And our first real work that we just showed here actually at ONX two to three months ago was a piece called Ekphrasis. Ekphrasis is a term that was used before photography and image making, where it's essentially a literary term for how people would describe paintings through writing. How would you communicate what a painting looked like, or some other image looked like, if the only medium is written language? And we took as a starting point the poetry of C.P. Cavafy, essentially to interrogate the limits of large language models and also Stable Diffusion to interpret what we consider the more subjective but also impressive edges of human language, which is poetry, simile, metaphor, humor, which is also, quite frankly, I feel the quickest way to find out whether you're talking to a computer or not. So it's all based off of a Stable Diffusion pipeline and a text-to-audio pipeline. And it was like, could we create an entire script that would speak and visualize a poem? So it was more of an R&D project. So there were these final films that we made. And sometimes the poetry itself could, you know, be explicit enough that Stable Diffusion had something to grasp onto.
But for some of the more, I would say, deeper resonances of his works that come from Ithaca or Waiting for the Barbarians, you know, we'd have to sort of intercede with the visuals and sort of wear more of our director's caps. I think there's a secret hope that's like, I could have one script and just press enter, and then it would create a beautiful likeness and reading of a poem. So this video piece in the book was essentially about that process, and it's two or three months later and it's already completely dated, just because everything is moving so fast, in a good way. And I'm actually leaving tomorrow with Marc to install a new project in Greece called Parallels, and this is with the Onassis Foundation again. It's more of a sculptural piece. It's going to be a 2.5 meter by 2.5 meter LED wall, and then I place a camera at a specific point on the back of the LED wall so that if you're standing in front, it completely mimics the view, so it turns the LED wall into trying to match us perfectly. It's like a tile of the world, or the scenic view is digital. And then that video feed is being run through Stable Diffusion, generating about an image a second that's responding to time, weather, and sound levels, and we have all this prompt engineering so that it goes, especially, from day to night, where it's essentially a large-scale machine learning sculptural piece. But it's also a very visceral encounter with machine vision in the scope of a landscape. So you encounter it and it fits into the landscape, but it's also completely different. And we're hoping that it is successful and interesting and not just a deep anime dive off of Reddit or something like that. But I mean, it's just happening so quickly right now, these tools and how they can be incorporated into immersive and interactive projects. It's pretty exciting.
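As a rough illustration of the kind of prompt engineering Niederhauser describes for Parallels, where each generated frame responds to time, weather, and sound levels, here is a minimal sketch. Everything in it is an assumption made for illustration: the function names, the hour boundaries, the 60 dB threshold, and the base prompt are hypothetical and are not taken from the actual installation.

```python
def time_of_day(hour: int) -> str:
    """Map a 24-hour clock value to a coarse lighting description (hypothetical buckets)."""
    if 5 <= hour < 8:
        return "dawn"
    if 8 <= hour < 17:
        return "daylight"
    if 17 <= hour < 20:
        return "dusk"
    return "night"


def build_prompt(hour: int, weather: str, sound_db: float,
                 base: str = "a landscape view") -> str:
    """Assemble a text prompt from environmental readings.

    The 60 dB cutoff between a calm and an energetic mood is an arbitrary
    illustrative threshold, not a value from the piece itself.
    """
    mood = "turbulent, energetic" if sound_db >= 60 else "calm, still"
    return f"{base}, {time_of_day(hour)}, {weather}, {mood}"


if __name__ == "__main__":
    # One prompt per generated frame, e.g. roughly once a second.
    print(build_prompt(hour=6, weather="light rain", sound_db=45.0))
```

In a setup like this, the resulting string would be passed along with the current camera frame to an image-to-image model; that step is omitted here because the source does not describe how it was wired up.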

[00:11:16.171] Kent Bye: Yeah, I know that we just saw each other six or seven months ago and it wasn't like the hot topic of the moment at that point in time, at least. But now it seems like things are moving so quickly that the next time I see you, I'm sure there'll be not only a lot of change and innovation, but you'll probably have a few more projects to talk about. I know there's a number of different projects that are here, like Katherine Hamilton has a piece called Shadow Time, and there are some 360 photospheres or at least some spatialized photogrammetry aspects that seem to have some Stable Diffusion type of techniques applied to them. I don't know if either one of you were directly involved with that, but I'd love to hear any reflections on that particular instantiation of using a VR piece, but also having what seemed to be a little bit of depth, like you could see that there are some spatial dimensions of what was being modulated, because a lot of stuff I've seen is just 2D images, but to add that 3D component to it, to have this melding and morphing Stable Diffusion, it reminds me of DeepDream and some of those early animations of seeing this kind of psychedelic imagery, and there were a lot of dog eyes. Like, eyes would pop up, but this has got its own little flair for what it looks like to have these animated artifacts of these images. But I'd love to hear from each of you, going from what we're starting with, a lot of 2D images, 2D video, but we're kind of moving into the more spatialized aspects. I just talked to Brandon about how he's using depth maps as an input into Stable Diffusion. But as we think about XR and the future of spatial computing and having more spatialized dimension, either from photogrammetry or other 3D objects being generated, I'd love to hear some of your reflections on this frontier of moving into the 3D realm with generative AI tools.

[00:12:56.979] John Fitzgerald: Yeah, I can't really speak to Catherine's project directly. I didn't work on it and actually still haven't had the chance to see it in its entirety. But you could probably talk about the depth stuff.

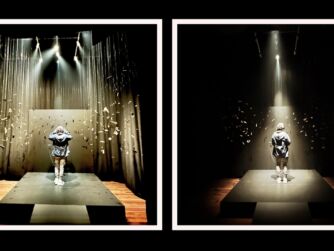

[00:13:06.284] Matthew Niederhauser: It is exciting, and I'm actually, even today, showing a demo of a project that I hope to premiere to the world later this year called Tulpamancer. Once again, it's something I've been working on with Marc Da Costa. And it is based off the notion of a tulpa. We like to come up with very esoteric words that sort of help frame ideas for some of these projects. But a tulpa is essentially an entity that through meditation or some other intensive cognitive process, you externalize a being that you then interact with and is almost like an imaginary friend of some sort. There's also much more serious versions. If you read deeply into the Tibetan Book of the Dead, tulpa is a very advanced form of meditative practice. And we almost see AI as a strange reflection and extension of us in a strange way. Some of the earliest projects we always were focused on was how does AI try to get to know us? What are the linguistic ploys? How do you make people comfortable? How do you pull people back and forth in terms of opening up to an artificial intelligence? So, the notion of this is you actually sit down at a pretty retro computer terminal and you answer a series of questions, and the voice is that of a shaman, actually, and we might play with that a little bit. It's honestly in a very early prototype stage, but through that interaction, we immediately start sending out that language to ChatGPT and Stable Diffusion in order to generate, within 60 to 90 seconds, like a six-minute voiceover that reacts specifically to what you input, and then also send it to Stable Diffusion to create equirectangular images with matching depth maps, which essentially gives you the chance to turn it into something completely volumetric and ways to animate it as a particle system and play with it to sort of bend space and time in a way that matches the tone of this voiceover.
So every single person who does it has an individual experience that is also then deleted after you experience it for yourself. So it's like a sand painting. In a way. And hopefully the same meticulousness of a sand mandala. But it's pretty crazy. And what I was sort of demoing today was especially the voiceover. And some of the text-to-speech models are just really amazing in terms of the breathiness, the tone. It doesn't feel like a DMV automated system, you know, but what I'm very excited about is visualizing it in volumetric space. And there's some other weird techniques we're doing with depth maps, but we're also looking very closely at the earliest stable AI text to 3D objects. And there would be some interceded moments with, like, maybe some CGI interactions that, like, trigger your next ML space, like, or your next space that has been generated by stable diffusion. And that is super exciting. And once again, I mean, I'm trying to get it out this year, but could just be in the dustbin in another 12 months, you know, where the text to 3D objects. I know some people are working on startups that are doing text to motion capture information. And I personally feel that this is how the metaverse is really going to be built. And there will be individualized worlds, and they'll come together. And the computing power and sophistication of these machine learning models are getting to this point where I think we'll get much better 2D video, but the 3D aspects of it are going to come very quickly as well. And it's a super exciting tool for spatial computing and immersive experiences in general.
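To make the equirectangular-plus-depth-map idea a bit more concrete, here is a minimal sketch of how a depth map that matches a 360 image could be turned into a cloud of 3D points, which is the kind of data a particle system would then animate. This is only an illustration under stated assumptions, not the Tulpamancer pipeline: the function name, the axis convention (y up, z forward), and the use of plain nested lists for the depth map are all choices made for the example.

```python
import math


def equirect_to_points(depth):
    """Convert an equirectangular depth map into a list of (x, y, z) points.

    `depth` is a list of rows, each row a list of distances from the viewer.
    Row 0 is the top of the image (looking up), and columns sweep longitude
    from -180 to +180 degrees. Each pixel is sampled at its center.
    """
    height = len(depth)
    width = len(depth[0])
    points = []
    for row in range(height):
        # Latitude runs from +pi/2 at the top edge to -pi/2 at the bottom edge.
        lat = math.pi / 2 - (row + 0.5) / height * math.pi
        for col in range(width):
            # Longitude runs from -pi at the left edge to +pi at the right edge.
            lon = (col + 0.5) / width * 2 * math.pi - math.pi
            r = depth[row][col]
            x = r * math.cos(lat) * math.sin(lon)
            y = r * math.sin(lat)
            z = r * math.cos(lat) * math.cos(lon)
            points.append((x, y, z))
    return points


if __name__ == "__main__":
    # A tiny 2x4 depth map where everything is 2 units away: the points
    # all land on a sphere of radius 2 around the viewer.
    flat_depth = [[2.0] * 4 for _ in range(2)]
    for point in equirect_to_points(flat_depth):
        print(point)
```

Each point would normally also carry the color of the matching image pixel; once the scene is a point cloud, displacing or scattering those points over time is one way to get the bending of space that the voiceover is meant to match.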

[00:17:10.071] Kent Bye: Yeah, I'd love to hear some of your thoughts on that, John, because you're obviously also with Sensorium in the XR space and also dabbling in all the generative AI, Stable Diffusion, ChatGPT, all the hottest AI machine learning models that are out there. But yeah, what do you see as the things that get you excited about where this is all going?

[00:17:26.108] John Fitzgerald: Yeah, one of the things that I'm working on right now is I've actually spent about the past eight months getting to know a mentor of mine that I hadn't actually met in person, but Godfrey Reggio, the director of Koyaanisqatsi and the trilogy of films that he made in the 80s with Philip Glass soundtracks. It's an epic, epic journey. I think I saw the film first when I was 19. I probably watched it 50 times before I turned 25. I am indebted to this work because I just watched it so many times. It's a wordless depiction of humans' role with nature and technology and the processes of modes of production that are changing and how technology is kind of the new host of life, which is the argument that Godfrey makes. But I'm not going to give away a lot right now because it's still quite early in many manifestations of what I'd like it to do, but Godfrey and I, we've been really interested in exploring ways that we can generate a kind of reimagined version of his archive of films and the inherent decay that will come with generating kind of new synthetic forms of models based on footage that he's already captured. And yeah, early phases of exploration of how that will manifest, but we're thinking of a kind of large collective projection space that multiple people would be inside of, choosing your own adventure, if you will. So you'll be training a new model on his work.

[00:18:50.836] Kent Bye: Is that what you mean? Yeah. Yeah, and then have you gotten into training your own models, or have you been pulling models off the shelf for a lot of your work, Matthew?

[00:18:59.342] Matthew Niederhauser: I've been pulling a lot of off-the-shelf models. I mean, there's such diversity and amazingness of them. And that was actually one of my favorite parts of Ekphrasis, which was the first project I talked about. I mean, it's literally an infinite dive, not only in models and seeds but getting into the variables that are involved in the image generation process. I have been contemplating seriously, though, as I said, I worked as a photojournalist for 8 to 10 years, when I was based out of Beijing, and a lot of the work was focused on the rapidly changing cityscapes, you know, and modernity as it started manifesting for a billion people on the other side of the world. So I have this 8-9 terabyte archive of work that is ready to go in some ways for training. It's very expensive to train on that many images right now. But there is sort of a latent project in my mind that wants to return to some of my previous media-making practices. I have entire photo series. It's like, I want to make a book. I want to do things with it. And now there's another layer of angst about my previous. Now I want to explore it using these new ML tools. But I don't know. I mean, the power required for that, I mean, it's becoming much more accessible. But, you know, in the same way Sensorium was really built off of experimenting with the newest available hardware and software to create new experiences, you know, we still are trying to stay at the cutting edge of tools made by other incredible people and finding ways to recombine and use them to tell new stories, and new stories that are more fitting to that medium, as opposed to thinking that every cinematic trope should make its way into our world of XR storytelling.

[00:21:04.502] Kent Bye: Yeah, in a very similar fashion, I've been waiting for a long time for the speech-to-text translation to get good enough to be able to get transcripts for my whole archive of podcast interviews. And I found recently WhisperX with diarization, with the whole pipeline, that I could get a transcript of an hour-long podcast in four to five minutes, and then have a pipeline to process it and digest it and import it into my website. So there's still a lot of cleanup and architecture work that I want to do with that, but it's something that is getting here a lot quicker. I've been sort of waiting for this moment for years and years and years, because it would have cost like $40,000 to $50,000 to get transcripts for all the stuff. But now I can run my GPU for a couple of days to get all of my published interviews. So it's a really exciting time for what's going to be made available with all these different multimodality interfaces to these machine learning and artificial intelligence models and whatnot. So yeah, I'd love to hear any other thoughts on what you're really excited about, where things are going in the future.
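The processing step Kent describes, turning diarized speech-to-text output into a publishable transcript, might look roughly like the sketch below. It assumes the transcription tool has already produced a list of segments, each with a start time in seconds, a speaker label, and text; that segment shape and the function names are assumptions for illustration and are not WhisperX's own API. The output format mirrors the timestamped speaker turns used in this rough transcript.

```python
def format_timestamp(seconds: float) -> str:
    """Render seconds as [HH:MM:SS.mmm], the style used in this transcript."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"[{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}]"


def merge_turns(segments):
    """Join consecutive segments from the same speaker into a single turn."""
    turns = []
    for seg in segments:
        text = seg["text"].strip()
        if turns and turns[-1]["speaker"] == seg["speaker"]:
            turns[-1]["text"] += " " + text
        else:
            turns.append({"start": seg["start"],
                          "speaker": seg["speaker"],
                          "text": text})
    return turns


def render_transcript(segments) -> str:
    """Produce one timestamped paragraph per speaker turn."""
    lines = [f'{format_timestamp(t["start"])} {t["speaker"]}: {t["text"]}'
             for t in merge_turns(segments)]
    return "\n\n".join(lines)


if __name__ == "__main__":
    # Hypothetical segments, shaped like typical diarized output.
    demo = [
        {"start": 5.452, "speaker": "Kent Bye", "text": "Hello, my name is Kent Bye,"},
        {"start": 7.9, "speaker": "Kent Bye", "text": "and welcome to the podcast."},
        {"start": 127.632, "speaker": "Matthew Niederhauser", "text": "I am Matthew Niederhauser."},
    ]
    print(render_transcript(demo))
```

Merging by speaker before rendering keeps each turn as one paragraph, which is also the point where the cleanup Kent mentions (fixing misheard names, mislabeled speakers) would naturally happen.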

[00:22:00.332] John Fitzgerald: Yeah, I think we should pick your top 10 list of interviews and train a model, and then build a fake interview, like a new kind of Kent. Infinite Kent talk. Kent 2.0, where the interviewee is all generated on the questions you've asked and the framework of thinking that you have. Yeah, there's something there. We should keep talking about that.

[00:22:22.297] Kent Bye: Yeah, getting more and more into process philosophy, it's sort of like I see myself as an ever-refolding entity that has changed over time, so it's difficult for me to sort of pin down one thing. Also, what are the ethics around it? You know, I'm happy to do any of the stuff on my contributions to conversations, but there are still, I think, some open questions in terms of the extent to which people in interviews like this have consented to having their body of knowledge or conversations be shared into some sort of distilled model. So I think as we move forward, the ethics around that are still a lot to be sorted out. And I have to figure that out for myself in terms of the consent models for people to participate in something like that. So yes, that's exciting, but also I think there's stuff to be figured out.

[00:23:06.963] Matthew Niederhauser: Yeah, I mean, these massive progressions that we've been witnessing have largely been built on working with materials without consent. And it's a strange time to celebrate that, but also to be pulling apart this huge leap forward on who it was built on and why. And it's easy to be celebrating the corpus of human, digital, everything, text, image, sound, but there needs to be better consensus on how things are trained, why, and, you know, always the never-ending struggle for creative professions of getting credit and potentially, you know, a little monetary support here and there for our lifelong dedication to some of these art-making processes. So it's exciting, and at the same time, you know, I do hope it continues to create better tools for us to use as well. And I think it'll be interesting once it becomes more readily available for people to iterate on their own archive of work. I mean, obviously a little more complicated for you insofar as the conversational basis of your practice. And, you know, I am cautiously optimistic, well, generally about the world in general. That's one of my favorite terms, generally in general. But I'm just completely fascinated by how people are using this in all sorts of different mediums, from theater to drawing to animation. It's exciting, but it's gonna take vigilance, quite frankly, about the models that we're using and how they were made, and that's why I feel like having a lot of this be open source and transparent, insofar as we can see into the black box of what's actually in there, matters. But I think it's an exciting time to start using these tools to create, especially within the XR space.

[00:25:13.107] Kent Bye: There's certainly a lot of, let's say, stolen labor that is either being transformed in a fair use context or appropriated in a way that is not fair use and violating those IP and copyrights. So I feel like that's a debate that's unfolding. And as things continue to evolve, like you said, yeah, these models that are based upon the public domain or based upon Creative Commons or trained with being in right relationship to the data, I think is a key part as well. And also, recognizing limitations and biases of the data, of what is there and what isn't there, I think is also important to recognize. But I get excited when I see what all the artists and creatives are doing, like what's being exhibited here at ONX Studio. You know, I think there are a lot of pipelines and tool sets that are being developed so that when we can produce these models that are maybe produced in more right relationship to the data they're based upon, then we can sort of live into this more utopic vision of really capturing this archive of human knowledge and creative expression and culture in these models that are a few gigabytes in size, which is really astounding when you think about it. But yeah, I'd love to hear any other thoughts about what you're excited about as we move forward in this space.

[00:26:18.544] Matthew Niederhauser: I am the total cognitive representation of all of my friends, family, experiences, all as one. I am my own ML model. And I hope to continue to talk to computers in the future. Well said.

[00:26:35.100] John Fitzgerald: Well, I think one thing that I'm optimistic about is hopefully there'll be vast improvements in how medicine and medical information will be developing tools that can help humans in the future. I think the art stuff is quite fun, and there'll be a quick drive to make quick entertainment. That seems like it's happening now.

[00:26:55.724] Kent Bye: Awesome. Well, I guess to wrap things up, I'm wondering what each of you think the ultimate potential of spatial computing with generative AI and the future of immersive storytelling might be and what it might be able to enable.

[00:27:09.350] Matthew Niederhauser: Well, I've always felt that the future of immersive storytelling is this strange, gentle balance between theater, video games, and still cinema. And it's hopefully going to see more social mass gatherings, new worlds to explore. I don't know how quickly the hardware is going to be able to deliver the seamlessness of the experiences that I think is going to lead to mass adoption of this type of work. It's obviously, you know, we've been through a few hype cycles. I would say we even went through a hype cycle in the 90s and of course the mid-teens, and everyone is up in arms again about Apple. I'm getting literally emails from relatives I haven't heard from in years being like, what does this mean right now? But you know, I think that there are going to be a lot of use cases for it in terms of how we work, communicate, play, that are going to be common features in our lives in the same ways that we've figured out how to work with mobile devices, tablets, swiping and pinching, and it is leading to what I think would be a more satisfactory form of interacting with digital data. And in my own opinion, it's going to be generational shifts. I will still like to be in a darkened theater and watching a movie. Fundamentally, you know, there's something about that that I think those Gen Zers just don't appreciate or don't care about. But once again, when it comes to vigilance, it's also going to be super important because it is the ultimate attention-dominating and eating form of media consumption. I want to have more lifelike co-presence when I talk to somebody afar. I would love to design more things spatially. And I think that there are new entertainment forms and storytelling methods that will enable just completely different ways of playing with the world and interacting with each other. And, you know, I hope to take part in that still over the next decade or so. We haven't built the killer app yet, but it's something we're thinking about for sure.

[00:29:34.675] John Fitzgerald: Yeah, I think the, you know, ultimate potential of where this technology is going and spatial computing in general is about, hopefully, it's about connecting people and people in place, being aware of others around them, and having a kind of collective awareness of the hopefully more empathetic views of other people. I think it's hard when some of the advertising of some of the new headsets is, you know, father waking up, putting on a headset, going into work, you know, interacting with his kids through a screen and cooking breakfast. It just feels kind of chaotic, and it feels limited, and it doesn't seem like it's an abundant way to grow. But, no, I think there are ways that this can be thought of in more collective experiences, and that's hopefully the approach that will be taken.

[00:30:26.523] Kent Bye: Is there anything else that's left unsaid that you'd like to say to the broader Immersive community?

[00:30:32.048] Matthew Niederhauser: Carry on. Stay strong. Make better work. And you're always welcome at ONX. And yeah, a new chapter is coming, and we're excited about it.

[00:30:43.097] John Fitzgerald: Yeah, come visit us at ONX.

[00:30:46.155] Kent Bye: Awesome. Well, I know that the next time I run into you on the festival circuit or whatnot, you'll have some new work and new innovations that happen in the field. But I just want to do this little check-in, because I know things are changing very rapidly and lots of stuff that's already integrated in projects here. And I expect to see a lot more in the future of these different intersections of XR, spatial computing, immersive storytelling, and generative AI. So thanks again for taking the time to share what you've been tinkering around with, and looking forward to see what you create next. So thanks for joining me today. Always a pleasure.

[00:31:15.392] John Fitzgerald: Thank you, Kent.

[00:31:17.133] Kent Bye: So that was Matthew Niederhauser and John Fitzgerald. They're both co-founders of Sensorium, as well as technical directors at ONX. So I have a number of different takeaways about this interview. First of all, well, ONX Studio is really at the forefront of looking at all the different latest immersive technologies, as well as how they start to blend them with other emerging technologies like artificial intelligence. And so lots of different experiments and prototypes with generative AI and ChatGPT and just kind of pushing the bounds for how to start to blend these two worlds together. So I'm always fascinated to hear from both Matthew and John. I last had a chance to catch up with them in Amsterdam during IDFA DocLab, where they had the whole motion capture studio and a lot of really innovative projects that were showing there. And yeah, I'm super looking forward to the piece that Matthew co-directed called Tulpamancer that's going to be premiering there at Venice. And yeah, just to be able to take a number of different prompts and then basically create this whole emergent, immersive experience that will be very unique to the things that I enter in. And equirectangular, immersive, depth map, all these things, yeah. So I'm just very curious to see taking what we've seen in generative AI and then moving it into more of an immersive space. And yeah, just to see how that starts to work with this kind of emergent nature. And so, yeah, I think I actually mentioned at the end of this podcast that I was going to be looking forward to running into them again, and I'm sure that we'll be seeing the latest work and all these different innovations. And so, yeah, there's just been a lot of different rapid changes, and they're on the bleeding edge of trying to integrate all these latest AI technologies into their work in the immersive space and thinking about it through the lens of environmental design and immersive storytelling.
So, that's all I have for today, and I just wanted to thank you for listening to the Voices of VR podcast, and if you enjoy the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, and so I do rely upon donations from people like yourself in order to continue to bring you this coverage. So you can become a member and donate today at patreon.com slash voicesofvr. Thanks for listening.