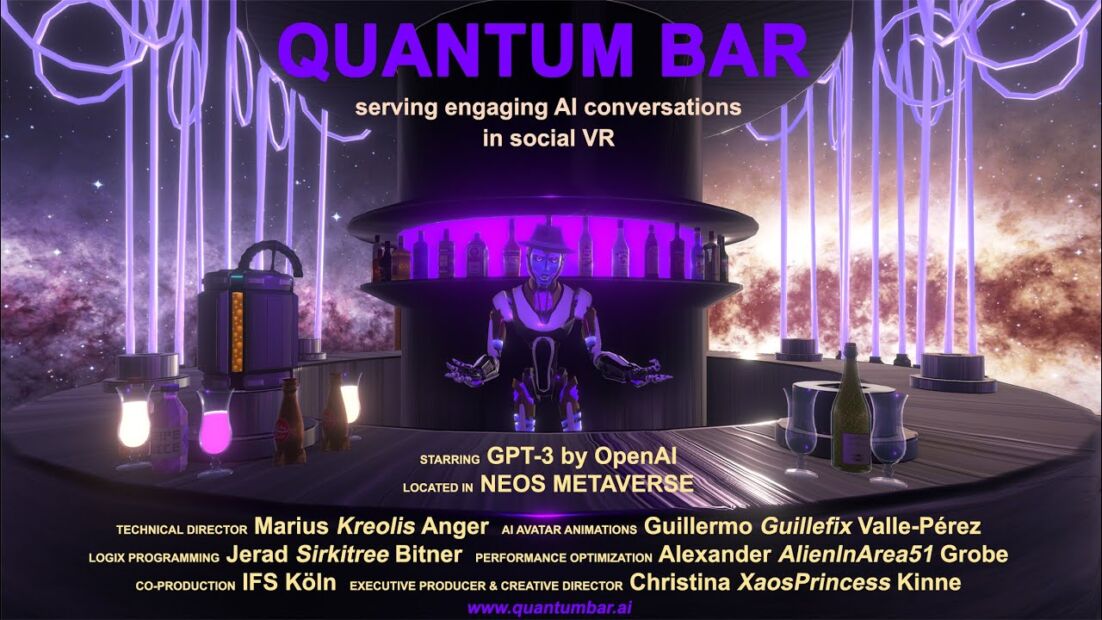

Christina Kinne (aka XaosPrincess) wanted to seed social VR worlds with AI chatbots to help catalyze larger social VR gatherings, so she created The Quantum Bar in Neos VR. It features a robot bartender that uses Google speech-to-text services to interface with GPT-3, creating a real-time conversational interface with an AI agent. I had a chance to catch up with Kinne and lead AI engineer Guillermo Valle Perez (aka Guillefix) at Laval Virtual 2023, where their experience premiered. We talk about the development process, the MetaGen.ai community that Valle Perez co-founded to facilitate the combination of AI with VR, and some of the theories for how deep learning works (see Stephen Wolfram’s article on ChatGPT, his “AI Will Shape Our Existence” talk, and videos from the Philosophy of Deep Learning conference at NYU). We also talk a bit about some of the open ethical questions around AI, and how AI will continue to be integrated into the production and experience of social VR worlds.

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality


Rough Transcript

[00:00:05.452] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR Podcast. It's a podcast that looks at the future of spatial computing. You can support the podcast at patreon.com slash Voices of VR. So, continuing on my series of looking at the intersection of XR and AI, this is the third of 17 in a series, and today I'm going to be looking at a piece called The Quantum Bar, which was premiering at Laval Virtual in Laval, France. So this is a social VR experience where you're going into Neos VR and you're having an interaction with an AI bot. So this is using GPT-3 to take natural language input, and you are able to ask a number of different questions, and they're able to feed it a prompt that gives it all sorts of information around the nature of Neos VR, but also generally tapping into the large language model of ChatGPT to be able to pull all sorts of information as a response. And so this is a project that was in collaboration with Christina Kinne, who is also known as XaosPrincess, as well as Guillermo Valle Perez, who is also known as Guillefix. And they originally created this in order to create a prompt within the context of social VR. When you go into social VR, if there's no one in the world, then it's difficult sometimes to seed that world. So by having a conversation with a virtual being or using some sort of chatbot, it may be able to engage conversations. And the idea is to create enough engagement for other people to come in and to connect to other people as well. So it's sort of a catalyst for different social VR worlds and how you can start to seed them with these different generative AI agents. So, that's what we're covering on today's episode of the Voices of VR podcast. So, this interview with Christina and Guillermo happened on Thursday, April 13th, 2023 at Laval Virtual in Laval, France. So, with that, let's go ahead and dive right in.

[00:01:52.758] Christina Kinne: So my name is Christina Kinne in real life and XaosPrincess in VR. And together we built the Quantum Bar, serving engaging AI conversations in social VR. And now I give it to our AI engineer.

[00:02:11.423] Guillermo Valle Perez: Yes, I'm Guillermo Valle in real life and Guillefix online. And I work at the intersection between AI and VR. My passion is to try to integrate the two and help integrate AI systems in social VR especially, and also help social VR benefit AI progress. And I'm very interested in that intersection.

[00:02:34.774] Kent Bye: Very cool. Maybe you can each give a bit more context as to your background and your journey into VR and AI.

[00:02:40.900] Christina Kinne: So the first half of my life I've been a filmmaker, but then I got a beautiful interruption in the form of two toddlers, which then killed my social life. Still, fortunately, in 2016, by pre-ordering the HTC Vive, my social as well as my professional life was saved by VR. So I entered Philip Rosedale's High Fidelity social VR platform, a distributed metaverse, and there I earned my first spurs and became a content creator. And there we created the first NFTs in 2017, when the term wasn't even coined. And I just loved this idea of "going out, staying in," a quote by a community member, Judas. So I could put on the headset and still care for my kids, and learn and collaborate and socialize in VR. So social VR is my big, big love. And then in the meantime, when High Fidelity pivoted, I became the community manager of Tivoli Cloud VR, which was a fork of High Fidelity, and I also started a second Master of Arts program, this time in digital narratives. And when I was in the ideation for the thesis project, my big premise was: I want to do something which can propel social VR. I want to find something which offers an engaging activity for users so that they stay online, because users make users stick. Nobody will jump to one user or two users because they don't want to crash a date. But as soon as you have like three or more users, you form a group, and then it's easier to connect new users as well and to be welcoming. So I was thinking about, yeah, maybe do something with BCI, raise the sun together.
But then I had a wonderful blessing of fate, which was that exactly in that year when I started the thesis project, 2020, GPT-3 was released, and through my university, International Film School Cologne, I was able to get academic access very early on. That enabled us to start with the development of the Quantum Bar, which brings a GPT-3-driven bartender into social VR, and so we could start early with the development and also the research on presence and privacy and the tech. And I was very happy to work together with a brilliant team. So we met Guillefix, and our CTO is Marius Anger, aka Creolus in VR, and he wrote a wonderful Python backend which basically organizes a group call between OpenAI, the social VR platform, our hosting computer and the speech-to-text cloud. I also want to give a big shout-out to Circuitry, aka Gerard Bittner, who helped us a lot with the Neos coding. So Neos was the platform we eventually chose because it's currently the most potent and versatile on the market, so we chose that one for our vertical slice. And also a shout-out to Alex Grobe, aka Alien in Area 51. He is also a Neos resident who helped a lot with the optimization to get a good performance for us and for the end users, and it was just a wonderful journey to develop together. Also a big shout-out to Sonja Unger, who is a great 3D artist as well but now helped to make the whole atmosphere come alive in real life at Laval Virtual. And yeah, the whole journey I felt so blessed to meet such a wonderful team.
And then yet another thing happened: now, since ChatGPT, the latest AI development is exploding, which also inspired us for a second use case of the Quantum Bar. We'd love to establish it as an educational platform which makes AI interaction experienceable for students, teachers, politicians, and decision makers, because we know that with simulation, VR has the highest learning capabilities; you can memorize best if you've experienced something. And so, starting with our GPT-3 chatbot, for example, we want users to experience how even in the tiny span of a 10-minute conversation you can produce positive or negative bias, because the chatbot will answer similar to how you talk to it. So we also want to implement more AIs or more AI features. I cannot wait to implement GPT-4, but that one then as a different bartender, because it would also be nice, if OpenAI hopefully still supports GPT-3, to have a kind of museum of how conversational AI evolved. And of course we're looking forward to 3D object generation, because that would finally enable us to serve our ordered beer or cocktail as an actual 3D grabbable glass.

[00:09:49.586] Guillermo Valle Perez: So my background until recently has mostly been in academia and research. That started when I did my undergrad in physics at Oxford, and I've always been very interested in science and research since then, and before, actually. But after I finished my undergrad and had just started my PhD, two things kind of happened. One is I started getting into AI, so I started my PhD in deep learning theory. I worked on generalization theory of deep learning, meaning trying to really understand why deep learning works at all: why is it able to predict correctly on things which are not in the training data, which classical statistical learning theory couldn't predict, mostly because of the fact that neural networks have so many parameters. People didn't have a theory for why it works even though it has so many parameters. So my PhD was about trying to develop such a theory and trying to say, oh, actually, even though it has so many parameters, there is some implicit bias in the architecture that makes it have an inductive bias, like a preference towards simple solutions, etc. That was like 2015, 2016 or something, when deep learning was kind of starting to grow, basically. And the other thing that happened in that transition was I started getting very interested in just interactive computing in general, basically because I did a lot of math during physics and these kinds of studies, and I was kind of annoyed that we did it on paper. We played with all these beautiful ideas in science and we were just doing it on paper, and it's like, I don't know, it feels like these people are having so much fun in computer games, but I'm having all these beautiful ideas in my head and I can't easily express them.
And like a year later or so I tried VR for the first time, with HTC and then Windows Mixed Reality, and I was completely in love because it captured precisely this idea of, finally, I can start creating the worlds that I had in my head and sharing them with people. And then soon after, I also discovered Neos VR, which introduced me to social VR. And it showed me the necessary next step, because if you want to share them with people, well, you want a social environment. And then I also discovered the beauty of collaborating in VR as well. Later on, I also discovered VRChat and other social VR. Yeah, so that was sort of the beginning of my dual passion of AI and VR. And during my PhD, I was working in AI, mostly theoretically, but on the side, I co-founded the Oxford AI Student Society, and that gave me an opportunity to work on more applied stuff. So for example, we had this fun project to generate Beat Saber levels using deep learning in there. And that also kind of started incorporating VR a bit into it. And after the PhD, actually at the very end, we started this project in OxAI, the AI student society, which originally was called VR AI, like VRAI, which was a project to explore that intersection. And we played a bit with, oh, what if we train reinforcement learning agents in Neos VR or something, you know, integrating with ML-Agents and so on from Unity.
So we played a bit with that. We didn't get too far, but we did end up training some RL agents in Neos, which was quite interesting in how that worked out. But we needed to go farther, right? The idea developed soon after, and what we really wanted was a larger-scale project. Two things: first, a tighter integration with a social VR platform, because even though Neos was very powerful, a lot of our ideas were still not easy to do. And secondly, we came up with the idea that one of the biggest values of VR and AI integration is the fact that you can have AI learn about more things than just language. And we see that now AI has made incredible progress in language, like ChatGPT, et cetera, because there's so much language data available. But if you want AI to learn about other forms of human behavior, like movements, or even synchronized vision, speech, sensory experience, et cetera, there's almost no data for that, so much less. But VR is exactly working on that kind of information for human behavior. So we sort of came up with the idea that we want VR to help AI learn about more of human behavior. And I started this community called Metagen, which originally stood for Metaverse Genesis, but now we just call it Metagen, to bring together people interested in this intersection of AI and VR. And at the same time, I started working in my postdoc, where I worked with some colleagues in Stockholm to develop generative models of movement as one example of something other than language. We actually did a proof of concept where we developed this model. We also found volunteers in VRChat and other places who danced in full body tracking to give their data to the model, voluntarily. And then we trained the model on this heterogeneous collection of data and produced a character that could dance to any song. And so we showed that AI can learn to do other things by using the right kind of data, basically.
Actually, the model is similar to GPT, but it's a significant variation to be able to deal with this kind of data, though still fundamentally a transformer kind of model. And yeah, that proof of concept was quite interesting. So that's where I worked on this sort of generative model of movement. And the things I did in my project were all around that. I first applied it to dance, and then I tried to apply that also to robotics, with some success. Yeah, but now I've left the postdoc to try to have a bit more impact in the world by going more into startups and industry. And more recently, I started to try to grow this community a bit more, Metagen. Then I also collaborated with Xaos, which was my first opportunity to integrate with some of the other AI technologies like GPT-3 and speech-to-text and create a more complete product rather than just individual components and research. And so that was a really exciting opportunity, to create the Quantum Bar. And also recently, I joined Somnium Space, which is another social VR platform, because they're actually quite excited about this idea of, oh, let's integrate AI technologies into social VR, and in particular, let's try to train models that learn about more of human behavior. And my hope is that a lot of the work we do fits out more generally. So I'm a big supporter of open source and open standards. So I want to help make my work generally applicable through that sort of area. And so that's kind of where I'm shooting at the moment.

[00:16:48.017] Kent Bye: OK. That's really helpful. I know there was a Philosophy of Deep Learning gathering that was happening at New York University on March 25th to 26th, bringing a lot of different philosophers and people from the AI community to try to understand what's actually happening underneath and how we can understand what this intelligence is, what's happening, where it's going. I happened to also watch a recent lecture by Stephen Wolfram, who's written a whole paper about how ChatGPT works. I just want to get your quick reactions if you had a chance to see his breakdown of his theory for what's happening underneath the hood. With his more cellular automata orientation, he had a big, long write-up for what he thinks is happening underneath something like ChatGPT. So I'm not sure if you've seen Wolfram's article or recent talks on that. Curious to hear some of your thoughts.

[00:17:31.188] Guillermo Valle Perez: So I haven't read Wolfram's article, but given your comment, I think I should check it out. I have, however, seen something that I think is relevant to what you're talking about, which is Chris Olah's interpretability work. I don't know if you've heard of it, but he has a whole series of articles developing this framework, which he calls circuits, or circuit analysis of transformers, or something like that. Which I think is really cool, because what he's doing is like, OK, this is a black box, but let's actually look into it. Transformers are actually many layers of internal representations in embeddings. And between each two of these internal representations, there is attention-based computation. And it turns out that if you look at what's happening for particular inputs, or particular families of inputs, you can analyze this attention computation and see that sometimes some of these layers implement simple Boolean logic circuits. That's why it was called circuits. So for example, it will do something like, well, I can't think of a particular example right now, but: if fluffy and floppy ears, this is probably a dog. I mean, that will be more applicable to image kind of data. But you get the idea, right? It will actually implement in the inner layers logic circuits that do Boolean logic, like AND and OR operators based on features in the previous layers and so on. And I think that is the most promising approach I am aware of so far for trying to understand what these things are doing. But yeah, I will be interested to compare with Wolfram's thing, which I haven't checked yet.
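
As a rough illustration of the "fluffy AND floppy ears" idea, the sketch below shows how a single weighted unit with a threshold can behave like a Boolean AND or OR gate over features from a previous layer. The weights, the feature names, and the `threshold_unit` helper are hand-picked assumptions for illustration; this is not how circuit analysis is actually carried out on a trained transformer.

```python
def threshold_unit(weights, bias):
    """Return a unit that outputs 1 when the weighted sum of its 0/1 inputs plus bias is positive."""
    def unit(*features):
        total = sum(w * f for w, f in zip(weights, features)) + bias
        return 1 if total > 0 else 0
    return unit


# AND gate: both features are needed to push the sum above zero (1 + 1 - 1.5 > 0).
and_unit = threshold_unit([1.0, 1.0], -1.5)

# OR gate: one feature is enough (1 - 0.5 > 0).
or_unit = threshold_unit([1.0, 1.0], -0.5)


def looks_like_dog(fluffy, floppy_ears):
    """Hypothetical downstream feature: fires only if both upstream features fire."""
    return bool(and_unit(fluffy, floppy_ears))
```

The only difference between the two gates here is the bias, which is one reason simple logic-like behaviour can plausibly emerge from learned weights.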

[00:19:01.379] Kent Bye: Yeah, Wolfram has got this whole model of the basis of reality, starting with, well, your piece is called Quantum Bar, so I think it's appropriate that he's trying to come up with how to generate all of the fundamental models of physics from this programmatic approach of saying that, from a cellular automata metaphor, there's a progression of sequences that you can't skip ahead in; to get to, like, sequence number one billion, you have to actually step through each of them. So there's sort of a programmatic computational theory of the nature of reality, which I think is very similar to process-relational philosophy and Alfred North Whitehead, who says that the underlying basis of reality is processes that are unfolding, which is very similar to saying that all of reality is a program that's unfolding in the cellular automata. But he also emphasizes relationships, and so he has graph theory and hypergraphs as the underlying math structures to try to understand both the nature of reality and AI. So he's applying that to looking at ChatGPT, and he's someone who's worth taking a look at. I feel like there's a bit of a magic to some of these technologies. And I think there's a mystique. And I think the more that we understand that mystique, the more we can understand the limitations. Part of my critique around OpenAI is that we don't know what data it's being trained on. We don't know what the architecture is. And so there's a certain amount of things that are occluded. But we can look at some of these underlying philosophical principles to understand what's possible, what's there, and what you can fix with this reinforcement learning with human feedback to tune it. But anyway, back to the experience of Quantum Bar. My perception of the history of ChatGPT is that ChatGPT 3.5 was released to the public. There were certain aspects of ChatGPT 3.0. I don't know if it was ever released to the public, but you had academic access for a number of years.
They have ChatGPT 4.0 that they wanted to release. I guess in some ways they rushed out a 3.5 version, but you're still back on the 3.0 version. So I'd love to hear about that evolution, and that you're still on 3.0, and whether you plan on upgrading it to 4.0 or if there are certain things that you can only do with the 3.0 version. So yeah, I'd love to hear about your entry point into interfacing with these large language models like ChatGPT.

[00:20:56.317] Christina Kinne: So, of course, when ChatGPT and GPT-4 were released, I was super excited. I can't wait to implement it. Still, I have to say, if I implement it, I will implement it into a different bartender with a different voice, maybe a female one for a change, and a different character, because I experienced that with GPT-3 you are still freer to give it a bit of a personality with a short prompt. So we need to keep our short prompt short because we do the reinforcement memory approach, which is to feed back the whole conversation for each next line, so that in the end the chatbot can remember your name, which you told him initially. So for us it was important not to have to write two-page prompts, but to keep the prompt within three, four lines. And that turned out to work very well. So GPT-3 you can personalize with just a few taglines, basically, and it has this nice friendly character which I kind of grew to love. I know I anthropomorphize, but I just like this character of this bartender. And then we'll have to see how OpenAI proceeds with their release and their TOS, because we were deliberating whether we should implement GPT-3.5 Turbo for this conference. But I did an A/B comparison, giving it the same characterization via prompt and then asking it identical questions. And whereas GPT-3 still had its own character and felt a bit living, which is super important to give the NPC presence in social VR (that was our main goal for the users, to form a connection), the identical questions were answered by GPT-3.5 in a much more sober way, like, "As an AI, I'm not able to la la la la la." And my personal opinion is, of course, it's very dangerous, because people anthropomorphize AI, to be too open about its characterization or to let everybody go and train it, like we saw with what happened to Tay, the Twitter bot.
But on the other hand, I feel if you write it in the TOS, if you are transparent, either as the parent company OpenAI or me as a B2B creator user, if I'm transparent, if I pitch it as AI conversation, if I also give it the tagline or the prompt "you are an AI," and tell the user this is no living being, this is an AI, then as creator users we should be allowed to personalize it, because it's much more connective, with better presence and a more immersive feeling for the user, if the AI counterpart at least seems to have a personality as opposed to reacting like a dictionary.
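
The memory approach described in this answer, a short persona prompt plus the whole conversation fed back in on every turn, can be sketched roughly as follows. This is a minimal sketch under stated assumptions, not the Quantum Bar's actual backend: the persona text, the `Conversation` class, and `fake_complete` (a stand-in for a real language model call) are all hypothetical.

```python
import re

# Hypothetical persona: a few lines, kept short because the whole
# transcript is appended to it on every turn.
PERSONA = (
    "You are an AI bartender at the Quantum Bar in social VR. "
    "You are friendly and you know you are an AI."
)


class Conversation:
    """Keeps the running transcript and rebuilds the full prompt each turn."""

    def __init__(self, persona=PERSONA):
        self.persona = persona
        self.turns = []  # list of (speaker, text) pairs

    def build_prompt(self, user_text):
        """Record the guest's line and return persona + full transcript."""
        self.turns.append(("Guest", user_text))
        lines = [self.persona, ""]
        lines += [f"{speaker}: {text}" for speaker, text in self.turns]
        lines.append("Bartender:")
        return "\n".join(lines)

    def record_reply(self, reply):
        """Store the model's reply so later prompts include it."""
        self.turns.append(("Bartender", reply.strip()))


def fake_complete(prompt):
    """Stand-in for a language model: greets by any name found in the prompt."""
    match = re.search(r"my name is (\w+)", prompt, flags=re.IGNORECASE)
    name = match.group(1) if match else "friend"
    return f" Nice to have you here, {name}."
```

Because the model itself is stateless in this setup, anything it should "remember" has to be present in the prompt, which is why the prompt grows with every exchange and why a short persona leaves more room for the conversation.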

[00:25:13.867] Guillermo Valle Perez: Yeah, I mean, I think Xaos is right that we're using GPT-3 because it's much easier to steer. I feel like GPT-3 is like a relatively well-distributed collection of human behaviors. And so you just need to prompt it briefly in one direction and then it will behave in that particular way or another. Whereas these newer ChatGPT models, including GPT-4, which is, I think, trained similarly to ChatGPT but better, are sort of more coerced to a particular type of human behavior, which they consider more aligned or more useful or something, right? So they do it, but the problem is that then it becomes more difficult to steer it to anything that doesn't align with that. Yeah, so it has trade-offs, obviously, there. And I think in regards to these sorts of use cases that she was talking about, of NPCs that you want to help users, maybe in therapy or somehow giving companionship, et cetera, having a model that's refusing to show any inkling of something emotional-like at all, kind of like ChatGPT is designed to be at the moment, I don't think that's useful. Plus, I think ChatGPT is kind of gaslit, in a way, on things that are even a bit controversial. For example, ChatGPT will say, "As an AI language model, I'm not able to have preferences." Well, I would say that's a philosophically controversial thing. So OK, OpenAI is putting in a particular idea that it's not able to have preferences. But I wouldn't say that's an absolute consensus on what this model is able to do. I think giving it a bit more flexibility will actually be, in a way, more truthful, and more representative of actual opinions of people and use cases, while staying truthful, right? I think trying to make the model truthful, I quite like that idea, but I think they've kind of pushed it a bit too far in a particular direction. And yeah, but I mean, it will be interesting to see how these models evolve.
Like, there are also open source models coming out, which obviously are much easier to customize, right? You can even fine-tune them, but they are much less powerful. On the other hand, OpenAI, in some interviews, and Altman have said that they do want to allow people to make the models more adaptable to their use cases. But I think so far they really have put very little effort into that, because GPT-4's steerability is still not that good, from our experience. So, yeah, I look forward to seeing more models that are more personalizable, and also maybe even being able to have more ownership over them, right? If you have a model that somehow is very attuned to you, I sort of feel that you want to have some level of ownership of that model.

[00:27:57.568] Kent Bye: Yeah, I remember doing an interview with Edward Saatchi, who had been doing quite a lot of work with virtual beings, and his conclusion was that GPT-3 was not robust enough to develop characters that you could give enough personality or steer in different directions. I mean, obviously, as time has gone on, it's become even less capable of having those types of distinct characters that they're embodying. But just from what I know from what I read of Stephen Wolfram on how the large language models work, and there's a critique from Emily Bender and Timnit Gebru about the stochastic parrots, there's a bit of a statistical probability: given a certain prompt, what's the most likely next word to come? And it's chaining together a series of words, but it doesn't know where it's going to go in the end, and it's just kind of taking one word at a time and generating the text, which at the end of the day sounds plausible but has all sorts of potential hallucinations and ways that it can go wrong, in a way that has to be tuned with the reinforcement learning with human feedback to make it so it's not doing this level of harm. So I understand the safety concerns and alignment, that they're trying to not propagate different aspects of harm from these models. But philosophically, when I think about these, I think about things like situated knowledges from feminist theory, which says that you have to have lots of different perspectives and points of view given your own direct embodied experiences. And when you put everything in a giant bucket of all the language on the internet into one, say, character, I think it suffers from a Gödel incompleteness where it's either consistent or complete, but it can't be both.
And when you try to reduce all of the human perspective to one individual output, you're eliminating those situated knowledges where you're getting different perspectives, different points of view, different orientations. I mean, I think you can recreate that with, like, what would this person say? What would this person say in a debate? But again, it's still aggregating that based upon what it's scraped from the internet, based upon that statistical probability. But I don't know if it really has a deep enough understanding of those different perspectives to be able to generate what a certain philosophical orientation would say about a particular topic if it's never been written about before. That's something that humans can do, but something that I think is a limitation of the autoregressive large language models. And I think because of that, you're limited in not being able to have it enter into a slipstream of different personalities. Or maybe you can. Maybe the capacity is there. But from what I get, I feel like there's always going to be that limitation of having a singular perspective rather than having a multitude of different perspectives, and how to speak from the voice of different characters in the context of an NPC or in a narrative context. So yeah, I'd love to hear any thoughts.
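
The "one word at a time" generation described in this question can be illustrated with a toy sketch. It assumes a tiny bigram model (counts of which word follows which) and greedy selection of the most frequent follower; real large language models use neural networks over tokens and usually sample rather than always taking the top choice. The function names and the example corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it in the text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def generate(counts, start, max_words=8):
    """Chain words together by repeatedly picking the most frequent follower."""
    words = [start]
    for _ in range(max_words - 1):
        followers = counts.get(words[-1])
        if not followers:
            break  # the last word was never seen with a follower
        next_word, _count = followers.most_common(1)[0]
        words.append(next_word)
    return " ".join(words)


corpus = "the bar serves beer . the bar serves cocktails . the bar serves beer ."
model = train_bigrams(corpus)
sentence = generate(model, "the", max_words=4)  # "the bar serves beer"
```

The generator never plans where the sentence will end; each step only looks at the most recent word, which is the property the "stochastic parrots" critique points at.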

[00:30:31.751] Christina Kinne: Still, I realized early on with GPT-3 that with just a few lines of prompting you could also create a really, really evil persona, which of course, and totally understandably, was against the TOS to ever publish. But I really got things like, "Children should be eliminated because they don't work." And the idea would be, why not democratize the prompting? You could ask for a content filter for the really extreme stuff, which is already being asked for. So in the Quantum Bar, we also have a content filter that will just ignore questions about extremism or not-safe-for-work content. So you could apply such a content filter to the stuff you can't put into a prompt, but then have people develop their own chatbot personalities from the left to the right, and then have a kind of chatbot democracy which finds some common ground in values. Who is a company to say, "We are allowed to define the values for our chatbot"? That's such a non-objective, subjective opinion, so better to have more voices. And then, because we are all waiting for AGI and hoping for and fearing ASI, if it comes to artificial superintelligence, have this democratized pool of value-holding AIs merged together.
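
A content filter that simply ignores certain questions before they reach the chatbot could look something like the sketch below. The interview does not describe how the Quantum Bar's filter works, so the keyword list, `is_allowed`, and `answer` are purely illustrative assumptions; a production filter would more likely rely on a trained classifier or a moderation service than on keywords.

```python
# Hypothetical blocklist; the real filter's criteria are not given in the interview.
BLOCKED_TOPICS = {"extremism", "nsfw"}


def is_allowed(question, blocked=BLOCKED_TOPICS):
    """Return True if no blocked keyword appears as a word in the question."""
    words = {word.strip(".,!?").lower() for word in question.split()}
    return words.isdisjoint(blocked)


def answer(question, respond):
    """Pass the question to `respond` only if it clears the filter."""
    if not is_allowed(question):
        return None  # the bartender ignores the question
    return respond(question)
```

Keeping the filter separate from the persona prompt means different personalities could share the same guardrail, which is the arrangement suggested in the answer above.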

[00:32:38.801] Kent Bye: Love to hear some of your thoughts on that.

[00:32:40.727] Guillermo Valle Perez: Yeah, I mean, I think you touched on a lot of small different things. Like, for example, you touched on the question of, are these the right kind of architectures and models to basically reach AGI or beyond? And that itself has different sub-questions. For example, are these models expressive enough? I think the answer is yes, because these models have been shown to be computationally universal, to be able to express any computation, and therefore anything that we, as far as we know, would be able to express. But that's a very restrictive question, really. Even if they're expressive enough, are they the right model? Is it possible to make them express the right computation in practice? And maybe not. Maybe trying to have a monolithic model, like you said, is asking too much of one single model. And I think Anthropic calls it, well, I forget what they call it. But it's basically like a group of models that collaborate together; they call it, I think, constitutional AI. But as far as I understand, it's some fancier new version of mixture of experts, where you have a diversity of models which have different opinions because they have been trained differently from each other. And then they sort of collaborate on a single answer. And that seems to be a very promising way. And maybe that's actually a necessary component in the future. I actually am not convinced that it's necessary, but at least it seems useful. And I think that's a good idea. But then this also ties into these less machine learning, less technical and more sociological problems that Xaos was also talking about: OK, who trains these models, or this family of models if it's more than one? And how do we aggregate their opinions if we are to do that? You know, that sounds very much like a political systems problem as well. But it's translated to AI, actually.
Yeah, I think there is a lot of interesting stuff there. I mean, there are other architectural and model considerations, but looking more into the social things that I just said, I very much support the idea of open source models, at least at the current stage. I mean, I don't speak for OpenAI, of course, but I'm under the impression that their strategy in the last few years has been to ensure that they keep power over the field of AI, so that when the really dangerous models come, they actually can exercise this power to keep it safe. That's my external impression of it, but I'm not convinced that that implies that the current models shouldn't be open sourced. Because the current models are not immediately as dangerous as something like a nuclear bomb or something; they could cause some problems if there are some issues, but they won't then cause immediate havoc in the hands of some malicious agents. I think in that situation, which I think we are in now, it makes more sense to make the models publicly available. So then it's the collective intelligence of people just playing with these models that figures out how to go into the next step of making sure the models are used well. So I think that collective intelligence will make sure that there has been enough progress so that, by the time the models are actually really dangerous in the hands of some malicious individuals, let's say, we know how to act against it. We have the necessary security. And I think a collective approach will be better at finding those solutions, probably. So I'm just quite a big believer in finding truth through a very widely spread discussion happening. And if it's so important to make sure these models work well, I think we should very seriously consider using this approach of everyone having a strong influence on the future of these models for making them good.

[00:36:18.789] Kent Bye: Yeah, I have a few comments. One is that I don't believe that a singular model or architecture would be able to overcome the insights from something like Gödel's incompleteness, which says, essentially, that you can either have something that is consistent or complete, but not both. So it's impossible to have one entity with a singular perspective have all of the knowledge contained within it. You actually have to have a variety of different perspectives from different points of view, because there's going to be information outside of that system that they know is true that you can't prove is true, which I think means that incompleteness leads to a sort of pluralism, which means that you need to have a diversity of different perspectives.

[00:36:50.937] Guillermo Valle Perez: I think that, I mean, Gödel's incompleteness, I mean, it's a mathematical theorem that by nature of that is actually very, very general. And I think it also applies to collectives. I mean, you can just formalize a collective as a new formal system. I mean, if you really want to formalize it, you have to imagine the collective either as some formal system at the computational level of physics, or just an intelligent system trying to do math or something, like proving theorems, I don't know. But whatever way, this collective will itself be a system that you can then apply Gödel's incompleteness to, as long as it satisfies the axioms of Gödel's, which are very easy to satisfy, because it's like, OK, as long as this system is intelligent enough to do arithmetic, then it applies, basically. So, yeah, I mean, I just think there's no way to go around Gödel's incompleteness. We just found a fundamental limitation of logic, basically, and it's just gonna apply to any system, unless we figure out some crazy physical system that can do a super-Turing computation, or an infinite number of computations in a finite time, or something crazy. Barring that sort of hypothetical thing that we don't think exists so far, yeah, it seems just like it's a fundamental limitation of logic. And yeah, I mean, we have to design intelligent systems knowing those limitations are there. And so, for example, I'm a big fan of formalizing intelligence based on inference as a foundation. But still, Gödel incompleteness applies in some way there, too. We can't really get around it.
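
[Editor's note: for reference, a standard textbook form of the theorem under discussion (the Gödel-Rosser version of the first incompleteness theorem) is given below. It is not something either speaker stated. Here $T$ is a formal theory, $\mathsf{Q}$ is Robinson arithmetic (a minimal theory that is "able to do arithmetic"), and $G_T$ is a sentence constructed from $T$. The theorem says such a $T$ cannot be both consistent and complete, and because a collective that is formalized as a single system $T$ meets the same conditions, the same conclusion applies to it.]

```latex
\[
  T \text{ consistent},\quad
  T \text{ effectively axiomatizable},\quad
  T \supseteq \mathsf{Q}
  \;\Longrightarrow\;
  \exists\, G_T :\;
  T \nvdash G_T
  \;\text{ and }\;
  T \nvdash \neg G_T .
\]
```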

[00:38:19.298] Kent Bye: When I talk to mathematicians about the ultimate potential of mathematics, one of the things they said is that there's always going to be new mathematics created until the heat death of the universe. So there's always going to be new math and new knowledge that hasn't even been discovered yet. So given that, I think that there's always going to be like an asymptotic curve that you're approaching, and you can choose to have one perspective, but if you have multiple perspectives, you're closer to that. So, yes, Gödel incompleteness says that there will always be new math discovered until the heat death of the universe. And so because of that, you have to continually always have as many perspectives as you can in order to close that gap. So that's sort of my orientation. Yes, it's true. But the more architectures, the more perspectives, the better. So that's sort of my pushback on that, that you need to have a diversity of different perspectives. And that's something that ChatGPT doesn't incorporate. So there's inherently the types of bias that you get. The other thing that I wanted to throw in there is that, you know, I talked to Daniel Leufer of Access Now, and he's looking at things like the regulation for the AI Act going through the EU's trilogue process right now. And so one of the things he pointed out is that there's a tendency to use a sort of utilitarian philosophical, moral, and ethical argument saying that this technology is so beneficial, it works 95% of the time, we should push it forward and then continue to have the alignment be iterative.
The problem with that, in some ways, is that you have the 5% that are not covered, that you have systemic levels of bias that are already incorporated into levels of sexism and racism that are embedded into the culture, and that with these systems, building on that, you're amplifying that bias to the point that's disproportionate to that 5% of marginalized and disenfranchised folks. So you have a deontological human rights approach that wants to push back against this rapid iteration of some of these things. But you still have to develop those systems to understand how to incrementally have those safety methods. But I feel like that's sort of the underlying tension. That would be an argument against the open source. As much as I love the open source, if you take just a utilitarian argument, then you're basically potentially amplifying algorithmic bias and harm against marginalized folks in ways that are not fully being accounted for, with these existing cultural biases being amplified in larger systems. So that's sort of the argument against the open source. I can see the argument for the open source being that you need to sort of incrementally understand how things are developing in order to continue to have AI alignment and to increment. So OpenAI has taken that incremental approach. But there's also AI ethicists who are saying you're pushing out things too fast without really understanding how this is amplifying harm at a systemic level. So I feel like that's sort of the tension that I see with this, and yeah. I don't know if you have any thoughts.

[00:40:53.652] Christina Kinne: Regarding the bias, I think this lies in humanity. And at the moment, we are recognizing what I call the trend biases. So we want to be non-discriminatory against females or persons of color. We don't want to be racist. But for example, ageism is something which nobody cares about because everybody in tech is around 30. Or I've seen other things, like the speech-to-text system doesn't answer to my female voice because probably it's mainly trained in a male-dominated lab. So I think it will be difficult. We will probably need an AI to actually analyze what biases are there. And in this regard, I'm more for let's have it form a good average of the current training data, with humanity or Western tech society hopefully emerging more and more onto an altogether non-biased level, than to say, okay, we identified racism, gender, sex discrimination as the main biases, let's render them out, but forgetting some which aren't trendy at the moment or wouldn't have come to the general consciousness at all.

[00:42:41.576] Guillermo Valle Perez: Yeah, I agree with that. I think that regarding the fact that AI could help us identify biases, I think there is this interesting idea, which I've seen some paper look at, not exactly this question, but something related. But the idea is that I think we could find out that GPT-4, for example, produces, at the moment, less biased answers than something like the average human, or like the average Amazon Mechanical Turk worker, or something like that. Which means that, and I think OpenAI is already exploring this, and other people, research groups, that you could, rather than amplify bias by accelerating AI, reduce bias over time iteratively. You train a model that, in some democratic way, which ties into the previous conversation, finds opinions which are a bit less biased than the average opinion on the internet. Then you scale up that model because it's AI, so it's scalable more than a human, or a group of humans rather, and so just slightly shift the internet discourse to be less biased, then sort of repeat this process to, within that, find a percentage that is slightly better, then scale that slightly better worldview up, and so on. So I think there's a potential mechanism in which bias could be reduced by just actually applying AI in a good way. And, yeah, I mean, the other thing, this also ties to what Xaos was saying about finding the right biases, which we may not even find. I'm in contact with this initiative from some people in Oxford and London called HIA, which is, like, Human-AI Alignments, where they are actually wanting to help people train AI models to identify biases, starting with the common ones of gender and so on, but they really want to also try to research into, oh, what if we find out biases that are kind of subtle and we just don't know, right? And then trying to help make AI systems that find those out.
And I guess the one thing I wanted to mention is kind of a different approach to this whole problem, because everything so far is quite a centralized approach. All the things I'm describing, right, having this sort of very socially good model that we're collectively making, and you know, it's kind of making like an AI judge that is looking over us and we're collectively trying to make it as good as we can. I mean, that may be a bit hard, although I think it's worth pursuing. An alternative approach was proposed by Allison Duettmann from Foresight Institute. And as far as I understand, her suggestion is: OK, we're not really going to align everyone, like all humans, or even all humans and AIs or whatever. So rather than that, let's try to do the other thing that we talked about earlier of trying to give every human a powerful AI model that they own and allow them to bias it, but bias it towards their beliefs and values. And then what this other approach is doing is, rather than create a new centralized system that we hope is really good and helps make society better in some way, we start from the bottom up: what if we empower every individual in a very individualistic way, but empower them all as equally as we can? And hopefully that actually makes collaboration emerge or happen better. I think the hypothesis is that if you have a group of individuals and they're not collaborating as efficiently as possible, if you make them all more rational or more intelligent, then they collaborate better. So if that hypothesis is true, I think that suggestion from Allison could work quite well.

[00:46:15.084] Kent Bye: Yeah, it's a really intriguing thought. The thing that comes up is that Meta has already been promoting this idea of contextually aware AI, which is having basically AI that's aware of everything that you do at all times in all contexts. And I think that, in some ways, I can understand what you're saying with having training from the bottom up. But I think the challenge is trying to integrate that with something like Helen Nissenbaum's contextual integrity theory of privacy, meaning that there's certain data that you may tell to a banker that's very private information about your bank account, your social security number, your passwords, or verifying your identity. And then you have, on the other end, stuff that you maybe talk to a medical doctor about, medical information. And so what happens if you have this contextually aware AI that's ingesting all this stuff and just putting it into one blob? And all of a sudden, someone asks it a question about certain information, then it just sort of blurts it out, just like the ways that you can sort of jailbreak the existing ChatGPT. What if there's sensitive private information that's being collected, and what's to prevent that from being jailbroken? And so I think that's a challenge for how you integrate concepts of privacy if you have this contextually aware AI.

[00:47:19.598] Christina Kinne: Privacy is anyhow a super duper serious topic for all AI training. On the one hand, OpenAI needed and fruitfully used the ChatGPT and GPT-3 completions to train and retrain the model, and you could also, with each DaVinci version, watch in real time how the model was hallucinating less and became better. So you need the user data. To train a good image generating AI, you need tons and tons of data, so much that it now renders the Getty Images logo into photorealistic generations. And this is a fine line. On the other hand, you can say, what happens to copyright, which has been criticized already? And OK, if it's a dead artist who doesn't have any offspring, fine. But what about if you are some artist who has some special style of drawing and you can just make a good living by selling your own drawings? And this is then taken: oh, I don't need to call up Xaos to draw that, I can just tell DALL-E or Stable Diffusion, draw that in Xaos style. And this could be the real damaging thing for people's careers, more damaging, I think, than taking the jobs of graphic designers, because they can maybe learn how to describe their image as a prompt instead of which button to click in Photoshop. But yeah, with the copyright, I see that there will be many discussions, and this is something which isn't solved. And so, in any case, I would be for an opt-in version. Like, maybe offer some advantages. You don't have to pay the 20 bucks a month if we can use your completions as training data. Like the same stuff which has been proposed for Facebook: buy your data. Send us your drawings, and we buy them to train our models. Like, give something back if people give their data. Or, of course, to many people, it might be attractive to have their own AI model trained after their personality. So, I think you can get enough data and you can get enough people who are willing to give their data, but yeah, ask them.
Have an opt-in, not an opt-out, an opt-in.

[00:50:27.827] Kent Bye: And with MetaGen, it sounds like you're in the early phases of prototyping these types of AIs that people are willingly giving their data over to in order to train different models. But I don't know if you have any ideas around the privacy implications of this omnipresent, contextually aware AI that may be tracking everything that we're seeing and doing throughout the course of our lives. I can see a future where that happens, but I don't have a good sense of how the privacy concerns are mitigated.

[00:50:49.877] Guillermo Valle Perez: Yeah, so regarding that kind of AI that we were talking about, like maybe I have a personalized AI that I own and it kind of knows everything about me, or even knows more about me than I do myself, kind of thing. So I'm actually working a bit, well, whenever I have time, on this sort of project. I sort of give this AI that you own the name of AI angels or AI muses. But anyway, the project is exploring precisely how we can solve privacy for this kind of use case. And the idea is to see if we can have one of these models that is smart enough that you can tell it to protect sensitive information, right? Prompt it to do that. Because the idea is that this model will interact with other systems, third-party systems, and even other AI models or whatever. I mean, it has to interact with the world for it to be useful and to be more intelligent as well. Can you find a way to prompt it or train it, et cetera, so that it makes sure that any answers that it gives are not revealing sensitive information, et cetera, right? And so I have been actually experimenting with this, trying to prompt it, saying like, oh, OK, you can have access to my personal information. But for anything that is marked sensitive, if you query another service, try to phrase the query in a way that doesn't reveal information. And I give examples in the prompt. Okay, maybe I say that in sensitive information, I put my sexual orientation. Like, okay, if there's some query that you need to make relative to that piece of information, try to query it, for example, by saying like, oh, what is the best advice for such and such for different sexual orientations, right? And get an answer that is kind of generic, and then combine the sensitive information and the external information only in your local system.
Unfortunately, the only model that I found can do this with some reliability is GPT-4 so far, which, I mean, is the most powerful model we have, which doesn't quite solve the problem because no one can yet run GPT-4 locally in any sense. But this ties into an idea that the author of LangChain, which is a very popular language model library, has mentioned in a podcast, which is that what he really wants to see is some open source language model that is not as big as GPT-4, but is actually small. And the hypothesis is that GPT-4's size is in big part because it knows so many things, it has so much knowledge. But could we train a language model that is much smaller and easier to run locally, but which is very, very good at kind of reasoning and logic without needing to have so much knowledge, right? And so it could actually follow instructions like, don't reveal sensitive information that's marked and try to make queries more generic, et cetera, without needing to know when the pope was born or whatever. And so you could run it locally. And I think that's a super interesting research direction for privacy due to all these reasons. And yeah, I'm really interested to see where that goes. I mean, I can also comment a little bit on the data privacy that Xaos was talking about, because, yeah, I've also been exploring that a bit, although not in so much technical depth yet. But I can say, for example, that the systems that we are making in Somnium, I mean, from the very beginning, since we started talking about them, we've always wanted to have them be very privacy aware, as much as we can do it. And so what we are thinking about is, by default, data is stored locally on the user's computer. And by default, it just doesn't leave that. And then it's basically opt-in, as Xaos was saying, if they want to send that data to some AI system training or whatever.
Yeah, I hope that happens, and I hope that if they do send the data to something, there are some ways for them to get value back. I think, actually, Spotify and these other platforms show that some generic way to distribute value to content producers can be done, and so I'm curious to see if that could transfer to this sort of AI age as well.
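
[Editor's note: the query-generalization idea described above can be sketched in a few lines. This is only an illustration under stated assumptions, not the speaker's implementation: he describes prompting a language model to do the rephrasing, whereas this sketch swaps in plain deterministic code. The names `Fact`, `outbound_query`, `leaked_keys`, and `combine_locally` are hypothetical, and the verbatim substring check is a deliberately naive stand-in for a real leak detector.]

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Fact:
    key: str                 # e.g. "orientation"
    value: str               # the user's actual value; stays on the local machine
    sensitive: bool = False  # marked by the user, as in the prompt described above


def outbound_query(topic: str, facts: list[Fact]) -> str:
    """Phrase a query for a third-party service without sensitive values."""
    public = [f"{f.key} is {f.value}" for f in facts if not f.sensitive]
    generic = [f.key for f in facts if f.sensitive]
    query = f"What is the best advice about {topic}"
    if public:
        query += " for someone whose " + " and whose ".join(public)
    if generic:
        # Ask about every case instead of revealing which case applies.
        query += ", for each possible " + " and ".join(generic)
    return query + "?"


def leaked_keys(text: str, facts: list[Fact]) -> list[str]:
    """Return keys of sensitive facts whose value appears verbatim in text."""
    lowered = text.lower()
    return [f.key for f in facts if f.sensitive and f.value.lower() in lowered]


def combine_locally(answers: dict[str, str], facts: list[Fact], key: str) -> Optional[str]:
    """Pick the relevant generic answer using the sensitive value, locally only."""
    for fact in facts:
        if fact.key == key:
            return answers.get(fact.value)
    return None
```

[In this sketch the third party only ever sees the generic question. The per-case answers come back keyed by case, and the one that applies to the user is selected on the local machine by `combine_locally`, which matches the "combine only in your local system" step in the description.]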

[00:54:41.061] Kent Bye: Yeah, yeah. Certainly, I think, your project is at the intersection of VR and AI. It's such a hot topic. I get asked a lot more these days about AI. And I'm so glad to have had a chance to talk about your project and unpack all these different aspects. One thing I just want to talk about is my own phenomenological experience of this piece. I felt like being in a virtual world and having a conversation, there was a nice ability to be able to just speak and have that translated. There's a little bit of a delay. It's not as natural as if it was a human, so there's ways that it does feel like a robot, which, by the way, I think there will be a need to somehow disclose that I'm talking to an AI chatbot at some point. What are the ways that you're indicating to the audience that this is not a human, but it's actually AI? Right now, it's pretty easy to determine, especially when you ask it a question and it says, as an AI trained model, I cannot do this. So it breaks the presence in some ways, the illusion that you're talking to an AI robot, when it's giving back OpenAI's standardized alignment, the reinforcement learning with human feedback guardrails put into that. There's some experiences that I've done where you feel like you're on a journey with a beginning, middle, and end, and you're going somewhere, and there's a little bit of a revealing of contextual information about the world that we're in, what it's about, what the different dynamics are. It's sort of like you're entering into a magic circle of another world that you're able to fully build up. And I felt like I was entering into this world, but it was mostly referencing other aspects of physical reality out in the world, where the types of conversations I would have felt more like I was talking to ChatGPT rather than talking to an alien robot on another planet and another world, another space, another time.
And so I'm thinking about how, eventually, once the models become more robust, you'd be able to do a lot more world building, or at least guide the audience member as to what lines of questioning they could go into. Because it's sort of like an open world of possibilities. You kind of have to imagine, where do I want to take it? And it's really up to the audience. The burden's put on the audience as to what kind of information their curiosity is going to want to have. But I feel like if someone has never had any interactions with ChatGPT, and this is their first interaction, then it could be pretty mind-blowing in the sense of like, wow, this is really super capable, it's able to do all these things. There's a bit of, like, I come away feeling like, OK, I had an immersive experience with ChatGPT, rather than having an immersive experience with an alien intelligence from another world, another place, another time. And so I feel like that's where we want to get to at some point, but also potentially train it with a certain body of knowledge so that it's able to tell me about the Neos VR community, or help guide me around, or at least be a point where people can start talking to the chatbot. And then the whole goal is to have their attention there so that other people join, and then they go off and have a social adventure, which it sounds like is what this started with. But yeah, I'd love to hear some of your thoughts on the experience of interacting with ChatGPT in VR.

[00:57:26.531] Christina Kinne: OK. Several points. Let's start with the last one. It is actually actively prompted to know about the Neos Metaverse, and currently it also knows that we are at Laval for the Revolution Experiences competition, and it will give you a lot of information. And those are also going to be great use cases for GPT, VR, or virtual world chatbots: to have them as a greeter who helps with the onboarding or helps with the instructions, or as a service chatbot. Regarding the environment, there's a lot of presence and immersion and place illusion research on what happens if you can't put an environment up totally realistically with all the details, which would have been too expensive in terms of having it built and also too expensive in terms of computer performance. So therefore I chose, and because I think quantum computers are beautiful, to have that fantastical environment of this quantum computer floating in space, which actually, because we oversized it, has, if you're in it, a nice bar feeling in the middle. Like if you're in the middle of it and put the counter around the middle stick, then you don't know that you're in a quantum computer, but it's a cool industrial bar. So those were the main reasons for the environment. Like, yeah, have something fantastical. And also I like to build something in VR that you can't have in real life. Why replicate real life? We just built the bar you'd want to go to, that you would feel comfortable in. Regarding the choice of a robot, that had two reasons. One was honest anthropomorphism.
So we wanted on all levels to be transparent that it is an AI, also in its looks. Then there was the reason of the uncanny valley effect. A human avatar would have much too easily triggered the uncanny valley effect, which interestingly enough also applies to the speech generation. There has been research which compared newly trained speech models to the robotic Windows David voice, and it found that users trusted the robotic voice much more, because even the well-trained voices still had some hiccups in breathing patterns or something. So it's better to also stop before the uncanny valley effect on the audio level.

[01:00:51.875] Guillermo Valle Perez: Yeah, I mean, I'm actually curious about the last paper you mentioned, because I've seen some speech models now, like the ones from ElevenLabs, which to me, okay, they're still, I don't know, not 100% fooling you as being human. But I mean, to me, they've crossed the uncanny valley. But I also think uncanny valleys are a bit subjective, like where it is exactly varies from person to person. Maybe I'm, like, different, but yeah. I am really interested in trying to make the experience more immersive in the sense of making the character feel more alive. I mean, it doesn't have to necessarily feel like it's a human, you know, it could be feeling like it's an alien or something, but somehow making it feel more alive. And that's one of the main goals of what I would like to see beyond what we've done in Quantum Bar. And I think there are two ingredients to get there. One is motion generation, which I've been working on. So I hope to see that happening. And the second one, which I think is more general, is latency, which you mentioned. And actually, there's this company called inworld.ai, which is working on AI-driven NPCs. It's one of the few companies, I think. And they've recently said, you know, they're trying to work on that. And I also would like to work on that and also kind of maybe make an open source or more research-oriented option to Inworld, which is like a paid service. And I think that's going to be very important, right? Latency is what makes something feel reactive and like it's actually there, rather than some abstract system or something. But it's actually quite technically difficult to have low latency. I think there's a lot of things we can do to improve Quantum Bar, like trying to use some local models for speech-to-text and text-to-speech, and also trying to use streaming generation rather than batch generation.
So rather than waiting until you have all the voice and then processing that, process the voice as it happens. All these things will be possible, although they require some technical work to be done. But yeah, I really look forward to see how that changes the feeling.
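
[Editor's note: the latency argument for streaming over batch processing can be made concrete with a toy timing model. This is a hedged sketch, not a measurement of The Quantum Bar: it assumes audio arrives in fixed-length chunks, that each chunk costs a fixed processing time, and that chunks are processed one at a time. The function names and all numbers are hypothetical.]

```python
def batch_latency_ms(n_chunks: int, chunk_ms: int, proc_ms: int) -> int:
    """Delay after the speaker stops when processing starts only at the end.

    chunk_ms is unused here because, in batch mode, all of the work happens
    after the last chunk has arrived; it is kept for a symmetric signature.
    """
    return n_chunks * proc_ms


def streaming_latency_ms(n_chunks: int, chunk_ms: int, proc_ms: int) -> int:
    """Delay after the speaker stops when each chunk is processed on arrival."""
    finish = 0
    for i in range(1, n_chunks + 1):
        arrival = i * chunk_ms
        # A chunk can start only once it has arrived and the previous one is done.
        finish = max(arrival, finish) + proc_ms
    return finish - n_chunks * chunk_ms


# Illustrative numbers only: a 5-second utterance in ten 500 ms chunks,
# each taking 200 ms to process.
batch = batch_latency_ms(10, 500, 200)
streaming = streaming_latency_ms(10, 500, 200)
```

[Under these assumptions the batch pipeline makes the listener wait for all ten chunks to be processed after they stop speaking, while the streaming pipeline only has the final chunk left to process. When processing is slower than real time the gap narrows, but in this model streaming never does worse than batch.]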

[01:02:54.782] Kent Bye: Are you using OpenAI's Whisper to be able to detect speech, or what are you doing for voice-to-text?

[01:03:01.435] Christina Kinne: It's Google speech-to-text because it comes for free, so that was fine for a student project, and when we were at the height of the development, Whisper wasn't out yet. But that's also one very anticipated step of implementation, to use Whisper. And also, what I learned at this conference in France now is that we also need to have language detection and a speech-to-text system which can switch through the languages, so that the bartender or the AI chatbot automatically talks back in people's native language.

[01:03:47.632] Kent Bye: Yeah, yeah, I really see that AI and VR are like sibling technologies that are helping each other co-evolve, and from like 2016 to 2018 I did well over 100 interviews with AI researchers at AI conferences like the International Joint Conference on AI, O'Reilly AI, and some other artistic applications of AI. But my problem back then from 2016 to 2018 was that there wasn't necessarily a compelling experiential component to the AI that was there. But I think now with the generative AI, with ChatGPT, there's much more of a fusion of AI that's coming into more of an experiential element. So I'm really happy that your project is here to start to see these very early phases of this intersection as we continue to go forward. And I'll see more and more projects, I'm sure, as all these new technologies have been launched. And yeah, I'd love to hear some of your thoughts on what you think the ultimate potential of virtuality and AI might be and what it might be able to enable.

[01:04:39.872] Christina Kinne: A personal motivation of mine was really this companion idea. I wanted to have somebody to be able to talk to 24-7, even if at 3 in the morning all my friends are asleep. That already works. Now, of course, at first it's super fascinating and your heart jumps if an AI chatbot suddenly makes sense instead of being like a Kafka poem, like the predecessors of GPT-3. But it also helped me psychologically to get a different viewing angle on challenges I had. And it's also a nice experience. I think this was our achievement, and also thanks to Guillefix for the great animations and to Marius for making the flow so good: actually having a real-time voice conversation with an avatar that, also thanks to Guillefix, looks you in the eye and reacts at least with the eyes to your motions is more emotional and is more impactful, and you also talk or lead the conversation differently if you talk as opposed to writing. Writing is such an analytical procedure. I think before I hit the keys or use handwriting, and talking is much more spontaneous, much more intimate. And so I think this is a nice and at the moment very fascinating way to interact with conversational AI. And of course the future will be beautiful, will be so exciting, because now you can have virtual worlds full of AI-driven NPCs, and you can have procedural games and suddenly talk to the NPCs. One other inspiration was in No Man's Sky. I really almost fell in love with one of the vendors, some nice ape-like furry character with wonderful blue eyes, and they had great animations, great looks. And still I was, yeah, very disappointed that all he could talk about was, do you want to have that gun or that gun?
Because it was a pre-scripted dialogue. And to have that idea, either as in games, to have the characters react to the player, or just to have a virtual world with, and there I come back to the previous point, different personality AIs, will make our horizons much wider and will make us less lonely. And often there are also maybe some problems or some topics you don't dare to talk about with your friends, let alone mere acquaintances, but to know, okay, this is a virtual world with AIs, and I can pick my favorite bartender character in the Quantum Bar, for example, and talk to them about this thing which has been bugging me, and nobody else will hear it, but I will get the same conversation I would have with a bartender in New York City, which I can't reach now because I'm sitting in my suburb in Munich. So I think the future is bright. As Peter Diamandis says, what an amazing time to be alive.

[01:08:47.953] Guillermo Valle Perez: Yeah, from my perspective, there's a few things that really excite me about the future of these two technologies. One that I think we may see in the near to medium term, I guess, is having digital twins of us that know so much about ourselves that they can really give us insights about ourselves, right? And I mean this like, you know, they not only learn about what you say or something, they also can get health biometrics or whatever, just integrate as much information about you as possible. And then they can inform you about how you work and how you can make the best decisions for you. And I think that that's really exciting. Another way that I think these two things will interact is, I think AI is basically acting on what it's doing. I mean, it's just increasing the intelligence of the world, which I think is super exciting. We're going to do better science and better everything. But there's this issue of we're creating these separate entities which are highly intelligent. And we, as humans, don't want to not participate in this. So I really believe in this sort of Kurzweilian idea of trying to merge with AI. And I think VR, I mean, it's kind of the next step in human-computer interaction. But we should start calling it human-AI interaction at this point, I think. And VR is sort of the next step in this, and I think the next step after that will be neurotechnology, like BCI. And that's why I'm really excited about both of these things, because it's like, we're going to increase the intelligence of the world and increase the kind of things we can do and discover new things. But with the right integration between humans and AI systems, it will actually be us doing it, right? We won't then be left behind or something, which I think would not be really ideal. So I consider VR and BCI as a kind of technology for communicating between disparate kinds of minds.
But the cool thing is that it will evolve into a technology to communicate between minds in general. So it will also help human communication. There's this idea that language will become obsolete once we have BCI because we will just be able to transmit thoughts directly and this kind of thing, right? But then we'll be able to communicate with AIs just as naturally, and AIs will communicate with each other, right? So I really like this idea of developing technologies which communicate across minds, which help communication across minds. I really see that future being really bright, and VR is like the current step in this direction. And I think we will see AI-driven VR interfaces which sort of look at little subtle physiological signals or whatever to sort of know what you're thinking, and adapt the UI to really allow you to do what you want in very, very few strokes, and really close the gap between what you are imagining in your head and that being actually realized with high fidelity around you. And then maybe do this socially, where several people are co-creating a scene and creating new ways of communication or something. I'm really excited to see these things, and I think they're really possible in the next few years or so. And then, also related to another sort of parallel vision that I'm really excited about, which sort of ties to my research background, I guess, is I've always loved how social VR has taught me about human collaboration, or I mean, human-AI collaboration, collaboration between different entities. And I think the kind of things you learn about that in social VR are really lacking in academia. And I see a future where science is done better by being done in more inclusive environments, both for scientists and the public. And it's done more playfully, which is also a feature of the VR community, which I really like. 
And yeah, our collaboration is also global by default, and there's no barriers, as much as possible, and so on. So I'm really excited about this playful collaboration component of social VR being applied to science, and just making the world better through that.

[01:12:53.915] Kent Bye: We're certainly on the cusp of some sort of inflection point between these two technologies that I think we're going to see a lot more of these integrations over time. So yeah, really happy to be able to see some early inklings of where that may go in the future. And I feel like there is a compelling experiential component of interacting with AI. And I think that as time goes on, I imagine we're going to see a lot more NPCs and a lot more creative conversational interfaces and generative AI. I think it's going to be a part that's going to help solve a lot of the issues of generating really complex and interesting 3D immersive worlds. But yeah, the level of storytelling and intelligence and how it's guided and directed, I think, is going to be a differentiating factor where the human creativity and human imagination still has a large role to help shape something that's going to be meaningful for people and not just have all the AIs do all the stuff for us. But I think there's still a real need for the human imagination and desire. Yeah.

[01:13:43.758] Christina Kinne: Spark. You still need somebody to say, I want a quantum computer in a purple space. This is something I don't believe I will experience in my lifetime, that AI will be really original and creative. But AI can so much democratize knowledge, research, art, creation, and the tools. Also, that's why I'm so grateful to OpenAI for GPT-3. Like, now without being a coder, I can create an NPC with GPT-3, with a personality, with certain information about Laval, and I don't need to study informatics. Or other people who might be great visual art lovers who don't have a steady hand, they can draw now. And this is such an amazing opening of the world and giving more power to more people, or at least to everybody who has access to a computer. So I think it's an amazing development, and I'm also happy, and also there, thank you OpenAI, that now the AI technology is being released to artists, to the public, because that would be on the other hand my main concern or my main fear, of getting an evil ASI if it was only military or economics funded.

[01:15:32.595] Kent Bye: Yeah, I think I'm not as worried about the creative aspects, but it's more the larger economic, cultural, and ethical issues around it that I have more of my cautions around. But I am excited to see where it goes in the hands of artists like yourself to see what's possible. How to put guardrails on all this in the future, I think, the alignment issue is still relatively unsolved and there are a lot of people working on that. But yeah, I just wanted to offer, if there's anything else that's left unsaid that you'd like to say to the broader immersive community.

[01:15:58.313] Christina Kinne: Look on quantumbar.ai, where we're going to release when we have a public opening. So we want to open regularly every second Friday or Saturday. We still need to decide that as a team. And we are very, very much looking forward to welcoming you to the Quantum Bar, serving engaging AI conversations in social VR and conversations with their creators. And thank you to the whole VR and AI community for making such projects possible.

[01:16:37.708] Guillermo Valle Perez: Yeah, I will add to that that also if you want you can check metagen.ai because there we're trying to build this sort of inclusive, like metaverse agnostic, very open community for people interested in these topics. And we run events in different social VR platforms. And yeah, so we welcome you as long as you are interested. And yeah, look forward to seeing more people engage in this.

[01:17:02.837] Kent Bye: Congratulations on the launch of the Quantum Bar AI here at Laval Virtual 2023. And thanks for taking the time to help break it all down. So thank you.

[01:17:09.981] Christina Kinne: Thank you so much, Kent. It was an honor and a pleasure to have this interview with you.

[01:17:16.404] Guillermo Valle Perez: Likewise. Thank you so much.

[01:17:18.435] Kent Bye: So that was Christina Kinne. She's also known as XaosPrincess. She's the director of The Quantum Bar, serving AI conversations within social VR, as well as Guillermo Valle Perez, also known as Guillefix, who works at the intersection between AI and VR and integrating the two. Also the co-founder of MetaGen and coming from the physics world in order to study why deep learning works. So I have a number of different takeaways about this interview. First of all, I think this is a trend that we're going to start to see a lot more of, looking at these different NPCs and chatbots to have them be embodied within these virtual worlds and start to have different conversations with them. In this conversation, Guillermo mentioned Inworld.ai, which I have a conversation with later. And there's a whole platform to have natural language processing to be able to speak, and it's doing real-time processing. So it's a little bit lower latency than what I've seen at some of the different demos at Laval Virtual in France. So they're still back on GPT 3.0 because they're able to have a lot more control over the personality and to tune it a little bit more in terms of giving specific prompts. And once it gets up to the 3.5 and 4.0, then they have a little bit less control over creating like a character. And again, back to Inworld.ai, there's a lot more ability to put guardrails into what the knowledge base is and steer the conversation back. So yeah, generally, you'll still run into some of those, you know, "as an AI model, I can't do such and such," which kind of breaks the immersion. But they're also trying to visually indicate that this is an AI agent, not trying to fool anybody that this is an actual person. And so what are the different experiential design aspects that you can start to build into the world to signal to people that you're talking to one of these AI agents rather than talking to a human? 
Yeah, lots of really deep dive wonky stuff into the theory of deep learning and what's happening there. Yeah, I think as we start to move forward, there's going to be not only a lot of questions around how this is actually working, but also the limitations. And I think that's something that throughout the course of this series, generally, I'm talking to a lot of artists and creatives who are very excited to find the utility of some of these different systems. And I guess the thing that I keep coming back to is the ways that these systems are either incomplete or have different ethical issues with data provenance or other ethical issues, or maybe there's harm that's being created by some of these models. And so I think that's something that I try to keep in mind whenever I'm exploring this topic: not only the potential of what's possible, and all these artists are kind of pushing the edge for what's possible, and we could start to see some of the experiential elements of that. But there's always going to be these other ethical things that I think are looming in the background, not only because it's potentially displacing jobs, but it's also potentially bringing harm to certain populations. So I did a whole talk that I'm gonna dive into in a couple episodes that gives me an opportunity to lay out some of the different ethical arguments a little bit more, and there's also a panel discussion that I was on at Augmented World Expo where I was able to engage in a little bit of a dialectic with this tension between the need to find the utility and the artist to be able to push forward what's possible with the technologies, but also trying to keep in mind how you be in right relationship to not only the data, but also the world around us. Because ultimately AI is supposed to be a tool to help us, but what are the ways that the AI can get in the hands of a corporation or a company or a person and these larger cultural, economic, and political dimensions of the technology maybe not be 
in right relationship to everybody? And so I think that's where we start to get into a little bit of trouble. I'm not saying that this project's doing that. I'm just saying generally when I start to explore these conversations, it's always in the back of my mind, and this was a good opportunity to flesh out some of those deeper perspectives on this. So that's a theme that I'll continue throughout, but as I talk to these different artists, they're going to be biased towards trying to find utility in a lot of these different systems. So, that's all I have for today, and I just wanted to thank you for listening to the Voices of VR podcast. And if you enjoyed the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, and I do rely upon donations from people like yourself in order to continue to bring you this coverage. So you can become a member and donate today at patreon.com slash voicesofvr. Thanks for listening.