I was invited to give a keynote at the Capturing Reality in Motion session as a part of the ONX + DocLab MoCap Stage. I gave a 20-minute talk reflecting on how some of the different projects on the film festival circuit have been using motion capture technology in the display, production, or live performance of their piece. [Video of this talk is coming soon]. There was also a 5-minute introduction to this session by Sandra Rodriguez (Creative Director & MIT Open DocLab Fellow), but unfortunately it was not recorded.

The second half of this is a panel discussion moderated by Rodriguez and featuring Avinash Changa (We Make VR), Matthew Niederhauser (Sensorium & ONX Studio), and myself.

Music: Fatality

Rough Transcript

[00:00:05.452] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR Podcast. It's a podcast about the structures and forms of immersive storytelling and the future of spatial computing. You can support the podcast at patreon.com slash voicesofvr. So continuing on my coverage from IDFA DocLab in Amsterdam, today's episode is actually a combination of a brief keynote talk that I gave about Capturing Reality in Motion, as well as a brief panel discussion that happened afterwards. And so there is this ONX DocLab motion capture stage that was happening at the IDFA DocLab this year that had a number of different projects featuring these OptiTrack technologies, to see like how can you start to use this high fidelity tracking in the future of storytelling. So they had the whole OptiTrack system and they had a number of different projects and talks throughout the course of the week. And so this was an opportunity to do a talk reflecting on some of the deeper themes. And so I was invited to give a keynote talk at the beginning of this, and so I was trying to at least set a broader context for, you know, a retrospective look at the past six years, since 2016 is when I started covering Sundance New Frontier. I've been doing the podcast since 2014, but six years of covering the immersive storytelling scene. And throughout the course of that, there's been a number of different projects that have either explicitly used the motion capture technology in the process of their story or on the back end of being able to produce it. So either kind of live, where you're embedded in using the technology, or there's other actors that are using that technology, or they've used that high-fidelity motion capture technology. But also there's this whole other range of like capturing our faces and our emotions and having different social dynamics. 
And so I wanted to try to at least set a broader context for how I start to think about the innovation cycles over time. And then eventually those things evolve into different commercial contexts, getting out into the public, but also what are the different ways that we can start to think about, as we have these different motion capture techniques, how do they start to fit into an experiential design process? And so I have a little bit of an elaboration of the elemental framework for how I start to think about this as an issue. And there'll also be, hopefully at some point here soon, a link to a YouTube talk because I have different slides that you can see to get a little bit more context as I'm giving this talk, referencing different visual components of the slides. And the second half of this conversation was actually a panel discussion that was moderated by Sandra Rodriguez. There was actually a five minute introduction that Sandra did, but unfortunately the people who were recording it didn't start recording until I was giving my talk. So I'm going to start with my talk and then we're going to be going into this panel discussion that I had with Avinash Changa from We Make VR as well as Matthew Niederhauser from Sensorium, who is helping to run this whole ONX DocLab MoCap stage. And originally they were trying to have another representative from the ONX community there, but they were not able to make it. So Matthew was able to step up for that. So that's what we're covering on today's episode of the Voices of VR podcast. So this talk and conversation with myself, Sandra, Avinash, and Matthew happened on Monday, November 14th, 2022 at the IDFA DocLab in Amsterdam, Netherlands. So with that, let's go ahead and dive right in. My name is Kent Bye, I do the Voices of VR podcast, and today I'm going to be talking about Capturing Reality in Motion, Qualities of Presence, and MoCap. 
I've now recorded around 2,000 oral history interviews over the last eight plus years and published around 1,150 episodes as of my latest, and so today I'm going to be talking about three main points. One is to talk about Simon Wardley's tech evolution model, which I think is really helpful for understanding the evolution of technology and how we can look at things like motion capture technology that's here, but that eventually is going to move into things like computer vision and artificial intelligence, and there's a diffusion of that technology as it unfolds. We'll do a quick primer on the qualities of presence and then apply that elemental theory of presence into taking a little bit of a retrospective look at some of the immersive stories that I've seen on the festival circuit since 2016, reflecting on things that I've seen in practice over the last six years. Let's first start with Wardley's tech evolution model. So in this model, Wardley saw that technology is evolving over time and that with that evolution, it goes through four distinct phases. First, there's the genesis of an idea. It's like the academic idea that's being shown at conferences like SIGGRAPH or IEEE VR. And eventually, you have a custom bespoke implementation of that, where that may be a location-based experience, or that may be something that is handcrafted for an enterprise. A lot of the stuff that we're doing at these festivals is very bespoke and handcrafted. And eventually, we get into the mass consumer product, and then eventually, it's a mass ubiquity. So you can kind of think about cell phone evolution and smartphone evolution as it goes through those different phases from the genesis, the custom built, the product, and the commodity. And so, you kind of think about that as those different phases of like, what is possible right now in terms of what's being proven at conferences like SIGGRAPH, and then that's put together in conferences like this. 
At DocLab, you see the custom bespoke enterprise, and eventually, the stuff that we see here at the festival may be in a consumer product within the next two, three, five years, and then eventually maybe 10, 15 years, it's like everybody is kind of doing it in a more mass ubiquitous way. So let's take this model and apply it to VR as an example. The initial prototype of the Sword of Damocles was 1968 with Ivan Sutherland, and then eventually the custom bespoke phase started in the 80s with VPL Research and Silicon Graphics, from 1985 to '92. And then you have the consumer VR phase, where from 2013 to now we have this explosion of different VR headsets. And then you can look to science fiction, like The Peripheral, to see the mass ubiquity sometime in the future where this is maybe an AR VR headset that people are wearing. That's more of a speculation as to whether or not it'll get to that phase of mass ubiquity. Right now we're still in the fledgling early phases of the consumer phase, which can arguably still be in that custom bespoke phase where you have to do a lot of hand crafting of that. So yeah, again, we can kind of look at these different evolutionary phases and look at what's happening at these festival circuits and see even how that's diffused over time. So let's just take a look at motion capture technology. As I was, like, just trying to look at the history, I found Harrison's Animac for the first motion capture was actually back in like 1962, which is amazing to think about where computing was at that point. And some of the very early examples of using the body to animate motion with computers. And then you have performance capture. In the film industry, there's a distinction between motion capture, which is the body capture, versus a performance capture. 
And for me, I think of the performance capture as a bit of the emotional expressivity of a capture, maybe the face and more of what's happening with the emotions that are expressed in the face versus how your body's moving. But I think in XR, it's going to be seamlessly blended together. So then as I go to the different festivals, at GDC 2015, there was Faceshift that was using like a depth camera to do that kind of facial capture. And then later that same year, published in an academic context, there was work installing a camera onto a VR headset. And that was the very early phases of trying to use facial capture and reconstructing aspects of the face with a partially occluded face, as people are wearing a VR headset. And then we have the Vive Facial Tracker that then moves into the more consumer or arguably enterprise phase of having a peripheral that you can put onto a headset that can start to capture the face. And then now within 2022, we have the Meta Quest Pro, which has cameras that are actually doing the direct facial capture. So you can just kind of see how, like over time, stuff that starts in one phase eventually gets productized into just being built into the headset. So already we have different aspects of that face tracking. So I wanted to give that as a baseline context as we start to think about these things. And we can see as I talk about these different things that are happening in the context of these film festival circuits, imagining how that's going to get transferred into a consumer product and scaled up from like we have these high fidelity motion capture systems here. How do you do that with a camera based artificial intelligence to do kind of the same thing? So I'm going to do a quick primer of presence. This is a very short, quick excerpt of a much longer, hour-long presentation that I give on this more elaborated theory of experiential design and immersive storytelling. I have a keynote that I did at StoryCon. 
If you go onto YouTube and search for StoryCon Keynote, Primer of Immersive Storytelling and Experiential Design, that'll be the full version of this talk. I'm going to give just a quick five-minute digest of just the specific part of the qualities of presence, because I think it's helpful as a framing technique to kind of look at the different aspects of how people are using motion capture in the context of XR. There's this quote from Robert McKee that says, true character is revealed in the choices a human being makes under pressure. The greater the pressure, the deeper the revelation, the truer the choice to the character's essential nature. I think this quote from Robert McKee, for me at least, encompasses so many different aspects of how I kind of break this out into a fully fledged experiential design framework. Because you have being placed within a certain context and then being put under pressure. And I think in VR you're able to transport people into a spatial reality where you're able to change their context into this virtual environment that people feel present in. You're making choices and you're taking action, and eventually your essential character is being revealed, and it's all in the context of these unfolding processes that are happening over time. And this essential character that's being revealed, Robert McKee is mostly talking about screenplay in film, where you're watching other characters, where their character's being revealed, but in XR, you are sometimes the protagonist, so what does it mean for you to be embedded into an experience and for your essential character to be revealed based upon the different interactions? So there's the context, quality, character, and story. I'm just gonna be focusing on the quality. There's gonna be certain aspects of character and the context. Context is more of like a spatial capture where you're setting the context. And motion capture, I think, is more about the qualities of presence. 
There is gonna be certain aspects of character expression, but I'm not gonna be getting into that today, because I think of like Sleep No More, if anybody's seen that, how you can start to use dance performance as a way of expressing different characters from a Shakespeare story and how they're interacting, but I haven't seen as much of that percolate from the immersive theater realm into XR, so in some ways you can think of the diffusion model as immersive theater being at the forefront and the frontiers of using embodied dance for expressions of character, but that's still in the early phases of how that's being translated into XR. I'm just going to be focusing on the qualities of presence. So there's these four main qualities of presence. There's active presence, mental and social presence, emotional presence, and embodied and environmental presence. And so these are roughly based on the different elements. The more active and mental social presence are the more yang expressions of energy going outward, and the more yin receptive aspects of the embodied environmental presence and emotional presence are the things that you are receiving within the XR technologies in new ways. And so if we kind of look at how I think about existing media technologies, you have video games, which is all about taking actions and expressing your will into an experience. For the mental and social presence, you have literature, where you're reading text, you're seeing things mediated through language, and you're communicating with other people, so you have a social dimension to that. So you have the internet and the World Wide Web and social media, all these expressions of speaking on podcasts and listening to language. So that's another modality for how you're able to communicate narrative, especially when you have people speaking and narration. 
But then you have film and the cinematic medium where you have the building and releasing of tension and how you're able to create these contrasts. But at the end of the day, it's really trying to modulate your emotions as you tell stories. And you're passive in the sense that your agency isn't changing the outcome of the story that you're watching. It's already fixed and locked into the film and you're receiving that modulation of your emotions through the lighting and the mood and the music. And the last part is the environmental and embodied presence. This is something that is new to XR in the sense that you're putting your body into the experience, you're including all the different sensory experiences, and you're also having different spatial disciplines like architecture or theater or dance that are being fused in together. So for me, as I start to think about it, each of these different communication media are all being fused together into active presence, mental and social presence, embodied presence, and emotional presence. And this is a broad elaboration. And as we think about, say, motion capture technology, it's mostly the embodied presence. It's your sense of your self-presence, your body, your embodiment, your sensory experience, your biometric and physiological data, brain-computer interfaces, neural inputs, haptics, immersive sounds, spatial presence, environmental immersion, architecture. So that's what the predominance of motion capture is focusing on. You have the emotional presence, which I think is what is typically called the performance capture. So you have different aspects of emotional immersion, feelings, mood, vibe, color, lighting, having different consonance and dissonance cycles, music, story, the time, the sense of flow, narrative presence, empathy, and compassion. 
And then mental and social presence, you can kind of think about it as, as you have motion capture technology, in some of the experiences, you get the sense of being present with other people. So as you have avatar representations, then you get a sense of actually being there with other people. So you have everything from thoughts, language, choices, expectations, novelty, mental models, mental friction, plausibility, coherence, attention, suspension of disbelief, puzzles, the mental friction, rules, social presence, and virtual beings. And then the last part is active presence. And I think this is one that's a little bit more emergent in terms of agency, interaction, dynamics, action, locomotion, exploration, spontaneity, creativity, will, engagement, gameplay, intention, and live performance. So that's kind of a rough complex of how I start to break it down. For every experience, you have some balance of all of these different components, but they're kind of like ingredients that you're modulating, and sometimes you have a little bit more of a narrative beat, sometimes it's more about the agency, sometimes it's more about setting a spatial context with your embodiment, or you're having social interactions with other people. And the way that I digest it is taking action and making choices, sensory experience and emotional immersion. And there's Jung, his interpretation of the different elements. He has intuition, thinking, feeling, and sensing, which I think is another good way of breaking all these down. So that's an introduction to the qualities of presence. Now let's just kind of walk through some of the different experiences and unpack it just a little bit. So embodied and environmental presence. This is the one that I say is by far the most predominant when I start to think about the impact of motion capture. And this is back in 2016, there was Real Virtuality: Immersive Explorers, where you have these trackers all over your body. 
This is the first time that I think I actually experienced what Mel Slater calls the virtual body ownership illusion. When you have different trackers on your body and you have a one-to-one correlation between you moving your body and you seeing an avatar representation, you start to actually identify with the virtual body that you're in. And so you have this deep sense of being embedded into this sense of embodied presence within an experience. The Leviathan Project was also at Sundance in 2016, also using motion capture technology. And this was the ability to move through a space but have these objects be tracked in this space. So you have this sense of physical haptic, what they call passive haptic feedback, where you have these objects that are giving you that haptic feedback. But you have a virtual representation of that object that's also in the virtual space that gives you this deep sense of being embedded into the place. And so that's yet another place of having the sense of embodied presence. And then in 2022, there's Rencontre(s), where all of that that I saw in Leviathan in 2016 was all done in the Oculus Quest, but doing a mixed reality capture of a space that had a one-to-one correlation of these different objects, and you're walking through those spaces and being able to actually like interact, well not really interact with the objects, but at least sense that those objects are there, and you have that sense of, you know, you're actually embedded into that place. OptiTrack at GDC 2016 had all these different real-time tracking and basketballs to interact with. You have the Xsens inertial suit that first came out in 2007, and then now we have Xsens, Perception Neuron, Rokoko, and PrioVR. 
These are all ways that use the inertial sensors, which is a little bit cheaper, but the challenge sometimes with these sensors is that they really only have like 3DOF, and so you can do some things to get a sense of a spatialized context relative to each other, but when you're actually moving physically through a space, then it cannot have a full 6DOF expression of that. So there's different trade-offs with these different methods of motion capture. Like Future Rites, this is Sandra's project that she was talking about. Because you're using the inertial sensors, they don't always have the 6DOF tracking, which then means that they're limited in how they can have a dancer move through a spatial context because they don't have the full 6DOF tracking. So it's cheap and affordable, but there's also other limitations. And so there ends up being different trade-offs that you have to negotiate when it comes to the amount of full embodied presence that you can have in a 6DOF context. Razer Hydra came out in 2011, and there were some people that I saw even before the Oculus Rift had come out hacking it together into a VR context and so starting to have different motion tracked controllers. 
Then you have HTC with the Vive and Lighthouse tracking that was first shown at Mobile World Congress 2015 and then just a few days later at GDC, which was the big buzz of actually having external laser tracking to get submillimeter accuracy for some of this tracking. And the Vive tracker was then announced on January 4th, 2017, and that gave the ability to put these trackers at different points on your body to actually have more motion capture aspects, and in August of 2017 is when we first started to see this applied to full body tracking within VRChat. And still to this day, it's a whole community of people that have incredible dance scenes and rave culture for people to be able to have full body avatar representations within VRChat with these Vive trackers that have different iterations of that now. And then there's actually this tweet that came out, this was about a year ago, with all these different solutions. We have a lot of different manifestations of lighthouse tracking and inertial tracking and computer vision tracking. All of these different types of solutions. If you wanted to have full-body tracking within VRChat, there's all of these other third-party solutions now that are available that you can have, and there'll probably continue to be even more. You have to have some sort of external sensor to really do full tracking. Oculus has been very adamant about not having any outside trackers, which gives them the limitations of a lot of that. And so because of that, they have this whole debate around what's it mean to have legs in the keynote. And there is a whole thing around how Meta actually was deceptive in the way that they were saying that they were going to have the ability to have legs in VR, but they actually used motion capture for this. And then it came out like, hey, did you actually use this sort of internal tracking? 
But it turned out that no, they're actually using motion capture for this and so it was all a lie. But they do have research to do pose estimation AI for legs, but the caveat is that they're just looking at these upper body movements and then extrapolating what's happening to your lower body. So they're not doing novel movements of being able to kick your legs. So all the stuff that Mark Zuckerberg showed doesn't actually reflect the AI research up to the point of where it's at. Maybe we'll get there eventually with sort of knee strikes and perhaps like Supernatural, but that's still going to be very early phases. I'm sure they'll get there eventually, they're able to track the face, but I think it'll have hand tracking or other ways of getting a full sense of those legs underneath. Emotional presence, you have all these different facial tracking technologies that have emotional expressivity. You have Scarecrow, you know, I mentioned that with the Meta Quest Pro. So having an iPhone tracker to be able to track your face in that context of performance. You have Draw Me Close, which I think is a piece that was one of the first that I saw with the Vive tracker, and this is more about creating a character relationship between a mother and either son or daughter. You end up playing either the son or the daughter. You end up hugging in this situation. So there's a bit of an embodied presence there, but also this kind of emotional feeling of recreating this archetypal relationship of the parent and child relationship. So mental and social presence, you have different aspects of like VR_I at Sundance. You have multiple people in the same social context all dancing around in a scene and seeing other people that are with you having a virtual representation and having these different dance experiments. 
Metamorphic at Sundance was using the Vive trackers to have this social dynamic in the context of a performance, and, you know, there was a third person that came in at some point that was a surprise sort of reveal of that. But they're starting to use the Vive tracking technology, pushing the limits of embodiment in the social context of the piece. Ascenders at Venice, again, this is a social dynamic, but it was actually a puzzle game as well. So again, you're into solving puzzles, but you have a full embodied presence and you see other people in the context of the experience. Dream is an experience that was starting to use motion capture technology, and they're using abstractions of this embodiment in the movement to be able to puppeteer, to translate the human body into different avatar representations that were being presented in this piece. So there's different gestures that they were able to do to be able to control how the avatar representation was being represented. So there's sort of an abstraction of how you take the movement of a body and translate it into a non-human avatar representation. Then there's a whole idea of Wizard of Oz, which I think was first shown in 2005 in TeachLivE. This was one person who is embodying multiple characters, and this was a training application for teachers. And so there's one actor, an immersive theater actor, who's kind of puppeteering five other characters. And the piece that ONX was showing here actually kind of uses that Wizard of Oz puppeteering. You can have one actor, but then you can have a prerecorded aspect and then have them kind of jump in and puppeteer other aspects as well. So this whole idea of Wizard of Oz puppeteering. And the final thing is the active presence. So what are the ways that you can start to take the movement of your body and either take agency or have this feeling of having something that's emerging live? 
So what is the liveness of a live performance when you're able to be virtually mediated into a virtual environment, but there's people who are live actors? If it's a live performance, but there's no way for you to engage and interact, then it just feels like it's kind of like a pre-recorded thing. So what's the purpose of doing something live if it just kind of feels like it's pre-canned? So what is the interrogation process for people to really test the liveness of the live? That's a question that comes up a lot for me when I see these pieces. Dazzle was a piece at Venice where you're able to actually engage and dance live with some of these other actors. So there was a real moment to be able to do that, but some people didn't get a chance to do that liveness. They had different tiers where some people were with actors and dancers and some people were watching from a virtual... And then you get back to this, like, okay, they're doing it live, but how do we know that they're really doing it live? There's no real way to kind of interrogate that. And the last couple of points that I'll make here is that there's Wolves in the Walls, which was a way of taking this motion capture technology and being able to create avatar representations. So there's Third Rail Projects, an immersive theater company that did Then She Fell in New York City. Taking the experience that immersive theater actors had with engaging in an embodied fashion with other people, they were able to distill a lot of that knowledge into the character of Lucy within a game, so that when you're acting with Lucy, it's actually distilling down a lot of those embodied interactions to make things fluid in ways that you may not even necessarily consciously know, but they're able to have more of a dynamic, interactive aspect because they had all this sort of motion capture on the back end. 
And then hand tracking, you know, obviously this goes back to like Leap Motion and other aspects, but doing AI tracking of your hands, and that allows you to express your agency in the context of that piece. And then eventually the next frontier is brain computer interfaces where you have things like EMG on your wrist, and then it'll be able to isolate down to individual motor neurons, which means that you can just think about something and you'll be able to express movement in the context of a virtual environment. So finding new ways of having our motion be embedded into ways that we're engaging with different scenes. And so non-invasive interfaces like EMG and CTRL-labs, which may come eventually, are going to start to explore some of that. So again, we're looking at active presence, mental and social presence, embodied and environmental presence, and emotional presence. And that's just, for me, a handy way of breaking down all these different aspects of motion capture. And I talked about the tech evolution model, these qualities of presence, and reflecting on some of these different pieces from the last six years. And with that, I think we'll go on to the next phase.

[00:24:47.362] Sandra Rodriguez: That was amazing. Oh, sorry. That was amazing. I'll invite you, Kent, to join us on the couch, but I'd like to invite two other guests, Avinash and Matthew, just to get us talking. You see that there's a lot of opportunities, there's some limitations, there's prototyping a lot involved, and social VR is definitely something that enables us to explore some of these tools differently, or at least get the audience members also interested in what the possibilities are, so I'd like to just have you present very briefly who you are and what you do and why or how does your work relate to motion capture.

[00:25:27.279] Avinash Changa: So hi everyone, my name is Avinash from We Make VR. So I think that in the context of this, VR as an industry has definitely changed our definition of what motion capture is. Or basically VR tech has changed the way that we as makers think about what mocap can represent. Because in film and VFX, we've used motion capture to capture performances and drive these digital characters. But with VR, we kind of do the same, but the barrier of entry is a lot lower. You can just use a basic VR headset and already start driving a character. Now, some of the examples you saw, everyone's wearing these big suits and you see these really high quality imagery. But what we kind of saw in VR happening was that people were assuming like, oh, it needs to look super high definition and high res to be a believable character in VR. But what we've, and Kent mentioned that, he touched on mental, social, but also emotional presence. So the assumption was for that emotional presence, you need to have that high quality fidelity. But what we've learned in VR is even if a character's quite basic and low res, because we can now drive the motions of a character, it's not just that you hear someone's voice, if that's someone that you know, and you see that in a virtual space, you already connect to that person because, well, and Kent also mentioned Mel Slater, his work has shown that even if an avatar is very lo-fi, the moment you can see yourself in a mirror or use the Quest with hand tracking, that suddenly becomes a magical moment and you connect to that character. So I would say that with all these advancements, what Kent also mentioned in terms of Wizard of Oz, it allowed us to do a project called The Meta Movie where we do live performances. We get people, actors, live in these virtual worlds where you would normally use a big mocap stage like the OptiTrack we have here, but Matt's going to talk about that probably. 
But now we also did exactly what Kent mentioned in The Wiz, where we have one actor who during the course of the performance can quickly jump into multiple characters. So that completely changes the way we deal with creating virtual stories and live experiences. And then all wrapped up, what that brought us to as a studio is that it's now becoming quite easy to drive an avatar, but at the same time, companies like Meta are creating Codec Avatars, which means that your virtual self is going to look very, very realistic. And with deepfake technologies that we've seen, someone's voice can sound like someone else. An actor can drive an avatar like you would move. Now that we see non-technical people jumping into the VR space and using these social VR environments, a lot of non-technical people already have a hard time separating fact from fiction when it comes to social media. Now once you add that layer of being in an immersive space with technical imperfections, how would the average user know that the other person is not actually you? And that's a responsibility I see that we as makers have, as a sector have. Now we need to start to think about the ethical implications. Now that mocap technology through VR is becoming so accessible, and at the same time the visual fidelity is becoming so high-end within the next year or two, we need to take that responsibility as a sector and start thinking about what we do with this. Where do we take this technology from here? That's the discussion that we need to have. And now I'll stop and hand it over to Matt.

[00:29:00.497] Sandra Rodriguez: That's great because you've just mentioned also fidelity and high fidelity and making sure that it looks so much like the real. And I think what documentarians bring to the table is that we explore what reality means. So perhaps it's not about looking so close to reality, maybe exploring different layers of that reality through avatars that really don't look like yourself, for instance. So can you tell us a little bit what ONX is, even though you've had a couple of workshops and maybe don't want to repeat yourself too much, but what got you interested in wanting to partner with IDFA? What was it that you just thought, you know what, there's something in this documentary and emergent media crowd that just fits perfectly with what we do at ONX?

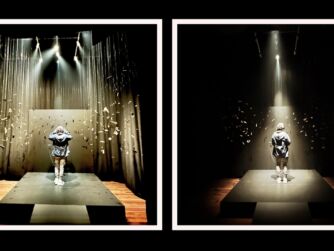

[00:29:40.607] Matthew Niederhauser: Well, I'm here wearing a few different hats, including the physical one. Is it here? Is it real? I don't know. But I'm also a co-founder of Sensorium, along with John Fitzgerald. And we first came to IDFA in 2018 with a project called Zikr, which was actually about how to translate presence of Sufi ritual practitioners in Tunisia into virtual reality. How do you tell a documentary about an experiential, real-life presence? And we were using camera-based volumetric technologies for representation to drive better presence. And even though there wasn't super high fidelity, one of the biggest things to transcend that was voice. And I think voice is one of the biggest ways to get over the fact that I might just be a pair of talking lips. We also, you know, we try to work with this technology all the time, and especially explore embodiment. And you know you've made it when you're in a Kent slide. So we also did Metamorphic, which was that project shown earlier, in which we were sort of having people move in and out of embodiment within these, like, beautifully painted Quill environments and avatars done by Wesley Allsbrook. But ONX in particular, John Fitzgerald and I are technical directors and artists in residence there. And so we made a decision early on to get Onassis to provide an OptiTrack stage in the studio for a really amazing, diverse group of artists, trying to activate it and let them play with it. And that has been a really large source of creative genesis out of ONX over the past year. And we were super lucky to have Caspar at a showcase we did this summer. And one of the artists, Matt Romein, who has one of the crazier pieces here called Bag of Worms, offhandedly was like, hey, how about we take the stage to IDFA? I've repeated this story so many times now. And I was like, that's potentially a horrible idea. 
It's a very complicated piece of equipment, but it quickly became clear that we had so many people trying to explore how to use this technology for new storytelling ways, especially with a live audience, which is an entire other layer of how to deal with representation and to engage an audience. So, yeah, I mean, ever since we first came here to IDFA in 2018, we always knew that this was a forum for experimentation. And that's why we felt so motivated to bring out this group of artists who are using, you know, the technology in such new and incredible ways.

[00:32:21.729] Sandra Rodriguez: It's setting the stage also to talk about maybe some of the constraints, because we're seeing some of the projects. Kent, you had an amazing overview of how it was used and how different tools were used to capture body motion. But there are inevitably limitations to accessibility. And that's one of the first learnings. I heard a comment in these last days that I thought, huh, interesting. A couple of years ago, I love to be in VR, in social VR in particular, in experiences where you know the people you walked in with are here with you, and I try on purpose to identify them and go and approach another avatar in VR and see them react. And I'm pretty sure I can usually find the people I think are there, just because you recognize, even through avatars that don't look like the person you're thinking about, their way of being in a space. And it's not necessarily the full body motion, but if they approach things easily, their energy, their slowness, you do have a sense that you recognize other anonymous people in VR. And I simply think on one level, this layer of reality of our knowledge as humans of acknowledging other people's presence is amazing and there are so many tools to do that. But then if you are a creator today and you're thinking, I want to explore these tools, what would you say is the biggest challenge in accessing and using these new affordances for a creator today?

[00:33:45.989] Avinash Changa: Well, I would argue that it's actually getting easier and easier because you're absolutely right. Even if your avatar would be a piece of tree bark and it's someone that you know, you recognize that person. Even if it moves in a weird way, you know who that is. But I would say even nowadays with a simple Quest 2 headset and two controllers and some simple software like Glycon3D, that'll cost you like $70. That is already enough to get you started in basic motion capture, either to drive an offline character or to use it for a real-time social VR experience. I mean, once you really want to explore more, sure, you can go all the way up to an OptiTrack. But the next step up, like a Rokoko suit, you can get that for not that much money. And that is very accessible to even an indie maker. It's more about spending the time on your concept. Do we really need to have full body tracking for this setup? Or can we get away with something else? Is eye tracking or facial tracking maybe enough? So, yeah, I would say it's becoming easier and easier. Unless you get into the realm of what ONX does.

[00:34:54.663] Matthew Niederhauser: Well, I mean, you're going to be able to see what we do with it at 3 p.m. when we do the showcase again. But I would say it's becoming ubiquitous. I mean, the driver of that is mobile devices, hands down. And there's a lot of technology that we're exploring. And even in ONX, some of our members are just using pure, you know, machine learning based video analysis to create really good motion capture, let alone what we already have in our pockets for something like Snapchat. And, you know, there's a massive amount of data being eaten up by technology companies right now. And we're especially going to see some new ways that's going to be activated, I feel like, with Apple next year, but even your face unlocking program has provided Apple with tons and tons of data, which they use to create models of how people are tracked. I mean, I would say, though, that in terms of representation, there are some people in the ONX community, especially LaJuné McMillian. They have been working on a project about creating an online database of motion capture data of Black performers and Black character based people. Once you start getting the algorithms, it's really crazy to start looking at representation. So, you know, we want more ubiquitous access. OptiTrack is amazing in terms of what it can be used for, you know, the exactitude and being able to turn somebody on in a performance or in a metaverse or sort of CGI environment immediately, but the changes are coming so rapidly in terms of the ability to track oneself and then create a digital representation of oneself with something that's going to be in your pocket.

[00:36:42.259] Kent Bye: Yeah, just two points that I want to make. One is around accessibility, which is one of the hot topics in terms of an open problem that is yet to be fully solved. And I know that there is a group called XR Access that has a GitHub that starts to look at all this stuff. And one of the co-founders of that, Dylan, wrote a paper. I was a part of the IEEE Global Initiative on the Ethics of Extended Reality, which was an effort to look at some of these ethical issues in the XR industry. And there were eight white papers, and one of them was on accessibility, as well as diversity and inclusion and equity. So it's a broader issue, and one of the points that Dylan made is that it's likely going to have to come to the government passing a law to mandate that that needs to happen. And there was actually kind of ongoing litigation over Viveport not having captions, that they were delivering this as a service but not fully compliant with the ADA. So all of these immersive technologies are going to eventually have to adopt all these different things, but that's still at the frontiers of development, I'd say, and needs a lot of attention. But the other point just that I want to make is in terms of the trends that I see, and why I think the Wardley model is so interesting, because it really tracks what's happening at some phase, and then what are the places that you can look to now to see where things are going to be happening in the future. So that's why I love coming to IDFA, because this is one indicator to see what's happening in terms of story and technologies. This is really at the bleeding edge of experimentation on that front. But also in communities like VRChat and NeosVR, there are some really sophisticated ways that people have full body avatar representations. There's entire cultures of dance culture where people have recreated the nightclub scene and created these underground music scenes, and all sorts of other things that you can imagine that people are doing with both motion and haptic technologies. So I think what that does is that it creates a driver for other people to create more accessible technologies, like that tweet that I showed with all these alternative methods. If you don't have a Vive system with Lighthouse tracking, which is $1,500 to $2,000 all in with all the technology, but you just have a Quest and you have an iPhone and you're able to feed in your motion data directly from your phone into it, I mean, we're getting to the phase where I expect that we'll still have to have an external sensor to do that, but I think we'll start to see more and more of that over the next year or two, of external systems that'll have multiple ways for people to have full body representation within these technologies, and then eventually that'll be made available for other creators to make this technology more widely available.

[00:39:14.610] Matthew Niederhauser: And just quickly to address diversity, I am a poor substitute for Kat Sullivan, who was supposed to be the ONX representative here on this couch, who taught a master class yesterday and unfortunately had to go back to London where she's running a school on motion capture. So just wanted to say I'm not supposed to be here really, but I'm happy to still be able to talk about this.

[00:39:40.034] Sandra Rodriguez: But it's a good way to talk about some of the pitfalls that we may be looking into and how and why artists are so necessary in this field. You just said, for instance, the example of Apple unlocking your face, and it instinctively made me think of the work of Joy Buolamwini, who used to be at the MIT Media Lab, who started an entire movement because Apple could not recognize her face as an African American. And so because she was a woman and because of the color of her skin, the technology suddenly records and captures very badly her facial reality. But cultural differences as well. I remember experiences at Sundance a couple of years ago where they were trying to identify your emotions based on your facial movements. And cultural differences made us understand that smiling is not necessarily understood with or without teeth in the same manner in different cultures, and suddenly it kept telling us we weren't happy because we weren't showing teeth. So our joke was American Colgate smile and then everybody understood. But these types of differences in the way we use our bodies and how these are analyzed are, as you're saying, becoming more and more mainstream. As I think Kent really showed, there's a way that this gets dispersed and accepted by the public and the way it is used. And I think the role of artists and creators, and especially documentarians that are here in the audience today, is to question and disrupt. So question that narrative, question the limitations, use them to tell stories. And there are so many opportunities for doing that. So if I was to ask you all, what do you see as something that, because you've tapped a little bit into it, maybe it's not even having just the idea of thinking about motion or thinking about moving, how we could use that in a storytelling way, I think is really, really looking forward to the future. 
If you're looking at what's really getting you excited right now that you see as an opportunity not to be missed, you kind of tapped into a lot of subjects that I think are interesting for people here. But what is your perspective on it? Avinash, if I start with you.

[00:41:37.572] Avinash Changa: What is an opportunity not to be missed in this whole field? Oh my God, there's so much.

[00:41:43.114] Sandra Rodriguez: Just capturing motion specifically.

[00:41:47.636] Avinash Changa: One of the things that I'm personally most excited about is what was actually touched on: if you can indeed use your mobile phone, you just put it on a little stand, and you have a very basic headset, and then suddenly you have a full body avatar. And then, of course, you'd want to use a bit of haptic feedback as well. There's something magical that happens once you can start to move your legs and your feet. And like the thing that we saw when we first did hand tracking, that was mind-blowing. It's like, oh my God, it's suddenly real.

[00:42:19.121] Sandra Rodriguez: People still do that a lot, just as soon as they have hands, just spend a couple of minutes, just like a baby that's in the room or was in the room a couple of minutes ago. We kind of go back to that four-month-old age of, oh my god, I have hands.

[00:42:31.194] Avinash Changa: Exactly. And we see that when we onboard new VR users. Oh, these are my hands? And then there's something funny that happens when you suddenly give them Mickey Mouse hands, like, oh, hang on, they accept it. But then the next thing they do, without even thinking, is they start looking at their feet. Oh, hey. Oh, and they lift their leg and they can't move their feet. The more you can make that a complete experience where people don't question the way they're going to drive their avatar, the more frictionless it becomes. The more they will embrace the experience, the story that you're telling, instead of technology becoming kind of a hurdle. Because we've had installations where you strap people into multiple sensors and Vive trackers, and it takes like 10 minutes before someone's in there. And they get so distracted by the technology that as a storyteller, it's counterproductive. So then we choose, oh, let's not do body tracking because we can get them into the experience faster. But any tech, especially mobile tech, that we can use to make that more seamless, more frictionless, that's what I'm really excited about.

[00:43:31.881] Sandra Rodriguez: And what about you guys in super, super succinct words, because I'd like to at least give the opportunity to have questions.

[00:43:38.748] Kent Bye: A lot of what I do in my podcast is trying to look at, what are the things to look at to see where things are going to be? What are the trends? And I think one of the things that I've noticed, at least, is in Japan, there's a huge amount of virtual culture that's happening, not only in VRChat with Vket, the Virtual Market, but also VTubing and the whole phenomenon of people having their physical representation translated into a virtual presence that they have. And so I think the VTubing aspect of the technology stack that they have there is kind of mashed up with VRM, which is more of an open standard way of using glTF, which is an object format. But having glTF mixed with the stack from VTubing to be able to have more of that performance capture aspect, I'd say, but to have webcams and thinking about how in Roblox or Fortnite or even Rec Room you have people that, well, at least in Rec Room, they're immersed in VR, but you have people who are mediated into different 2D representations. And so what are the ways that someone maybe has a webcam that captures their full facial expression, and maybe they have more emotional expressivity based on accessible technologies? Something like Roblox, I know they demoed that, but also potentially Rec Room, or VRChat, or these other spaces where you start to see more of this fusion of technologies where people may not be fully embedded into VR, but they're able to give a more emotional expressivity of a performance, let's say, of an actor or character that is embedded in VR. And so that fusion there, and VTubing, I think, is a thing that people should also consider as a vector for where some of this technology is going to be intersecting with XR within the next five to 10 years.

[00:45:16.972] Matthew Niederhauser: I'll very quickly add at the end. I mean, I already mentioned their work once, but LaJuné McMillian, insofar as they're doing the Black Movement Project, is one of these artists within the NEW INC and ONX community that we're trying to be there to support in terms of creating diversity of movement, and seeing artists resist and/or expand representation of movement in all sorts of different XR and performative works. But I would also say, as an individual, it's also being hyper-vigilant about how you yourself are re-represented in a digital format. And this is going to become, insofar as I feel that this technology is becoming ubiquitous, especially through mobile devices, it is going to be a real thing. How am I being analyzed? How is my behavior then being filtered into a CGI or digital representation? And quite frankly, you know, am I being conditioned within that behavior itself? And it's going to be a very strange, interesting shift, maybe not tomorrow, but definitely over the next few decades, as potentially a lot of the looming possibilities of the metaverse, you know, become part of our daily lives. And re-representation, you know, obviously trying to pay attention to diversity and inclusion, but also as an individual, being vigilant, I think, is super important. And otherwise, there's some pretty crazy traps, I think, that we can fall into as a society. Everything's fine, by the way.

[00:47:07.084] Sandra Rodriguez: Everything's fine, but stay vigilant, keep your eyes open, stay awake, keep moving, keep looking at our bodies and our emotions and presence in space. I wanted to make sure that we did have at least a question, but I saw a two-minute mark. So I know it's going to be very short. Is there a very short question?

[00:47:24.959] Matthew Niederhauser: We're also doing the stage next, so we could definitely stay for another five or 10 minutes. We're the ones who are actually turning the stage over, and actors won't come in for another 10 minutes.

[00:47:36.362] Kent Bye: Someone in the center had a question. The lady in the black.

[00:47:41.404] Questioner 1: I was interested in the integration between AI and motion I can't speak for, no. I think that

[00:48:24.856] Matthew Niederhauser: Off the top of my head, there is an artist right now who's specifically trying to use integration of the motion capture stage in order to drive any machine learning recreations of what they're doing. But it's a very real thing that you're talking about. And it's actually something that's mainly addressed through our public programming and talks. And it's something that I think our community is vigilant and trying to constantly discuss, especially in terms of appropriation of other people's image or unwanted re-representation. I think that's a pretty ethical line that a lot of people acknowledge within our own community. And what you're talking about, you don't even need motion capture now. It's like literally text-based generation for these type of videos. So it's something that is going to constantly be moving in and out of our media ecosystem. And I hope to see more projects that try to address, question, and interrogate it for sure.

[00:49:30.693] Kent Bye: Yeah, just a quick comment on that is that a lot of the stuff that's happening in terms of that I think happens more in the AI conferences like NeurIPS and the different computer vision conferences. You see a lot more of like Meta doing research of trying to... Like the AI pose estimation stuff that I showed is largely based upon stuff where they're trying to extrapolate the motion capture data into these models that are able to recreate a best guess estimate of some of the stuff based upon how your upper body is moving, and then kind of extrapolating that into lower body representations. But in terms of the privacy aspect, I've done quite a lot of work of looking at the implications of these immersive technologies, and Brittan Heller is a lawyer who's also been writing and organizing around this topic. She calls it biometric psychography, which means that there's certain information that we're radiating from our bodies that is going to be used to kind of deduce different psychographic information around, let's say, how the way we're moving our body is indicating different aspects of our identity, but in more of a contextual, relational way of what is happening in the scene. And so, yeah, there's a whole movement called Neurorights that is trying to look at the right to identity, the right to mental privacy, as well as the right to agency. So trying to break down some of these algorithmic aspects of these technologies. And most of the work that I see right now is happening more in the context of AI inferences and what are the regulations around that. There's a lot of work that's happening right now in the EU that's trying to come up with different inference frameworks to say what are the bounds of what AI can extrapolate based upon the input that's coming in. And so it's not so much in terms of XR, but more on the AI front of those types of inferences. 
That's kind of at the frontier of where those neuro rights are trying to be established and kind of fighting for these different aspects of mental privacy, our identity, and agency that is bound up into all that.

[00:51:28.953] Sandra Rodriguez: I'm using this to wrap it up because I think there's been ethical issues, questions on what are the consequences and aftermath of what we're exploring. But there's also so many creative outputs. Future Rites, by the way, is also using AI and motion capture to tell stories with our bodies and have a dance autotune system making you dance better than you ever have. So long story short, there's roles for us to question, debunk, demystify, and bridge new narratives potentially together. That's what I believe our role is, and you guys have been fantastic in opening a lot of these potential opportunities for us and debunking a lot of these myths and perhaps what seems still unknown. There is the mocap stage happening right after, so stick around if you can. And you guys have been amazing and super attentive. Stay vigilant. That's what we will remember from this doc, and thank you all for being here.

[00:52:25.055] Kent Bye: Thank you. So that was a talk and panel discussion at the IDFA DocLab called Capturing Reality in Motion at the ONX + DocLab MoCap Stage. It featured a talk that I gave at the beginning, and then I was on a panel discussion moderated by Sandra Rodriguez. I've actually done a couple of interviews with Sandra. She did Chomsky vs. Chomsky at Sundance New Frontier back in 2020. So I did an interview with her talking about that project, but she also was at South by Southwest 2022 with the piece called Future Rites, which is a prototype that was there. So it was an early development phase, but it was featuring live ballet and VR and some interactive dance autotune stuff that she was working on, which is really quite cool as an idea. And we had Avinash Changa, who is a part of We Make VR, also working with Jason Moore and The Meta Movie project Alien Rescue, which has got a lot of real-time immersive theater acting as you have multiple actors and multiple characters as they go through this experience. 
And then Matthew Niederhauser, who's one of the co-founders of Sensorium and also a part of ONX Studio, which was a big part of putting on this ONX + DocLab MoCap Stage. I'll have a more in-depth interview with Matthew actually in the next interview, where we start to dive into the whole backstory of Sensorium and all the different stuff that they were bringing in, and actually some of the other projects. And I'll have another interview with Matt Romein, who did a whole piece called Bag of Worms, which is quite a unique experience, I have to say, blending different aspects of sketch comedy and horror elements, but also these immersive technologies on the motion capture stage. And so yeah, I guess, you know, just trying to reflect upon all the different ways that we start with these high-end OptiTrack systems and give access and accessibility to different people with ONX Studio in New York City, just making it available for folks who are within the New York City community, artists working within the spatial medium, and just seeing what kind of stuff they can start to create with it. Just to see how some of these technologies start to develop. And then the artificial intelligence, I think, is a big theme in terms of trying to take this stuff that is more high-end and very expensive, and how can you start to translate that into more of the camera-based and artificial intelligence options that are going to be coming as well. And yeah, just the different ways you're tracking the body, you're tracking the facial capture, and then having the different social dynamics and the ways that there's hand tracking and agency and interactivity. 
And so just thinking about the body as a controller and the body as an expressive entity, then all the different ways that these immersive technologies are trying to capture different aspects of our body and how that's used creatively to either create like social dynamics to create like emotional expressivity, to create representation of body within these different spaces, or to give opportunities of expressing agency, all in the context of these larger, immersive and interactive experiences. So lots of different perspectives and takes on that and happy to participate on both this talk and discussion. And like I said, hopefully I'll get this video up here soon and should be able to have access to watch the video version. And yeah, hopefully get some different insights on this as a topic. So, that's all I have for today and I just wanted to thank you for listening to the Voices of VR podcast. And if you enjoy the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast and so I do rely upon donations from people like yourself in order to continue bringing this coverage. So you can become a member and donate today at patreon.com slash voicesofvr. Thanks for listening.