Human Interact’s Starship Commander uses AI-enabled, voice-activated commands to let you participate in an interactive story. It was recently released as a location-based entertainment arcade game, and I first talked to Alexander Mejia about it back in 2016. I was able to try the demo at GDC 2017, and I was really surprised by how being able to engage in dialogue with virtual characters deepened my overall sense of immersion and social presence. I talked about my experience with VP of business development and lead actress Sophie Wright, and we discuss the existing storytelling applications of conversational AI, as well as the potential therapeutic applications that will be enabled in the future.

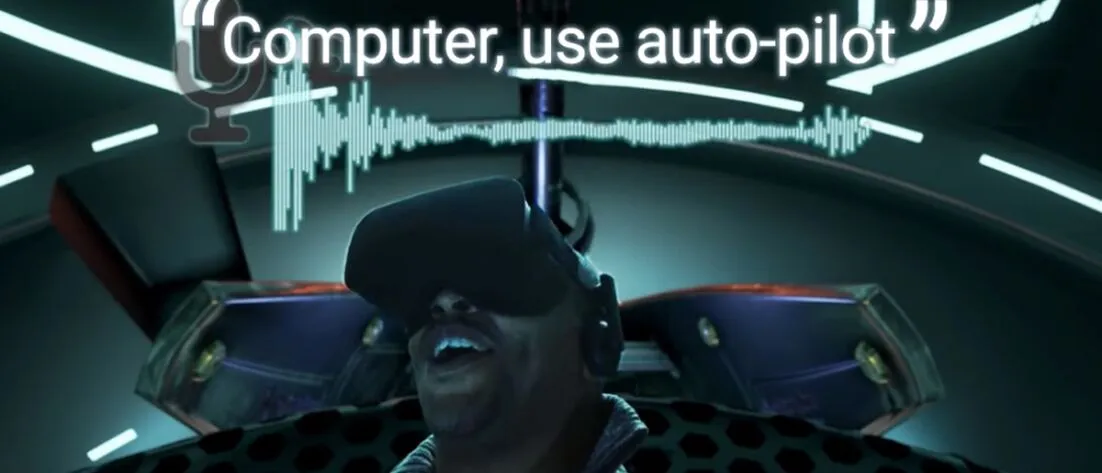

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality

Rough Transcript

[00:00:05.412] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR podcast. So there's an experience called Starship Commander, which recently was released as a location-based entertainment experience, and I had a chance to interview both the founder, Alexander, as well as Sophie Wright. The interview that I did with Sophie Wright back at GDC 2017 was just after I had a chance to experience it for the first time. So in this experience, you are in a spaceship and you're able to start to use your own natural language communication to be able to communicate with Microsoft Cognitive Services, which is then translating, I guess, the intention of what you're saying into these higher level commands that are then sent back into the game experience, which is either giving you more information about what's happening in the game, or you're able to actually interact with the game just by using your voice. And so it's trying to use natural language as your primary mode of expressing agency within the experience. So I had a chance to talk to Sophie Wright, who is the vice president of business development for Human Interact, as well as the lead actress within the experience of Starship Commander. So that's what we're covering on today's episode of the Voices of VR podcast. So this interview with Sophie happened on Friday, March 3rd, 2017 at the GDC conference in San Francisco, California. So with that, let's go ahead and dive right in.

[00:01:32.390] Sophie Wright: My name is Sophie Wright. I'm the VP of Business Development for Human Interact. We're working on a product right now called Starship Commander. It's our release title. I do a little bit of this and a little bit of that. I consider myself the jack of all trades, the greaseman.

[00:01:47.164] Kent Bye: So yeah, you're working on kind of a conversational interface narrative game here. So it's an authored story, more or less, that has a number of potential branches, but you have the ability to interact with it. So you're kind of exploring a knowledge graph, perhaps. Like, you have a world that you're creating, and then you have the ability to ask the computer for information about this world, and then you're kind of going on this journey to perform different tasks. So maybe you could talk a bit about that process of constructing what you would consider a script or the story, and how you actually implement it with the technology.

[00:02:23.446] Sophie Wright: It's kind of like a choose-your-own-adventure novel, but it's a VR adventure. It's not really a game. It's not really a movie. It's something that's a hybrid in between the two. We haven't really got ourselves a word for it yet. We're creating a whole new lexicon here. It's more like improv theater. You are on stage with a cast of characters who already know what the world is. They know what is going to happen. But it all depends on the decisions that you make. So I play your wingman, which is Sergeant Sarah Pearson. And so you'll see me, you'll probably recognize my voice. And we have a lot of fun trying to explore the galaxy and defeat the bad guys. That is an over-simplistic way of putting it because nothing is ever that simple. There are three characters, myself, well four if you count yourself, and there are two guys that are going to be very interesting characters. There's the Admiral of the PEACE, which is the People's Earth Army of Commerce and Exploration. And then there is the bad guy, the preferable bad guy. His name is Derek Kao Jing, or Derek the Terrible. And so what we've done is we've created the potential for you to get into all kinds of scrapes and shaves, interacting with this enemy race called the Echnians. And you do it exclusively by talking to your wingman and by talking to your voice-activated ship. The narrative then takes place with you and your wingman, you and your ship, you and the admiral, you and the bad guy. And possibilities will open and close depending on what you say. So if you ask questions, you could open up new paths. If you are rude to somebody and you shut them down, you're probably going to close a couple. You could even get yourself killed by saying something stupid. And yeah, so Kent, you played it, so you can tell me what you think as far as potential goes.

[00:04:13.729] Kent Bye: Yeah, well, I think my direct experience of it was at the beginning I'm in there and I'm, you know, interfacing with a holographic version of you in the experience, but you're this character kind of informing me of this world. I have no idea anything about this world and I kind of have this opportunity to start to learn about it. And so it's sort of like you say something and then there's some piece of information that I don't know about and then it's kind of a logical thing to say, okay, well, what about this? Who are the Echnians? What about my ship? What can I do? Or also just start to role-play and ask some sort of romantic question or things like that.

[00:04:47.219] Sophie Wright: Yeah, I think what you did was spit game, actually. That's not something that I usually see, but it's kind of interesting and sort of surreal to see that from a third-person perspective.

[00:04:57.665] Kent Bye: Yeah, so I think that as I am going through this experience, you know, from the VR design perspective, whenever you go into an environment, you want to start to interact with it, and the more that you interact with it and it responds to you and it feels like it's listening to you, the more that you feel like it's believable. So it's almost like a ritualistic process of, you know, just like you would go to a movie theater and sit down, the lights go down, you watch the trailers, and then your suspension of disbelief is being primed in a certain way when you go to a movie theater. And I think this is a similar thing as you're sitting in the cockpit, you have this priming of your interaction with this AI character. The more that you interact with it, the more that you see that you can have this certain way of speaking, saying a keyword and then giving a command, essentially. But by doing that, it sort of built up this whole mental model in my mind that felt plausible, felt like, OK, it's actually listening to me. It's understanding me. It's triggering something. And I think that any time you're interacting with an AI chatbot, it's a little bit of a game of, OK, how can I say something crazy and have it actually understand me and respond to me? And when you get an affirmative, there's this just joyful, like, ha-ha, this works, and it's amazing.

[00:06:05.418] Sophie Wright: Well, the nice thing about it is, you likened it to going to a movie theater and having the lights come down and having the reality set in. For us, that's exactly what we were trying to go for. We wanted to have people come into this experience and feel that they were connecting with the characters. That's the goal with VR, for someone to have an experience that's so immersive that they can connect emotionally with it. For us, we always hate that moment in a movie theater when you see something happen and you're like, there's absolutely no way I would do that. Never go into that room with that person in there with a knife, because you know they're going to die, and that's why horror films are bad. Or whatever it is. Or that moment at the end of Titanic, like, Jack, just get on the damn headboard, okay? You're going to die on the water out there. So the opportunity for you to just have the movie go the way that you want it to in the end, or to have it go one way, and then say, yep, I didn't like the way that ended, I want to try it again, so that you can try that other ending and see, well, how would it have happened if I hadn't gone in that room? Would I have survived anyways? Maybe I wouldn't have. Maybe the story was going to play out and I was going to die anyways. Or maybe if he'd gotten on that headboard, maybe he would have frozen to death anyways. Who knows? But at least you have the opportunity to explore that path and see what happens.

[00:07:09.844] Kent Bye: Yeah, the very beginning of the experience had this, you know, as you were talking I was thinking and reflecting back on moving from that first scene into essentially what's the second scene, you know, where I'm starting to be introduced to more of this world while I'm flying around in space essentially. And because I had this cultivation of presence in that first scene, and when I got into the second scene, then I was in this storytelling receiving mode of like, okay, give me this world and I just want to experience it. I think that had you just put me into an experience and that was the first thing off the bat, it wouldn't have been nearly as powerful, I don't think. Then when I move into what is essentially like the third scene, then it's going back into requiring me to interact a little bit. And I found myself kind of wanting to just like receive more of this story and this experience of this world, you know, and it was like, okay, no, I have to actually interact. And so I sort of like more passive in some way, receiving what's happening rather than more actively trying to destroy the enemy as soon as they show up.

[00:08:07.333] Sophie Wright: Right. And see, what we've done is we've created this demo that has just a couple of sequences that are in order chronologically for when they're going to happen. However, you got sequence two and essentially sequence 12 out of 12 sequences. So the very first sequence, you'd be meeting the admiral. And then the second sequence, you meet the wingman. The wingman teaches you how to fly the ship. The admiral will be teaching you, here's the world you're in. Here's the situation. Here's the backstory, you know. So that would be a little bit less interactive, a little bit more informational. The second one is very interactive. This is how you play the game. Here's how you fly the ship. And that kind of sets the mood for allowing you to become conversational, because she is a friendly character. She's someone that you want to actually talk to and ask questions of. The Admiral's going to be a little bit more aloof, a little bit more standoffish. And then you run into this character that's this Derek Kao Jing character. You'll see him once before this final scene. So there's actually some dialogue written in there. If you ask him, oh, hey, it's good to see you again, then that opens up a whole other path of dialogue, which you didn't get to because you didn't know you already knew him, technically. So that when you get to that point, you can be more Captain Kirk, a little bit more swagger, or a little bit more, maybe even friendly, maybe decide that he's someone you wanna side with for whatever reason. So there's a lot of conversational options that'll be there, and like to your point, you will already have the opportunity to be a little bit more in the role play mood once you approach those later scenes. But we have to open it up somehow, and we have to give you the experience for the demo that you'll be having these very intense moments. This is a full range of emotions type of game, movie, slash experience.
Like, I have to keep doing that because I don't want people to feel like it's, you know, this is not grab a controller and start shooting the baddies. There's a lot more of diplomacy required to do this. And then there's going to be a lot of opportunity to just really have fun with it and play with it.

[00:09:52.336] Kent Bye: Yeah, that was, I don't tend towards violence. And so, you know, when I'm in a situation, it's like, okay, what are the options? Can I, you know, fight or can I?

[00:09:59.904] Sophie Wright: Funny, for a guy who doesn't tend towards violence, one of the very first things you wanted to do was, computer, fire.

[00:10:03.926] Kent Bye: Well, I was also just trying to, at that point, I was trying to see the extent of how I could interact. And this was the, like you said, I'm jumping into scene number 12, and so I'm like, it's more of a VR design critique. Like, OK, how far can I get this system to react? And so I was trying to get that to happen. But I feel like the thing that I'm also really noticing is that, in the future, like five years from now, they're going to be able to skip a little bit of the training of people how to use the system. I think, because you're one of the first initial experiences, you kind of have to train people how to do this. And I can imagine in the future, just like people would play Facade, for example, and stream themselves trying to say or do funny or different things and see how the system would react. I feel like this is going to be somewhat similar, of people kind of really showing their personality, trying to recreate these different social situations. But it's going to be very watchable in a way, of people able to really express their personality. But right now you're still in that phase of just really trying to teach people how to even use a conversational interface, interactive drama, narrative VR experience like this.

[00:11:10.890] Sophie Wright: Yeah, we are. And you know, for a lot of folks, they haven't even seen VR, much less seen this sort of a narrative in VR. So it's going to be very interesting for us, especially. We do want people to feel comfortable streaming this. We want people to interject their own personalities onto it. And this is just the very beginning. This is the first title of an entirely new genre. We chose Starship Commander. We chose the setting in space because people can feel very connected to that whole Star Wars, Star Trek, I'm the captain of the ship sort of a feel. It gives you the opportunity to be the protagonist of your own adventure. For us, it helps us limit the scope. So, you know, not to be cheaters here, but, you know, this is a new thing. It's something that we're doing. We're trying to make sure we give people the richest experience that we can with the limited resources that we have and the limitations of the equipment. But as the equipment expands, as our budgets hopefully expand, as we get additional resources, as we get people that come on board to help us out to tell stories, and as we get people who want to use this technology that we've got to tell their own stories, then I really hope to see an explosion in this type of storytelling, that it just becomes ubiquitous, that this is just the way that people want to tell their own stories and share their experiences and their own thoughts so that anyone else can start to explore whole new worlds, either existing worlds, existing IPs, or entirely new stories.

[00:12:33.813] Kent Bye: Yeah, and the previous time that I talked to Alexander back at VRLA, back last August, at that point you hadn't announced that you're working with Microsoft and their cognitive services. So my understanding is that there's kind of two parts. There's both the natural language processing, kind of speech detection of what are you actually saying, and then kind of translating that into that language input. And then there's the intent extrapolation part where you're actually trying to come up with the grammatical structure and find out, okay, what are they actually trying to do given the verbs that they're saying? So maybe you could talk a little bit about how those two are working together and how you're integrating that into your game.

[00:13:08.923] Sophie Wright: So the deal with Microsoft, they've been a phenomenal vendor for us. We are using their service, and because it's been such a successful collaboration, they've decided to go and do some co-marketing efforts with us. I think you may have seen the commercial that they created. They featured us in their commercial to sell their services, and we're really grateful that they chose us as their subject. The services themselves, there are the two services. The one is very much speech-to-text. It's basic. It's kind of like what Siri is for those people who've used Siri or Alexa or anything like that. It's very basic, straightforward. The accuracy is very high. But as people who've used these services know, it doesn't always get it exactly right. So when it doesn't get it right, when you ask Siri, "Hey Siri, where's the nearest bagel shop?" then you get results for, "Here's the 10 nearest disco shops near you." So if it doesn't hear it exactly right, you may not get what you were looking for. The intent part of it is what adds that little missing piece and says, OK, I might have heard disco shop, but I know you were looking for some kind of a shop. So let me look at the rest of the words in the sentence and see if I understood you correctly. And then it'll go ahead and make corrections to those words and say, OK, it's 9 in the morning. You're looking for coffee, too. You just did a search on your phone for these other things. So you're probably looking for something to eat. OK, I'm guessing you're looking for coffee and bagels. So here's some breakfast kind of things. So it gets you the rest of the information that you need. But it does it really quickly. It returns results in about 100 milliseconds, which is faster than you can blink your eye just about. So by having that, that's what makes it work.
And even though you saw that some of the results that were coming back through on the text on the screen that we showed you didn't exactly line up with what the person had said into the microphone, it still gave you the right intent. So it was able to give you a line that answered their question. And the same thing happened when you were using it, too. Even though you couldn't see the box there, it gave you the same result, or at least a result that was acceptable for what you were looking for. It didn't break the immersion, and it kept the story going.
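
[Editor's note: the two-stage idea Sophie describes here (speech-to-text first, then an intent step that still lands on the right meaning when a word was misheard) can be illustrated with a small Python sketch. This is only an illustration under stated assumptions. It does not use or reproduce Microsoft Cognitive Services, the intent names and sample phrases in `INTENTS` are hypothetical and not taken from the game, and the fuzzy string comparison from Python's standard `difflib` module is a stand-in for whatever the real service does.]

```python
from difflib import SequenceMatcher

# Hypothetical intents and sample phrasings, invented for illustration only.
INTENTS = {
    "fire_weapons": ["fire", "open fire", "shoot the enemy ship"],
    "ask_about_enemy": ["who are the enemy", "tell me about the enemy"],
    "greet": ["hello", "good to see you again"],
}


def similarity(a: str, b: str) -> float:
    """Return a 0..1 score for how alike two strings are, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def guess_intent(transcript: str, threshold: float = 0.6):
    """Pick the intent whose sample phrase is closest to the transcript.

    `transcript` is assumed to be the (possibly misheard) text returned by a
    speech-to-text step. Returns (intent_name, score), or (None, score) when
    no sample phrase is close enough.
    """
    best_intent, best_score = None, 0.0
    for intent, examples in INTENTS.items():
        for example in examples:
            score = similarity(transcript, example)
            if score > best_score:
                best_intent, best_score = intent, score
    if best_score < threshold:
        return None, best_score
    return best_intent, best_score


if __name__ == "__main__":
    # "sea" stands in for a speech-to-text slip on "see".
    print(guess_intent("good to sea you again"))
```

[In this sketch, a transcript with one misheard word still scores closest to the greeting phrase, which is the behaviour described above: the displayed text can be slightly wrong while the matched intent, and therefore the character's reply, is still acceptable. The `threshold` value is an arbitrary assumption; a story engine would presumably fall back to a generic line when nothing matches.]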

[00:15:01.290] Kent Bye: Yeah. I think it worked, for the most part, really well. I think near the end, there were some other things where either I wasn't being heard or detected.

[00:15:09.426] Sophie Wright: The second scene isn't quite as polished as the first scene. The first scene, you can see we had full costumes, full makeup, all that stuff. And we were able to reshoot a lot of the lines and redesign some of the logic on that to make it really polished. That second scene, we need to reshoot and redesign some of that stuff still.

[00:15:24.413] Kent Bye: Yeah, so you're featured as an actress in this experience. And so maybe you could talk a bit about that creative process in terms of actually filming and putting yourself as a hologram within VR.

[00:15:36.172] Sophie Wright: You know, that's kind of a funny story, actually. It was never meant that I was going to be an actress in this. My degree's in mechanical engineering, my background is in automotive design, in lean manufacturing, Six Sigma, statistical process control. Yeah, that has exactly nothing to do with acting. I've never actually done this sort of thing before. We actually had another actress lined up and we paid her. She's a stage actress and when we did the testing with focus groups, it wasn't well received. So we had about two weeks before it was time to go show it off. We kind of panicked and didn't know what to do. So Alexander turns to me and says, hey Sophie, how would you like to be the wingman? I said, excuse me, what? And just like that, we reshot the scenes on an emergency basis. It went over really well. There was an awful lot of standing in the room with folks doing the demo and them going, wait a second, isn't that you, Sophie? Yeah, it is. But it went well. And so when we got final costume going and makeup and everything, we re-shot that first sequence, which people see in the demo. And it just kind of stuck. So will we use that for final? I don't know. It depends if we have enough funds to hire somebody professional, like stage, SAG or somebody. But people seem to like it.

[00:16:46.672] Kent Bye: Well, it seems like if it is you, then you have the ability to kind of do rapid iterations of user testing. And then whenever you want to actually get more lines in there, you could just sort of have that pipeline kind of really ironed out.

[00:16:58.815] Sophie Wright: Yeah, that was the other thing that kind of worked out pretty good is we had to reshoot a couple sequences and what we see in the trailer is actually additional lines that we shot just for that. So that's a benefit. Yeah. So, you know, one of those gift horse things, you know, don't look it in the mouth. It's just, just go with it. It's going pretty good. It's just very strange for me because that's never been my, my background. My background is always, I've been crew, not on stage.

[00:17:23.341] Kent Bye: So it seems like this conversational interface technology is starting with this interactive narrative experience. But it feels like it could actually start to move into other realms, perhaps some therapeutic realms. Just curious if you have any thoughts on that.

[00:17:37.355] Sophie Wright: Well, you know, that's interesting because that's exactly why I got into VR in the first place, is because there are so many possibilities. And when we talk about this Starship Commander VR experience, part of the reason why I get so hesitant in calling it a game is because people are very quick to label it, ah, yes, it is a game. It's just a game. But it's more than a game. The potential here is absolutely limitless. When you look at what we're trying to accomplish with VR, we're getting people immersed into an experience such that they are emoting with a character. They're actually building empathy. So we're teaching people to become empathetic. They are connecting with a character. They become emotionally immersed in a story where they care if somebody lives and dies. There's a reason why we put that in our trailer. Your decisions determine if somebody lives or dies; they actually have an impact. So if you become emotionally connected with somebody, as you pointed out, you can even pursue a romantic connection with somebody, they can die on you if you make a bad decision. And there is a real emotional consequence that you're gonna feel if you get emotionally connected to a character. It happens all the time. We see this in long video games, long narrative video games. Final Fantasy VII, anyone? Anyone? So these things can definitely happen. We're doing it in an hour, hour and a half experience. So if we know these things occur, if we're already using VR to do things like treat PTSD, then why can't we use a narrative where you're talking to someone to train people who are limited in their empathetic skills? Perhaps they're somewhere on the spectrum. How can we train people, teach people to open up their abilities, to expand their abilities? So this is definitely a possibility.
We also have people who have experienced traumatic experiences, they have PTSD, who are never able to really fully overcome them because they can't get back in that space, in that headspace, and then find whatever resolution they were looking for. They can't get closure. They can't confront their attacker. They can't confront that horrible situation. Maybe it was a car crash or maybe it was war or something. Maybe they feel like they failed somebody in some situation. What if they could get that closure? What if they could talk to that person? It's easy enough to create an avatar. We've got 3D body scanning. We can recreate somebody's voice. We can put them back in that situation in that moment and allow them to relive that experience and allow them to change that moment if it went a certain way. I mean, if we can change it in a story, why can't they just change that story, that moment, that reality for them? I mean, there's already therapies that do that sort of a thing now, but it's all done with imaging, with visualizing. So imagine someone who's hurting because they never got to say goodbye to their family the way they wanted to. Somebody passed away in their family and they didn't get that right closure. Imagine that they could actually go back to that moment and say the things and get a response based off of something that they said. Or somebody who was facing an assailant in the past, and then maybe the closure they need is to tell that person, you don't have any power over me anymore, and to be able to get that closure and to feel that, look that person in the eye and say those things and to have that moment. I mean, there's so many ways that this can be used therapeutically, and I'm not a psychiatrist. I have no training in the subject. But what I can tell you is that there are people who do, who want to pursue these things.
And there's a technology out there that's available right now that we are using for entertainment purposes only that can definitely and should be explored and should be funded and looked at for these very useful, very much needed purposes. And me personally, I see this as only beneficial to the greater good of society. And I'd love to be able to help with that.

[00:21:12.672] Kent Bye: Yeah, as you were talking there, it just made me think about how so many video games right now have these abstracted expressions of agency through buttons. And when you have that level of abstraction, the level of meaning that you're able to communicate is limited by usually pretty binary types of interactions, like kill this person. There's not a lot of gameplay with diplomacy, and it feels like you're able to really explore the conversational interface to do conflict resolution or diplomacy, or to be put into situations to really cultivate that training of how do you navigate these complicated situations, where you're able to actually get the skills that you need to have the diplomacy that could be translatable into your real life.

[00:21:54.223] Sophie Wright: Well, even the games that do have that, you've got the Bioware-style games that have a dialogue wheel. And they'll tell you, right in the center there, there's a little icon that tells you if this is the romance option, there's a heart that pops up, or if this is the aggressive option, there's like a fist, or this is the diplomacy option, or this is the joking option, you know, this is the sarcastic option. I mean, you can kind of tell by looking at the dialogue itself, but in case you weren't clear, it shows you a little icon. But it only gives you maybe four or five options. And you and I both know that there's an infinite number of ways you can take a conversation, not only by the words that you choose, but by the tone that you choose. So by using it this way, the way that we were doing it, allows you to kind of open up new avenues, ways of responding. And even if it wasn't directly done, through this language processing that we've done it, a trained psychiatrist, trained therapist can augment the responses by bringing in what they feel needs to be the right answer. But this is just a tool that you use to help people through whatever traumas that they're going through to resolve it. When you look at a lot of it, like the online bullying, I'm kind of changing subject here a little bit. When you look at things like online bullying, cyberbullying, trolling, stuff like that, when you really look at the people who are behind it and the PewDiePies and all over the world, even he admitted, I'm doing this because I have things that I haven't addressed in my life and I thought that I could just act out and I realize now that I have to address some of these things and it's wrong. Because we have so many people here who have unresolved traumas, unresolved issues from their youth, from their lives, from being bullied, that they then turn around and traumatize other people. 
And they have this great big megaphone called the internet that they're using to do it with. And by them inflicting trauma on other people, we're aggravating the problem. We are exponentially spreading this disease that is just an illness, that is this poorness of spirit of something. I can't even tell you what exactly it is, but it is harmful and people are suffering. And so now we have a technology, we have had technologies, we've had therapies, but this can be sent out and used inexpensively, privately, in one's own home. It's just like putting it out there like a leaflet on a table. Pick it up. Don't pick it up. No one sees you do it. You know, see if you can't get some value out of it. But like I said, this is for people who are smarter than I am. But I see the potential is out there that we could be doing this and offering people something that can make their lives better. Maybe they won't feel the need to be so mean if they can work out whatever issues that they have, resolve those issues privately and feel good about themselves.

[00:24:25.860] Kent Bye: Right. And finally, what do you see as kind of the ultimate potential of virtual reality and what it might be able to enable?

[00:24:32.530] Sophie Wright: Boy, I mean, that's such a deep question. That's such a difficult one to answer. For me, a lot of why I got into virtual reality is because I see that there is so much potential for it. And what I'd like to see us do, especially with what we're doing, is to try to open this up to as many people as possible. For us, we've chosen the easiest controller on the planet, and that's the human voice. It's the one that everyone has the most experience using. You've been using it since you were, what, two, three years old, maybe four? So, the people who are best at this are people who are a little bit older, to be quite honest with you. My grandma can use this. And the people who get the most enjoyment out of this are the ones who are storytellers in their families. So, I have a second cousin. He's 65 years old and he's an ex-ad exec. And this is the exact perfect thing for him because he's always the one spinning a yarn. So, for someone who's going to get sassy or do improv, this is the perfect thing for him. Does he know how to use an Xbox controller? Absolutely not. But this is the sort of thing he's going to love. As long as we can get the headsets themselves easy to purchase and to install and use, this is going to be something the entire family can use. If the content is there, if we can create experiences that are family-friendly. I hate to use that term, family-friendly, because people associate that with cartoons and stuff. But truly something that anyone can get on and use. I've seen grannies get sassy with Derek Kao Jing and tell that little whippersnapper to sit down and shut up. In not so many words, actually. But this is something that everyone can use and get enjoyment out of. But bigger than that, though, people who don't emote very well, this gives them the opportunity to practice emoting. People who have limited mobility don't have to worry about having that dexterity to use a controller.
We're even looking at the possibility of having a limited sight portion of it for folks who have visual impairments, making this very 3D audio intensive. So there's no reason why there can't be descriptors written into the script so that we can say, there's a large ship passing overhead and you can hear it pass overhead. And then everything is done through speech. So there's no Braille. There's no signing. There's nothing like that. It's all done by interacting with people with your voice. So we can open up this experience to whole new groups of gamers that don't even know that they're gamers yet. So that, for me, is where virtual reality is going. It's going to include all kinds of people in these kinds of storytelling adventures that we couldn't include before.

[00:26:58.598] Kent Bye: Awesome. Well, thank you so much. Thank you. So that was Sophie Wright. She's the vice president of business development for Interact, as well as the lead actress in Starship Commander. So I have a number of different takeaways about this interview. First of all, for me, it was really striking that the big thing about being able to add voice into virtual reality experiences is that you're now all of a sudden able to do things that are beyond what you could do with abstraction. So you push a button and you blow something up. That's pretty simple in terms of the types of gameplay interactions that you're able to have. What can you do once you introduce voice? Well, you're able to explore the complicated nuances of diplomacy, which was something that wasn't an intuitively obvious thing for me. And so I was telling Sophie that I'm not typically a violent person, and she called me out because during the actual experience I was like, yeah, let's blow up this ship, you know, because in that context I was just trying to see the limits of the expression of agency that you could have. But it's not necessarily as interesting to be in a game like that and then to just blow stuff up as if you were to, you know, do any other game where you'd have the option to push a button to do that. The thing that makes it interesting is the ways in which you could create dialogue and interactions and these moral dilemmas and these ways of using diplomacy as a gameplay mechanic and exploring these different conversational paths that you could possibly take. So to me, that's a very fascinating concept in terms of how the immersive technologies are going to continue to develop. So I had a chance to talk to Alexander about this whole experience before I had a chance to experience it. But then at GDC on March 3rd, 2017, that was the first time I actually had a chance to try it.
And my experience was that once I was actually in the cockpit of that ship and able to have this sort of dialogue and banter, it really sold me that I was there and present within this experience. And so then it shoots me out into space and I'm able to see these planets. And I felt this very deep sense of presence because it felt extremely plausible, like I had been interacting with this AI ship and just asking questions about the world. There was something about being able to participate in that process of world creation that just gave me this deep sense of presence and buy-in into the experience. To me, that was surprising. I didn't necessarily expect it. But in hindsight, I think it makes sense that if you're able to have this highly dynamic interaction and it feels real, then that just gives you a deep sense of social presence and much deeper buy-in into these types of experiences. So I'm super excited to see where this is going to go in terms of conversational interfaces and being able to actually dynamically interact with an experience. Now, I had just recently seen Bandersnatch on Netflix, where you're essentially making these choices. There are these binary choices where you basically have a choice of either A or B. And then that is changing the trajectory of the experience. And sometimes one path leads you to the end of the experience, and then it basically kicks you back into the experience at the point where you can try again. But you have these different endings that are very discrete, and the impact of your agency is very limited because you don't feel like you're actually able to really put your own personality within the experience. And I think that with these types of experiences where you can really express yourself, then you feel like you're on an improv stage or you're able to really have a certain level of flourish. Now, I think there is a limit to how much the AI can actually detect and discern.
But I think over time, these systems are going to get better and better and better. And there are also going to be more best practices for how to translate what someone is saying and what they're intending to say, because that's essentially some magic that we do automatically as humans: we have a certain amount of context, we understand common sense, we're able to understand slang and incomplete information and poorly formed sentences. You know, the human being is very forgiving when it comes to natural language communication, and we're able to usually figure out what other people mean. But sometimes having computers do that hasn't always been so great. But within the context of a story, you can actually do more convincing levels of artificial intelligence because you kind of have this buy-in that you're playing along with a story. So that's limiting the amount of free expression that you have because it's within a certain context and you know what that context is. But it also is able to make some pretty good judgments based upon some sentiment analysis of words and be able to kind of reduce things down there as well. So there are many different ways of trying to do this magical translation of what you're saying and what you mean with that intention and what that intention is trying to do in terms of expressing agency within an experience. And these services like Microsoft Cognitive Services are also continuing to get better and better as they are able to get more data and do these types of integrations with games like Starship Commander. So that's all that I have for today. And I just wanted to thank you for listening to the Voices of VR podcast. And if you enjoy the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast. And so I do rely upon your donations in order to continue to bring you this coverage.
So you can donate today and become a member at patreon.com slash Voices of VR. Thanks for listening.