I interviewed This Conversation is Off the Record creator Nirit Peled at IDFA DocLab 2023. See more context in the rough transcript below.

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality


Rough Transcript

[00:00:05.412] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR podcast. It's a podcast that looks at the structures and forms of immersive storytelling and the future of spatial computing. You can support the podcast at patreon.com slash voicesofvr. So this is episode number 16 of 19 of my series looking at different digital and immersive storytelling pieces from IDFA DocLab. Today's episode is with a piece called This Conversation is Off the Record by Nirit Peled. So I'm going to read this summary here to give you a little bit of a flavor of this piece. In her interactive lectures, Nirit Peled investigates crime prevention algorithms and their profound impact on people's lives. The performance is built on her decade-long study of the consequences of risk-based profiling by the Dutch police. This research was also the basis for her documentary Mothers. In her latest project, she takes an even deeper dive into the systems that are supposed to predict which young individuals will become criminals. So this is sort of like a dystopian thought-crime profiling system: risk-based profiling by the Dutch police that selected 125 kids by looking at a repository of indicators and information about people who eventually committed crimes. So it's taking a machine learning approach of trying to figure out the features of future criminals, then applying that and labeling 125 youths who were identified by this crime prevention algorithm, which for me brings to mind Minority Report, like thought police doing things before things actually happen.
So there are a lot of taboos and implications for the people who are getting tagged within the context of this program, and there's not a lot of transparency about how people even got identified. It's a situation that she explored in a documentary called Mothers. And now this was a performative lecture that she did at IDFA DocLab, where there are three main characters. She's playing a mayor who's setting the broader context, and as she's speaking, you see the transcript of what she's saying in real time. Certain words are either highlighted or blacked out. Those words are connected to this visible algorithm that is trying to come up with the different features to identify people. So it's using this real-time AI to emphasize different aspects of the language being used in the algorithmic reality that she's exploring. The piece is called This Conversation is Off the Record because, as a journalist, she was talking to all these different police officers, and they would never be able to talk about what's actually happening on the record. They would always tell her things off the record, but she would never be able to report on that, because everything has to be on the record.
She was getting frustrated with this inability to cover what was actually happening, and so she's looking at these alternative forms of theater and immersive performance that are fictionalized in a way, but still trying to get at the truth of what is happening. So she created this composite conversation based upon all these off-the-record conversations, delivered by an actress who represents the Dutch police officer she was talking to. And then after you hear all the rationales for why we need this algorithm-driven crime prevention process that's flagging all these people who haven't really done anything wrong, but that is potentially violating different aspects of their human rights, a human rights lawyer stands up and rebuts all these different arguments. So you have this performative lecture dimension, with all these integrations of AI to reflect the algorithmic reality that Nirit is trying to deconstruct and criticize. So that's what we're covering on today's episode of the Voices of VR podcast. This interview with Nirit happened on Monday, November 13th, 2023 at IDFA DocLab in Amsterdam, Netherlands. So with that, let's go ahead and dive right in.

[00:03:52.946] Nirit Peled: I'm Nirit, and I'm a documentary maker, and I'm very occupied with the form of storytelling. So it's not always immersive, but even if it's a film, I always have to find, you know, something that will connect to what I want to say or how I want to tell it.

[00:04:09.329] Kent Bye: Great, maybe you could give a bit more context as to your background and your journey into this type of documentary making across many forms.

[00:04:17.651] Nirit Peled: I'm educated as an artist, and I was a VJ for a long time, so I was really in this much more immersive, performative video party thing. But in the last 10 years, I really dived more into investigative journalism, almost. And every time I have my findings, I think, OK, how can I make that? So, for example, I made an episodic documentary short on WhatsApp, where WhatsApp was carrying these messages, and the messages actually became the form of storytelling. You would have contact with the main character, a young woman in America who was going to visit her brother in prison, and it took 34 hours. So for 34 hours of a bus trip to and from the jail, you would get messages from her. So these are the kinds of ideas. Or last year I made a film about another crime prevention program, which was in essence four interviews with mothers whose sons were on these lists. And there too I had this problem of hiding them, because they didn't want to be visible. So I used actresses who were lip-syncing. I was playing with the idea of deepfake, because I thought, how wonderful, I don't have to pixelate them. I don't have to put them in the dark or shoot some empty rooms. I can deepfake, amazing. But then deepfake was way too expensive for what I wanted to do, so I actually found a performative solution. I always feel that I'm ready with the story very early, and then the last bit of the work is to find a form. And with this one, it was the same. Something was left over from the research for Mothers: this little story that didn't make it in, about an algorithm that they used once, and then they realized it's not good and they stopped using it, at least in Amsterdam. The Dutch police used it for longer. And I found it almost like a little, not a fairy tale, how do you call it, a parable?
A story that I thought had in it exactly the problem of the lack of transparency in algorithms, because there were 125 kids who were selected by it. And then Amsterdam says, OK, we're not going to use it anymore. But the 125 kids are not informed. And with that thought, I wondered, how can you show this algorithmic reality rather than the human experience? Because I don't know who these kids are. And that's how the wish to make Off the Record was born.

[00:06:56.145] Kent Bye: Yeah, so the piece is called This Conversation is Off the Record, and in this piece you're exploring this Minority Report, thought-crime type of crime prevention, where it's trying to quantify all these things to flag people, basically to surveil them and put them into a program where, as you argue in the piece, certain aspects of their human rights are being violated. But this is a story that's trying to cover what you call this algorithmic reality, of how algorithms are influencing our lives. And with this new algorithmic reality, you have to find new ways to tell these stories in a way that lands. So maybe you could talk about where you began with the story, and maybe go back to your previous project, Mothers, which was perhaps an introduction. Take me back to the beginning of where this all began.

[00:07:45.814] Nirit Peled: So in Mothers, indeed, I focused on the human experience. It's really a very touching, heartbreaking account of four mothers and how they experienced the system. I'm not showing the system. And showing systems is always very hard as a filmmaker. It's not a very visual thing, and it's very boring. Sometimes I do journalistic work where you have just talking heads, but that's still not the system, right? It's the people who work with it. But what stayed with me after Mothers is that what made meeting them so strong for me is that I read their data, or this kind of data, for five years before seeing anyone. Meeting them was so heartbreaking because I knew how they look in data. And when I met them, I thought, oh my God, you're not like, you know, I had all these preconceptions and biases about who they might be. Because of course, if you read this data, it just seems very clear that these people have no chance. They're probably all drunk, junkie mothers. That's what I imagined. I don't know why. I'm not sure exactly what it said, but the impression you get is that these people have no chance and the government will come in and help them. Anyway, the mothers say that they're not helping them; that's one narrative. But I was left with that reading I had, with that way of looking at people as data and not seeing them as complete. You don't feel them, you don't see them, you don't forgive them, you don't appreciate them in any way. And actually, in the beginning there were all these conversations I also had with police officers, which remained off the record. And I was very frustrated about this, because I felt that everybody was telling me what's going on, but nobody wanted to go on the record. I got frustrated with this journalistic code, where you need someone to go on the record. It's not important what you know; even if you really know it, you have to get someone.
And then I thought, OK, how about I go to something more speculative, which is actually how the algorithm also works, right? The algorithm is also speculating. They don't know, they're speculating. In the beginning, I actually thought of VR. Because I thought, what if, in the same way I did now, I ask an actress to read the transcript? I had everything transcribed. So I thought, if she reads a transcript, and the human rights lawyer is there, and all the people who helped me shape an opinion, what if I put them in this kind of virtual world? What bothered me about VR is that I really felt that this experience should be shared in a community, that it should not be individual. I also felt that Mothers, even though it's a film, is very individual, and I really wanted to experience something together. And I felt that it had to be multidisciplinary, that I needed every discipline I could pull in. So I put together a little team. I asked a theatre maker. I asked a 3D designer, whom I really liked, because I also wanted to steer away from this... I'm a designer, and we are always designing the virtual world as these dots and lines. And I thought, no, no, can we go into speculation? How does this world look? I gave her the assignment, for example, to design the avatars. We don't know the kids, but we know there are 125 datasets. So from there it became a really experimental way of working, where everybody is trying to negotiate their own medium and their own way of working. And I was mainly guarding the story, actually, trying to grapple with what's journalism, what has to be. No, she never said that, so we have to stay with that. And slowly it found its shape, but it was really almost like a knitting together of elements, trying to visualize this sort of, what I call the algorithmic reality, that world that I feel I have almost visited, if you want.

[00:11:42.983] Kent Bye: Yeah, so the piece is broken up, from what I remember at least, into three distinct parts: you're setting a broader context and introducing this, and then you go to an actress who is playing the role of a cop, reading a transcript where she's essentially making an argument for why we need this type of crime prevention. And then you had an actual human rights lawyer give his response to this. So it felt like a performative debate, but with something that's very constructed in terms of the argument. The piece is called This Conversation is Off the Record, and you said that you were having all these conversations with cops where they were telling you what they were doing and why. So how do you make that leap from those actual off-the-record conversations to this composite transcript that tries to get to the essence of all those same arguments, in the spirit of what they're saying, without violating that code of being off the record?

[00:12:44.217] Nirit Peled: Well, I really realized that I had to allow myself to go into fiction. Even Jelle is now involved in a case that he didn't want to talk about, so we had to figure it out. It's funny that everybody has something to lose from exposing the whole thing. So I made a few codes of fiction in order to mix a few transcripts and stay away from, let's say... In essence, what off the record is for is to protect the people who tell you things, so that it doesn't harm them. So it was very important for me that she's not traceable, and that she's telling a story which is representative of the voices I heard in the police. And these were actually the arguments that helped me form an opinion. I really enjoyed the conversation with this police officer, and I was very, very sorry that of the three I spoke to, none of them could speak publicly, because I thought that realization, understanding that people are not this or that, but that they are both the victim and the offender, was a beautiful thought. Since then it has influenced my work; everything I do about youth criminality is defined by this understanding. The way I understand it, if I see a young offender today, I encourage myself and others to look at him or her through the eyes of victimhood, to understand what happened to this person. But suddenly I understood that they turned it around and started looking at victims as potential offenders, and that I found really painful. I'm trying to take you through the same process of gaining that knowledge and understanding this, because I truly believe that everybody in this story actually wants to do good. It is just this very painful misunderstanding of what a digital tool can do if you apply this logic to it.
Yeah, I felt that it's not only this Hannah Arendt thing, you know, all the civil servants who are just doing something; it's now even worse, because there's this automated sauce on it, where a lot of things can go wrong and there's no one looking at it to understand that it is the algorithm that turned it around, right? It is the algorithm: the machine learning went to look at the files of all these young offenders and find indicators and commonalities, and it found out, oh, all these kids who came in here on child abuse or domestic violence end up as criminals. So then it turned that into an indicator. And that is something I thought was important to experience in the order I experienced it.

[00:15:29.057] Kent Bye: Yeah, I really appreciated the ways that the argument helped explain the deeper institutional logic driving these actions, and it makes more sense if this is their goal. As you mentioned, if you're a hammer, every problem looks like a nail, so they're basically trying to translate everything into these crime statistics. And we mentioned earlier that it's very difficult to tell a story about a system, and I immediately thought of The Wire as a TV series that does a brilliant job of telling the story of these institutions through those five different seasons, through the different lenses of the education system and the police, with a lot of focus on how much the statistics, at the end of the day, drive all the behaviors around both the budgeting and the political dimensions. Lowering the crime statistics ends up being a driver, so you can see how throwing in all these new technologies to profile people is a way to try to reduce those statistics. But then there are the issues of algorithmic bias and the human rights perspective provided at the end for that broader context, which I feel is covered really well in films like Coded Bias, which talks about algorithmic bias: folks may not be in the training data sets, or if the data sets are biased, the system repeats the biases that are already embedded in them. So this is yet another story where the numbers they're looking at are already biased data, which then creates even more bias, stigmatizing these 125 kids who were identified by this algorithm as high risk for becoming future criminals who needed to be surveilled.
Yeah, there are a lot of dimensions there, both the algorithmic bias dimensions and the ways in which trying to lower the statistics creates a deeper bureaucratic and institutional logic that is perhaps leading to decisions that, when you really unpack them, don't make sense. But within the context of that institutional logic, you can see how, from their perspective as a hammer, they're trying to treat everything as a nail.

[00:17:32.835] Nirit Peled: No, completely. It's funny, for us this is a bit unfinished, though now we're kind of happy with it, and we don't know. We just got a small R&D grant from IDFA and decided to go for it. We still had the feeling that there's one more layer of interactivity that we want to add. We were planning it, but it was not possible now. For example, there were boys doing the motion capture for the avatars, and I really like that if you have more time to see their motion as more of a choreography, you can really feel those kids underneath, right? You can really feel them hanging out, or, I don't know, walking on the street. So these are things I would still really like to bring in, in order to not only bring these arguments in, but to bring in this feeling that you see these different layers of reality. It's almost augmented, right? It's something you should eventually immerse yourself in, to realize that we are all in this thing. I even had a talk with a lawyer who told me, but why are we talking about the 125? I want to talk about all the kids whose datasets have been used, because just using the datasets is, period, not okay. On the way here I also heard Naomi Klein talking about the doppelganger, and I thought that's exactly the feeling I want to have. What do I need to do to immerse the viewer one step further into this understanding that my dataset is somewhere out there, and that I almost need to know how to deal with it, or view it, or have some context in order to have some editorial power? It's funny that you mentioned The Wire, though, because intuitively I told everyone to watch it. What inspires me about The Wire is that there's not one demon. That's what I got from that policewoman who really inspired me: she totally understands that people are not one thing or the other.
And then I suddenly understood that for the algorithm it's very hard to bring in this fluidity, because it's a digital system. For example, the score people get out of this algorithm is between 0 and 6. And what I've seen, even with police officers or with civil servants, is that they don't know how this number came about. Of course they're going to try to check it themselves, but it's kind of overwhelming, right? You get the name of a kid and a number, and probably this family has some problems, so you already think, oh. So it's very dystopian in this way. And it's exactly the opposite of The Wire, which lets you look into the food chain of crime to create an understanding of everybody's role.

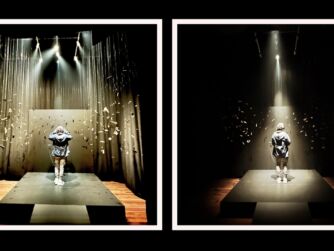

[00:20:12.749] Kent Bye: Yeah, David Simon has done an interview, which my wife pointed me to, where he talks about the structure being like a Greek tragedy, where rather than the gods and the fates, it's the bureaucratic institutions, and people are subject to the fates of whatever limits those institutions put on their agency. So there are a lot of people who really have their hands tied because of the larger bureaucratic incompetence of the different institutions: political, the police, the broader educational system, the whole ecosystem. But it feels like in this piece you're exploring this channel of information, because you have people stand up and almost give lectures, explaining these different arguments. And you do have a bit of a spatial context: it's on a stage, you're off to the left-hand side at a table, speaking into a microphone and reading the script that's scrolling by, and the two other protagonists, the composite police character and the human rights lawyer, are off to the side; they get up and give their speeches. On the three screens in the background, you have these scenes of motion-captured boys running around virtual spaces. During the second part, the transcript of the composite character was in a different language and was being translated in real time, which was kind of a way of cueing the actor playing her through each of the different beats. And then the human rights lawyer just extemporaneously gets up and responds to that. But maybe you could expand a little more on the larger staging and spatial context, because you have these scenes of virtual avatars, and what you were really trying to get at by having that as the background to this larger performative lecture.

[00:21:54.423] Nirit Peled: So indeed it started as a performative lecture; that's what I called it in the beginning. But as we progressed I was wondering, where are we going? It felt a bit seasick, but I also liked it. I had the feeling that we had to be able to play a bit with empathy. There had to be this emotional response, or else it remains in your brain. You know, we write so many investigative pieces, and then you read them and they're just very technical, and as I said, it's very hard to weave together what I've seen with these mothers and what I've seen in the corridors and in the computers. This was an attempt to see if I could pour a little bit more emotion into that. If I were to continue it today, I would probably continue with those projected stories of kids that I have, because it is only when you see them next to each other that you really get that feeling, I think. Even as a filmmaker, I always feel like people are so into the human condition; every commissioning editor wants to see a mother who failed, who is suffering, and I think, OK, but then we don't really see the system, right? And the system always gets away, because you can write about the system, but it's very hard to visualize it. So the context for me was, OK, we need information, we need context, we need to understand the different officials. I didn't even give them names. For me, there was a lawyer, a policewoman, a mayor. I think The Wire works with the same logic.
But indeed, I kept wondering whether there could be a moment where you look at these avatars and have this gut feeling that this is really someone, and that, I think, was the main essence of making this. If I could, I would maybe do it more in a gallery setting, something that immerses you as a viewer more. We considered working live with those kids in the suits, so that they are somewhere nearby and come in later. You know, this feeling that something is augmented, that something is covering something else. I'm very passionate about this, because I think the only way forward is empathy. It's the only thing. It's the only thing. We have to practice feeling it. Because when someone is written down as a five or a six, or as a criminal, that's so flat. You already lose your connection. I teach at the Rietveld, and I learned a lot from my students too. Because of them, I think, I made avatars. The younger generation is so used to referring to themselves in that other space. I mean, I also do, and I love the digital world; I'm there all the time. But they really don't mind giving it a face and a look. And it felt really daring to go ahead and design avatars, right? Because you can also go so wrong. It's like visualizing something in a science fiction book: you're almost sorry to see the film sometimes, because you didn't want to know what the creature looks like. So for me the biggest challenge was how to bring these avatars into a visual interpretation that still allows you to feel them and dream them and think of your own city. Because I also don't think it's a Dutch story. What you just mentioned about the languages was a very big struggle. I think the policewoman is a great character, but she's very Dutch. And then I thought, hmm, the guy who doesn't understand Dutch is going to read this 12-minute transcript?
That's also kind of, right, too much work.

[00:25:25.633] Kent Bye: Well, because I couldn't read it, I had to really listen to the interpretation. There was no distraction of being able to read it, so in some ways it almost worked better, because if it was text I could read, I would be tempted to read it rather than listen. So yeah, there's a challenge in terms of the cognitive load of how much someone can take in at any time. And thinking about empathy: you have your character, the mayor, then the policewoman, then the lawyer. One way to potentially extend it is to feature some of the direct experiences of either the children or the families, because the human rights lawyer speaks on behalf of those children, saying, hey, they really feel stigmatized. But if there were ways of either fictionalizing or including more of these anecdotes, or composite characters that go through some of the different situations and contexts, you could try to recreate that feeling of being on this list that no one asked to be on, selected by all these various criteria, and the different side effects of that. What is the direct experience of that, and is there a character to explore it? I don't know if you've been in touch with some of the kids who were on the list and had a chance to gather some of these oral histories, and could start to think about how to translate that into a composite fictionalized story.

[00:26:43.534] Nirit Peled: Yeah, I think that's also what I'm trying to do now in the last part, where I'm trying more to describe reality. So that would be the last layer: with all this knowledge, looking again at the world and seeing where data points are being made, because I always think of these as portals. These are the moments where reality actually gets reported into the computer. So I think it would be more in that tone. And I really enjoyed considering a more performative version, you know, working with the motion suits and with dancers; I would really go there. In the beginning I really thought it should all be in VR, that it happens around you and, you know, maybe you navigate just by turning around. But now I'm starting to like this stage-orchestrated experience. I do think it would be nicer if the viewer had a bit more control in some way, even if it's over the movement of the avatars or so. We had 45 minutes, so we had a bit of a time constraint. I was happy with it; it was a bit of an experiment. But if I were to go on, it would indeed be by adding some of these stories. I have a lot of recordings, but language was really an issue. I would even now consider whether to re-record those recordings so that they are in English, or to keep it all in one language. It was actually an editorial request from IDFA to bring it into English, because the policewoman was speaking Dutch, which was much better, but IDFA said our viewers are really 99% English speakers, and for them it would be such a different experience. So these are things we're looking forward to playing with.

[00:28:31.656] Kent Bye: In the piece, there are a number of different ways in which these kids are getting flagged, and one of them was a medical condition, bedwetting, where you say that some study somewhere found a correlation between bedwetting and child abuse. So you have these things from a medical context being put into these algorithms that are shaping people's lives. I'd love for you to talk about the variety of objectified data points, numbers, or information being fed into this algorithm to come up with the pre-criminals, or thought crimes, or the folks who are highly predisposed to potentially become future criminals, I guess.

[00:29:13.269] Nirit Peled: So it is a machine learning model, which, funnily enough, is also what we're trying to do with this text element that takes my language, and I'm trying to teach it. For example, I had to teach it the names. Every time I said Jelle Klaas, who is the lawyer, it wrote "yellow class", again and again.

[00:29:33.315] Kent Bye: Just to elaborate on that, because as you're speaking, you have a text transcript of what you're saying, so there's a bit of an AI translation of your speech that you're giving. You have it written down that you're reading, but yet it's doing live translation at the same time.

[00:29:47.607] Nirit Peled: Yeah, and it also grabs onto words that I have defined as good and bad. I gave it all the indicators that belong to this algorithm. I was able to get the indicators through the court, but I was not able to get the code, so I don't know how they are weighted. For example, bedwetting is among those indicators. And I guess the algorithm found it as a word that was mentioned in reports. But indeed it makes its own list of indicators; you have almost no control over it. And then it finds something and you think, really? In which police report do you write bedwetting? So it's interesting to see what it comes up with, and I'm trying to do the same while I'm talking, though of course in a more artistic way. I told it to ignore words which I think are not being... In the 2,500 documents we read from the court, if you search for empathy or love, it's just not there. Those words are just not there. And it's 2,500 pages talking about kids between 12 and 18, which I thought, well, forgiveness must be in there, right? It's the age of forgiveness. I don't know if you have children, but mine have just arrived at that age, and I think, oh my God. Go figure out what they're thinking right now. It's very difficult. So I think the language model is just looking for words which are already in their reports. And bedwetting was one of the examples, indeed, that they gave me, being kind of proud and saying, look, the algorithm can really find very sensitive and out-of-the-box ideas of how we should recognize child abuse, which is an interesting question. And indeed their data is very stiff and very square; there are certain things you just don't have in that system, and they cannot describe that crime, so they try to find ways around it. It also made me think about the fact that many times I thought this algorithm does recognize people who need help.
I don't even dare, maybe that's my process, to think about whether there would be a way to use it, because most of what I found did describe kids, maybe not with bedwetting, which is very specific, who were having a lot of trouble. I just don't know with which intention we are looking for it and what kind of help we are offering.

[00:32:14.736] Kent Bye: Yeah, it sounds like a little bit of the nightmare scenario of machine learning, where you just throw a bunch of data at it and there's little to no algorithmic transparency as to how any of these decisions are being made. It's a big giant black box, and there may be no explainability, no way to have it explain why it's making these decisions, which I think is one of the core tenets of ethical AI: to have some sort of explainability as to why things are happening. But it sounds like at least there are weighted positive and negative affinity words, so as you're speaking the script you're going through, there are words that are highlighted. And there was a font color that made some of the words difficult to read once they appeared. I don't know if it was yellow on the screen, but it was hard to see.

[00:32:57.898] Nirit Peled: The idea was that they're erased. The idea is when I say love, it doesn't stick, and it turns to white on white. So it was actually on purpose that we were trying to create this very hard sensation that says, OK, some things do not get registered. And I think if you would look at my life and you would only take the negative events, and I can tell you there are quite a few, you would think something very bad, because you don't see the good events, right? You don't see that in between, you know, I succeeded in this or I did that. It's a bit like, I always think of Ken Loach films. I think they have quite bad endings, like there's this harsh reality check, but you have the feeling that all these people, these working-class people, have tried and they loved and they laughed and they had a whole life, and then maybe there was also a bad ending. But I feel that the algorithm does not allow for that curve; in the statistics it just looks like this downward-spiraling thing. And that's what we try to do with this elimination of the words: suggesting that the algorithm does not register that, and that you don't know how full-fledged your data is. And if you think of The Wire, I would love to make a scene about how policemen are writing this data on their phones in between other calls. I've seen this. I've been with them in cars. I've observed a lot. And that's really The Wire: coming out of a house where everything goes wrong, trying to type in a little report, and you get a phone call from your wife who shouts at you in the same moment, and then you just save the report and never look at it again. That's never going away. But that report nobody can ever change. That's something also from digital culture, that it's no longer paper. It's no longer paper in a little office, in a little town where you grew up. It's a database that's getting more and more connected. I'm now researching international data, so Interpol.
There are so many lists being exchanged of someone like you who maybe once did something, I don't know, who's now in some kind of terrorist database, and then every time you cross an airport someone's going to stop you, and you'll ask yourself forever, why is this happening to me? So there's this footprint that I find super, hyper interesting.

[00:35:22.984] Kent Bye: Great, and so I guess what's next for This Conversation is Off the Record, then?

[00:35:29.005] Nirit Peled: Yeah, we are all... It's funny, four days ago we said to everyone, listen, this is just a moment in time. Work in progress. And now it's been so well received, and so many people saw in it so many things we did not yet even see in it, that we are kind of overwhelmed, but we want to continue working on it. I think also the collaborative effort has been really good. After every session, we had some talks with audiences, and we got a lot of amazing feedback. So yeah, I think we should do it more, but we want to make it a bit more international, in the sense that it is a story about here, but indeed it should be more about this kind of algorithmic reality, your doppelganger, something that makes you really consider this augmented reality we're talking about as something that is there. I'm not even busy with the morality of this story. I just think we have to think about it in order to be super aware of it and find ways to deal with it.

[00:36:27.564] Kent Bye: Have there been any police officers who've had a chance to see it yet?

[00:36:31.329] Nirit Peled: Not yet. With Mothers, my film last year, there was an enormous amount of noise and media around it. Actually, the mayor of Amsterdam wrote a letter, a nine-page letter, a week later, defending the system completely, not wanting... I was like, call us, call us, we want to talk, maybe you'll learn something. So I was extremely disappointed. So this one, because it was a tryout, it's been a bit more quiet. But yeah, once we make the next step, I think it will be a very interesting thing to sit with them and see how they react to it. So far, people have been quite defensive on the system side when it comes to algorithms. I don't think it's because of what we're saying. I think there have been a few scandals. I don't know if you're following what's happening in the Netherlands. It's just really bad PR. The reason Amsterdam stopped using this algorithm is not because they think it's not good. It's because they realize that it's becoming a PR nightmare, and that parents are starting to get really mad, and that mothers are saying, why is my son on the list? And that was the last email that they sent. They said, we can't explain this. And we're not mentioning the algorithm. We think we're getting the kids we want to reach, but we don't know how to explain this. So I think the conversation now around algorithms, also in the UK with the Gang Matrix, with Prevent, all these governmental methods where they're trying to work with data, it's just becoming a nightmare.

[00:38:02.213] Kent Bye: Well, I know that within the context of the European Union, there's the AI Act, which is trying to at least come up with different tiers of harm and then actually outlaw certain applications of AI, including facial recognition in the context of law enforcement. Because of the harm that comes when it goes wrong, where you could go to jail or it could ruin your life, certain applications have just been banned because of how severe the resulting harm can be. So I feel like at least there have been discussions there. But in terms of actually passing that and then implementing it, I feel like there's maybe still some time for it to ripple out, and maybe to change the level at which things are categorized, because they have a classification system that says this is high risk, so it's banned, and then medium and normal risk that have other reporting obligations. But yeah, law enforcement seems to be an area where the use of some of these AI tools is most fraught. And so I'm a little bit surprised that it's still deployed and hasn't been yanked back or reverted yet, because it does seem extremely problematic, especially when people don't understand the limitations of what the algorithm can and cannot do.

[00:39:10.776] Nirit Peled: No, I think those European laws are very welcome, but I see governments experimenting. Now there's another investigation we're doing about fraud detection in the welfare system and in benefits. So I have the feeling that it's especially lower-income communities, those living on social benefits, that are being targeted with all this. I always thought, are there any other algorithms? Are you going to find out where the next financial criminal is going to grow up? Or, you know, what are we looking for? So I think the AI Act should take care of, indeed, how this thing grows. But I'm also looking at: what are we looking at, who are we looking for, and who are we targeting all the time? So in that sense, I see that more as the story I'm looking for. And I think it's very important that we tinker with it and play with it in order to create these immersive simulations. Because at the end of the day, I think a lot of police officers would be very sensitive to this. Because what I saw in their eyes is that they really don't know what the system is doing, and sometimes they're not very happy about it either, you know, with their traditional way of working, and the budget cuts, and there not being enough people to talk to people, and then suddenly you're sitting at a computer all day. I heard that sentiment a lot, let's say, from the older police officers, who feel like suddenly they have to play and they're not tech-savvy like us, maybe. Like, I actually love playing with the algorithm. You know, I actually love talking to the machine and trying to see what I can teach it. So I think the tinkering that we do with love for technology can maybe help us think about it. Not so much from a moralistic point of view, but more from an imagined, speculative point of view. Let's think for a second. What is this thing going to do?

[00:40:57.949] Kent Bye: Yeah, and as you say all that, I'm reminded to give another shout out to David Simon and The Wire, because I feel like so much of that series was able to detail all these different issues that are still very much real and present today, because it's all about how they're using technology to continue to do law enforcement. So now here's the next iteration that could be a whole other new season of The Wire as we go into all these different AI algorithms and whatnot. Yeah, I guess as we start to wrap up, I'm curious what you think the ultimate potential of this type of immersive storytelling and new forms of storytelling might be and what it might be able to enable.

[00:41:30.477] Nirit Peled: I think it's so important, especially as a filmmaker. I feel like when I come from film, it's much more defined. After I made Mothers last year, funded by Dutch television, very mainstream, it was very important for me to leave this very well-defined way of making for a second and go into a bit of a laboratory. Especially if we want to look at systems, and what I really would like to look at is what technology does to society and where they interact, I think it's very important to have a speculative and immersive way that also allows for a bit more playful, you know, a bit more trial-and-error, a bit more risky storytelling. Sometimes I'm worried that it does not reach enough people, maybe, or that it's more difficult. You know, when I make a film, a million people see it on television. But now I'm here for a few days, and I have a great appreciation for it, because the quality of the contact I had with people is very high.

[00:42:30.714] Kent Bye: Awesome. And is there anything else that's left unsaid that you'd like to say to the broader immersive community?

[00:42:39.405] Nirit Peled: I think I would love to see how our love for technology can actually inform the broader context in which it develops. So a big fist up and applause. I really enjoyed it, also this time being immersed in DocLab, in IDFA DocLab. Yeah, it brings a lot of hope to me, because I'm quite a pessimist sometimes.

[00:43:04.806] Kent Bye: Lots of really fascinating forms and structures here: this kind of performative lecture combined with the real-time transcript that's, you know, censoring and editing stuff out. It's just a really interesting way of addressing this, especially with a constructed composite transcript of all these other conversations that have been off the record, to try to get at the heart of why this is happening, and then to have this dialectical opposition that counters all those points. It makes sense when you hear it argued, and then, you know, obviously as I was listening to it, I had all these objections, and to hear those objections articulated in such an eloquent way from a human rights perspective, I thought it was a really effective way of getting to the broadest context of this as a piece. I definitely look forward to seeing where you take it in the future, because I think there are other ways to dig in and unpack it more, and to add other elements of immersive storytelling, or other forms that are still emerging and may not even exist right now. Anyway, thanks for taking the time to talk a little bit more about your project and your process for This Conversation is Off the Record. So, thank you.

[00:44:05.553] Nirit Peled: Thank you.

[00:44:07.214] Kent Bye: So, that was Nirit Peled, with a performative lecture piece at IDFA DocLab called This Conversation is Off the Record. So, I have a number of different takeaways about this interview. First of all, I think it's definitely a topic worth exploring: this type of algorithmically driven, one-hour report about police and crime prevention. I mean, it's really wild that this has gotten to the point where people are actually doing this in some of these countries. But yeah, this is the risk-based profiling by Dutch police that she featured in her documentary Mothers. And also, as a journalist, like I said at the top, she's really trying to find other ways of telling these stories, through this type of immersive theater with interactive AI components overlaid on top, that try to unpack the deeper story of what might be happening. So in this piece, the more interactive AI component is that as she's speaking, certain words are flagged by the transcript and occluded or hidden: you see them either not be transcribed, or maybe appear and go away, and there are other words that are highlighted. But there's no key for this type of symbolic communication that's happening, so it's a little bit confusing as to why words are being hidden or can't be seen. And so I think part of the challenge of this type of symbolic communication is to explain what's happening with this live transcript, which is an example of really trying to deliberately show, okay, I'm going to say these words, but these words are forbidden, or these words are good, these words are bad.
So yeah, just the fact that she's covering this as a journalist and trying to find new ways of explaining and metaphorically showing this to audiences is worth noting. It was originally in Dutch. So the actor who was playing the police officer actually speaks both English and Dutch, but all the transcripts were in Dutch, and she had to do this live translation. And so I think if it had been written in English, it might have been a little bit more lucid as she was giving that performance. But yeah, it was originally just going to be presented in Dutch, and I wouldn't have been able to understand any of it, so I'm very grateful that the actress was able to do that kind of real-time translation. But yeah, it lays out all the arguments so you understand the logic and the different assumptions that you make at each step of the way, and you hear the argument that she's able to dig into and understand through all these different off-the-record conversations, presented through this composite character in this imaginal performative lecture and debate with someone who's an actual human rights lawyer, who gets up and deconstructs all the reasons and all the problems for why we need to reconsider this, along with all the ethical and moral implications and human rights violations of this whole venture that's happening there. Yeah, I guess the other dimension is what's in the background. As you know from The Vivid Unknown, which I talked about with John Fitzgerald, there's a three-screen installation space in the background, and they're projecting these avatar representations of the kids onto it. So I would love to see a little bit more of the stories of those kids somehow being directly integrated, rather than more of an intellectual debate. And maybe that was in her prior work with the documentary Mothers; maybe there's another form that's really well suited for that.
But in terms of the journalistic components, I think this piece is trying to get to the essence of what is happening, describing everything and using different layers of augmentation or virtual reality. So in this case, there are these avatars moving around, but it was kind of a loose connection for how those avatars in the background were directly related to any of the individuals. They were kind of like composite characters symbolically representing the children being talked about, but for me there were a few too many steps of abstraction away from either very specific stories or how the two were related to each other. So yeah, I think this is a very early prototype, and there are a lot of iterations that are going to happen to flesh out some of these things. The story is really compelling and strong, but the open question is how all the other interactive, immersive, and AI components can augment and amplify different dimensions of the story in this type of live performative lecture format. So, that's all I have for today, and I just wanted to thank you for listening to the Voices of VR podcast. And if you enjoy the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, and so I do rely upon donations from people like yourself in order to continue to bring you this coverage. So you can become a member and donate today at patreon.com slash voicesofvr. Thanks for listening.