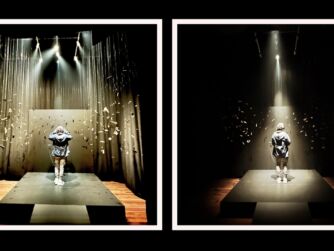

In Search of Time is a 2D film that premiered at Tribeca Immersive, and it uses a lot of generative AI style transfer techniques on top of footage of Pierre Zandrowicz’s children. Atlas V co-founder Zandrowicz co-directed this piece with Matthew Tierney, who comes from the worlds of figurative painting, film, and sound design and works as a full-time artist. They wanted to explore the potential of these new generative AI techniques within the context of an authored narrative. The end result is a very poetic short film that reminds me a lot of the visual style of Waking Life, but at a higher fidelity of photorealism.

I had a chance to catch up with Zandrowicz and Tierney at Tribeca Immersive where we talked about their journey into playing with AI tools from a narrative perspective, the evolution of their generative AI pipelines, and some of the open-ended ethical questions around using AI within a creative context. It’s still very early days for how machine learning and generative AI will start to impact the creative process at all phases, and this is one of the first projects on the festival circuit that’s experimenting with some of the latest generative AI tools.

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality

Rough Transcript

[00:00:05.412] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR podcast. It's a podcast that looks at the potentials of immersive storytelling and the future of spatial computing. You can support the podcast at patreon.com slash Voices of VR. So continuing on my series of looking at the different experiences at Tribeca Immersive, today's episode is with a piece called In Search of Time, which is a 2D film, but it's using all sorts of generative AI applications, where it reminds me a lot of Waking Life. In Waking Life it's like cel-shaded animation frame by frame and it gives you this animated painterly look, and this is very similar to this piece, where it's taking footage of Pierre Zandrowicz's children as they're exploring around and reflecting upon memories and childhood memories, but being able to modify and modulate all the different architecture of these different scenes and add, on top of that, even more stuff that wasn't even in the original footage, and using the different affordances of generative AI to explore the potentials of the future of immersive storytelling and storytelling in general, and as a style transfer aesthetic and prompt to explore all these different machine learning and artificial intelligence techniques in the context of storytelling. So that's what we're covering on today's episode of the Voices of VR podcast. So this interview with Pierre and Matthew happened on Friday, June 9th, 2023 at Tribeca Immersive in New York City, New York. So with that, let's go ahead and dive right in.

[00:01:38.791] Pierre Zandrowicz: I'm Pierre Zandrowicz, I'm the co-director of In Search of Time, and I'm also running a company in Paris called Atlas V. We produce VR, AR, all these immersive technologies, and it's our first exploration in this new medium, which is AI.

[00:01:56.724] Matthew Tierney: I'm Matt Tierney and I come from figurative painting world. And I met Pierre about six, seven months ago. And we were both completely freaking out about AI and how it's going to change art and cinema and maybe everything else in between in life. Yes. We were like, let's make something fun. And then out of that was birthed this project.

[00:02:19.682] Kent Bye: So maybe you could each give a bit more context as to your background and your journey into this space of this intersection between VR and AI.

[00:02:27.584] Pierre Zandrowicz: So I used to direct a lot. So I started to work in commercials. For eight years, I was directing commercials in France. And when I discovered VR, I dived into it with friends of mine. Then we created this company. We are four partners, and I'm the only one who is more on the creative side. I don't quite produce, I'm more directing, so I did a few pieces. I directed On the Morning You Wake, co-directed with Mike and Steve, Steve Jamison and Mike Brett. I directed a piece called The Dawn of Art about the Chauvet Cave. The first film I did in VR was a 360 piece called I, Philip about Philip K. Dick's robotic head. Yeah, so I did a lot of VR experiences, but coming from a background in film, I was really interested to go back to film with something that is still close to new technology. So I feel like AI, creating film in AI is really the space I want to be in right now. Yeah, so it's like, in a weird way, it's like coming back to film, but with the background I've learned through VR, because it's still film and code. So, yeah. So, this is where I'm at.

[00:03:39.419] Matthew Tierney: So I went to film school at UCLA, theater, film, and television, and then about halfway through, I switched over to independent study to do sound design, music, and art. And from there, it led to becoming a full-time artist. And so I had a series of gallery shows. And then in the meantime of doing that, I was developing a new coding language with my friend Pokey Rule that's based in London. It's called Cursorless. And that's doing away with keyboards and cursors, and it's just coding strictly with voice. And he has an ML background, so we're always back and forth discussing new tools. And right at the release of DALL-E 2, and then following that Midjourney, we got to start beta testing. And I had a few gallery shows lined up. And after seeing these tools, I was like, I need to slow down and pause on doing gallery shows and just learn about what's going on in the world. And then so from there, I just fully dove into text-to-image generation and then image-to-image generation. And I had been speaking with a group of friends in LA that are film producers, directors, and some writers. And our friend Sam Pressman, that's the CEO of Pressman Film, I suppose Pierre and myself were both chewing his ear off about the same topics. And he was like, you know, you guys just need to sit in a room together, have lunch, and have a conversation. Yeah, and from there, after about five minutes, we were like, let's try and make a film. Let's do it. Because people for, I guess, starting about a year ago in New York and LA were saying that it's not there yet, the tech's not ready, it's not possible, you can't hold narrative. And so I think our main goal was just going, can we push ourselves to tell a very, very simple story with the tools we have, make it coherent and consistent, and as opposed to heavily relying on the technology, rely more on narrative.

[00:05:46.261] Kent Bye: Maybe you could give a bit more context for how specifically this project, In Search of Time, came about.

[00:05:52.793] Pierre Zandrowicz: So I moved to New York in September and I didn't know that many people in New York. I was kind of missing my team in Paris. So I wanted to have someone to talk with and to be stimulated with. And so I started to talk with ChatGPT and then Midjourney. And it was super interesting because I really felt like I could have a conversation, like an artistic conversation with those platforms, in a way, because you're asking something and you get something else in return. You never quite get what you're asking for. And then when I met Matt, we wanted to use something else, like a new other tool to try to do... We were like, can we do a film with that technology? And as soon as we started to dive into this, we realized that it's not perfect, and what's interesting about it is that it's not perfect. So we rendered a few files and we were like, oh, that really looks like memories to us. The fact that it's organic, that it's not quite perfect and everything. And we both are really interested in what memory is, so we thought it would be a great way to have a new way of showing memories. So we used materials that we had, which was basically tons of iPhone footage of my kids. And yeah, we were like, why don't we just do a film about childhood memories? What could childhood memories look like? So we were looking for a style, and then we started prompting things over videos that I shot.

[00:07:24.859] Matthew Tierney: Yeah, so it first began at a lunch with Pierre. And we decided, yeah, in five minutes, we were like, let's make a film. And from there, the first, at about minute six, we said, you know, these tools seem to be really, really good. They kind of have this quality of memory within them, insofar as they can hold a consistent image and they can trigger something that you're very, very familiar with. But it always renders in a slightly strange way. And our first idea was a film about a father teaching his son how to ride a bicycle. And that we spoke about for maybe a week. And from there we just started writing text, sending it back and forth to one another. And out of that grew the narration that we have in In Search of Time. And so once we had our narrative structure, which was completely removed from a father teaching a boy to ride a bicycle, then we went through troves of footage that Pierre had shot on his iPhone, discussed which shots we think would work for our narrative and which wouldn't, and then did a bunch of render tests. And from maybe two months of testing, we learned which shots work best with Stable Diffusion and ControlNet and can keep consistent images. And then which type of footage can best be processed to add a painterly aspect to them, how it appropriates negative and positive space, how it can mask characters. Yeah, so after those two months, then Pierre went out and shot a ton more footage of his children based on the knowledge that we learned and what type of things we needed and wanted to tell our story. And then we made a prototype. And then we picked it back up about three months ago, two and a half months ago, with our structure in place, a lot of our sound design ideas and our score ideas, narration done. And then from there we just spent days and days and days prompting, re-prompting, re-rendering to try to get frame by frame what we wanted narratively.

[00:09:34.095] Pierre Zandrowicz: I would just add, no, it was really an interesting process because it doesn't have anything to do with AI, but the way we did this was really iterating. Matt was working on sound design, I was working on editing, and then he was working on editing, and then I was cutting the voiceover. We did everything at once. So we had so many versions of the film, and it was really interesting because it's as if we could write and edit at the same time. I mean, I'd never worked like that, and the fact that it was only the two of us made it really interesting, because we were so close to the core idea. We still need to work with people, but we wanted to experiment with this being just us, so we could be so close to the core idea and not miss anything. Because when you work with others, sometimes they'll bring something else, which is great, but sometimes you'll also miss other ideas that you brought. So it was interesting to do everything by ourselves. It was also tiring and exhausting, but you know, at least we made something.

[00:10:31.332] Kent Bye: But at some point, you brought in John Fitzgerald to help out as well, right?

[00:10:34.895] Pierre Zandrowicz: Yeah, John came on the second version of the film, I mean, after the prototype. And it was really helpful to have him on board, because he had another view on the film, because he didn't know. I mean, he knew a bit. And also, he knows the technology, so he helped us on the workflow. So it was about workflow, ideas. He helped us a lot on the installation as well. They are also deflecting here and there. But what was the main, most important thing is at some point we wanted to do something completely different. We brought really bad ideas because it was only the two of us. And John came in and was like, are you sure, guys? I was like, yeah, maybe you're right. Maybe we shouldn't do that. So yeah, it was nice to have someone that came halfway.

[00:11:19.159] Matthew Tierney: I would say John, specifically, the best thing that he did was very, very subtly would always just nudge us back to the core original idea. And any little thing that we would add or take away that took us away from the inception of the idea, you go, maybe try what you just had done, what you showed me last week. Let's see where that develops. Keep pushing that direction. And I think a very, very subtle guiding hand was super, super helpful to the final product. And in the end, Pierre, when we delivered the file to Tribeca, we had dinner and were like, it was really incredible that the final export was exactly something that we both loved and was exactly our intention. And I think, yeah, it's very rare in the film world to be able, top to bottom, have your narrative voice be exactly at the forefront. And I feel like a lot of the AI tools are like this. They're very akin to painting, where the feedback is pretty direct, and you can start with an idea, work through a canvas, and it exists exactly as you intended, which is kind of wild and fun, and a bit scary.

[00:12:36.190] Kent Bye: Yeah, you were mentioning to me yesterday that you had started with some of these tools like DALL-E and Midjourney, where you're submitting and having to pay for the render time. But eventually, once you moved into the Stable Diffusion pipeline, you were able to render stuff on your own GPU and own computer and not necessarily have to do things on a GPU that's distributed, which maybe is faster, but it's certainly cheaper, and you have fewer constraints in some ways on what you're able to do with Stable Diffusion. So I'd love to hear about finding that right workflow and what were some of the trade-offs that you found if you were actually still using some of these online hosted platforms like Midjourney or DALL-E 2, and how you were able to integrate this into the final product.

[00:13:17.185] Matthew Tierney: Yeah, so we, at a certain point after running through Google Colab and outsourcing GPU, we were like, we should definitely do this locally. Once we realized the amount of time it would take to render, it takes about six to nine hours to render 15 to 20 seconds of footage. So yeah, then we started running it on three or four computers simultaneously. And we did actually learn that working locally is a bit slower.

[00:13:42.192] Pierre Zandrowicz: It crashes a lot. Yeah, exactly. So sometimes we just left like three computers running all night and we would come back the next morning and two of them just crashed. So we were like, oh. So yeah, at some point we just gave up all the computers locally and just came back online and just paid Google. You know, like take my money and just make it happen.

[00:14:09.633] Kent Bye: So yeah, with ControlNet, you have the ability to put in a reference image and then to say to what degree you are going to match this image, and you can put in positive prompts and negative prompts. And then there's the whole aspect of style transfer, which is something different. So were there multiple layers that you're doing, or was it all in one prompt from Stable Diffusion to do frame by frame? We'll start there and then talk about how to get consistency across multiple frames without having too many hallucinations or too much deviation, to have this fluidity rather than something that feels a little bit more disjointed.

[00:14:44.153] Matthew Tierney: Yes, so we definitely used ControlNet for consistency, which we learned about rather late in the process. And that actually helped a lot frame by frame, like frame A to C matching with frame B consistency-wise. And then, yeah, I would say in terms of prompt structuring, for one clip, let's say, frames 1 to 30, we would have prompt A, and then at frame 30, we would trigger prompt B. So it might be a boy walking on a beach with sailboats to his right. And then at frame 30, we would change that from a boy walking on a beach with sailboats on his right to now sailboats populating behind him. And so we could kind of, it took crazy amounts of renders, but we could almost get what we wanted in terms of moving objects that weren't in the original footage around the screen. So it still has these hallucinations, but with a nudging we could control them a touch, which was super fun. Yeah, that was like our favorite part about in the morning going to the studio, watching the renders and just going, did we fucking get it or did we miss it? Like, how did it come out? Yeah, it's like Christmas every day.
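
The prompt switching Tierney describes here (prompt A for frames 1 to 30, prompt B triggered at frame 30) can be sketched as a frame-keyed schedule. This is a minimal illustration only, assuming Python; the names `PROMPT_SCHEDULE` and `prompt_for_frame` and the exact prompt wording are hypothetical, and it is not the notebook or tool the filmmakers actually used.

```python
from bisect import bisect_right

# Hypothetical schedule: each key is the frame at which that prompt takes over.
PROMPT_SCHEDULE = {
    1: "a boy walking on a beach, sailboats to his right",
    30: "a boy walking on a beach, sailboats behind him",
}


def prompt_for_frame(frame, schedule=PROMPT_SCHEDULE):
    """Return the prompt active at `frame`.

    The active prompt is the entry with the largest start frame
    that is less than or equal to `frame`.
    """
    starts = sorted(schedule)
    idx = bisect_right(starts, frame) - 1
    if idx < 0:
        raise ValueError(f"no prompt scheduled at or before frame {frame}")
    return schedule[starts[idx]]


if __name__ == "__main__":
    # Print the prompt used around the switch point.
    for frame in (1, 29, 30, 31):
        print(frame, "->", prompt_for_frame(frame))
```

Each rendered frame would look up its prompt this way before being sent to the image model, so moving an object "around the screen" amounts to adding more entries to the schedule and re-rendering.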

[00:16:03.377] Pierre Zandrowicz: Yeah, what's also amazing is, you know, this technology is evolving so fast that every once in a while we had to update the whole process and things didn't work anymore. So we were like, oh fuck, how are we going to pull this off? And so we had to go online on Discord and we spent so much time talking with the community around this. And these people are so nice, like we've learned so much talking with them and everybody's sharing. I mean, I guess it's the same. There's a feeling of the early days of VR, in a way, where everybody's around this and like, we don't really know what we're doing and we're trying to understand and get a grasp of it. And with the time difference, it's like any time, day or night, there's someone there to answer you. So yeah, it's super nice. It's super nice and funny.

[00:16:53.376] Matthew Tierney: Our new favorite question on Discord is workflow? And from that, just one word and a question mark, people will write you paragraphs. Like, everyone's so down to share ideas. Hell, the community is rad.

[00:17:09.292] Kent Bye: Yeah, I was looking at the Reddit for Stable Diffusion because I was giving a panel discussion at Augmented World Expo on stage, looking at the intersection of AI and virtual reality. So I was with Alvin Wang Graylin from HTC, Tony Parisi from Lamina1, and then Amy LaMeyer from WXR. It's a fund that funds a lot of women in their XR projects. I've been playing around both with WhisperX to do transcripts for my podcast, but also with Stable Diffusion. And as I was looking at different posts on Stable Diffusion, they'd be like, oh, people have a little bit of a citation for, I use this model. And some people would even give the prompt for what they use. And so it gives the opportunity for people to go and try to replicate some of the workflow, or at least the look and feel. And I noticed that you gave credits to your models in your piece, which I thought was really nice. So it's in that spirit of transparency or sharing, like, oh, here's how if you want to do something like this. So I'd love to hear a little bit about the models that you used and settled upon and what you found in terms of your recipe for this. What I would say is this kind of Waking Life-esque type of look, where it's got this illustrated and almost painterly feeling that is very dynamic from frame to frame. So it feels like a moving painted picture, very similar to Waking Life in a way. So yeah, I'd love to hear what were some of the different models and tools for your own workflow.

[00:18:28.978] Matthew Tierney: Yeah, so Pierre one day, very early on, was like, dude, I got it. There's this guy I've been chatting to. Check out this pipeline he created. And I'll let him tell you about that. But yeah, it was great.

[00:18:42.617] Pierre Zandrowicz: So yeah, so I'll do the long version real quick. So I was back in France and I was trying to look for the right pipeline workflow to do it. So I called this guy called Remy Molette, who is a French guy who's done like amazing things on Instagram. He did a music video for the Chainsmokers, crazy on the forum and all these tools. So I texted him, I was like, can I ask you, what's your workflow? I was like, dude, can we just talk? I really want to talk with you. We could work together. So that guy was French, and I was like, I want to work on a film made with those tools, and we want to do it in three weeks. And that guy was like, there's no way you're going to do a three-minute film in three weeks. Just forget about it. And I was like, yeah, okay, then I'll do it by myself. Do you have any idea how I can do this? He was like, well, go on this Patreon, give that guy 20 bucks, his name is Alex Spirin, and start from there. And that was the beginning of everything, literally, really. So I went there, opened Discord, I started to talk with that guy, Alex. And we did the first version of the film based on the Ghibli model, but super weird. The film was kind of way too experimental. The model wasn't right. We had to do thousands of renderings to get something that looked okay.

[00:20:07.137] Matthew Tierney: Yeah, we had to do so many renders to get anything that remotely resembled a human. And we realized the Ghibli prompts aren't really great in terms of style. It does it well for still images, not for video. But yeah, then there's, I don't know if you've explored Civitai, but it's just like a huge repo of models, and on the Reddit Stable Diffusion threads there's always people posting about new models, how they're training them. And so we would just, all day, run through Reddit Stable Diffusion, just try to find little cues here and there to what we could use. And eventually, we finally landed on a few different trained sets that could hold face, some specifically for facial consistency, and then others that were much, much better for background consistency. And so we kind of did trade-offs between what we had in the footage, going which model is best and most ideal for each shot. But yeah, Alex Spirin, God bless him, because he helped a ton.

[00:21:14.803] Kent Bye: I'd come across some of those models as I was reading through the different citations. Hugging Face, I got a lot of different stuff from. But yeah, you maybe continue on with your story in terms of if there's anything else about the workflow that you wanted to say, because we sort of cut in.

[00:21:27.325] Pierre Zandrowicz: Yeah, it's just amazing because when we worked on the second version of the film, like that version, we wanted to do something more consistent, more professional, closer to Undone or to A Scanner Darkly or all these films, and nothing could work. It wasn't great enough. One day, Matt was like, please try that model, let's just try something, because I thought I had the right, because you know, it's so fragile, like if you just uncheck one box, nothing works anymore, so I was so scared to kill everything. So we tried a new model and then suddenly it came out, and it was amazing. So the work wasn't done, but at least we had a cool workflow, and we downloaded everything on my laptop, and it was like, okay, this is it, we won't touch anything but prompts, starting from now. And then we made the film. But it's so hard to get the right thing to work. And also, maybe all the things we have now won't work in two weeks. So it's like, it's really, that film is like a mark in time, like a little parenthesis in time in that technology.

[00:22:33.517] Kent Bye: Yeah, one of the things that I noticed in watching it, so I watched it twice. After watching it a second time, there's something about the film that puts me into a slipstream of almost like associative-linked daydream. There's something about the AI that's constantly modulating and shifting that is constantly perverting my expectations in a way that has that artistic feel, but also almost puts me in a bit of an altered state of consciousness as I'm watching it. So I watched it the first time, and then I got an overall arc of what the story was. But then I wanted to watch it a second time, and I found it actually happened a little bit again as I was watching it. It was like this slipstream as I was watching it. But I think it's also because of the narrative structure is a little bit like a poem. And sometimes when you read a poem, I have to hear a poem multiple times sometimes to really get it. But there is this poem about memory. And the connections between memory and the visuals, I think, are a consistent theme throughout the whole piece. But yeah, I'd love to hear some of your thoughts on creating this piece with this poetic narrative, but also this exploration of memory and this surrealistic, lucid dream feeling that you're cultivating. And as a creator, sometimes it's difficult to know how people will see it for the first time and react. But as you were showing to people, if this was something that you heard from other folks as well.

[00:23:47.865] Matthew Tierney: Yeah, I think in the prototype the voiceover was a bit too present. And it was almost like a layer on top of the imagery. And the more we spoke about it, the more we wanted to just create more subtle layers. Our intention, I think, in the end was to be able to watch it and you only catch maybe the first bit of the voiceover and the last bit, and you can construct the narrative in your own mind. And then, unlike poems, as you get older, as you read it in the morning and then at night, everything about your day influences how you read it. And so if you watch it a second time or a third, you catch different bits and pieces and different parts of the poem stick out to you more. So yeah, that's our intention, hopefully, was to get.

[00:24:37.773] Pierre Zandrowicz: We were really inspired by Terrence Malick's work. And yeah, we wanted to do something where, to be honest, really the goal was to create a memory. And it's funny because even now, we saw the film so many times, and even now sometimes, the other day we were looking at a shot, just to set up the volume. There's a scene where you have a wave crashing on rocks, and we just wanted to make sure the bass works. And I was like, I don't even know where that shot is. Because the whole film is such a weird, and the way it's edited, and so even for us, I can re-watch it over and over, and I can still discover things here and there. So yeah, the thing is, I really wonder if we can still work with this on a future film. Maybe it's too tiring in a way. Yeah, it was like a short, compressed version of a Terrence Malick film in a way, plus adding on top of it a Linklater touch, I guess.

[00:25:37.626] Kent Bye: You know, curious to hear about the ethical issues with some of these models, because sometimes you'll have like the Ghibli model, presumably trained on all the Studio Ghibli films, and you have a lack of transparency when it comes to both Midjourney as well as OpenAI with DALL-E in terms of what the data sets even are. So there's not a lot of transparency. But then talking to Evo Heyning, she was talking about how when she prompts, she wrote a book called PromptCraft. And so she was saying that she avoids prompting of living artists unless she has their consent. And so there's all these emerging ethical ways of approaching this as to what is the training set data? Is it somehow using other artists' data without their consent? And also, just the way that you're prompting, are you invoking other living artists? So I'm just curious how you start to navigate some of these different types of ethical and moral dilemmas of AI when it comes to this generative AI.

[00:26:29.750] Matthew Tierney: Yeah, I would say the stage that we're at now, community-wise, it feels very much like the inception of hip-hop, where people would take their parents' old jazz records or 70s soul, sample it, re-sample it, and then construct something new. And it took a while for the industry to catch up and go, wait, wait, wait, we need to provide royalties for all of these samples. And I think that should happen as soon as possible because I think especially living artists, if you're using their style, I think there should be checkpoints in place where if a model envelops their style and then it gets used, they should absolutely get paid. And then I think there's even a broader ethical question of you'd have to have the artist's consent to be placed into a model. which I know is non-existent right now. Yeah, I think that has to happen. And I think where it gets really, to us, that we talk about now if we want to explore this deeper, is to just add our own images to models, and then go deeper into our own artistic practices to get out exactly what we want. And, yeah, not use any other artists or styles for reference.

[00:27:45.890] Pierre Zandrowicz: So we didn't use Ghibli at the end, we used Ghibli for the first version that never came out. We used a model that was trained on, actually we used two models, two different models that were trained on a huge data set of different images. Not only one artist, you know, it's like a bunch of them. Yeah, and also the fact that we're using our own video, to me, in a way, it's like we're creating something else. Like, it's hard to tell if it's that artist or that artist, and we don't really prompt names of artists, we just use the models. And yeah, I mean, we both agree on this, like, we should have more transparency, more regulation on this and everything, but for now, we're just exploring, and we've self-funded the film, we don't make money out of it, you know, it's like, for us, it was just a huge exploration. Yeah, so we feel good about this for now.

[00:28:32.927] Matthew Tierney: And if you have time today, this afternoon, we're doing a program called Human After All at the World Trade Center Oculus. I think 4.30 we have a group of entertainment lawyers just talking about IP law and AI. And I think, yeah, so I'm doing that with Sam Pressman that worked on In Search of Time with us as a producer. And yeah, over the last six months we've just been having a lot of conversations of we should get people, like-minded people in a room and actually hear what the legal standpoint is on these issues and then also have creators talk and inform the lawyers, have that two-way conversation of this is what should happen and this is how we make it happen.

[00:29:17.788] Kent Bye: Yeah, I know that there's existing laws around fair use and having the transformative use of artwork, but then there's also some of the lawsuits against some of these models from artists who are claiming that the way that the diffusion model works is sort of like a form of compression, where you're able to compress a work and then uncompress it, and if that compression and uncompression is lossless enough, then it's essentially the same as copying. And so that's the argument in favor of more stringent IP protection. And the other side is that Kirby Ferguson has a whole series called Everything Is a Remix, and it's more about creating an archive in this Creative Commons sense, where people are contributing to something that is going to be able to become a repository that people can build from. I hope that we settle upon some sort of model where people are explicitly licensing something similar to Creative Commons that would flag to people that it is okay to use this. And the issue with Creative Commons is often there's attribution obligations, and so there's different licenses in there, even with the Creative Commons. I feel like we're in this new realm where there is this debate, is it a transformation under fair use, or is it a copy in this compression model of what happens in the diffusion? So some of this is at the technical level that is going to be informing how the law is playing out. But yeah, I'd be very curious to see what the other lawyers say. But it feels like it's this debate where I personally want to see this repository that we could capture all of the cultural knowledge of images that humans have created. That's what these models sort of are, and it's kind of fascinating in a lot of ways, that this is like a repository of cultural knowledge. But how are those models, are they bringing harm to people who are not in those models? And there's some studies that people did like searching for CEO, and it ended up being like all white males that were coming up with some of these models. So there's ways that bias is built into these models. So I think there's a lot of those different types of issues that are still being settled, both from the IP angle, but also the other harms that are maybe embedded into these models as well. I don't know if you have any thoughts on that.

[00:31:19.798] Matthew Tierney: I totally agree, absolutely, with that. Yeah.

[00:31:23.805] Pierre Zandrowicz: Yeah, same here. I'm with you on this.

[00:31:27.230] Kent Bye: So what's next for you in exploring the future of this intersection between AI and filmmaking?

[00:31:33.025] Pierre Zandrowicz: So we're thinking of creating another chapter of this film because we want to keep exploring the relationship in a family. So this is, in a way, the point of view of the child, but also the father. You know, it's like two different points of view that are crossing each other in the film. And we now want to explore the point of view of a kid watching their parents. So, we're exploring that idea right now. So, you know, there's something, even when you work with Gen-2, you know, Runway's tool, the imagery is quite different from this, but it's still something where it feels like you're watching memories. Like, it brings me back to Minority Report, where Tom Cruise is watching his memories with his kids, and so there's something there. Or it reminds me also of the Kinect, you know, when you shoot something with the device from Microsoft, where the point clouds are not clear. So that aesthetic is really interesting for us, so we want to keep exploring this through maybe another project we're writing right now with Matt, about photographers or voyagers that are exploring new civilizations on other planets through their brain. So basically they're just traveling with their brain and they're coming back with just like short memories of those civilizations, and this is something we could do with that tool as well. So it would be a mix of real footage again and AI-generated content.

[00:32:59.748] Matthew Tierney: Yeah, and the project's about civilizations. Yeah, I think largely we want to use it as a vehicle to talk about our own. And they'd all be human-like civilizations with similar constructs to planet Earth. But the one stipulation that we have thus far is none of the civilizations explored have developed AI and potentially computing, but we haven't decided that yet. But definitely AI.

[00:33:28.477] Kent Bye: Yeah, I'm curious to hear where you keep track of this industry, because things are evolving so quickly. I know there's, like, Discords. There's Reddits. There's Patreons that you've been able to get information from. There's Twitter. So where are the sources that you go to to keep up to speed as to the latest developments of a field that actually is, like, moving really quite quickly? So we think about, like, the release of ChatGPT back in, like, November of 2022, and here we are just over, like, six or seven months later, and we've had this real explosion of all these generative tools that are out there, and going back to 2022 with Midjourney and Stable Diffusion. We're a little over, like, a year and a few months from a lot of these becoming publicly available out of the betas, but, yeah, it's been quite a lot that is happening very quickly. So where do you go to keep track of the latest news?

[00:34:14.872] Matthew Tierney: I always start with Reddit. Definitely Reddit: Stable Diffusion, Midjourney, DALL-E 2, the OpenAI subreddit, the Singularity subreddit, the ML subreddit. And then there's a really great newsletter called Ben's Bites that I love. Yeah, so I get that email a couple times a week. And he always just really, in a succinct manner, synthesizes broadly what's happening in ML. Then hundreds of Discords. And also in the VR/AR community and just friends of ours in New York, we have WhatsApp messages where if I see something on Reddit or Pierre finds something on Discord or John finds something, anything that's remotely cool, just toss it in and then talk about it, and that's just kind of an all-day thing. And I think it's really, yeah, it's really helpful to have friends from totally different fields just sharing: go and check it out, check it out, check it out. You learn a lot quickly.

[00:35:17.005] Pierre Zandrowicz: I would just add Twitter. There's a lot of things on Twitter. But same, Reddit, Discord. To be honest, it's really hard to follow everything. There's so much going on. What we said is, after we're done with this, we're just going to take a break of two weeks testing things. Because you can read a lot of things, but you don't really know what's working or what's not. And so we have to go through those tutorials and Reddit conversations to explore everything, because there's so many little things you can do to make the workflow better. I don't know, like just slow down shots to render them and then put them back at the right speed. Yeah, so it's not only about the technique, about machine learning and everything, it's also the whole process around it. So yeah, after Tribeca, we're going to go back in the cave and explore the things.

[00:36:09.347] Kent Bye: Well, I know that one of the co-founders of Midjourney was also one of the co-founders of what was Leap Motion, which is David Holz. He's got this whole background in 3D spatial aspects. And so I expect at some point that he's going to be thinking about how to create a Midjourney for 3D objects. And so I'm curious if you've started to look at some of the early work that's out there to prompt full spatialized 3D objects, and if you've thought about how to create a full immersive VR experience using AI, if the technology isn't quite there yet, or if you feel like it's just a matter of a few breakthroughs before we're ready to start having things in a production pipeline. Because I've heard a lot of folks talk about this stuff where it's good for prototypes, but not quite ready for primetime. But I think you've been able to show that you're able to produce something that has a narrative component. So I feel like having a good story may be able to tie things together. But even with the 3D, it still feels like it's early days, but I'm curious if you've done any early explorations of the spatialized component of being able to do generative AI with 3D objects and 3D art and immersive stories.

[00:37:11.082] Pierre Zandrowicz: So I saw online a tool to create a 3D object based on prompts. I think it's something that Google made, but I wasn't really convinced. The look of it is really not good. I'm more interested in NeRF, to do stuff with NeRF and then put them back into video and add a style on it. That to me is more interesting for now.

[00:37:32.039] Kent Bye: Yeah, so that's neural radiance fields. It's essentially like a volumetric capture, but it's using machine learning to be able to give a spatialized experience of something. So there's a number of different NeRF tools to be able to capture stuff. So you're saying to capture the NeRF, but through the NeRF you're able to add a style transfer to kind of modulate it in a way.

[00:37:49.040] Pierre Zandrowicz: Exactly, yeah. So I'm sure that in a year or so, we'll be able to do 3D objects that are going to be great.

[00:37:56.762] Matthew Tierney: And the new Stable Diffusion release. Yeah, one of the new updates, I think it was last week, within Stable Diffusion was for 3D objects. So it's already coming. It's open source. It's gone. Yeah. We'll see in a week where things are.

[00:38:14.267] Kent Bye: Yeah. So that's breaking news. So yeah, just as we start to wrap up, I'm curious what you think the ultimate potential of this intersection of AI and the future of media, film, and spatial and immersive storytelling might be, and what it might be able to enable.

[00:38:32.763] Matthew Tierney: Yeah, I would say, and Pierre and I talk about this all the time, just the true democracy of the medium, where I remember being a kid and having like a DV camera, going out with my friends, shooting stuff. But even then, it was pretty expensive. You had to have a camera, you had to have those DV tapes, and then to edit them, it was always a super tricky process. And I think the younger generation with TikTok, Instagram Stories, they're developing all of these languages and skills naturally with these apps. So they're going, OK, I can learn how to edit. I can stitch together music with it. And I think using AI will just be another little tool that they use to tell either their long-form narratives or short-form narratives. And for us, being able to do this, just the two of us, was remarkable. And we learned, wow, there's a kid in Oklahoma right now that's 12, on the same subreddits that we're on, the same Discords, going, I have an iPhone, or I have my parents' phone, or I have a Samsung, and I can just shoot things, and I can turn it into this complete dream world that I've always wanted to make. And yeah, the render times are long, but I think in a year, that kid's making a 20-minute short that I would love to see. I think that's so awesome.

[00:39:54.495] Pierre Zandrowicz: I think what was amazing is to work with such a small device as an iPhone and being able to shoot my kids. They don't even notice. If I had a big camera, they would. And I can be like, the camera would be that high up and I'll just follow them and the quality is great. It's amazing. But I didn't want to have my kids in a film. Even for them, their own image, I don't want to share their image online, because the film might be online at some point. So it was a great way to make them anonymous also. And I really love the mix between animation and documentary, in a way. Like it was so natural, the way they're just living. And I think it's so hard to get that in an animation film, and with Stable Diffusion and all those tools you can. This is also why it feels like it's an experience. It's because it's life. Like what we're showing is life. I didn't ask anything of them. I was just filming them. And then you add this dreamy world on top of this, and then it creates something magical.

[00:40:57.758] Kent Bye: Awesome. Is there anything else that's left unsaid that you'd like to say to the broader immersive community?

[00:41:03.113] Matthew Tierney: Let's keep going wild and building this up because it's super fun.

[00:41:07.096] Pierre Zandrowicz: Yeah, the only thing I would add is, so first of all, thank you for the time. And it's really interesting to have this conversation with you. I think we should address this more, like, you know, this is what you were saying earlier. And I feel like, you know, when COVID hit, we had a broad conversation about science. Like I've learned what the vaccine was. I've learned what the virus was. And, you know, obviously we had more time to... But also, I discovered so many things about science, and I feel like we should do exactly the same with AI right now. We all should read and learn what AI is and how it works. Because for now, it really looks like a black box where it's just magical. You type something and you press enter and then you get answers or images. It might even be something that we should teach to kids in class. I don't know where it's heading, you know, I don't really know where my kids are going to live, like the world that's out there. Yeah, so this is the only thing I'm going to try.

[00:42:04.747] Kent Bye: Awesome. Well, super fascinating intersection of artificial intelligence and film and also the future of immersive storytelling. And I think it's certainly a hot topic that I'm covering a lot more. And yeah, excited to see where it all goes and also play around with some of these tools and share some of the models that you worked here on this project and have other people start to play around and see what kind of workflows they can generate. Because like you said, there's so many different models, so many different opportunities to innovate what's possible with this intersection. Yeah, thanks for making the piece and taking the time to help break it all down.

[00:42:36.364] Pierre Zandrowicz: So thank you. Thank you. Yeah, that was fun. Thank you. Thank you so much.

[00:42:40.606] Kent Bye: So that was Pierre Zandrowicz. He's the co-director of In Search of Time and also one of the co-founders of Atlas V, as well as Matt Tierney. He's a co-director of In Search of Time and is coming from the figurative painting world, film, sound design, art, and is a full-time artist. So I have a number of different takeaways about this interview. First of all, this is a piece that is very poetic, and I had to watch it a couple times just to be able to catch everything, just because it did put me into a bit of this altered state of consciousness, because there's something about the predictive coding theory of neuroscience where you're trying to expect the next thing that's coming. And there's something about the generative AI that is interesting and novel and consistently subverting your expectations. And so you end up taking on a lot of cognitive load just trying to understand all the visual information that you're receiving from these generative AI entities. And you don't always have mental models to understand or describe all the little nuances of it. The closest analog I can think of that I've seen is the movie Waking Life, which is exploring this technique of these animations. But this is also, on top of that, very much a poetic piece where they're doing these deep reflections on the nature of memory and childhood memories as well. So it was just super fascinating to hear where generative AI is going to start to play into the whole pipeline and ecosystem of storytelling, whether it's in 2D film or in immersive storytelling. And it actually happens to be a big contentious issue in the context of these negotiations when it comes to both the Writers Guild of America, as well as the Screen Actors Guild, because artificial intelligence has the potential to completely transform all these different aspects of the pipeline of producing these creative media. 
And I think the core of it, for me at least, comes down to labor: whose labor is being included in these models and what labor is being displaced is a part of a broader discussion that's happening in the context of the ethics of all this stuff. And I think that both Pierre and Matt are using these different models in the context of using their own footage, and they're more adding it as a style that they're applying to it. But as we move forward, we're trying to negotiate all the different ethical boundaries of technologies like this. On the one hand, it does unleash all these different creative potentials. But on the other hand, there may be some of these models that are acquiring different aspects from the training that they don't have the full rights to digest and transfer all these different styles and whatnot. So yeah, we'll be diving into much more of these different issues. I did a whole couple of dozen interviews over the last four months from NewImages, Laval Virtual, and Augmented World Expo, and talking to different folks at Tribeca. ONX Studio had a number of different AI pieces, and I did some discussions with folks there who are working with this technology. So it's certainly an area that's a hot, hot topic, both the creative possibilities of what's made possible, as well as the constraints and limitations and ethical considerations to be taken into account. So it's a much, much broader discussion that's happening at many different levels right now, but I just wanted to elaborate on that a little bit. And I think from the creative side there's certainly a lot of untapped potential that they're starting to dig into. It's certainly something that Evo Heyning calls prompt craft. And so there's a bit of an alchemical or magical component where you don't always rationally know exactly what's going to happen. 
You have to experiment a lot with trying stuff out and there's a lot of trial and error and you just have to see if it works or not, and there's a little bit of mystery where you don't fully know what's the right combination of words or how it's actually interacting and interpreting all these different aspects of language. So it's a bit like interacting with an alien intelligence in some ways, trying to coax it and persuade it and use the right combinations of words to be able to get what you want. And that's why Evo Heyning calls it prompt craft, because it's like an alchemical, magical spell casting in some ways of trying to get what you want through the use of language. And incidentally, I have an interview with Evo that I hope to dig into once I start to dive into the next series on the intersection between artificial intelligence, machine learning, and immersive media, virtual reality, and whatnot. But yeah, super exciting to hear the very beginning phases of this intersection. I mean, it's been happening for a number of years, but there's a maturation of these different tools where there's both open source tools, as well as these commercial products, that are able to generate some of this imagery. They were using ControlNet to have a little bit more input as to the overall architecture of the scenes, and from there being able to overlay all sorts of these different style transfers and modulate and give a certain amount of anonymity, because these are Pierre's children, and he's been using footage from an iPhone and creating these more cinematic aspects of it and then being able to use that as the overall baseline. But still, at that point, when you use any of these different generative AI tools, it can take that and widely vary from it, and so how to tune it and get it so it's consistent, yeah, there's a lot of pipeline that they had to figure out to get it to that point. So yeah, lots of new tools and lots of new action that's happening each and every day. 
And as we go from 2D into 3D, I'm sure that it's going to be a thing that we're going to see a lot more of in the context of virtual reality. I know there's a couple of pieces at Venice this year that are also going to be using different techniques of artificial intelligence. And I'll look forward to seeing those experiences and talking to those creators as well. So, that's all I have for today, and I just wanted to thank you for listening to the Voices of VR podcast, and if you enjoyed the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, and so I do rely upon donations from people like yourself in order to continue to bring you this coverage. So you can become a member and donate today at patreon.com slash voicesofvr. Thanks for listening.