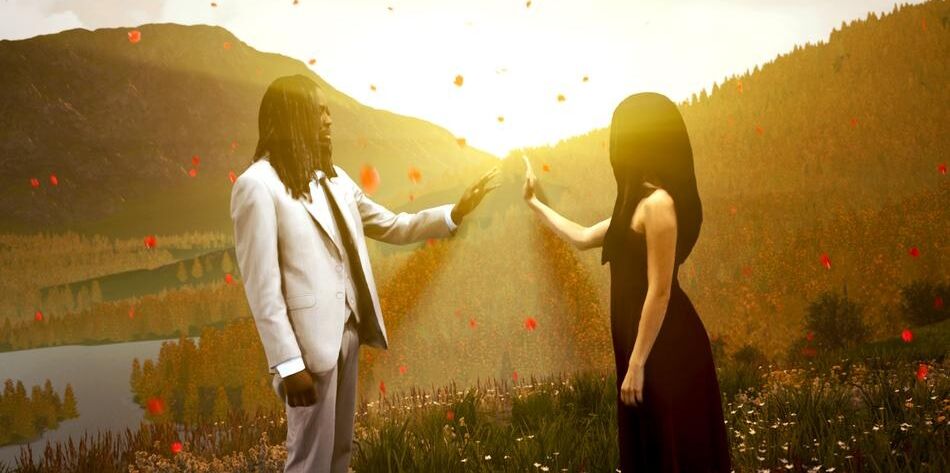

Departure Lounge is a volumetric capture studio based in Vancouver, Canada that is collaborating with the Monstercat music label to experiment with XR technologies, making both 2D music videos and more volumetric immersive experiences like the one they were showing at SXSW. The 2D music video for WHIPPED CREAM, Jasiah & Crimson Child – The Dark debuted on February 1st, 2023, and a more immersive and interactive translation of this video debuted at SXSW this year.

It was only after I had watched the 2D video when I got home from SXSW that I fully realized why they made some of the experiential design choices in the immersive version that they did. As an example, there is a stream of rose petals that you’re instructed to follow in the VR translation, but at one point the volumetrically captured performances start showing up behind you, and it ends up being a better experience if you ignore the rose petals. It was only after watching the 2D music video that I realized that they had created those petals more as background context for the 2D video than as something optimized for what would make the most sense in the immersive experience. Also, the more interactive experience tries to strike a delicate balance between giving users the agency to move around wherever they’d like and guiding attention towards the pre-recorded performances that show up in specific locations. In many ways, the music video is able to guide attention more deliberately through cutting, in a way that was more emotionally evocative than my immersive experience, where I had to navigate being slightly confused, lost, or feeling like I had missed some important story beats that would have helped to contextualize what was happening. The affordances of film cutting allowed for some more robust and deliberate worldbuilding that I had missed within the immersive translation.

So overall, this piece is an experiment in user agency, environmental exploration, and how to seamlessly tie in some of these volumetric assets into a more embodied experience. In some ways, using this type of volumetric capture is a bit overkill for a 2D video production, especially as sometimes the fidelity or quality of those 3D captures projected into a 2D space have even lower resolution and fidelity than what a dedicated 2D-optimized capture pipeline would produce. But the upside is that you have an even more powerful volumetric experience of these performances within the virtual context. So there are a number of tradeoffs between embodiment, agency, emotional valency from more deliberate direction, as well as how to handle the user choices.

For the most part there were not very many interesting things to explore in this experience outside of the performances, but I also experienced the immersive version before fully understanding that there was a 2D version that had already been published. There were some behind-the-scenes types of objects laid out in the house before the music video began, and I had little to no context for what those objects were or why I should be interested in looking at them. Again, it wasn’t until I saw the 2D music video that I realized why they had constructed it in the way that they had. Also, some of the decisions made in the immersive version of the experience made a lot more sense after seeing how they were reusing some of the assets. It’s actually a really interesting use case for exploring the affordances of the film medium of the music video versus the immersive version, and how one medium or the other was prioritized in how it was all put together.

I was able to break down the creative design of this piece with Brenda Medina (Virtual Producer at Departure Lounge), Yie Hua Chee (Art Director at Departure Lounge), and Will Selviz (immersive director and Partner at RENDRD Media).

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality

Rough Transcript

[00:00:05.412] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye and welcome to the Voices of VR podcast. It's a podcast that looks at the future of spatial computing and the structures of immersive storytelling. You can support the podcast at patreon.com slash Voices of VR. So continuing on my 24 episode series of different experiences at South by Southwest, today's experience is with a piece called Whipped Cream: The Dark. So this is an immersive interactive music video. There's a number of different music video type of experiences this year at South by Southwest, and I was talking to Blake Kammerdiener about just how South by Southwest itself is an intersection between technology, film and media, storytelling and immersive storytelling, as well as music. And so you have this confluence of all these different things all coming together. And so because of that intersection, there was a lot of the selection this year that had a music-specific focus. And so this piece was an immersive music video that was based upon Unreal Engine, and they have these volumetric scans that were captured at Departure Lounge, which is based in Vancouver, Canada. It was more of a thing where you kind of walk around this piece and are able to navigate and have some agency, but at the same time there's a story that's unfolding in terms of this song that was made by Whipped Cream, aka Caroline Cecil, in collaboration with Jasiah and Crimson Child, who were also featured in this piece. And I had a chance to talk to a number of the different producers, art director, and directors of this piece to be able to break down their process to both capture these performances but then also put them in an Unreal Engine interactive game environment and try to figure out what are the dimensions of this kind of interactive music video as a new genre, and how do you balance this degree of location and agency and being able to direct attention, but also have this kind of spatialized audio experience of this music video by Whipped Cream called The Dark. So that's what we're covering on today's episode of The Voices of VR Podcast. So this interview with Brenda, Hua, and Will happened on Tuesday, March 14th, 2023. So with that, let's go ahead and dive right in.

[00:02:08.409] Brenda Medina: My name is Brenda Medina. I work at Departure Lounge based in Vancouver, BC. I'm a virtual producer and I was a producer for this music video, immersive music video experience we brought to South by Southwest. And yeah, I get to work with absolutely everyone involved in this team from beginning to end.

[00:02:27.679] Yie Hua Chee: Hi, I'm Hua and at Departure Lounge I'm the art director. I'm involved in pre-production, production and post-production. Well, yeah, I work with everyone involved as well for this music video, yeah.

[00:02:41.551] Will Selviz: Hi, my name is Will Selviz. I'm an immersive director and I work as an independent filmmaker, but I'm also the founder of RENDRD Media. I had the pleasure and honor of working with Departure Lounge and Monstercat directing The Dark here at South by Southwest.

[00:02:56.010] Kent Bye: Awesome. And maybe each of you could give a bit more context as to your background and your journey into doing this type of work.

[00:03:02.133] Brenda Medina: For sure. My background is I started, I'm a product designer, always interested more in the digital development of design. I worked a lot with creative work for advertising, a lot of film for this kind of thing, short documentaries, but also involved in developing products. So I just did my master's in digital media, along with my art director, and we created all types of digital products, where then we created an R&D with Departure Lounge about volumetric capture, how we can bring traditional formats like theater and film into these new immersive kinds of experiences. And that's how this relationship started working, bringing my experience, what I have in film production, managing stages, managing talent, and then involving the creative pipeline of using game engine work and other kinds of stuff.

[00:03:49.873] Yie Hua Chee: Yeah, I had a background in advertising and design so I graduated focusing on design and strategy and later on I worked as a UI UX designer and I joined the same master program as Brenda actually and that's where I learned a lot of 3D and Unreal Engine. I used to be a motion designer during my advertising days as well so my understanding of camera work and filming kind of just translated into using Unreal Engine as a film, a visual tool.

[00:04:22.329] Will Selviz: I'm a design technologist, so I actually started trained in traditional arts like drawing and painting and then transitioned to AR and 3D about 12 years ago. Somehow I ended up doing computer science at some point in my life, didn't like it, switched to OCAD University where they have a program called Digital Futures, very similar to the one at CDM, and I started to work with XR in a context of entrepreneurship and social causes. And eventually I just decided to do that full-time in the pandemic. I graduated into the pandemic and everything went remote. So now we're here.

[00:04:56.449] Kent Bye: Yeah, maybe we should start with the volumetric capture studio because that seems like that's the heart of the start of capturing the performances that then lead to the other aspects. So maybe you could give a bit more context to this new volumetric capture studio in Vancouver, BC.

[00:05:10.254] Brenda Medina: Yeah, so for all the immersive kind of experience, what we're offering is this 18-foot volumetric capture stage. It has over 106 volume cameras, so you can capture a person, a performer, from absolutely every angle. So we do 360 kind of shots. And then we process all this data to have one asset which is a hologram, a fully 3D hologram. Right now it's the most high-resolution kind of real person you can have in any kind of VR format. And that's what we use for then bringing it into Unreal, creating the environment around it, the animations, the edits, everything you want. So having this stage really opens the opportunities of how we can have real human performance in all kinds of VR digital outcomes and designs.

[00:05:53.414] Kent Bye: So I know a challenge that happens a lot of times with this type of volumetric capture is that it's very data heavy. So maybe talk about, like, is there an optimization process that happens after that? And then if that's part of what you're doing with the compositing. And yeah, just sort of give a bit more context of what has to happen after you've captured everything. You have dozens and dozens of cameras that then have to be synthesized in some way, but then in a format that could actually be used within a real-time engine.

[00:06:16.671] Brenda Medina: Yeah, so I can give you a premise and Yie Hua can go further into it, but basically there's many optimizations we're doing as we speak, right? So one is, like you said, in the processing of data, how we have our servers set up, so this can be shorter in terms of time. I can give you a very quick example. Let's say just one minute of capture, it's like one terabyte of processing. So we have a very well set up server from the cloud all the way to local to make it faster. So we can have these volumetric assets, we call them assets, and they can be, if I go more technically, OBJ, MP4s; they become a mesh that is then introduced into the game engine systems. So then we play inside the engine: how do you affect lighting and placement, and how do we use them for the final thing. And as well, we're still playing with how we can later rig these kinds of assets, how we can improve the mesh even more, you know, the texture and the definition, in what kind of format, how we process it, and then how we put it inside again, whether it's Unity or Unreal. So that is a constant optimization work we're doing as a studio, at the same time as we're still using and creating projects with different collaborators and clients.

[00:07:27.006] Yie Hua Chee: Yeah, just to follow up on what Brenda was saying, so after we have the asset processed, I mean we would love to work with like 4K and above textures for every project, but for pre-visualization we optimize, making the load lighter by using 2K textures. And usually it's imported, I mean it is imported to Unreal through the SVF Actor Blueprint, and we are using MP4 for previs especially, when you just need to see something in engine and we have to minimize lag as much as possible to lock down what our cinematics are gonna look like.

[00:08:04.053] Kent Bye: And I guess when it comes to directing, I guess there's people usually think of directing as like when you're shooting something live, you're directing the talent. But then there's another dimension of directing, which is like once you're in this immersive virtual space and then you're able to either create objects that are directing attention, that's a little bit less like control of what you're selecting. And so maybe you could talk about that dual aspect of directing the capture process, but also directing the other aspects of this piece.

[00:08:30.962] Will Selviz: Yeah, absolutely. I mean, there's so much to unpack in that, but I think most importantly is being able to work in real-time rendering. Again, I come from a pipeline of VFX where you don't have that privilege of just seeing everything immediately in front of you. But again, I was brought into the shoot maybe three or five days before it started, so I had very little time to see what it was going to look like, but also the day of the shoot. Using AI-generated images just to tell the cast what the environment was going to look like based on my art direction or what I had in mind, that also Whipped Cream had in mind, was very helpful, but also having a cast that was familiar with the technology already and knew what the limitations were, what kind of fabric they couldn't even wear, how they had to tie up their hair, and things like that, that only comes with experience. But for me, it was a lot of, at the end of the day, you're still in a green screen room or a volume, and you don't have much context that you can give the artists until you're done shooting. So I think it was a really harmonious connection of bringing in the storyboarding, things like practical lighting that we could do. Again, the studio has so many options that you could light it up however you want so that the character is well combined or well embedded with the Unreal Engine scene. So again, it's like an orchestra. So it's different parts that you try to pull and push, and a lot of compromises creatively and technically that you have to make, and optimization as well. But overall, I'm super happy with how this turned out.

[00:09:53.138] Kent Bye: You mentioned that you did some AI generation tools, something like DALL-E or Stable Diffusion or Midjourney. Is that what you mean, that you would give a prompt and then have an idea of what you wanted and then use that as a way of giving indication of what you had in mind for what kind of worlds you wanted to create?

[00:10:08.075] Will Selviz: Yeah, absolutely. I mean, Caroline already had a mood board, uh, Whipped Cream. She had a mood board that she shared with us and I took that and evolved it into other AI-generated images that kind of expanded on hers. Again, using like Miro and Figma and all those tools to sort of create like a larger mind map of what this might look like. And then a month, two months, three months later, something else happened. And again, with the help of Hua as well, like developing that further. Yeah. AI helped a lot and it came at a really perfect time as well.

[00:10:34.575] Kent Bye: So you were doing some of that as well? Yeah, maybe mention what you were doing in that workflow.

[00:10:39.998] Yie Hua Chee: Yeah, so based on the mood boards from Will and Caroline, we started with a storyboard to determine what we would put on the shot list. So the storyboard was born from what Will mentioned, like the Figma, the whole flow of the entire song, because there was a narrative inside as well. So we wanted to make sure that that was communicated cohesively, tied in together with the visuals, responding well with the music. So I think storyboarding did help to get the shot list, and then once we had that on the shoot we were able to discern like what works, what's not working, what's like out of character for the artist and how we can work around that. That also kind of gave us an idea and some insight into, well, okay, the artist is walking straight, let's say, and we had a curved road in mind. How do we mitigate that? And so some creative decisions were also made on the fly on the shoot day, but it was helpful to have visual references on hand that we could just pull out and also give the artist some visual direction so that they understand the world that they will be placed in, where to look, what to respond to at certain times. Yeah, it was a great learning experience, an experimental thing.

[00:11:55.418] Kent Bye: Yeah, I'd love to hear a bit more context as to how the partnership with Monstercat and Whipped Cream came about.

[00:12:01.279] Brenda Medina: Yeah, so this project in particular was a fun evolution, a very natural evolution. It happened with the people at DGBC that know the Monstercat music label, always finding how to collaborate. Monstercat is really interested in playing with tech in terms of the music industry. So they came up with this idea of working with us at Departure Lounge and seeing what we come up with. So we started with the Vancouver International Film Festival, which has a program called Signals. It's more for the interactive part of film, and we created a teaser of the music video which just gave this little vision of what Caroline and all of us collaborating would become. We actually created a bit more of an arts installation, playing with different layers of, you know, screen and LED, just to show around, and it worked really well. So we decided to take it to the next step, which is to create the full 2D music video with virtual production, with the holograms we already captured. And we created that always thinking with Adam Rogers, it was his vision of let's take it to the next level, make it immersive. So we created the 2D music video already optimized to then become fully VR immersion, and then taking this storytelling and not just watching it on a screen, being part of it, being part of the artist's vision. And I think it's a new way for audiences to connect with musicians and other types of artists.

[00:13:16.288] Kent Bye: Yeah, I'm wondering if you can maybe take us through like a pitch or a run-through of the journey that we're taking on because, you know, we're kind of starting in a house, we're going outside, we're seeing these roses that are flying around, we see some like musical vignettes that are popping in and out, but like how do you kind of describe the beginning, middle, and end of the musical journey that we are taking on?

[00:13:34.695] Will Selviz: Yeah, absolutely. I mean, like, the song itself has an inherent structure to it, you know, with building up and then having, yeah, that storyline to follow already as a musical experience. But we took elements from the music video, like the cabin where a lot of the action happens, and we wanted to turn it into a lobby where, like, as you mentioned, there are, like, mementos that also reference Caroline's new EP that was just released. You know, a bit of a teaser of also, like, a step into her life. So using the medium in a way that's more inherent to, like, yeah, just giving more context about the artists and their lives. So again, you start at a cabin, then you move on to where the actual music video started, which is a piano, a beautiful piano in the middle of a prairie forest, very reminiscent of the Pacific Northwest and where we're located in Vancouver. So if you've never been to Vancouver, it's a good place to be immersed in a place that, you know, looks like it's out of Lord of the Rings or something like that. And then you are teleported back into the cabin, but this time at night. And it's a totally different scene. Like you're kind of hinting at something that's going to go down with like lightning, with the weather change, all these different things. And again, with how beautiful Unreal Engine 5 is, you can really sell that change of lighting and whatnot. So then along the whole experience, you're following petals, which again, have a very symbolic meaning to the song and everything in the branding and everything in the meaning, and you're kind of guided through this experience. But we've seen people wander off in the forest. We've seen someone break the game today and reach all the way to the lake, which is a very, very rare occasion that never happens. But it's exciting to see someone actually, like people just, like, be curious. Yeah, like, wander off. It's really the meaning behind the piece.
So yeah, I don't want to spoil the whole thing, because I want people to try it still, but the whole idea is to really take people through her life, but also a toxic relationship at the end of the day, like push and pull, and you don't really know who the master of puppets is in this case, if it's Caroline, if it's Jasiah, who's, by the way, shout out to the artists, it also involved Jasiah and Crimson Child. Jasiah's an opera singer, and Crimson Child is also the producer of the song. But yeah, overall like adapting this in a way that feels like it does justice to VR and it's not just, you know, a 360 video playing back or anything like that, I don't know, I have nothing against that, but we also wanted to push the narrative in that direction more.

[00:15:58.200] Kent Bye: Yeah, part of my experience of seeing this piece was that it's sort of like this interesting open world dynamic, but it also has specific locations where performances are popping up, and there's kind of a wayfinding mechanism of the roses that are going around. And I found myself at the beginning like, okay, I'm gonna trust and follow the roses, and then there would be things that would pop up on that path, which was really nice actually, to kind of have this agency and freedom to roam around and have things kind of be dropped in. But then there were other times where the roses were kind of going off in one direction, but the action seemed to be happening behind me. So then I was like, okay, am I supposed to continue to follow these roses? They're kind of looping around. And so I didn't know, if I would have followed, if there'd be more things popping in. And so I felt a little bit of confusion as to, like, okay, should I continue to follow the roses or should I just pay attention to the performances? And I found myself kind of going back to the performances. So what was really neat was when I was walking through and just kind of choosing where I went, and I felt like, oh, I'm just running into these things that just happened to be dropping right at the right moment. But if it's triggered by the music, then it's timed. And then not everybody's going to have that experience. And there's going to be this thing. So it feels like there's these different trade-offs and balances of giving the user agency to roam around versus having the most directorial, curated, best position to see each of these things. So I'd love to hear about that dynamic of how you were balancing those different things.

[00:17:20.041] Brenda Medina: Well, Will mentioned it correctly, we're trying to push boundaries. So we're even testing our own boundaries of, you know, like how the users will respond to every creation we're doing. Like you said, in VR you have much more agency, you have much more visual freedom. So we wanted to play around with this, like how much freedom do we give them while at the same time you're following a story, you're following a song. So that's how this visual connection of the petals will take you through. We didn't want to overload you with information about the onboarding, what you're seeing. We always think proper design is for the user to just inherently know where to go, what to see. So that's the kind of thing we want to refine as much as possible so you can truly get the storyboarding, plus the storytelling, plus as well having that freedom. So that's how we play with the rose petals as a connection, not just of the music video, but the album that Whipped Cream is releasing now. So it's a visual connection of the concept and also the volumetric captures, you know, the assets of performers. How do you follow them? How do you know which one to see first? So that's what we've been playing with. We even thought about, what if we have ghost versions? But we were like, that might confuse you. You need to follow the storyline. So those are the things we were playing around with visually. We're learning a lot right now, seeing so many people playing our video, and how they work around it, just like you explained with your own experience. And we're learning a lot about how we can refine the UI and UX even more every time we're playing with VR. Especially with music, you're following a specific sync of sound, of audio, so we're realizing what works, what doesn't, what we want to change, what we want to keep exploring and break it and then find a new way to make it.
So that's the kind of things we want to keep playing with Whipped Cream and other musicians and just find this way to bring music videos into the next level. Like you said, not just make it 180, not just 360, how can we really create a new format to show music.

[00:19:17.747] Yie Hua Chee: Yeah, it was a really tight balance, I think, between letting the user experience what we had created in the 2D music video and also letting them roam around as if they were Caroline or Jasiah or Crimson Child in an open world. Ultimately, what we decided to stick to was a maybe 70% viewing experience with instances where we were like, okay, this is a good opportunity to let the audience or the player kind of feel like they're in Caroline's shoes doing what she's doing or being part of Jasiah touching petals as he's touching petals. So if you were to watch it just as a passive viewer you would understand the story anyway. If you walked around and took a look at things you would also understand the story, and I think that was a nice middle ground that we collectively decided on. We also would love to refine further, I think, as we continue to work on these kinds of VR music experiences, what we can do to guide the player without as many visual cues. So like, let's say, what if we have a project that doesn't have any trails or paths? And that's where I think it was really important to, oh yeah, shout out to Vaudeville. We worked with them for the music and so the asset that they provided us was an ambisonic sphere for both parts of the song, excluding the parts that we were excluding. The main part of the song was an ambisonic sphere, so you would be able to detect like the true north of where the music is coming from, and that's kind of like the subtle behavioral cues, yeah, that you would be kind of like, oh, coming from there, I'll go there. Yeah.

[00:21:06.843] Kent Bye: So they're actually mixing an ambisonic mix of the song?

[00:21:10.224] Yie Hua Chee: Yes.

[00:21:11.403] Kent Bye: That's cool. That's interesting to see that as I was in there I was looking at the visual aspect and didn't notice the ambisonic spatialized mix, but that's great to hear. And yeah, I'd love to hear from your perspective as the director, as you're balancing trying to guide attention, but also realizing that people are going to be roaming around and looking at whatever they want.

[00:21:31.147] Will Selviz: Yeah, I mean it's the art of push and pull, and I think with the expertise of Departure Lounge that Hua and Brenda brought into this project, as well as the bigger team, it comes down to trying and failing in other projects to then realize what these cues are that will get people's attention. So having, for example, like the petals, which again we mentioned quite a lot, but once you experience it you'll see that it's definitely bringing people on the right path, but also allowing people to just wander off is a new concept. Like, there isn't really something, you can't Google like how to make an experience like this and get a result, I guess, one straight answer. You can even ask ChatGPT and it'll probably give you like a variety of answers, but like to get those two things together, it takes like a whole team of people who are amazing at sound production, amazing at, you know, the fact that we could tell Caroline, hey, we need music for the lobby, we need music, we need cues that bring people here and there, and she's an amazing producer and we can get that pretty much, you know, the next day or a few days later. It's incredible. It's not something that I've seen before, and it takes really like different levels of expertise. So for me, again, coming from a background of AR and VR and VFX, it was really, you know, getting the first build and looking at it and trying it like three, four different times and saying, hey, like, am I wandering too much? Am I doing this? But then getting this last build that I see at South by, and I was also taken aback by how much was added, like the level of detail, because realistically there wasn't too much time to really put these things together. Like a lot of people on this floor right now probably came down to the last minute, the last second. We all know how XR and VR things work.
So at the end of the day, it was a good, again, I'll keep calling it a harmony or an orchestra of different parts that you push and pull, but yeah, like I said, I'm excited to keep iterating on it, keep building on it, and see where it goes. But where we brought it today, I'm also really proud of. Yeah.

[00:23:25.609] Kent Bye: Awesome. And finally, what do you each think is the ultimate potential of virtual reality and the future of music, and what it might be able to enable?

[00:23:35.642] Brenda Medina: Well, I think I already got ahead on that question, saying that I think VR can help not just to enhance what we already can see or feel or listen to in music, it's to create a new format. How do we interact with the musicians, how we interact with their work, how, you know, like Hua said, I think there's so much potential in sound design, especially for immersive. I've been going around in talks about how the spatialization of audio has so much potential. It's like maybe some 10-70% of what you're seeing here. So definitely playing more with sound design for immersiveness can give us much more of a cue of how to follow things, how to be more intuitive about what we want to see or feel. You know how you sometimes feel the beats when you're like in a live concert? How can I feel that when maybe I'm in a VR format of something with music? So I would really like to explore more how we connect all these elements that we are optimizing and improving and testing, but now together into one blended thing, and see where we can take it. So I would definitely love that, and then take it to different audiences, you know, like also very young audiences, senior audiences, how we can play more with sound and VR for many different things.

[00:24:42.827] Yie Hua Chee: Yeah, 100%. I think there's a lot of opportunity for extended sensory experiences because when you listen to music sometimes it just pulls you, it calls your soul and you are so connected to the music and now there's so many examples of you know, how music is visualized. And I think with VR experiences like there's even opportunity for haptics like that immerses you so much more. And, you know, if you want to connect to not just music, but like your performer, your idol, it's all there for you to experience.

[00:25:19.581] Will Selviz: Yeah, I absolutely agree. I think haptics and even smell is something that I've had the pleasure of experiencing here at South By, and devices are coming out where maybe the change of the environment also dictates the change of the smell, different things like that. I personally found that synesthesia is kind of where it's at in terms of making memorable experiences for those, of course, that are able to experience all the senses, but at the same time leaning on two ways to bend that so that it's not just visual, right? And there is this sort of push and pull of are we trying to recreate the real world or are we trying to treat it like, you know, treating the medium for the message that's inherently for that medium, right? So I think VR has a lot to offer. I think there's also a lot of potential in leaning into aesthetics and ways of using volumetric capture that make a lot of sense for the environment as well. And yeah, I'm mostly excited about that. And all the headsets that are coming out are a testament to, you know, how much we can push this from the same product that we're currently doing right now.

[00:26:25.211] Kent Bye: Yeah, well, definitely there's a lot of music projects that are here at South By having different takes on things, and I personally think that there's a lot of things to push forward, especially with spatialized sound, with the future of music visualization, and how to create these immersive experiences where people really feel engaged with the music. And I think, you know, you're experimenting with this tension between that agency and the music, and I think it's an interesting intersection. I feel like there's still a lot more to explore and really get what that is, especially agency that is actually changing the music, which is in pieces that I've seen before, where the audience gets to participate in changing the song. So that's like a whole other new realm of music composition with the future of music in this more generative, decentralized aspect. So anyway, it's a lot of really interesting areas that I think you're starting to push forward. And yeah, thanks again for sitting down and helping break down your process, and I'm excited to see where you all take it in the future. So thank you.

[00:27:19.452] Brenda Medina: Yeah, thank you. And it was great talking to you, Kent.

[00:27:22.294] Yie Hua Chee: Thank you so much. It was so nice sharing and listening to our experiences.

[00:27:27.575] Will Selviz: Thank you, and I'm honored to be a part of this. Yeah.

[00:27:31.676] Kent Bye: So that was Brenda Medina, a virtual producer at Departure Lounge, as well as Yie Hua Chee, who's an art director at Departure Lounge, as well as Will Selviz, who's an immersive director and the founder of Render Media, and the co-director of The Dark, along with WHIPPED CREAM, who was also the other co-director, Caroline Cecil, and also featuring the talent of Jasiah and Crimson Child. So I have a number of takeaways about this interview. First of all, I found this was basically like an open world exploration of a music video, and they have these petals that are guiding your attention, and so you're kind of moving around through this space, and there were moments when I was going along the guided path and right at the right moment there was a volumetric person that was dropped right in my field of view. But there's other moments where I didn't know like, oh, am I supposed to continue to follow this pathway? Because the attention then was all of a sudden behind me, where the performances were happening. And so I just guided my attention based upon where the performances were happening rather than this mechanism of the petals. And so there's like an underlying tension here between agency, interactivity, locomotion, as you're moving through a space, and directing and guiding attention towards where the main action is. And so it's kind of an interesting fusion because, you know, as you go through immersive experiences like VRChat, you have different modes of locomotion. Some people like smooth locomotion, some people like teleportation. So this was only one locomotion option where you're moving through in a smooth locomotion, and with this tension between guiding attention and this freedom of movement, there weren't as many interesting things to explore through the freedom of my movement. And so I found myself just wandering and tracking what was happening in the context of these performances.
And so I felt like at a certain point, my agency was not as important as just following the musical story that was happening in these performances. And so there's a little bit of confusion there of that tension, and I think guiding and directing attention is going to be a key aspect, and maybe I would suggest taking away some of the different extra exploratory aspects of the guidance if at some point you don't need to be following the petals anymore. But there's also other sound design elements of this ambisonic mix, where the direction the sound was coming from was subtly directing my attention into more of a sound field that was happening in the context of this space. So yeah, this tension between exploration and guiding attention, I think it's going to be kind of a mixed bag depending on someone's experience. But yeah, I'd say it's a little bit more higher level, for people who are already very comfortable with moving around in virtual spaces. And what are the ways that you can more dynamically place these performances that are not in such a fixed location, but can be more responsive to where I move so that I can feel like I'm not missing anything by exploring, but to still have things kind of move around. I don't know from a performance perspective that they're able to do that, but that's what I would want to see: more of this kind of magical aspect where, as I'm moving and deciding where to go, this thing just magically appears in front of me, and I can continue to move around, and regardless of where I move they can continue to have these different cut scenes there. So that's what I would like to see as the next iteration of this.
It felt like a little bit of an early prototype demo, like a very first cut. And it's still this tension of this video game element of exploration with music video that I feel like has a number of other iterations to really find the sweet spot for what makes it truly unique in terms of this combination of open world exploration with this type of performative aspect, because you want to have this degree of discovery and exploration, but that's trading off from what's already happening at these other locations that are kind of locked into place and are not encouraging exploration. And so you have this tension between exploration and not exploration that I felt in this piece that is kind of ripe for future exploration. And also I'm just really curious to hear how they're using AI as a process of doing these mood boards and exploration of what the look and feel of different scenes are, and to start to integrate that more seamlessly. As we go on to many, many other iterations, I'm sure that it'll be even more integrated into the art direction process of folks being able to speak what they want and to translate what is given as a thing that's being translated or even directly created into these immersive experiences. The thing always is how to optimize it. And with generative AI, is it actually infringing on any different intellectual property rights and everything else like that? So I think the training sets of some of those things as we move forward is also a thing to look for. The lawsuit from Getty Images against these different platforms is a thing to look out for, for how that continues to develop. But I'm starting to hear directly from artists how these generative AI tools are starting to be more seamlessly integrated into their creative processes. So really interesting to hear how that broke down in this specific case.
So, that's all I have for today, and I just wanted to thank you for listening to the Voices of VR podcast, and if you enjoy the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, and I do rely upon donations from people like yourself in order to continue to bring you this coverage. So you can become a member and donate today at patreon.com/voicesofvr. Thanks for listening.