I interviewed Victorine van Alphen about The Oracle: Ritual for the Future on Wednesday, November 19, 2025 at IDFA DocLab in Amsterdam, Netherlands.

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality

Rough Transcript

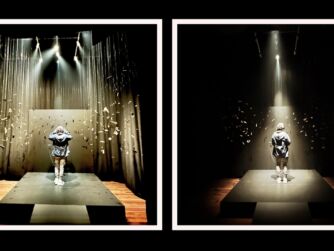

[00:00:05.458] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR Podcast. It's a podcast that looks at the structures and forms of immersive storytelling and the future of spatial computing. You can support the podcast at patreon.com/voicesofvr. So continuing my series from IDFA DocLab 2025, today's episode is with a piece by Victorine van Alphen called The Oracle: Ritual for the Future. So this is an interesting piece and a difficult one to describe because there's a lot of dream logic and poetic imagery, but the themes that are being explored are looking at our relationship to technology, our relationship to AI, and there's a number of different types of provocations in this piece that can be quite shocking or confronting in quite a deliberate sense. So this piece has got this kind of ritualistic feel. You're walking in and there's people in masks, and where their mouth would usually be, there's like a cell phone, and that cell phone has text on it. And so the whole onboarding process is answering a number of different questions around like, do you identify more with humanists or post-humanists? It's kind of trying to allow us to use these gestures. There's no speaking or anything. And then as we're making these decisions, we're getting these stickers put on us. And I think a lot of those stickers would be used in the first section where you walk in and there's these three different stools that you sit on. And if you sit on a stool, then a drone comes out and scans you. And then later, if you sat down on one of those stools, then she's kind of taking pictures and using these different AI technologies to undress you. And so that's, you know, one of the provocations that we talk about, the ethical implications of this kind of shocking provocation. And my experience of this piece was that it was kind of exploring all these concepts and ideas.
And I felt like it was not always clear as to what was being said. There's a lot of ambiguity involved. So I wasn't always able to orient where Victorine's own perspective was, but it was bringing up lots of interesting concepts and ideas, and I'm glad that I had an opportunity to talk to her to unpack them and get a little bit more of an idea of where she was coming from. She also had this series of probably a dozen or so cards that were kind of an elaboration of these deeper philosophical concepts and ideas that she was exploring in this piece. And I got a lot more context as to her intent and some of the discussion points that she had. And, you know, we only had about an hour to dig into the piece, and so we weren't able to explore the full spectrum of all the different stuff that she's exploring. But it's an interesting approach that is very much emotion-based, trying to create a mood or a vibe. And so there ends up being a lot of abstract dream logic and colors and music, and as you're going through this piece, you're having all this stuff kind of wash over you as you go, over time, more and more away from the humanness and more and more into this futuristic world that's kind of dystopian, with robots everywhere. But you also see artificial intelligence go through different evolutionary phases. And as there's this critique of AI, then you really have to show the process of using AI to understand the current context for how we're interfacing with it through prompting. And so you end up seeing a full process of prompting and the results in order to get a sense of how people are engaging with AI technologies, but also some critiques around AI and how it doesn't do a great job of being able to identify race.
And there are different critiques. Effortless diversity is one of the things that she's really unpacking, in terms of the ways that some of these different AI technologies are advertising, you know, a frictionless shortcut to overcome some of the different diversity challenges by kind of faking it or shortcutting it. So yeah, there's a lot of ways that Victorine's using AI in order to critique it and to better understand its downfalls. So she can really feel it in her body. She's really body-centered, seeing how, as she goes through these experiences, she's just paying attention to the different emotions and feelings that are coming up. And so for a viewer going through some of these experiences, she's really working in these realms of emotion to explore these provocations, and then hopefully at the end there are these opportunities and contexts for the audience to talk to her. There wasn't any formal situation set up where you could have a process of unpacking and talking about it, but this is the type of experience where I think that would be really well suited, just because there are some deep provocations that are happening here. So anyway, I'm covering all that and more on today's episode of the Voices of VR podcast. So this interview with Victorine happened on Wednesday, November 19th, 2025 at IDFA DocLab in Amsterdam, Netherlands. So with that, let's go ahead and dive right in.

[00:04:49.759] Victorine van Alphen: I'm Victorine van Alphen. I'm a director and philosopher and visual artist. And I make immersive experiences that often people don't know how and where to compare it to. Yeah. So it's on a cross section of theater, technology, expanded cinema, but also you could say experimental philosophy, social design. Yeah.

[00:05:15.718] Kent Bye: Nice. Yeah. And maybe you could give a bit more context as to your background and your journey into the space.

[00:05:22.225] Victorine van Alphen: I started with quantum physics when I was very young and got into philosophy because of that. Always questioning the world around me. That's something I'm still doing, but more as an artistic researcher now. I went to... After studying philosophy and interdisciplinary sciences, I started... Actually, next to that, I was working as an aerial acrobat and dancer and performer, and that became more experimental, but more from a completely physical way. So I started working with Anouk van Dijk, a very famous, amazing choreographer who kind of choreographs her audience through perception and space and time. I was very inspired by that, and next to my studies, with a group of young people, we started exploring experiments with audiences. And then I studied at the Art Academy in Amsterdam, Rietveld Audiovisual. And then I did a Master of Film in the Film Academy, which is an Artistic Research Master. And that's where I really started exploring my own methods, way of directing, immersive directing. Yeah.

[00:06:38.328] Kent Bye: Yeah. And you identify as an artistic researcher. Can you describe whether or not some of these projects are leading to, like, academic published papers? Or is this more in the sense of your art as a way of provoking or exploring the frontiers of technology and the ways that humans are relating to it, and that you're providing these experiences? But yeah, I'm just trying to get a sense of the output of the research and this intersection of art and research, what that looks like for you.

[00:07:07.205] Victorine van Alphen: I think it's even deeper than that, this whole cycle. For me, big questions I have in life or about the world or about how things work, those will feed into my work. But also my work and what I learn from that will feed into me again, and into the theories I have about how these things function. So I'm publishing a 20-dimensional immersive experience model that I kind of shaped together with my students and all the work that I've made, about immersive networking. So it will be published as well. But I really believe after studying philosophy and not being in my head too much, I really acknowledged how important it is to really try things in life with real people, real bodies, real everything, and then reflect. So I'm still really reflective, but more I start with trying to burn my hands on stuff. Yeah.

[00:08:08.301] Kent Bye: Nice. And so you mentioned your students. What's the context of your students and where and what are you teaching?

[00:08:14.347] Victorine van Alphen: I'm teaching immersive media and I teach it all over the world. But I was a main teacher here in Amsterdam. But that course, the bachelor is going to be diminished. And in a few years there, they might run a new form, like possibly a master's, transdisciplinary master's. So now I'm going to full time focus on my artisthood and my own artistic research. And I will still coach some students and do lectures, but not anymore part time. So that will allow me to really dive into my work and the world and our relationship to technology even deeper.

[00:08:52.507] Kent Bye: You mentioned philosophy a few times. Maybe you could elaborate a little bit more on what branch of philosophy or how you kind of identify within the larger philosophical landscape.

[00:09:01.721] Victorine van Alphen: For sure, yeah. It's definitely embodied philosophies. For instance, Maurice Merleau-Ponty, a French philosopher, very much inspired me. He was also inspired by Cézanne and other philosophers who, for instance, said that yellow has a taste, it's sour. It's almost the phenomenological way of really, in a qualitative way, studying how we are experiencing and how that is an embodied thing. And that's also where technology comes in. If we take our body seriously, we also have to take technology seriously, because technology completely, yeah, it might be an instrument, but it also changes us. For instance, if you're looking at a screen, you're bending your head in a certain way. The screen has a certain size relative to your body. So technology is completely related to our bodily being, to me. Even though we think we immediately pass through our head, that in itself is also something. The way that we focus suddenly only on visuals, we came to live in a visual world that is maybe in a small screen and not the one outside of us. So, to me, that embodied phenomenological framework is very, very, very important for the way I think. So then you can also think about "the medium is the message." But also Donna Haraway was very important for me in how she established different ways of dealing with all the binary thinking, and a very speculative way of approaching our current society with hypothetical and speculative ideas, worlds and scenarios, so as to understand better what could be possible and what is convention, what is nature, nurture, etc., and to play with that a lot. So those would be, I guess, the strands, but there's many more I can feel affiliated with or that I'm inspired by. Yeah.

[00:11:01.916] Kent Bye: Yeah, I was looking on your website and seeing an interview that you did with a PhD student talking around the IVF-X that I had a chance to see in Paris a couple of years ago at New Images. So the student's talking around her experience with your previous project, referencing these concepts of the post-human experience. I think that gives me fear around like, oh, are we going to just get rid of all the humans and then we're going to live in a society that's run by AI and technology? There's a certain class of billionaires that seem to be in that mindset. But I think that there's other ways that post-human has been used, in terms of Donna Haraway and the way that we have these relationships between technology and humans. And so maybe you could describe a little bit about what post-human means for you?

[00:11:43.719] Victorine van Alphen: For me, in the deepest way, it's a way to understand what human means and when we would lose that, what change would affect that. What I find very fascinating is that any technology can be very emancipating. My first work was about... Yeah, creating cyborgs as a way, also as a means maybe to overcome all the biological burdens and all the societal burdens that parenting now has for many people that are not cis hetero. So often technology can be a way to overcome certain biological limits. And so it can be emancipating or even help people think beyond cultural conventions. But it also always has another side, that we will change, like any technology will make us change. So in that sense, we're already post-human, of course. We already are completely connected to our technology and that changes the way we think, we see, we do, etc. So for me, post-human is really about the limits of, and also the unlimitedness of, what can still be human. In all my works, the focus lies on what is human, I guess, by making it so uncanny that we start to feel afraid that we're losing that. And yet also I often establish a feeling in people that there's more possible than we think, and that might even be more scary, that we can turn into such different beings and still be humans. It's possibly more frightening than that there's a lot of limitations to how we could extend or expand or transcend ourselves.

[00:13:34.179] Kent Bye: That's great. And it helps to hear you explain that a little bit because I can see the traces of these thoughts or intentions in the different work that you're doing. And also in that conversation you had, you were talking around this idea of creating this constructive, I forget the way you exactly phrased it, but it was almost being these liminal spaces and really embracing the ambiguity there, and not falling clearly into a binary. And so after I saw this piece, your latest piece, The Oracle, there's certain ways that I can describe what I felt or experienced, the technical things, but there's also the deeper arc of the journey and the story, and it feels like it falls in this kind of liminal space for me where I'm still sussing out all the different strands that were being introduced. So I'm excited to have this conversation to unpack it some more. So maybe you could just set the broader context for The Oracle and how you describe what this experience is.

[00:14:27.452] Victorine van Alphen: Yeah, it's interesting. It's a work that grew and it has all these layers. I think in the middle of making it, I made an actual real human being. That was, by the way, one of the outcomes of my previous work of making cyborg babies, that I decided to make a real human being. So I did that somewhere in the middle of the process of The Oracle, and I think... (Kent Bye: Do you mean that you became a mother?) I became a mother, yeah. I became a mother, yeah.

[00:14:57.185] Kent Bye: I don't usually hear people phrase it in quite that way, so I just wanted to clarify.

[00:15:00.723] Victorine van Alphen: Yeah, I understand. Of course, I do it a bit jokingly because I've been making cyborgs so much. But yeah, I became a mother. But also, I had four friends of mine, they were very close to me, who died. Very different reasons. But way too young and completely random in a way. And I think The Oracle in that sense really became not only about change and how deep and layered that process is, but also how time works and how vulnerable we are and how we can change to becoming a part of something. So in The Oracle, I hope one of the deeper things that people might experience is to feel afraid of transitioning as well as feeling maybe readier than they think. So they are often saying to me, I came out kind of zen, even though it was very dystopian and I don't understand this, or they felt that they had processed something. So for me, I tried to compose a way of accepting transition, even if it's an extremely painful one, even if it's about losing everything that it means to be human. And that idea comes from a method in Buddhism where you are practicing to let go of everything you love. And of course that sounds like torture in a way, but it's also very interesting because it makes you realize what each of those things means. So The Oracle starts with a personal archive, and one of the people in this personal archive died. And that immediate vulnerability, that very physical vulnerability, is constantly contrasted also with, of course, the digital sensualities that it shows and other physical topics. And then it moves towards a far future vision that doesn't have any human beings, but still has somehow some sensuality and even expressiveness. And that was really something that I realized from being a mother, but also from another experience with ayahuasca, actually.
It's the experience of deep time, to understand and to feel that you have been a product of a very, very, very, very, very, very, very, very, very, very, very, very, very, very, very long process, evolution, change, all those organisms that have made me in a way. And then suddenly as a mother feeling I'm just this little tiny little thing in a huge process somewhere in the middle of time, or somewhere, but not as a center, but somewhere in time. And then I made someone who will be a part. But first maybe I felt like a sort of weird end point or something like that. And now I realize I'm just really somewhere in a very, very big space and time continuum. And in that idea, it's very easy to not be so afraid of anything. And that is very scary, to think of life and expressiveness and sensuality and maybe even a poeticness beyond ourselves, maybe artificial intelligences that, you know, of course I find it... how much power Musk has, for instance. But in a grander scheme of things, if in 10,000 years there will be some artificial intelligences that make really intriguing visual art, which they already can do, which is also something I'm showing to people and they're impressed by some of the capacities and the complexities of the visual outputs. But in a grand scheme of things, I would be intrigued. I didn't want the dinosaurs to live forever, and I don't know if I want human beings to live forever, if I look in a bigger scheme of things. So that very dangerous idea is one that I'm juxtaposing in a way with my own attachment to life, my own attachment to my own humanness, to my own body, to things that we think are normal. I'm attached to them. So yeah, it's an exploration of if we can let that go, by also showing developments that are already in the here and now, but feel already dystopian, or very normal or familiar, even though they're crazy and here.

[00:19:47.937] Kent Bye: Yeah, this word of dystopian has come up a few times. And then you had a card, you had a series of different cards, actually, that were these provocations from conversation starters, because there's a lot of, I'd say, philosophical ideas or provocations or points of conversation that you can launch into from a project like this. And one of them was this dialectic between utopia or dystopia and that you... said that you're encouraging this sort of like burning yourself in order to become more sensitive. So maybe you could elaborate on what you mean by this type of practice that seems to be embracing these different technologies and experimenting with them and seeing what's possible with them to kind of learn about them, but also to reflect on them, but also to start a broader conversation around how they fit into our larger lives. But I'm just curious to hear what you think around this concept of burning yourself in order to really get connected to your own sensitivity.

[00:20:37.767] Victorine van Alphen: Yeah, so I don't know if I philosophically and personally embrace most of the technologies that we use. I'm very critical of them and I would rather not use them. As an artist, however, I think it's necessary to burn my hands on them because, for instance, we use this terrible ethnic skin-changing application and also expose this to the audience. Because even if it was just because of the marketing speech underneath, talking about effortless diversity and other stuff, just this phrase, effortless diversity, is something that is to me very intriguing, because there's a very deep bias going on in AI. Very deep. It's crazy, we're in 2025, and almost no AI can recognize a black person or a person of color. Like, that's insane. To me, that's really, really, really, really, really grotesque. Yet it seems to be one of the sort of minor issues, but it's not, of course. It's a very, very, very, very big problem. So then what happens is there's very superficial solutions for that, applications that you can choose any ethnicity with, that are marketing now the effortless diversity and effortless minorities representation, but in a totally artificial way, which creates even worse problematics and ethical problems. But that's just in one corner of the internet that nobody knew of. I hear nobody knew of this. Or, I mean, everybody knew about the bias being there and most AIs not recognizing people of color very well. But then only so few knew of the whole mushrooming of apps that are there for superficially solving that with fake re-representation, basically. Yeah. So, if we hadn't just put our head into that world, we wouldn't have found out about that stuff. And if we hadn't tried to create our own undressing app, we wouldn't have found out how biased it would have been, and we wouldn't have needed to solve that, and we wouldn't have come across that stuff.
I really believe that it's really the deficits in the details and the qualities of things and how things are formulated, how things are shaped, how bodies are formed, how hands are shaped, how faces are smiling or not, how women are always smiling, how people of color are not, you know, like those are the details, the qualities of how things are shaped. And then there's also amazing qualities that of course attracted me to AI. Like, hugs that were completely glitchy but therefore very poetic in a way, you know, like artificial hugs where people were impossibly intertwined. So that's the beauty that I can see as an artist, of the misunderstandings of a machine that has no body and tries to understand our extremely sensitive world and life. I find that big misunderstanding, as I see it, very poetic and intriguing. But no longer so much, because due to monopolies and commercial use, it's more and more a monoculture of aesthetics. So towards the end of the process of making this show, I started to expose that more and more, how things would turn into the same shit. And that as artists and as people, we need to really, really, really, really see that coming and fight that with different aesthetics, with different choices, with different prompts, with our own AIs, training our own AIs, using our own data sets, etc. So, yeah, it is a lot. And I'm also thinking, if I'm deepening this work, we're probably going to make a few works out of it, like a triptych, so it doesn't become too dense. Yeah.

[00:24:52.642] Kent Bye: Yeah, there was, during the R&D summit this year, one of the artists was saying that they don't know if it's ethical to use any AI just because of the larger context of the data colonialism and all the sort of ethical, it's not really in right relationship to the world around us right now. And at the same time, they said that they use AI in order to critique it. And so it seems like that you might be in that same camp, which you sort of identify as kind of using the AI to really fully understand it so you can make a stronger argument against it.

[00:25:22.605] Victorine van Alphen: Yeah, totally. Yeah. I mean, I understand people's criticism and I understand, like, I'm worried when I'm using it, for sure, because I have blood on my hands when I use it. That's how it feels. Yet I also feel that most of the conversations about it are naive in the sense that we don't know how things feel unless we are feeling them. And we only feel them if we're confronted with them. And that's really something that people often ignore. And that's also what took me out of philosophy. I mean, I still loved whatever. I learned so much there. But to encounter situations and really feel what's at stake, that's very, very, very, very meaningful. And eventually it's that meaning that needs to be inside the discussion, inside our navigation of the future, inside of ethics. We need to feel what things mean to us and how they can truly be. Because otherwise we just have all these kinds of opinions. I mean, people used to have opinions about electricity being like the worst thing in the world when it was coming out. So it's very easy to be very against technology. So then it's important to actually really... I also have this card that we made with "all AI are not equal." We can't talk about AI. There's no such thing as AI. There's only a million kinds of AIs that are doing something different and have different effects. If it's some decentralized, super simple thing that just makes life better, that's totally fine. But if it's a visual AI that's trained on certain data sets labeled by certain people, then that's something else, you know. So I think it's very important to make it specific. So this show also shows how different and how incomparable each of these applications in a way is. And that there's so many different ways to think about it. So I'm kind of unfolding it in a way for the audience. But I'm not making conclusions completely. I mean, I do give some directions, but I'm not giving any conclusions.

[00:27:34.915] Kent Bye: Yeah, I think the feeling I had of going through this piece was that the title also makes reference to a ritual. So it does feel like it has very much a ritualistic process, especially the onboarding section where we're entering into the experience and sort of being interrogated through these different questions. We're gesturing, we're giving answers around our relationship to technology, post-human, sexuality, sensuality. There's a sort of a... There were different symbols that were being communicated and there were parts where there was narrative. And so there were certainly narrative components that were more traditional, but it also felt like overall a lot of symbolic imagery that was juxtaposed with color and lighting and music and kind of giving me more of a feeling or a mood, but also this space of being confronted with a variety of different images of AI over time as it's evolving and images of robots in the future. And so it sort of creates an amalgam of a lot of provocations that can be isolated in certain moments. But at the same time, the narrative structure is also very experimental, very loose, and almost a theatrical performance, with a six-screen performance that we're inside of with like 10 people. And there's an actor who has this helmet on and this weird phone thing on their face. So there's a lot that's otherworldly, like I'm entering into the logic of a world that I don't quite understand. And I'm trying to understand the logic of the world, but also the logic of the experience as I'm experiencing it, and having to let go of my mind and just rely upon the feelings that I have, because I can't always make sense of the logic that's being demonstrated.
So I kind of have to rely upon other sensory modes to take in all the information and make sense of it. So yeah, I don't know how you describe this kind of interdisciplinary fusion and this kind of journey that you wanted to take people on.

[00:29:40.754] Victorine van Alphen: I think the way you described it, you also give answers to why it's like that. It's very nice to hear you say that because it's another logic, but you do feel it's consistent in itself in a way. So people do really surrender to it. They often tell me that they really were completely in it, like a trip, that they were completely in it, but didn't know exactly how and when and why. It doesn't follow a classical narrative structure, but it does really follow very deep other things that we as humans are completely sensitive to. But it does so in a completely different configuration. So it works socially, it works with screens. We know screens, we know social things, but it works with gestures. It works with language, but not verbally. So it does everything, but in a different way. So it is familiar and unfamiliar at the same time on many levels. And it's very composed in an emotional and audiovisual way. It's very, very composed through time, so everybody... (Kent Bye: When you say it's composed for the emotions, does that mean that that was kind of the root, that you were always trying to think around the emotional flow?) Yeah, yeah, for sure. So that's also what my directing method is about. It's really using any medium or dimension of an experience to choreograph not a narrative, but an internal experience of the audience. And one of the root arcs, one of the arcs, is to go from something very small, a personal archive, a death of someone very close, to something very far future, abstract. Yet both sides have a sensuality in common that is very precise and very, yeah. So that arc, it's an arc of letting go and an arc of complete transformation towards something completely different. That arc is much more subconscious than it is maybe conscious for many people, but it is totally there. And it's very interesting to see people deal with that. Yeah.

[00:31:52.560] Kent Bye: Yeah. That makes sense. And so one of the philosophers that I've been really drawn to lately has been Alfred North Whitehead and his process philosophy, where he's really providing an alternative metaphysics that's based upon process and relationship rather than substance. Substance metaphysics, being a foundation of analytic philosophy, tends to see things as these static concrete objects, but for Whitehead and for process philosophy, they're seen as these dynamic unfolding processes. And so I appreciated it in your piece when you mentioned process and this experience of deep time, where you show not only the evolution of some of these AI models across different types of models, but also the creation process: the prompts, the input, and the iterative process of what is being put into the system and what's coming out. I'm finding with a lot of artists, as they're covering AI, there's not only what they're showing in the piece, but sometimes they're talking around, oh, the process of making it was, here's what we ran into, this bias and that bias. And then that process of those discoveries sometimes becomes a part of the project, like yours. And sometimes it becomes an addendum that comes out in conversations that I have outside of it. But there seems to be a way of really interrogating AI technologies, or whatever you want to call it. I'm sympathetic to Emily M. Bender's and Alex Hanna's resistance to AI as a marketing term, as a way of describing it, because I think it's anthropomorphizing, and there are other problems with that. But I think for convenience's sake, we'll refer to what most people refer to as these AI technologies. But there is a sense of using the process of creation, but also showing how these AIs are continually evolving. And so starting to give us this sense of time by showing the evolution of these AI projects.
So I'd love to hear any reflections on the way that you were trying to integrate process into this piece, but also to show these larger messages around AI.

[00:33:43.280] Victorine van Alphen: Yeah, well, it's beautiful that you name that, how important process is for this piece, but not only for this piece, for many other pieces as well, and for studying AI, or the way I was trying to deal with it as an artist. So the first thing is that when my partner in crime, who I was investigating AI with, when he died, the first thing I knew was that I was going to have to include that. And that's very radical in a sense of what is process. So I decided really that everything became part of process. That's also why, while I'm talking about AI, you hear my baby babble, which is then also lip-synced, which immediately shows everything in a way. And that's the beauty of it. Death and life have always interrupted our lives because they are our life. They are not interrupting us. They are exactly what life is. That's what I realized. This is not a situation. It's also part of the process. So I think the biggest problem with us and technology is that we often... The fact that technology lives in a screen doesn't mean that we live in a screen. So how do we make that connection between our feelings, our thoughts, our loved ones, and our own bodies, our insecurities, etc., and what happens in a desktop? And I think it's very important to show what I'm prompting. For instance, I was prompting about my C-section. And this totally, completely ambiguous, layered, weird visual outcome came out, and I put it in the show because it's showing, like, hey, what happens if I share something very personal in a prompt, and what does it do with that visually, etc. So I think it's been sort of radically inclusive of process, of what it means to be a human who is using technology. I think that's very important. So I pushed myself to be more radical, even though I hated to make things personal as an artist before. 
I didn't ever want to make things personal because I just hated anything that was emotional or dramatic or whatever. But I realized I needed to because if I don't show how technology and my feelings and my loved ones and my body and death and life and all these little things and drinking coffee and having orgasms and eating and shitting and how all these things are related to AI because it exists in the same world. If I don't dare to show that, then how will we ever understand those relationships?

[00:36:36.935] Kent Bye: Hmm. Nice. Yeah. And there's moments in the piece that I thought were very confronting in the sense of provocations around AI and how AI has been used to undress people. And at the beginning, there's an opportunity for some people to sit on stools. They don't know the full context. Maybe there's a process of consent that's happening based upon the questions that were asked previously, but maybe not like a full connecting of the dots of knowing exactly what's going to happen. And so there's a surprise moment where some people within the audience are essentially undressed. And so I wanted to ask around that because it's a very...

[00:37:14.573] Victorine van Alphen: In my screening, there was no one that got up... The option to stand in front of the screen to censor it, just for the ethics.

[00:37:20.381] Kent Bye: Yeah, so they do have an opportunity to stop it, but it's already happened, and so the harm may already have been done, not knowing what the full context or consent... It's not necessarily a process of informed consent. And in that process, there's a provocation where there's a surprise of that. But then also, if that was disclosed up front, where people were really doing that, maybe no one does it. But then I'm just wondering how you negotiate that, because it feels like it could be an area that's very fraught in terms of kind of re-traumatizing people, in a way that is doing something that is kind of violating their sense of identity. It's AI and these virtual images that we all recognize, and it's in the context of an art piece, and we're in Amsterdam. But it's also this area that feels like it's kind of a borderline.

[00:38:08.630] Victorine van Alphen: So there's a few very important ethical details there, I think. The people who are sitting down, they first get the performer, who makes sure that she comes very close to them. And on her face, it's written: if you sit in this chair, your image will be used by AI. And that's when people sometimes decide not to sit on the chair. But they will see that probably just before they're going to sit on it. They get like this warning, like, if you sit on this chair, your image will be used by AI for this performance only. So they consent to that. That's what we usually do. We give a lot of consent to the use of our image. And we don't know to which extent that image is used every day, every, every, every, every day. So basically what we're doing is we're misusing a consent that we are already very, that people are already very naive with, to undress people. But the undressing happens so slowly that people do have time to censor it before, because it starts from the head up. So people have time to censor it before the nipples, or around the nipples, so it doesn't go lower than that. So in the moment they see, like, naked shoulders, they could censor the whole screen by standing in front of it. So to me, that's very important. And there is some agency. But then there is, of course, also still this moment that people realize, like, oh, yeah, there had been a drone. I didn't know it was recording us. And oh, it actually does look much more... It's still funny for many people to see it, but it's also much more confronting than they thought, because it's at life size in front of everyone. 
They're undressed, and their bodies look different but have some realistic-ness. And just the way of seeing, for instance, here, some very high directors that are then undressed in front of the whole audience, that's definitely something. And I don't know what that something is, but I know that something is very social. It's a social dynamic that arises that we are all there to witness. Because finally we are there to witness, and not just knowing that it happens but not knowing what it feels like. And now we're just showing it at life scale, and people get the chance to have a feeling with it and an opinion about it. So next to confrontation and provocation, we're offering them a moment to find out how they are positioned towards something like that. How does it feel to see yourself undressed? I was really surprised how naked I felt. And some other people find it just very funny to see their bodies like that. They don't identify, and then don't feel hurt or scared, but others do. And how is it to see someone else being undressed that you know, or that might be your superior or whatever? You know, how is it to see that? And I think because we're doing it with a very slow pacing, so really like a very slow striptease, and a very beautiful hum, a very careful vocal humming song, it becomes something gentle. And I hope that people... A lot of people gave back that they felt safe in the whole show because of those kinds of decisions, and that they also felt provoked and shocked at the same time. And I hope we strike a balance, yeah.

[00:41:50.376] Kent Bye: And in terms of the output of that shock, where do you hope that gets channeled into?

[00:42:00.661] Victorine van Alphen: Awareness of how much we can do with an image and how easy it is to consent to very simple things that you don't know the extent of. But that's not per se the goal. I mean, I could say... It's also awareness of our physicality in general, and how vulnerable. So it's both like, oh, they're undressing, but it's also this existential moment. That's also why the humming, and why it takes so long, such a time. We would really take time for this scene. It's also seeing each other naked. It's also, even if it's not about AI, a beautiful scene of suddenly being there all naked, which also, after having the death and the other situations, and the birth, you realize it's also a way that we exist. It is a very cute moment in a way for me as well. I don't know how that was for you, but...

[00:43:07.304] Kent Bye: Well, I was just thinking more around like what's the consent in this moment? You know, just sort of like Helen Nissenbaum has a theory of contextual integrity, meaning that you are consenting to the flows of information where you are fully informed about the context under which it's being shared. And so in some ways, asking for consent and then doing whatever you want, it feels like a dark pattern of how the Internet is doing. And so you're kind of replicating this in a way that...

[00:43:27.501] Victorine van Alphen: We are consciously replicating that. Yeah.

[00:43:33.541] Kent Bye: Yeah. And so what I think around is like, well, is there a way to sort of do consent in a way that actually is enthusiastic and ongoing consent, which is more of a, I guess, more of a pro-social approach of like, rather than replicating a dark pattern of stuff and potentially traumatizing people is like, here's some like, well, so... Because they... Do you think it could traumatize? Oh, absolutely. Yeah. I mean, you're putting people in there. They chose to sit on a chair, but they don't know the full context. If it was disclosed to me like, oh, you're undressed, maybe I would have been like, oh, that'd be interesting. I might do that. But I didn't know what the context of sitting on the stool was. It's like a risk. And so what's the payoff or the risk? And I don't know the full context of what the situation was. So I chose not to. But for people that do, and then just to know that AI uses your images, that's kind of devoid of the full context of like it's going to be used in a sexual way.

[00:44:24.730] Victorine van Alphen: And so to say it, like, it's not necessarily in a sexual way.

[00:44:28.905] Kent Bye: Well, it's disrobing them, so I don't know.

[00:44:31.287] Victorine van Alphen: It's disrobing them, but not necessarily in a sexual way. I mean, I do find it important. That's the pacing. It's very important. And that people have the moment to censor. And I do understand what you mean. I mean, we have been doing this mostly now in the Netherlands and in Ars Electronica, where people are quite... Maybe they can deal with it. Like, you're from a different culture, where that's already a bit different. If we would do too much consent, then we wouldn't be able to expose this problem of how easily consents are used beyond their extent. And actually beyond consent, you would say. I would say that most of our image is not used with our consent, but we are just using our phones and are making pictures, and we press on OK once and then we're screwed for the rest of our lives. To make that more tangible, how far that extent is... Yeah, I hope I don't traumatize people, but I do really think it's important to provoke there, that that extent is possible, and then at least do that in a sort of safe way, because it's offline, it's a very small group, and people can still censor. But of course, I never know. Yeah, it could be... It's interesting, I talked with someone else about another work that kind of, not traumatized us, but really shocked us. But I'm thankful for that. So that's also the difference between art and therapy and everything. Art is sometimes shocking. Like if you have, for instance, the movie Irreversible, I'm completely traumatized by that scene. And I'm thankful to the maker for creating it. So what I need is not always what the audience or art needs. I as a person can be too sensitive to take that. For me that was way too much. I couldn't take that scene. But that is, yeah, so each of us has to find a very... I hope we do it in a soft way. And I don't think people will be traumatized to a very, very, very... to an extent that will be problematic. 
I do think they might be touched or like squeezed or confronted in a way that is actually, yeah, powerful. I heard a girl saying that she was so... She thought it was really nice and she was very curious to see how she looked. She found that very interesting and only later she realized like, hey, wait. But it's mostly women who sit down. It's mostly women who are being undressed. And it's mostly men who feel really uncomfortable with that happening. But the women themselves feel not so bad with it. So that's also like... I think it's really important to choose for yourself. Because we have almost only women sitting down. Men somehow do not have the courage to sit down on the chairs. And the women who do, they seem really okay and mostly very curious about it.

[00:47:44.307] Kent Bye: Yeah, a couple of thoughts and we should wrap up because I've got another interview that's going to be starting here momentarily. But rather than standing up actively to stop it, you know, ways like what if you stand up already and then if you walk away, then it stops, because there's a bit of inertia of sitting down, that you have to take a lot of action. And also potentially having them see that image before it's shown so they can consent afterwards to whether or not it's shown, so giving them an extra layer of kind of enthusiastic consent. And then otherwise it's a private experience for them rather than a public experience. And so those are just things to think around that.

[00:48:06.488] Victorine van Alphen: Interesting. Thank you for the suggestions. I mean, of course, as a director, to make such a scene, I think it's very, very risky. And that's also, I guess, why I did it, because I do believe, if I don't do it, who will, in a way, right? But I really, really, really do hope we did it in a way that allows people to deal with it, through the pacing, through the soft consent in a way, like the being able to censor. But I really like your suggestions. I will definitely... Yeah, we will definitely brainstorm about it.

[00:48:56.681] Kent Bye: And then you can have a fallback where it's censored or maybe it's some other image, you know, or, you know, if everyone says no, then what happens in that moment? So it'll be a private experience for those people. And the last people will be like, what was that about? So anyway, just wanted to share that. And as we wrap up, I'd love to hear what you think the kind of ultimate potential for these types of immersive art, theater and performance and rituals might be and what it might be able to enable.

[00:49:20.371] Victorine van Alphen: In the beginning we did after-talks, mostly for us to get feedback, but I realized they were extremely invigorating for the audience, because the audience was starting to realize that it's very easy to think that your experience is the experience. But in the after-talks it became so clear how differently everybody interpreted things, and how layered all the scenes are, and how this can be in fact, and what that says about how we relate to bodies, to AI, etc. So those after-talks were extremely interesting for everyone. The only reason why we didn't do it again is because I also do really want to give people just the time to just breathe and think and just do whatever. But I do hope that the biggest potential of these kinds of pieces, immersive pieces that allow you to feel something of the future in different forms, and feel different possible futures, different possible combinations of humans and non-humans, etc., and screens, and different configurations of humans and technology... I hope that they can allow for imagination and reflection, yeah, and hopefully also discussion. Yeah.

[00:50:45.953] Kent Bye: Yeah, well, this is one of the pieces that I think has many layers, as you mentioned, and it's a very rich, immersive experience. And yeah, I'm happy that you had a lot of the conversation starters that give a little bit more context for the piece. And also just really happy that I had an opportunity to sit down with you and get a little bit more context from the creator yourself. And yeah, thanks again for joining me here on the podcast to help break it all down.

[00:51:06.330] Victorine van Alphen: Thank you so much, Kent. It was nice to see you again. Yeah.

[00:51:10.208] Kent Bye: That's all that we have for today, and I just wanted to thank you for listening to the Voices of VR podcast. If you enjoyed the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, so I do rely upon donations from people like yourself in order to continue to bring you this coverage. You can become a member and donate today at patreon.com slash voicesofvr. Thanks for listening.