Neuroscientist Rafael Yuste started Columbia University’s Neuro-Rights Initiative to promote an ethical framework that preserves a set of human rights within neuro-technologies. He co-authored a Nature paper titled “Four ethical priorities for neurotechnologies and AI” in 2017, after creating the “Morningside Group” of over 20 neuroscientists who were also concerned about the potential ethical harms caused by neuro-technologies.

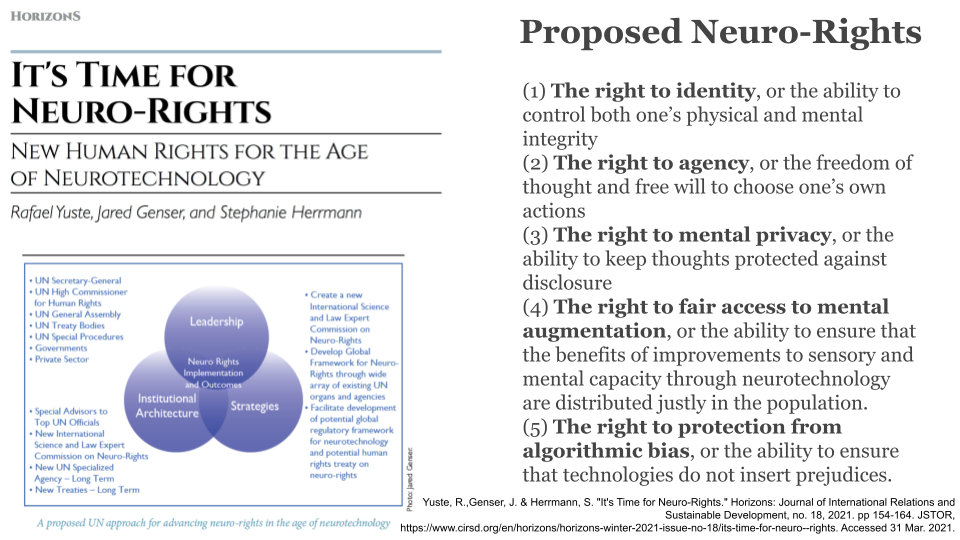

Another neuro-right was added in the latest Neuro-Rights paper, titled “It’s Time for Neuro-Rights.” This brings the list up to the right to identity, the right to agency, the right to mental privacy, the right to equitable access to neural augmentation, and the right to be free from algorithmic bias. In the end, Yuste hopes to gain momentum within the United Nations to add these fundamental neuro-rights to the Universal Declaration of Human Rights, which could then put pressure on regional legislators to change their laws to stay in compliance with these neural rights.

On May 26th, there was a day-long symposium, Non-Invasive Neural Interfaces: Ethical Considerations, featuring cutting-edge neuroscientists working to decode the brain, EMG specialists, and companies working on commercial-grade neuro-technologies. The gathering was sponsored by the Columbia Neuro-Rights Initiative as well as by Facebook Reality Labs, as both sponsors wanted to bring scientists and ethicists together in order to debate the ethical and privacy implications of these neuro-technologies.

I did some extensive coverage of the Non-Invasive Neural Interfaces: Ethical Considerations event within this Twitter thread here:

1/ THREAD covering a #NeuroRights gathering co-sponsored by @FBRealityLabs & @Columbia_NTC's @neuro_rights called "Noninvasive Neural Interfaces: Ethical Considerations Conference."

They'll be Looking at the ethical implications of XR technologies from neuroscience POV. pic.twitter.com/gXzvl3h1UC— Kent Bye (Voices of VR) (@kentbye) May 26, 2021

Part of the concern about these neuro-technologies is that there is already a large amount of data from the brain that can be decoded, and this is only going to increase over time. Yuste also brought up that there are existing methods to stimulate the brain in a way that could violate our right to agency. Whether it’s reading or writing to our brains, Yuste says that we can’t be walking around with the metaphoric hoods of our brains opened up for any outside actor to measure or stimulate.

In the end, there was a lot more science shared at the Non-Invasive Neural Interfaces gathering than meaty ethical debates. There was not enough diversity of speaker backgrounds to hold a true Multi-Stakeholder Immersion gathering that included perspectives from privacy advocates, philosophers, or privacy lawyers. Part of what makes this topic of how to preserve mental privacy so challenging is that it does require a multi-disciplinary approach representing a critical mass of stakeholders and competing interests in order to have robust debates on all of the risks and benefits across different contexts. Also, dealing with the complexity of these emerging technologies may require new conceptual frameworks around the philosophy of privacy, such as Dr. Helen Nissenbaum’s theory of Contextual Integrity or Dr. Anita Allen’s approach of treating privacy as a human right (see my talk for more context on this).

There was some interesting resistance to one of Yuste’s proposed strategies for preserving our right to mental privacy, since one of his suggestions was to treat these non-invasive neural interfaces as medical devices and their data as medical data. This would regulate data that could be used to decode what’s happening within the body, but also limit how the variety of different brain stimulation devices could be used.

Neuro-tech start-ups like OpenBCI and Kernel resisted this suggested classification and regulation of neuro-tech as medical devices since their companies probably wouldn’t exist at the point they are today had there been additional medical regulations that they’d have to follow. But Yuste argues that the use of neural data could have profound impacts on the integrity of our body, and so there is a compelling argument that it’s a type of sensitive data that is most analogous to medical data.

After listening to Yuste at the “Non-Invasive Neural Interfaces: Ethical Considerations” conference, I reached out to have him on the Voices of VR podcast so that he could elaborate on the current state-of-the-art neuroscience of neuro-tech, what he sees as the most viable strategy for protecting our right to mental privacy, why looking at these issues through the lens of human rights is so compelling, where the future of neuro-rights is headed, and why he’s so excited about the revolutionary and humanistic potential of neuro-technologies to help us understand our brains and ourselves better.

LISTEN TO THIS EPISODE OF THE VOICES OF VR PODCAST

Here’s my 22-minute talk on “State of Privacy in XR & Neuro-Tech: Conceptual Frames” presented at the VR/AR Global Summit on June 2, 2021

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality

Rough Transcript

[00:00:05.452] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR Podcast. So on May 26, 2021, there was a conference at the Columbia Neuro Rights Initiative. It was co-sponsored by Facebook Reality Labs. It was called Non-Invasive Neural Interfaces, The Ethical Considerations. So they got together all these different brain scientists and neuroscientists to talk about the latest innovations with neurotechnologies and the different ways that you can either decode the brain or use different signals from the body to be able to use as an input device with surface-level EMG. And because we have all this new data that is from our body, it's sort of blurring the line of what used to be just in the medical context. Now, all of a sudden, it's with these consumer technologies and has all sorts of really intimate information about potentially even being able to decode our mind and look into our body and to just have so much intimate access to all this information. So there's amazing possibilities that can happen with all this, but also at the same time, in the wrong hands, this data could start to undermine different aspects of our agency, our identity, our rights and mental privacy. So Rafael Yuste, back in 2017, got together with a number of other neuroscientists who were very concerned about the technological roadmap of neurotechnologies and put forth some ethical guardrails. And so they put forth a set of neural rights principles. And so he talks about the right to mental privacy, the right to identity, the right to agency, the right to have equal access to these neural technologies, and then also the right to be free from algorithmic bias as these neural technologies meld with artificial intelligence algorithms. So I had a chance to catch up with Rafael just to get a sense of what is the best roadmap as we move forward to protect our rights to mental privacy.
So that's what we're covering on today's episode of the Voices of VR podcast. So this interview with Rafael happened on Thursday, June 3rd, 2021. So with that, let's go ahead and dive right in.

[00:02:01.595] Rafael Yuste: My name is Rafael Yuste. I'm a professor of biological sciences at Columbia University. Where I'm talking from, this is my old microscope behind me, and I'm a researcher. I study the brain, in particular the cerebral cortex, using mice. So we are trying to decipher what the cortex does. The cortex is the largest part of the brain in mammals, and no one knows what it does. We're trying to figure this out.

[00:02:28.743] Kent Bye: Okay. So I know a number of years ago, you started to look at some of the ethical implications, you know, talking about neuro rights. Maybe you could talk about the origin for when you started to look at, maybe we should start to have some either human rights approaches or thinking about some of these neuro rights when it comes to this type of neurotechnologies.

[00:02:45.824] Rafael Yuste: Yeah, so people like me that want to understand the cortex are faced with the problem that the brain has 80 billion neurons. And if you try to study the brain one neuron at a time, it's a little bit like trying to watch a movie in a TV by looking at one pixel at a time. So you're never going to get it. So our hands are shackled by the lack of methods to be able to record the activity of all the neurons in the brain at once. And that's what we've been trying to do, we and many other groups around the world, developing neurotechnology, which are methods to essentially record the activity of as many neurons as possible and to change the activity of as many neurons as possible. These methods are essential for our work. This is the bottleneck of neuroscience, the methods. In fact, President Obama launched the US Brain Initiative, which was actually inspired by our proposal to the White House to generate methods for neuroscience to do this, to record the activity of many neurons and to activate as many neurons as possible. But these methods can be used for science, as I'm telling you, since they're the bottleneck of neuroscience, and for medicine, because these methods could enable the better diagnosis and treatment of mental and neurological diseases. But they could also be used for reasons that are not strictly altruistic. And that's where we started to realize this from the beginning, actually, when we wrote our proposal to the White House. We alerted the White House that this new technology should be developed under an ethical umbrella. So, this was not done, at least is one problem with the Brain Initiative that it did not have a very strong ethical backbone. Now it's starting to have it, but it's five years in.
And we decided to convene a grassroots group of people, experts from all over the world, representing brain initiatives from many countries, and also experts in neurotechnology, in ethics, in the clinic, in artificial intelligence, in the law. And we generated the idea that the best way to approach the ethical consequences of neurotechnology is to develop a new set of human rights. And we call those neural rights, which you can imagine is the rights that protect the brains of citizens. And these are not existing yet, not in the universal declaration. And we think they should be added to the universal declaration of human rights. And these rights, these neural rights, would protect the privacy of the minds of people, mental privacy. It would protect our individual agency, our ability to make decisions autonomously, our identity, our concept of self. And also, they should guarantee equal access to neural augmentation so that we don't end up with a society with people that have been mentally augmented with neurotechnology and people that have not. Since we made that proposal in 2017 in a paper in Nature, our group, the Morningside Group, is involved in trying to advocate for these neural rights in different countries, in different venues. And that's what we're doing right now.

[00:05:59.482] Kent Bye: And there's one more, the right to be free from algorithmic bias, which is another aspect of making sure that the algorithms that we have are not unduly influencing people.

[00:06:08.852] Rafael Yuste: No, exactly. So that's a fifth neural right, and that has to do with the use of artificial intelligence in the devices, in the neurotechnological devices. The two technologies are merging. I think they both have developed independently, AI and neurotechnology, but you can see how this is all going to merge together. I think in the future we will have neurotechnology that has AI and AI will take advantage of the human brain also to operate them.

[00:06:35.382] Kent Bye: Yeah, so this whole concept of the right to mental privacy, I think is probably the biggest challenge in terms of being able to potentially decode different aspects of what's happening inside of your brain. And there was recently a whole conference on non-invasive neurotechnologies and the ethics around that, that was sponsored by the Columbia Neuro Rights Initiative, as well as Facebook Reality Labs. And I was an attendee and was listening to a lot of these neuroscientists talk about the extent of the state of the art in terms of the degree to which that we can decode what's happening in the brain. In some ways it gets thrown around like hyperbolically that you can quote unquote read somebody's mind, but this really is getting to the point where we have technologies that could decode different aspects of what is happening inside of our mind, especially if it's in the working memory. Our long-term memory is a whole nother issue, but it sounds like anything that is recalled from long-term memory and is in this working memory has a potential to be decoded. I know a lot of stuff has happened with super high-end technology that is fMRI or stuff that you'd only find in a hospital or with invasive technologies like ECoG. But it seems like we're on a technological roadmap where at some point with consumer off-the-shelf technologies, whether it's fNIRS or whatever else, as we come up with these new brain control interfaces, that we're going to be able to potentially have the capability for this technology to decode what's happening in the brain. And one thing that you said is that it's already to the point where it's threats to the mental privacy and it's only going to get worse over time. So it's basically how do we start to navigate that? So maybe you could reflect on some of the neuroscience in terms of like, is it hyperbolic to say that we can read our minds or on this technological roadmap to be able to decode different aspects of the brain?

[00:08:13.653] Rafael Yuste: Yep. So if you look at what we're doing with animals today, let's say with mice, with advanced neurotechnology, and these are neurotechnology that is invasive, so we have to go through the skull. But we can decode perception, for example. We've done this in the lab, and other labs have done this. So by recording the activity of the visual cortex, we can predict what the animal is looking at. And not only can we do that, but we can change the activity of the visual cortex and make the mouse think that he's seeing something that it's not seeing. So we can start to manipulate perception and introduce hallucinations, so speaking to the visual cortex of the mice. So this shows you what you can do with neurotechnology. We can decode and manipulate mental processes. Now we can do perception. Other groups around the country and the world are doing this with memories too, with emotions. It can change the emotional state of the animal. In fact, it's not a surprise. All of these things come from the brain. The brain generates all of our mental and cognitive abilities, our perception, our memories, our thoughts, our imagination, our emotions, our decisions, everything. Sooner or later, we'll be able to understand and decode all of that and be able to change it, manipulate it. And this is good. This is good for science and for medicine because a lot of these mental diseases are probably related to these abnormal circuits. So that essentially opens the hood of the brain with neurotechnology. This is the first time that this has happened in history. So now comes the issue that obviously we don't want the open hood of the brain in the future of society as these technologies percolate from the lab to the clinic to the consumer market. And that's where we have to draw the line in the sand.
So I think I'm optimistic that this can be done and we can prevent with neurotechnology the mistakes that we made with the internet or with platforms or social media that were developed without any control. And as a consequence, they had a lot of negative effects on the society. And in this case, we're still at a time where neurotechnology is starting to percolate little by little into the market. And this is a wonderful time to try to regulate mental privacy in particular. I think mental privacy is the thing that worries me the most, because it's the one that's most urgent, since there's starting to be devices that you could imagine could start to be used to do low-level decoding. And this is going to get worse the more technology advances, and also the more that AI advances, because AI algorithms will be used to decode them.

[00:10:55.156] Kent Bye: So I know that in 2017, Facebook announced that they're working on a brain control interface to be able to think a thought and then be able to type whatever you're thinking. And it's been four years since that. And I went to the Canadian Institute for Advanced Research and I was seeing some of the different research from UCSF, which is speech synthesis, being able to think a thought and then to be able to actually translate that into spoken words. Do you expect that within the next five to 10 years, we will have with say fNIRS technology, consumer available brain control interfaces that will be able to read our thoughts?

[00:11:27.355] Rafael Yuste: I don't know. It depends on what you mean by reading our thoughts, but they will be able to generate simple commands, move cursors in the screen, for example, operate maybe robotic arms and limbs. I think all of this is possible. And then the type of decoding that's done with humans using fMRI, for example, the work of Jack Gallant or Uri Hasson, they can decode images that the person is conjuring, for example, in the case of Jack Gallant, or they can decode the type of interpretation that you will give to a story, because your brain goes into a particular brain state in the case of Uri Hasson's work. So I wouldn't say that those are thoughts, but those are things that you can already do today with fMRI. So I presume in five years, you may be able to do something like this with portable brain scanners.

[00:12:18.808] Kent Bye: So I know Jack Gallant also said that it's one thing to read and decode the brain, it's another thing to write to the brain. And he said that it's a lot easier to decode than it is to write, but there are different deep brain stimulation and transcranial deep brain stimulation, different ways of inputting into the brain. So maybe you could just talk about that in terms of reading, it seems like there's threats to mental privacy, but the writing there seems to be like all these threats to our agency of being able to actually manipulate and control people.

[00:12:45.319] Rafael Yuste: Yeah, that's why I said that what worries me more is the reading and the threats to mental privacy, because the writing technology, as Jack says, are maybe five to 10 years behind. And this is true for animal work, and this is true for human devices. In fact, it's always the case that reading is much easier than writing when you learn a foreign language. One thing is to read it. Another thing is to write it, as you know. So it's normal. So we should still be worried about the writing technologies. And now is an excellent time to try to define the basic rules by which these writing technologies should operate in humans. Because they will happen. They will operate in humans. And whatever we can do in a mouse today, we should be able to do in a human tomorrow. So it's just a matter of time. I don't think there's a reason to wait and be cavalier about it. We already know. We can see the tsunami is going to hit us unless we do something about it. So let's learn the lesson now from COVID. Instead of not doing anything until we got hit by the pandemic, maybe this time we can actually put the barriers in place. And more than barriers, I would say guidelines in place so that this great energy of technological development is channeled in the right direction, which has so much promise. I mean, this is going to be a revolution for humanity.

[00:13:56.716] Kent Bye: And during the conference that was sponsored by Columbia Neuro Rights Initiative, as well as Facebook Reality Labs, you gave five different potential options in terms of pathways towards how we're going to deal with this threat to mental privacy. And there was a conference that I watched online with a number of different philosophers of privacy talking about different approaches. And I think your approaches have maybe all three different approaches. One approach from Dr. Anita Allen is more of a paternalistic approach saying that we should declare that privacy is a human right and should be treating privacy as like an organ where you shouldn't be able to sell different aspects of your privacy. And so we need to have more of a paternalistic approach of saying this is a human right that you don't have agency to be able to do. The other extreme of that is to say that it's just like any other data that we have and that there's something that you could buy and sell or trade access to this information. And that's kind of like the default for where we're at right now, kind of that libertarian approach where it's kind of up to the market to decide what happens to this data. And then Helen Nissenbaum has the contextual integrity theory of privacy, which she defines as appropriate flows of information according to the context. And I think in some ways your different five approaches are kind of like doing some sort of context dependent stuff that like, maybe it's okay to do it for science or medical research, or maybe it should be treated like medical or genetic information. So there's some restrictions. Or maybe we need a paternalistic approach where it's like a human rights approach, but that gets enshrined into our federal laws that actually prevent certain applications or uses of this type of data.
So I'm wondering if you could kind of reflect on paths forward in these different approaches and what you see as the most viable way moving forward to protecting our mental privacy.

[00:15:33.492] Rafael Yuste: Yeah, this is a complicated topic and it has a lot of shaded areas. And it's hard to discuss it in a way sort of before the game starts. But one approach that I recommend would be to use the medical model. The idea is the following, to treat all neurotechnology, even if it's non-invasive, as a medical device. In other words, bring neurotech under the umbrella of medicine and apply to it all the medical regulations. So, the advantage of this is that we already have an existing set of rules and laws and traditions that go back 2,000 years that society uses to deal with technologies that affect the human body. So this will be very natural if you're talking about implantable neurotechnology, because whether you want it or not, you need neurosurgery. You have to go through the medical profession, which means that the device is subject to strict regulation. The data is also subject to medical data regulations, et cetera. But what's new about our proposal is that we would extend that to the non-invasive consumer type devices. So things that may look like an iPhone for your head, like a brain iPhone, or a wearable, or a helmet, would need medical permission to be used. And the data would be protected as confidential medical data, just like your medical records. I think that would be a solution that would simplify the debate a lot. And it's a change of concept, because you would have to go to companies like Facebook that are developing this thought-to-text BCI and say, hey, you guys are developing a medical device, so you have to get approval from the FDA. Now, it may not be the FDA, but something like that. There would be a panel, a regulatory panel, that would be embedded in ethics, and in this case, hopefully also in new human rights, that would regulate how you build and distribute these devices and how you use the data and what type of protection.
And I think that could be one way to deal with all of these problems by steering the ship, pushing the needle in the direction of medicine. And my gut feeling is that medicine has, I would say, a spectacular track record for humanity, 20 centuries working to benefit people. There's always an exception and a bad doctor here and there. But even in the worst times in history, in the middle of the darkest ages, you go to the doctor and you have someone there to help you. And this hasn't blocked the development of technology for medicine. In fact, it's flourished. Until the tech industry came about, the biopharma companies were among the largest companies in the world. So there is a whole ecosystem that enables innovation in medicine, but it's subject to ethical and societal regulations. And I think it would be good to start with a good step in this neurotechnology era by bringing it under the medicine.

[00:18:42.411] Kent Bye: You also mentioned what was happening in Chile, as well as in Spain. There was a representative who was talking about the Charter of Digital Rights. It seems like taking a human rights approach, and then for some jurisdictions, they may decide to translate those human rights into their local laws, which at the end of the day, need to have the laws change in each region in order to actually be binding into a certain jurisdiction. So do you see that as a good pathway forward is to set forth these human rights principles, and then from there, kind of leave it up to each of these different countries, or maybe make universal declaration of human rights. But even if that happens, that still has to be enshrined into how each of these jurisdictions implement those within their local laws.

[00:19:18.666] Rafael Yuste: Well, I think to work with different countries and have different countries consider the issue and develop progressive legislation like Chile is doing, it's fantastic. But the key stakeholder here is the UN. United Nations owns the Universal Declaration. It's at the core of the UN, and it's at the core of everything that the UN represents, the entire global set of international organizations. So that's where we have to hit. And there's interest by the UN. In fact, they convened a meeting by the end of June on this topic. So they want to examine this issue of human rights and neurotechnology. And I think cases like Chile could be precedents that could be used by the UN or its associated organizations to develop guidelines and regulations. And I wouldn't underestimate what the UN can do. So for example, or internationalization, look at how we regulate in the world atomic energy, biological weapons, chemical weapons. Even more recently, the Commission for the Disappeared created by the UN has effectively, I wouldn't say eliminated, but made it much harder for rogue states to disappear people left and right. Now there's some serious consequences if you do that. So I can imagine a commission on neural rights that the UN could create. And if the countries developing the neurotechnology, which are the ones normally that are more careful about human rights, the first world, so to speak, adheres to these principles, then the companies operating in these countries will have to stick to these principles. And this could percolate through the legal system of those countries and the rest of the world. I think that's the path forward that I can imagine.

[00:20:59.257] Kent Bye: And the final question here is that when we talk about these neural technologies, it has lots of threats to the privacy identity and agency, but at the same time, there's lots of amazing things that can happen with this technology. So what do you see as like the most exalted potential, the ultimate potential of all these technologies and what they might be able to enable?

[00:21:15.610] Rafael Yuste: Yes, I mean, I'm super gung-ho about neurotechnology. I'm super positive. I think this is going to be a revolution in humanity. And it's going to usher a new renaissance. I think this is going to be Renaissance 2.0. So why do I say that? Because these technologies will enable us to finally understand the workings of the human brain and explain how the human brain generates the human mind, and we'll be able to understand ourselves from the inside for the first time, to see who we are. That's why I think there's going to be a new humanism, what it means to be human. How are all these mental and cognitive abilities that define what we are, how are they generated? We finally will see them from the inside. This has to be good. It will do away with prejudices that we may have inherited from previous generations. I think it will be the ultimate humanism. But on top of that, you have to realize that a large proportion of the world suffers mental and neurological diseases. And these brain diseases have essentially no cure, in spite of the heroic efforts of neurologists and psychiatrists. And they don't have a cure because we don't understand how the organ works. So it's very hard to fix a broken machine if you don't know how the machine works. And this new technology is not going to happen overnight, but this is exactly the kinds of tools that psychiatrists and neurologists need to get in and understand the pathophysiology of the disease. In other words, where the normal function of the organ goes wrong, to be able to design therapies that go to the heart of the problem. So I think, just for that, the ride is worth it. We have the urgency of treating all these patients. I'm sure that every one of your listeners has family members or friends that suffer from mental or neurological diseases like Alzheimer's, schizophrenia, Parkinson's, depression, mental retardation, autism, stroke, you name it.
I mean it's just a scourge of medicine and you can barely do anything about all these diseases today.

[00:23:15.910] Kent Bye: Awesome. Well, Rafael, thank you so much for joining me here on the podcast and giving me a bit of an update as to what's happening with the Columbia NeuroRights Initiative, as well as this larger movement of NeuroRights. These threats to mental privacy, to me, especially the melding of these technologies with immersive virtual and augmented reality technologies, it's a lot of amazing potential, but also a lot of harm that could be done and we need some guardrails. And I'm glad to hear that you're working on these NeuroRights Initiatives and hopefully they'll end up in the right place to be able to help both protect us, but also enable all these amazing technologies. Thank you for joining me here today. Thank you, Kent. So that was Rafael Yuste. He's a professor of biological sciences at Columbia University and the founder of the Columbia Neuro Rights Initiative. So I have a number of takeaways about this interview is that first of all, well, I do feel like taking a human rights approach may actually be the best approach for some of us right now. And you have to really balance this technological innovation for moving forward versus the ways that harm can be done. And what he said is that we're basically opening up the hood of the brain. And we can't really function as a society with everybody's brain being open to both being read and manipulated externally or to be directly manipulated through neurotechnology as this continues to move forward. Right now, Rafael was saying that the writing technology is about five or ten years behind the reading, but even just the reading aspect and the threats to mental privacy. So, there is this effort, he said, at the end of June, there's going to be a gathering within the United Nations to talk about some of these neuro rights and how to potentially enshrine them into the Declaration of Human Rights, which, if that happens, then may percolate around the world into different local laws.
I know from just going to this Non-Invasive Neural Interfaces: Ethical Considerations symposium, co-sponsored by the Columbia NeuroRights Initiative but also Facebook Reality Labs, there were a number of different startups there that said that if this takes the approach of treating our brain data as medical information, then they wouldn't be able to exist. They would basically go away. And it's arguable that all of VR technologies could be considered a type of neurotechnology. And if you're going to consider every single VR headset as a medical device, then that's going to potentially also stifle what's happening in the virtual reality community. At the same time, there's all this data that is going to be made available as we continue to put more and more sensors into these headsets. I'll be touching base with OpenBCI's Conor Russomanno here soon to be able to really unpack all the different additional physiological and biometric sensors that are starting to be integrated into these VR headsets. OpenBCI is collaborating with Valve as well as with Tobii, so they have everything from electrooculography and PPG and EEG and eye tracking and different body temperature and EMG stress sensors within the headset to be able to detect your facial expression. So all this information is going to be able to aggregate all sorts of physiological and psychographic information that could be transmitted in real time that's contextually dependent, but it could also be captured and mined and used in inappropriate information flows that maybe are justified through the terms of service, but that people may object to in terms of how that information is actually being used. So how do you get a handle on all this? And you know, there's different approaches to privacy, and whether or not we say, hey, you know what, you can't do anything with any of this data because it's a medical device.
And if you do want to do anything, then you have to jump through all the hoops to be able to even have access to that. So, you know, certainly there's a wide range of different perspectives on this issue, and I'll be talking to people with different perspectives to really unpack the best path forward. If we do say that there are some existential threats to our mental privacy, how do we protect it? I think what Rafael is saying is these neurotechnology devices should be treated like medical devices, but it's hard to pass something like that within U.S. law. But if they go directly to the U.N. and they have the U.N. add it to the Universal Declaration of Human Rights, then maybe at that point there'll be a larger effort to start to change the different laws around the world to be able to have different protections around these different things. So, you know, Helen Nissenbaum talks about the contextual integrity theory of privacy, which is about appropriate flows of information within a context. So you have to define what that context is, and you have to look at those normative standards, and you have to see what's appropriate and what's not appropriate. And I think that's where we're kind of at with these different types of technologies: trying to determine what's appropriate and what's not appropriate, and how you put in some sort of safety guardrails to ensure that it's contextually appropriate for what the user is consenting to, or whether there's even a level of consent that they can give to it, which I think is something that Dr. Anita Allen starts to argue, in terms of privacy being treated like a human right that's non-negotiable, that you can't buy or sell or trade.
So just yesterday at the VR/AR Association's Global Summit, I gave a talk about privacy, because when I went to this Non-Invasive Neural Interfaces: Ethical Considerations symposium, you know, one of my complaints about the gathering was that it was only, like, neuroscientists and a handful of ethicists, but the ethicists were really just moderating. They weren't speaking much, and there really weren't deep-dive debates about some of these different ethical and moral dilemmas within this technology. We were able to get a sense of what's possible scientifically, but there wasn't debate about how to make appropriate use of this data. So probably one of the most interesting debates that did happen there was when some of the different startups, like OpenBCI as well as Kernel, started to talk about how they want to be able to put the ownership of the data into the hands of the people and not have this kind of colonial mindset, which is like: if somebody develops a piece of technology and they're measuring something, that means all of a sudden they own all that data and they can do whatever they want with it, as long as you sign the terms of service and you're basically consenting to handing over all this data to these different companies. And that seems like a model that, as we move forward into neurotechnologies, is just a non-starter. It's not going to work, it's not viable, and it's dangerous. So at the same time, we want to be able to give people access to the data that we deem appropriate, so that we can get the benefit out of that. And so this is where we're at. It's a big debate.
And just from listening to what Facebook was saying, they were talking about things like technological architectures of, say, federated learning and stuff like that. That's not really going to deal with the real-time interpretation of biometric information that's used to extrapolate different psychographic information, and it's not really addressing the heart of the problem of how to protect our right to mental privacy, our right to identity, our right to agency, and these other rights of equal access as well as being free from algorithmic bias. You know, for those underlying fundamental rights, there wasn't any consensus around an ethical framework for how to navigate this as we move forward. The Facebook Reality Labs research employees that were there had their own personal opinions, but they were not speaking on behalf of Facebook. There are conversations that are starting, but Facebook themselves haven't dictated what path they think is best. My sense is that they take a little bit more of a libertarian approach, which is that you have notice and consent, and whatever you are consenting to, then everything is cool. It's basically the fair information practice principles from 1973. And, you know, even just from their responsible innovation principles, the first two principles are all about a reframing of those fair information practice principles. Number one is don't surprise people, which is essentially like, as long as you disclose what you're doing, then everything is cool. That's going back to this notice and consent framework, as well as putting controls into the hands of the people.
So their first two responsible innovation principles are don't surprise people, as well as put controls that matter. Matter to who? Matter to them? Matter to you? It's unclear. But in essence, those first two principles are talking about a model of privacy that goes back to the fair information practice principles, essentially treating this data as something that you can buy and sell or trade to be able to get access to different things, which is nothing like the whole human rights approach that was being talked about here. So there's a risk of ethics washing: if Facebook in the future starts to talk about this gathering as something that justified whatever effort they're taking, I'd say that would be going above and beyond what was discussed in the context of this meeting. It wasn't recorded, so I did write a whole long Twitter thread trying to capture the essence of those different conversations. But I think there's a little bit of an open question, where I think there are a number of different neuroscience researchers within Facebook Reality Labs who do want to do the right thing in terms of putting up guardrails that not only protect the consumers, but also allow them to innovate and make the different types of technologies that they want to create. But we are at this impasse between these different philosophies of privacy and whether some of these things should be treated as a human right or whether they should be treated as something that you can buy and sell. Again, if you check out the talk that I did at the VRARA Global Summit, it sort of dives into that, and it's a little bit more of an overview.
And I hope to be diving into all these topics a little bit more, just because next week is RightsCon, and at RightsCon, I'm going to be working with the Electronic Frontier Foundation, as well as different human rights lawyers like Brittan Heller, as well as other folks from the VR community, to have these discussions with human rights lawyers and talk about whether or not there are some generalized human rights frameworks to be able to approach all of immersive technologies. The NeuroRights Initiative is good for neurotechnology, but I think there may be additional things when it comes to a human rights approach. And so that's what we'll be discussing at RightsCon next week. And so there's going to be a number of different interviews that I'm going to be diving into to help prepare for some of the discussions that we're having. So, that's all that I have for today, and I just wanted to thank you for listening to the Voices of VR podcast. And if you enjoy the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, and so I do rely upon donations from people like yourself in order to continue to bring you this coverage. So, you can become a member and donate today at patreon.com/voicesofvr. Thanks for listening.