Jessica Brillhart is the principal filmmaker for virtual reality at Google, and she has been exploring the intersection of artificial intelligence and storytelling in VR. I had a chance to catch up with her at Sundance again this year, where we did a deep dive into my Elemental Theory of Presence, which correlates the four elements with four different types of presence: embodied (earth), active (fire), mental & social (air), and emotional (water) presence.

Artificial intelligence will enable VR experiences to more fully listen and respond to you within an experience, and it will be the vital technology that will bridge the gap between a story of a film and the interaction of a game. I expand upon my discussion with Brillhart in an essay below exploring the differences between 360 video and fully-immersive and interactive VR through the lens of an Elemental Theory of Presence, and make some comments about the future of AI-driven interactive narratives in virtual reality.

AN ELEMENTAL THEORY OF PRESENCE

Many VR researchers cite Mel Slater’s theory of presence as being one of the authoritative academic theories of presence. Richard Skarbez gave me an eloquent explanation of the two main components of presence being the “place illusion” and “plausibility illusion,” but I was discovering more nuances in the types of presence after experiencing hundreds of contemporary consumer VR experiences.

The level of social presence in VR was something that I felt was powerful and distinct enough, but yet not fully encapsulated in place illusion or plausibility illusion. I got a chance to ask presence researchers like Anthony Steed and Andrew Robb about how they reconciled social presence with Slater’s theory. This led me to believe that social presence was just one smaller dimension of what makes an experience plausible, and I felt like there were other distinct dimensions of plausibility as well.

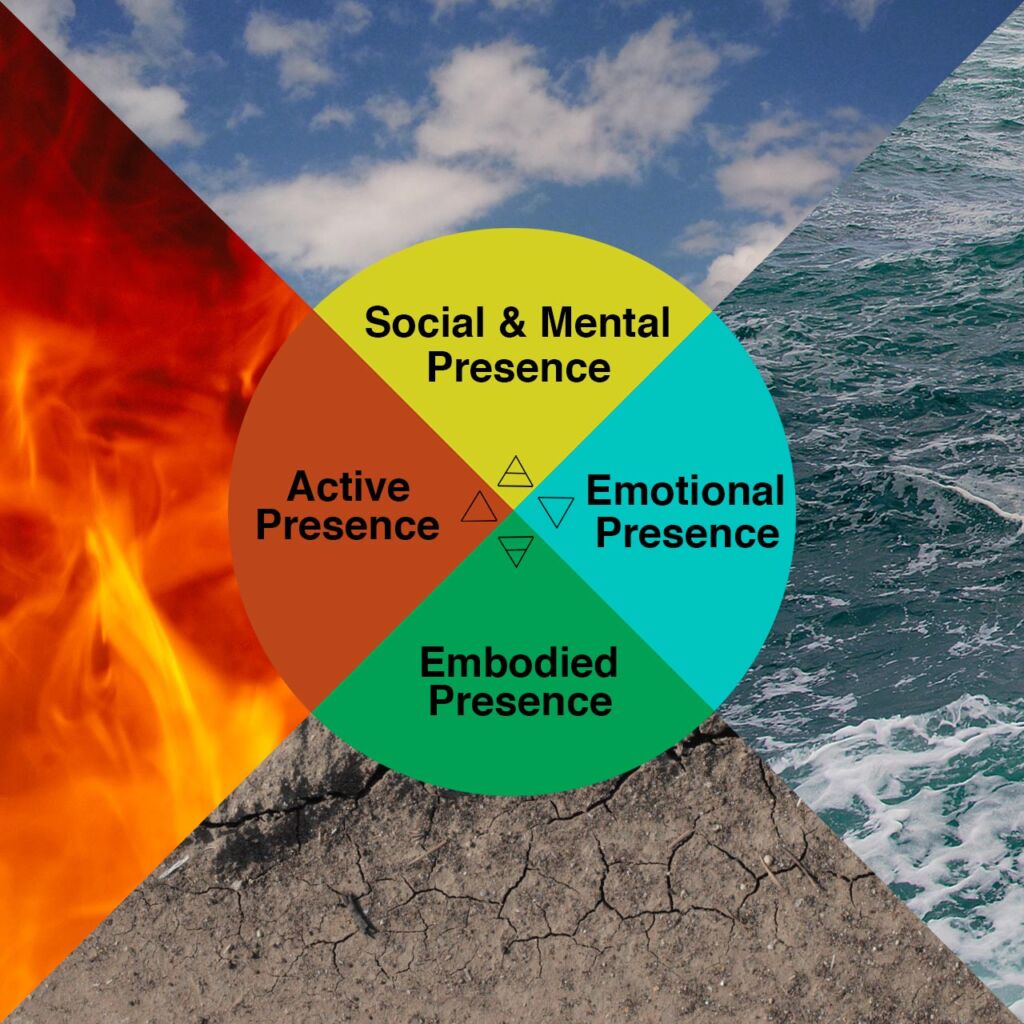

I turned to the four elements of Natural Philosophy of earth, fire, air, and water for a philosophical framework and inspiration in describing different levels of plausibility in VR. I came up with four different types of elemental presence including embodied, active, social & mental, and emotional presence that I first started to talk about in a comprehensive way in my last interview with Owlchemy Labs’ Alex Schwartz.

The earth element is about embodied presence where you feel like your body has been transported into another realm and that it’s your body that’s there. The fire element is about your active and willful presence and how you’re able to express your agency and will in an interactive way. The air element is about words and ideas and so it’s about the mental & cognitive presence of stimulating your mind, but it’s also about communicating with other people and cultivating a sense of social presence. Finally, the water element is about emotional engagement, and so it’s about the amount of emotional presence that an experience generates for you.

After sharing my Elemental Theory with Skarbez, he pointed me to Dustin Chertoff’s research in experiential design where he co-wrote a paper titled “Virtual Experience Test: A Virtual Environment Evaluation Questionnaire.” The paper is a “survey instrument used to measure holistic virtual environment experiences based upon the five dimensions of experiential design: sensory, cognitive, affective, active, and relational.”

Chertoff’s five levels of experiential design can be mapped to the four levels of my Elemental Theory of Presence where earth is sensory (embodied), fire is active (active), air is both cognitive (mental) and relational (social), and water is affective (emotional).
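To make that mapping easier to hold in mind, here is a minimal sketch of it as a lookup table in Python. The dictionary and function names are hypothetical and purely illustrative; they do not come from Chertoff’s paper, and the structure only restates the correspondence described above.

```python
# Illustrative only: the names here are hypothetical, not from Chertoff's paper.
# Each experiential design dimension maps to an (element, presence type) pair.
EXPERIENTIAL_TO_ELEMENTAL = {
    "sensory": ("earth", "embodied"),
    "active": ("fire", "active"),
    "cognitive": ("air", "mental"),
    "relational": ("air", "social"),
    "affective": ("water", "emotional"),
}


def dimensions_for_element(element):
    """Return the experiential design dimensions that map to an element."""
    return sorted(
        dimension
        for dimension, (mapped_element, _presence) in EXPERIENTIAL_TO_ELEMENTAL.items()
        if mapped_element == element
    )


if __name__ == "__main__":
    for element in ("earth", "fire", "air", "water"):
        print(element, dimensions_for_element(element))
```

Air is the only element that absorbs two of the five dimensions, which is why I group mental and social presence together.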

I started to share the Natural Philosophy origins of my Elemental Theory of Presence with dozens of different VR creators, and they found it to be a useful metaphor and mnemonic device for describing the qualitative elements of an experience. I would argue that the more that a VR experience is able to achieve these four different levels of presence, then it’s going to feel like more of a direct lived experience that mimics what any other “erlebnis” experience feels like in reality.

Achieving the state of presence is an internal subjective experience, and so it’s going to be different for every person. But I believe that this Elemental Theory of Presence can help us understand a lot about virtual reality including being able to describe the different qualitative dimensions of an individual VR experience, describe the differences between mobile VR/360 video and room-scale VR, help elucidate the unique affordances of VR as a storytelling medium, and provide some insight for how AI will play a part in the future of VR narratives.

360 VIDEO CONSTRAINS EMBODIMENT & AGENCY

Brillhart begins her Filmmaker Magazine article about VR storytelling with a quote from Dziga Vertov about how the film camera could be thought of as a disembodied mechanical eye that can “show you a world the way only I can see it.” She says that “VR isn’t a disembodied medium at all. It’s quite the opposite, because its whole end-goal is embodiment.”

Watching a VR experience and being able to look around a 360-degree space starts to more closely mimic the experience of being in a specific place, and it takes away the control of the creator of being able to focus attention on specific things. From a storytelling perspective it means that “what I have to be as a VR creator is a story enabler, not the story dictator.”

Film and 360 video at this point have limited amounts of embodied presence and active presence. Because you can’t fully move your body around and there’s not a plausible way to interact or express your agency within the experience, we could say that the earth and fire elements are constrained. You can still turn your head, which mimics what it feels like to be standing still and looking left, right, up, or down without leaning too much, and you can express your agency by choosing what to look at and pay attention to. But it’s difficult to achieve the full potential of embodied and active presence given the current 3DOF tracking constraints and limited interactivity with live captured footage.

Having three degrees of freedom in a mobile VR headset at this point matches the capabilities of 360 video, but anyone who has experienced full room-scale VR with 6DOF hand tracking knows that the sense of embodiment is drastically increased. If you have good enough hand and elbow tracking and inverse kinematics, then it’s possible to invoke the virtual body ownership illusion where you start to identify your virtual body as your own body.

Adding in the feet gives you an even deeper sense of embodied presence, and haptic feedback is also one of the fastest ways of invoking the virtual body ownership illusion. My experience with The VOID still stands as the deepest sense of embodied presence I’ve experienced, because I was able to explore a space untethered, seemingly forever, while my mind was tricked by the process of redirected walking. I was also getting passive haptic feedback every time I reached out to touch a wall. Moving around a room-scale environment can partially mimic this feeling, but the level of embodied presence is taken to the next level when you remove the wire tether and allow intuitive, beyond-room-scale movements without any presence-breaking chaperone boundaries trying to keep you safe.

The fire element is also constrained in 360 video. You are able to look anywhere that you want to across all levels of 3DOF and 6DOF virtual reality, but mobile VR limits the full expression of your agency. Without the natural and intuitive movement that comes from tracking your hands and body on all six degrees of freedom, any expression of agency is going to be abstracted through buttons on a gamepad, gaze detection triggers, or the trackpad on a Gear VR. These abstracted expressions of agency can only take your level of active & willful presence so far, because at a primal brain level I believe that active presence is cultivated through eliminating abstractions.

This means that 360 videos are not able to really cultivate the same depth of presence that a fully volumetric, interactive experience with 6DOF tracking in a room-scale environment is able to. This is the crux for why some hardcore VR enthusiasts insist that 360 video isn’t VR, and it’s also why 360 video will be trending towards positionally-tracked volumetric video whether it’s stitched together with photogrammetry techniques like 8i or HypeVR, using depth sensors like DepthKit or Mimesys, or using digital light field cameras from companies like Lytro.

I believe that the trend towards live action capture with volumetric video or digital lightfields will increase the feeling of embodied presence, but I have doubts that it will be able to achieve a satisfying level of active and willful presence. Without the ability to fully participate within a scene, it’s going to be difficult for any live-action captured VR to create a plausible sense of presence for the fire element. It’ll certainly enable “story dictators” to have complete control over the authored narrative that’s being presented, but any level of interactivity and expression of active and willful presence will be constrained.

Conversational interfaces with dynamically branching pre-recorded performances will perhaps offer a way for you to express your agency within an experience. There are some narrative experiences starting to explore interaction with pre-filmed performances, like Kevin Cornish’s Believe VR, which is triggered by gaze detection, as well as Human Interact’s Starship Commander, which is triggered by natural language input (more on this down below). But the dominant mindset for most narrative storytellers coming from the film world is to not provide any level of interactivity to their authored stories.

360 VIDEO AMPLIFIES MENTAL & EMOTIONAL PRESENCE

Whenever you reduce the capability of one dimension of presence, you can amplify the other elements. If mobile VR and 360 video have constrained embodied and active presence, then they can actually cultivate a deeper sense of mental/social and emotional presence. There’s a reason why the major empathy VR pieces have been 360 videos, and I’d argue that social VR experiences with constrained movement like BigScreen VR can actually provide a deeper sense of social presence with deeper and longer conversations.

360 video can also capture microexpressions and raw emotions in a much more visceral way. Our brains have evolved to be able to discern so much information from a human face, and so 360 video has a huge role to play in capturing human faces within a documentary or memory capture context. Live performances from actors can also be extremely powerful in the VR medium, and there is something that can be lost when it’s converted into an avatar.

The uncanny valley ends up driving avatars towards stylization. This is a double-edged sword for 360 video. On the one hand, live capture video can capture a transmission of raw emotional presence when you have full access to someone’s facial expressions, body language, and eye contact. On the other hand, the uncanny valley is all about expectations, which means that 360 video almost always violates the fidelity contract for presence. When you get a photorealistic visual signal from VR, but it’s not matched by the audio, haptics, smell, and touch, then your brain will send a presence breaker signal to your primal brain that keeps you from feeling fully present. So CGI experiences can create a surrealistic world that transcends your expectations, and therefore can actually cultivate a deeper sense of presence.

That said, there are still so many compelling use cases for 360 video and volumetric capture that I’m confident that it’s not going to go away, even though there are clear downsides to the level of presence that you can achieve with 360 video given its constraints. I’d still push back against anyone who argues that 360 video is not VR, especially once you understand how the power of embodied cognition can be triggered whether it’s in a 360 video or a fully volumetric VR experience.

There are also a lot of storytelling advantages in having limited embodiment and agency that can amplify the sense of emotional, social, and mental presence in an experience. It will get cheaper and easier for anyone to create a 360-video experience, and the emerging grammar and language of storytelling in VR will continue to evolve. So I see a healthy content ecosystem for 360 video that will keep growing.

The level of social interaction that you can have on a 3DOF mobile VR headset is also surprisingly adequate. There is still a large gap in body language expressiveness when you’re not able to do hand gestures and body movements, but there’s still quite a lot of body language fidelity that you can transmit with the combination of your head gaze and voice. This gap will also be closed once 6DOF head and hand tracking comes to mobile VR, potentially as soon as 2017 or 2018.

STORYTELLING IN VR IS ABOUT GIVING & RECEIVING

When it comes to narrative and storytelling in VR, there’s a continuum between a passive film and an interactive game, and I feel that a virtual reality experience sits in the middle of this spectrum. I’d say that films and storytelling are more about mental & emotional engagement while games are more about active, social, and embodied engagement. Storytelling tends to work best when you’re in a receiving mode, and games work best when you’re exerting your will through the embodiment of a virtual character.

From a Chinese philosophy perspective, films are more about the yin principle and games are more about the yang principle. The challenge of interactive storytelling is to be able to balance the yin and the yang principles to provide a plausible experience of giving and receiving. At this point, games resort to doing explicit context switches that move into discrete story-mode cinematics to tell the story, or have explicit gameplay and puzzles that are designed for you to express your agency and will. This is because interactive games haven’t been able to really listen to you as a participant in any meaningful way, but that will start changing with the introduction of artificial intelligence and machine learning technologies.

ARTIFICIAL INTELLIGENCE WILL ENABLE EXPERIENCES TO LISTEN TO YOU

Machine Learning has shown amazing promise in computer vision and natural language processing, which means that games will soon be able to understand more about you through watching your movements and listening to what you say. When you’re embodied within a 6DOF VR experience, you are expressing subtle body language cues that machine learning will eventually be able to be trained upon. As I covered on my episode on privacy in VR, Oculus is already recording and storing the physical movements you make in VR, which will enable them to train machine learning neural nets to potentially identify body language cues.

Right now most machine learning neural networks are trained with supervised learning, which would require a human body language expert to watch different movements and classify them into different objective categories. Body language experts are already codifying body language behaviors within NPCs to create more convincing social interactions, and it’s a matter of time before AI-driven NPCs will be able to identify the same types of non-verbal cues.

When you speak to AI characters in VR experiences, natural language processing AI will be able to translate your words into different discrete buckets of intent, which can then trigger behaviors in a much more interactive and plausible way than ever before. This is the approach that Human Interact’s Starship Commander is taking. They announced today that they’re using natural language input with Microsoft’s new Language Understanding Intelligent Service (LUIS) and Custom Recognition Intelligent Service (CRIS), which are part of Microsoft’s Cognitive Services APIs. Starship Commander’s primary gameplay mechanic is natural language input as you play through their interactive narrative giving verbal commands to the HAL-like computer. I have an interview with Human Interact’s Alexander Mejia that will air in my next episode, but here’s a trailer for their experience.

I believe that with the help of AI, a VR storytelling experience is what is going to sit in the middle between a yin-biased film and yang-biased game. What makes something an “experience”? I’d say that an experience is any time that we feel like we’re crossing the threshold to achieve a deep level of presence in any of the four dimensions whether it’s embodied, active, mental/social, or emotional presence. If it’s hitting all four levels, then it’s more likely to reach that level of direct experience with a deep sense of presence. With the embodiment and natural expression of agency that is provided by VR, then the virtual reality medium is uniquely suited to be able to push the limits of what embodied storytelling is able to achieve.

WHAT THE FUTURE OF AI-DRIVEN NARRATIVES WILL LOOK LIKE

There are a couple of AI-driven games that I think show some of the foundational principles of where VR games will be going in the future.

Sleep No More is an immersive theater experience where the characters are running through a hundred different warehouse rooms interacting with each other through interpretive dance in order to tell the story of Shakespeare’s Macbeth. As it stands now, the audience is a passive ghost, and you can’t really directly interact or engage with the narrative at all. You can decide what room to go into and which actors to follow or watch, and it’s a looping narrative so that you have a chance to see the end of a scene and later see the beginning.

Imagine what it would be like if the characters were able to listen to you, and you’d be able to actually engage and interact with them. The characters would have the freedom to ignore you just as in real life a stranger may blow you off if they had something important to do, but there could be other ways that you could change the outcome of the story.

One AI-driven simulation game that explores the idea of changing the fate of a tragic story is Elsinore, where you have the ability to change the outcome of Shakespeare’s Hamlet. You play Ophelia, a female character whose movements and interactions would plausibly have been ignored within the context of the original story. You can go around and try to intervene and stop the set of actions that leads to a number of different murders.

Elsinore is a time-looping game that uses some sophisticated AI constraints and planning algorithms that determine the fate of the story based upon each of your incremental interventions. The story dynamically changes and spins off into alternative branching Hamlet fan fiction timelines based upon your successful interventions in multiple tragedies. You get four iterations of a single day, Groundhog Day style, and you prevent the multiple murders through social engineering. Once natural language input and VR embodiment are added to an experience like this, this type of emergent storytelling / live theater genre is going to be perfectly suited for the virtual reality medium.

https://www.youtube.com/watch?v=eXs0jc2YDKk

Bad News is an amazing AI-driven game that I had the privilege of playing at the Artificial Intelligence and Interactive Digital Entertainment conference last October. There’s a deep simulation that creates over 100 years of history in a small imaginary town complete with characters, families, relationships, work history, residencies, and a social graph. You’re thrown into this world at the scene of a death of a character and it’s your job to notify the next of kin of the death, but you don’t know anyone in the town and you can’t tell anyone why you’re looking for the next of kin. You can only notify the next of kin of the death, otherwise you lose the game. Your job is to explore this imaginary world, talking to residents and trying to find the family members of the deceased.

You have two interfaces with this simulated Bad News world. One is an iPad telling you locations, addresses, and then descriptions of people who are in each location that you go to. After you pick a location and choose someone to talk with, you explore this world through conversations with an improv actor. These improvised conversations are your primary interface with this imaginary world, and you have to use your detective skills to find the right person and your social engineering skills to come up with a convincing cover story without being too nosy or suspicious.

The improv actor has a list of information about each character that he’s embodying so that he can accurately represent the personality and openness as determined by the deep simulation. He also has access to a Wizard of Oz orchestrator who can query the database of the deep simulation asking about information on the location of other town residents. Because this world is based upon an actual deep simulation that’s running live, the interactor has to find a moving target, and so the Wizard of Oz can provide subtle hints to keep the run time of the game at around one hour.

It was an amazingly rich experience to engage in a series of conversations with strangers played by the improv actor in this imaginary town. You’re fishing for information and clues as to who is related to the person who has passed and where to find them while trying to maintain your cover story. Bad News encourages the type of narrative-driven, role-playing interactions that only a human can do at this point, but this type of conversational interface is what AI-driven natural language processing is going to enable in the future. This is the trajectory of where storytelling and gaming in virtual reality are headed, and this possible future is freaking out traditional storytellers who like to maintain control.

THE BATTLE BETWEEN AUTHORED & EMERGENT STORIES

There are some people from the Hollywood ecosystem who see the combination of gaming and film as “dystopian.” Vanity Fair’s Nick Bilton says, “There are other, more dystopian theories, which predict that film and video games will merge, and we will become actors in a movie, reading lines or being told to ‘look out!’ as an exploding car comes hurtling in our direction, not too dissimilar from Mildred Montag’s evening rituals in Fahrenheit 451.”

This vision of films and games merging is already happening, and I certainly wouldn’t call it “dystopian.” Why is there so much fear about this combination? I think some storytellers see it as dystopian because open world, sandbox experiences have not had very strong or compelling narratives integrated into them. Being able to express the full potential of your agency often completely disrupts the time-tested formula of an authored narrative.

Hollywood storytellers want to have complete control over the timing of the narrative that’s unfolding. Hollywood writer and Baobab Studios co-founder Eric Darnell says that storytelling is a time-based art form where a series of chemicals is actually released within our bodies that follows the dramatic arc of a story. He says that you can’t let people interact and engage forever, and that you have to keep the story moving forward, otherwise you can lose the emotional momentum of a story.

Darnell was actually very skeptical of VR’s power of interactivity when I spoke to him in January 2016, when he said that there’s a tradeoff between empathy and interactivity. The more that you’re engaging with an experience, the more it becomes about finding the limits of your agency and control. Stories are about receiving, and if you’re completely focused on transmitting your will into the experience, then you’re not open to listening or receiving that narrative.

This is what Darnell believed last year, but over the past year he’s been humbled by observing the power and drive of VR to offer the possibility of more interactivity. He was watching so many people who wanted to interact more directly with their bunny character in Invasion!, and he kept hearing that feedback. So at Sundance 2017, Darnell explored how empathy could be combined with interactivity to facilitate compassionate acts in Asteroids!.

He makes you a low-level sidekick, and you get to watch the protagonists play out their story independent of anything that you do. But I wouldn’t classify Asteroids! as a successful interactive narrative, because your agency is constrained to the point where you can’t really cultivate a meaningful sense of willful presence. Your interactions amount to local agency without any meaningful global impact on the story. There’s still a powerful story dictator with a very specific set of story beats that will unfold independent of your actions. While there are some interesting emotional branching relationships that give variation in how the characters relate to you based upon your decisions, you’re still ultimately a sidekick to the protagonists whose stories are fated beyond your control. This made me feel like a ghost trapped within an environment where it didn’t matter if I was embodied in the story or not.

Having explicit context switches with artificially constrained agency breaks my level of active presence. One of the keys to Job Simulator’s success of grossing over 3 million dollars is that they wanted to make everything completely interactive and dynamic. They applied this high-agency engine to stories with Rick and Morty’s Simulator, and they allow you to interrupt and intervene within a story at any moment. If you throw a shoe in the face of the main character, then he will react to that and then move on with the story. It’s this commitment to interruption that could be one key towards achieving a deep sense of active and willful presence.

But most storytellers are not taking a high-agency approach to narrative like Owlchemy Labs. The leadership of Oculus Story Studio has a strong bias towards tightly-controlled narratives with ghost-like characters without much meaningful agency. In my conversation with three of the Oculus Story Studio co-founders, they expressed their preference for the time-based telling of an authored narrative. Saschka Unseld went as far as to say that if you have a branching narrative, then that’s an indication that the creator doesn’t really know what they want to say. Oculus Story Studio is exploring interactivity in their next piece, but given their preference for a strong authored story, any interactivity is likely going to be some lightweight local agency that doesn’t change the outcome of the story.

There is an undeniable magic to a well-told story, and Pearl and Oculus Story Studio’s latest, Dear Angelica, are the cream of the crop of the potential of narrative virtual reality. But without the meaningful ability to express your agency, these types of tightly-controlled, authored narratives are destined to maintain the ghost-like status quo of constrained active presence that Oculus coined as “The Swayze Effect.”

FUTURE OF AI-DRIVEN INTERACTIVE NARRATIVES

In my interview with Façade co-creator Andrew Stern he said that there really hasn’t been a video game experience since 2005 that has provided a player with meaningful local and global agency where your small, highly dynamic action in every moment could dramatically alter the outcome of the story. In Façade, you’re at a dinner party with a husband and wife who are fighting, and you use natural language input to interact with them. The AI determines if you’re showing affinity towards the husband or wife, and a backend algorithm keeps track of your allegiances as you try to balance your relationships to push each character towards revealing a deep truth.
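To give a rough feel for that loop of turning free-form input into an affinity signal and keeping a running tally, here is a toy sketch in Python. It is not how Façade is implemented: keyword matching stands in for real natural language understanding, and every name and cue word below is a hypothetical choice made for illustration.

```python
import re
from collections import Counter

# Toy stand-in for natural language understanding. All cue words and names
# are hypothetical; this is not Façade's actual code or data.
SUPPORT_WORDS = {"agree", "right", "fair"}
CHARACTER_WORDS = {
    "husband": {"he", "him", "husband"},
    "wife": {"she", "her", "wife"},
}


def classify_affinity(utterance):
    """Return 'husband', 'wife', or None for a single player utterance."""
    # Splitting on letters only turns "she's" into "she" and "s".
    words = set(re.findall(r"[a-z]+", utterance.lower()))
    if not words & SUPPORT_WORDS:
        return None  # nothing that reads as taking a side
    sided = [name for name, cues in CHARACTER_WORDS.items() if words & cues]
    # Only count utterances that clearly favor exactly one character.
    return sided[0] if len(sided) == 1 else None


def track_allegiance(utterances):
    """Tally how often the player has sided with each character."""
    tally = Counter({"husband": 0, "wife": 0})
    for utterance in utterances:
        side = classify_affinity(utterance)
        if side is not None:
            tally[side] += 1
    return tally


if __name__ == "__main__":
    lines = ["I think she's right", "He's being fair", "I agree with her"]
    print(track_allegiance(lines))
```

A real system would replace the keyword sets with a trained language understanding model, but the shape is the same: each utterance collapses into a discrete signal, and the story logic reacts to the accumulated state rather than to any single line.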

Here is Stern explaining his vision of the future of interactive drama driven by artificial intelligence:

There’s a spectrum of authored vs emergent story, and Façade uses a drama manager to manage input from the player while also maintaining the dramatic arc of the story. If you read their “Behind the Façade” Guide, it reads more like a computer program than a film script, but it is the most authoritative blueprint for how to architect an interactive narrative that is fully listening to the player and providing meaningful global agency. Here’s a visualization of Façade’s drama manager that balances user input with the unfolding of a traditional three-act dramatic structure of the overall story.

Human Interact’s Starship Commander is an important step towards allowing natural language integration within a VR experience, but it’s still a highly authored experience given that it is built on recorded performances from actors. Façade also features recorded performances, and so this hybrid approach limits how much truly emergent behavior you can achieve.

Looking to the future, Stern’s Playabl.ai is focusing on creating AI-driven interactive characters where trust and rapport can be built up over time. They’re leveraging research for modeling human behavior from a DARPA-funded AI program called IMMERSE, and they’re hoping that interactions with these types of AI characters could start to mimic what it feels like to have an emergent conversation.

Stern is collaborating with his Façade co-creator Michael Mateas, who founded the Expressive Intelligence Studio at University of California, Santa Cruz. Mateas and his students are creating some of the most cutting-edge AI-driven, interactive narratives out there (I have an interview with Mateas and a number of his students that will be released with the upcoming Voices of AI podcast, including the creators of Bad News).

AI is going to be a critical part of the future of interactive narrative in VR, and 2017 promises to have many of the advances of machine learning start to be made available through cloud-based AI services from Microsoft, Google, and IBM. It’s already starting with natural language processing being integrated, but the ultimate affordances of AI will go much deeper.

THE FUTURE OF AI & VR IS “UNCOMFORTABLY EXCITING”

At the end of Brillhart’s Filmmaker Magazine article, she says, “Blaise Agüera y Arcas, is a principal scientist working on artificial intelligence at Google. He had this to say about the current state of AI, which I think also describes the current state of VR better than anything else I’ve heard: ‘We live in uncomfortably exciting times.'”

There’s so much potential in VR and AI that it’s hard to predict where it’ll eventually all go, but there are some early indications of what some of the initial intersections will be. Brillhart talks about trippy Google DeepDream VR experiments that she’s done, which create such an unexpected experience that it starts to feel like you’re on a psychedelic trip.

Style transfer is another area that is likely to be an early win for VR. This is where the primary features of an artist’s style can be extracted and applied to new images. This is likely to start to be used in procedurally-generated textures and eventually 3D models within VR. Brillhart imagines that auteurs will train neural nets on image sets in order to use AI as a creative collaborator.

In terms of what else will be on the horizon, we can look at the principles of how AI is able to objectify subjective judgments and eventually be able to make the types of qualitative decisions that we ascribe to intelligent beings. So AI will have the ability to quantify the qualitative aspects of life, and then express it back to us within an immersive environment.

Neural nets have the ability to come up with averages of qualitative data like faces or create “interlingua” intermediary translation languages that amount to every language, but no language at the same time. Eventually, companies like Facebook, Google, or Twitter may be able to translate their vast repository of Big Data to create composite, AI-driven, chatbot NPCs that are embodied within a VR experience. We may be able to interact with a comprehensive representation of the beliefs and thinking of an average 25-year-old white male or a mid-40s black lesbian. This will be a long process and there are tons of open questions around creating a representative and unbiased set of training data to achieve this, but Brillhart thinks that it represents a future potential of where AI could go.

AI will also be involved in the procedural generation of both environmental and narrative content. Ross Goodwin explored using AI to automatically write a surrealist sci-fi movie script that was produced as a part of a 48-hour film competition. The script for Sunspring was automatically generated by feeding a Long Short-Term Memory recurrent neural network dozens of sci-fi scripts, and then the AI generated dialog and staging notes that the film crew used to produce the piece.

The resulting dialog is largely nonsensical and filled with confusion, but what makes Sunspring so compelling is the actors who are able to take the semantically correct but otherwise gibberish dialog and imbue it with meaning through their acting and staging. You can feel what they mean with their body language and emotions, but the actual words are meaningless, which gives it an other-worldly feel.

At a baseline, AI will provide creative constraints and inspiration for human interactions and collaborators that will allow for more freedom in role-playing and perhaps eventually provide the arc of a satisfying story. The ultimate realization of this type of collaborative storytelling is done by skilled Dungeons and Dragons dungeon masters who are able to guide the arc of an adventure, but at the same time allow each of the participants to do anything that they want at any time. The “theater of the mind” is currently the only medium that fully realizes the full potential of the human imagination.

Each participant has a fragmented picture of a scene in their minds, and the reality is dynamically and collaboratively constructed through each question asked and each line spoken. There’s a set of rules and constraints that allow the dungeon master or the roll of the dice to serve as the fates of these intentions, but any visualization of a virtual space by a VR artist or developer could serve as a limitation to the collective will and imagination of the DnD characters who want to be in full control of their destiny.

Will AI eventually be able to serve in the dungeon master’s dual role as master storyteller and group facilitator? I asked long-time dungeon master Chris Perkins, and he was skeptical that AI and VR will be able to achieve the same level of emergent story that a DM can facilitate any time soon. Yet he’s also convinced that it will eventually happen and that it’s almost inevitable when looking at the overall trajectory of storytelling and technology. He says that life-long friendships are forged through playing DnD, and so the collaborative storytelling experiences that are created are so powerful that there’s enough intrinsic motivation for people to solve the technological roadblocks that will enable this type of collaboratively emergent form of storytelling. Currently Mindshow VR is on the leading edge of creating this type of collaborative storytelling platform.

VR Chat is empowering the most sophisticated level of collaborative storytelling with their open world explorations of interconnected metaverse worlds. But the stories that are being told here are largely generated from interpersonal relationships and the communication that happens with the other people you happen to be going through the experience with. Having meaning be co-created and constructed by a group of people shows that collaborative and social storytelling experiences are something that is unique to the VR medium.

But there’s still a lot of power in being able to use an environment to tell a story.

Rand Miller’s Obduction and The Gallery: Call of the Starseed are two stand-out examples of environmental storytelling in VR, but there’s still a lot of room for where social and environmental storytelling will go in the future.

Right now a lot of the open metaverse worlds are largely empty aside from what are clearly non-verbal NPC characters aimlessly roaming around or the occasional other person, but they have the potential to be filled with either live immersive theater actors or AI-driven characters to create what Charlie Melcher calls “Living Stories.” Living stories engage the participation of everyone involved, and they feel like an emergent construction of meaning, but have a clear narrative trajectory led by the storyteller. Alex McDowell says that we’re moving back to the oral history storytelling traditions, and there are unique ways that VR can overcome the vulnerability of the first-person perspective and emphasize the importance of many different perspectives with different positions of power and privilege.

Having AI chatbots within open worlds will be the next logical step, but Rand Miller says that architecting a non-linear story through an environment is not an easy task. Otherwise, there would be a lot more people doing it. Stern says that part of the open world storytelling dilemma is that there’s a tradeoff in being able to explore an open world without limits and still be able to communicate a story with an engaging dramatic arc without it feeling fractured and unsatisfying.

This is where emergent conversations with AI characters powered by a global drama manager start to come in. Stern envisions a future of interactive drama where you can have complete and meaningful expression of your agency through conversational interfaces with AI, where there is giving and receiving with meaningful participation and active listening. If there is a drama manager in the background that’s planning the logistics system driving a simulation, then the future of AI and VR has a lot of promise of not just being able to watch and observe a meaningful story, but to fully participate in the co-creation of a living story, which is a process that Stern prefers to call “story making.”

Rough Transcript

[00:00:05.452] Kent Bye: The Voices of VR Podcast. My name is Kent Bye and welcome to The Voices of VR Podcast. So on today's episode, I have Jessica Brillhart. She is the principal filmmaker for virtual reality at Google. Now, Jessica enjoys a privileged position in the sense that she's looking at storytelling but within the context of being at Google, which is really the world's leading technology company when it comes to artificial intelligence. So she has access to all these leading-edge machine learning and artificial intelligence researchers and is starting to think about how can they start to use AI in order to make VR experiences a little bit more interactive and plausible. But in terms of storytelling, I think in this conversation in particular, I had some really huge insights in terms of the role of AI. And AI is really going to be the glue that bridges the gap between a passive film experience and an interactive game, and to really make plausible all the four different dimensions of presence, which I think in this interview, I really explained the elemental connections to the four different types of presence. And looking at the different elements, we can start to look at the differences between mobile VR and passive 360 film and what's going to be possible in a fully room-scale interactive experiences that feel more of like a conversation and how artificial intelligence is really going to be the key missing element that's going to really enable that. So that's what we'll be covering on today's episode of the Voices of VR podcast. But first, a quick word from our sponsor. Today's episode is brought to you by the Silicon Valley Virtual Reality Conference and Expo. SVVR is the can't miss virtual reality event of the year. It brings together the full diversity of the virtual reality ecosystem. And I often tell people if they can only go to one VR conference, then be sure to make it SVVR. 
You'll just have a ton of networking opportunities and a huge expo floor that shows a wide range of all the different VR industries. SVVR 2017 is happening March 29th to 31st, so go to VRExpo.com to sign up today. So, this interview with Jessica happened at the Sundance Film Festival that was happening in Park City, Utah from January 19th to 29th, 2017. So, with that, let's go ahead and dive right in.

[00:02:34.390] Jessica Brillhart: Hi, I'm Jessica Brillhart. I'm the principal filmmaker for virtual reality at Google. And this is our one year anniversary. Happy anniversary.

[00:02:40.675] Kent Bye: That's right. Yeah, we talked last year at Sundance when we first met. So we were talking all about some of the more philosophical ideas of what's it mean to do VR and film storytelling. What are your thoughts over the last year? What have you learned?

[00:02:53.415] Jessica Brillhart: Well, I mean, still care a lot about artificial intelligence and machine learning being incorporated into the VR space. I'm really, really interested in that, both from an artistic standpoint, like we're seeing really cool stuff being done in Deep Dream and Style Transfer, and some of the aesthetics are being embraced by the machine learning community as well. And we have some teams at Google that are doing some really great work in that, also around audio, speech, and that, plus, you know, thinking about functionality, like, kind of how does a creator and a system work together to create something in VR, and I think that that's where it's all gonna go, so I'm really excited to work on that. You know, we're seeing some beginnings of interactivity, and, you know, I have a few thoughts on the whole storytelling thing, which has begun to become more of, like, something that's been eating at me, because it's just, now every time I hear it in the context of VR, I get really angry. So I've trained myself to just be so completely like averse to that word because I don't think it means anything anymore. You know, and evaluating kind of what that means for what story or narrative is in the space. So yeah, that's in a nutshell where I've been.

[00:03:57.502] Kent Bye: Yeah, there's a couple of things that I find really interesting about that. First of all, you know, being at Google and artificial intelligence, you're probably in a very unique place to be able to talk to that cross-section, so I'd love to dive into that. But let's start with the anger around storytelling first. I feel like, so I read, you know, you had a really great philosophical article in Filmmaker Magazine talking about kind of the state of VR, and what I got from that is that you're talking about there's an intersubjective experience and narrative that's being projected onto a wider experience. And that is sort of the framework that you're talking about storytelling. Maybe you could expand on what you meant by that.

[00:04:34.606] Jessica Brillhart: Yeah, I mean, I think something I've been really thinking about a lot is this idea of, I mean, in that article too, I start with a quote from Vertov, who basically explains what he believes to be the future of cinema, this idea of like I can take this disattached eye and show you a world that is unknown to you. Like I can be next to a horse or under a train or... And with VR it's reattaching that disattached eye. So now suddenly it becomes like both the eye of, you know, the beholder of the visitor and then you have this eye that is mine that is meant to almost be a filter to the experiences and worlds that I bring you to. You know, it's not binary. It's not like there's complete disembodiment and embodiment mediums. But I believe that VR is more about embodiment. So it's predominantly that and very much less about the disembodiment. Although there's lots of like putting yourself in places and projecting yourself on things. Like we do that normally anyway. I think that a lot of the craft of VR, and we've seen that from editing to acting to even various things that work in narrative to moving camera rigs to interactivity, has all been based upon that idea of embodiment. And I think, much like most things in the real world, we seep out. There's not a delineation between me and the world. My brain seeps, my physicality seeps, I interact physically. We are always constantly embodying something. We are always constantly trying to meld ourselves to spaces and places and experiences. I think there's a lot there, and I think the storytelling aspect blocks us from that. I think once you think about telling, you're thinking about, I know that what I'm thinking and what I want to communicate is vastly more important to what you may have to contribute to that. It's always a two-way street, but it's a bit more a one-way thing from me to you. 
And this is a bit more like really crafting for someone being able to kind of come in and discover elements that are important to the narrative or the story, but may not be necessarily the way that I've thought it should be experienced or should be told. And I think that's it. I think that's where VR should go. And why a lot of the mistakes that we're seeing and a lot of things that aren't quite working aren't working. It's because they're not embracing that as much as they should.

[00:06:45.355] Kent Bye: Yeah, I have a number of different thoughts about that. First of all, it reminds me of last year, Eric Darnell was describing to me the difference between film and VR, and the way he framed it was that in film, you're basically having a singular perspective of somebody's experience that they had. They're telling their story of their experience, and that in VR, you're given the raw experience that you're then able to generate your own stories from. And so it's like, how do you cultivate that process of having that experience? Part of the dimension of having an experience is that you have agency, you have the ability to make decisions. And that ability to make decisions means that there's, from Mel Slater's conceptualization of presence, there's the place illusion and the plausibility illusion. So you're transported to another place, you know, that's the unique affordances of VR that create that. But the plausibility, I think, is connected to these different levels of presence. And I would say, I've come up with an elemental theory of presence, that there's actually four dimensions of presence.

[00:07:38.296] Jessica Brillhart: Nice. Probably be very similar in our thoughts on that.

[00:07:40.650] Kent Bye: And so earth is the embodiment. So actually being in your body and having your hands tracked. And so you having the virtual body ownership illusion. And then the fire element is your active exertion of your will. So you're exerting your will into the experience in some way. And so it's somehow dynamically interacting with it. You take action. So then the air element is all about social and mental presence. It's the ability to both have your mind stimulated, but it's about communication and it's about relationship with other people. So there's a dimension of presence that comes when you're with other people. And the final is emotional presence. That's the water element. That's essentially the ability to just feel all the different process of your inner experience, your subjectivity, all those feelings. And so the types of experience that include all four levels of presence are the ones that feel like a full experience, ones where you feel embodied, where you have your ability to make decisions and to exert your will into an experience. Having some sort of stimulation of your mind or communication or other people involved. And then finally just your emotions are engaged in some way. And right now I feel like the VR community is split between mobile and full immersive room scale. And that the mobile actually has only half of those presences. You can't do embodied presence when you don't have six degrees of freedom with hand-tracked controls.

[00:08:58.363] Jessica Brillhart: I would argue one of the most brilliant people on this earth is Stephen Hawking. He's a genius. He's able to postulate about reality in a way that no one else can. I think he had a quote that said something like, my inability to use my hands has allowed me to find unique ways to traverse the universe or something. I'm butchering that. But I don't believe that physicality is a necessary part of experience.

[00:09:20.794] Kent Bye: Well, what I would say is that whenever you reduce one level of presence, you amplify another presence so that you're reducing the earth element and increasing the air element in that way.

[00:09:28.333] Jessica Brillhart: Our brains changed. I mean, if you think about, you know, one of the things I thought was really interesting in a project I'm working on now, and it's taken a bit because I'm really trying to think whether or not I want to do this or not, but it's this idea of how does a deaf person see? Like, how can you show deafness through a visual? Because what happens when you lose your ability to hear is that your visual acuity goes up. And something happens, it's called motion parsing. Supposedly what happens is your brain kind of remorphs itself to allow for you to be much more visually aware of things. So it's almost like a long exposure. Like if I wave at you, you know, you see kind of like a wave and you get it and you move on. But in the context of like a wave to someone who can't hear, they actually see these steps all in one kind of frame, kind of these like parsing anything. It's just like these, you know. And it's amazing how our brains shift, how we become more aware of things that we don't when we start losing certain perceptual abilities, which is why a lot of, you know, in a 3DOF space, mental engagement is huge, because that's all we have the capacity to do, because we've lost, you know, we can't move, so you better freaking be dealing with my brain here. And in the physical space, brain still matters, but there's a tension there between what my mind's doing and what my physicality wants to do. And once you both have the ability to do that, you then start to distrust other things. And then you have to make sure you, in a lot of ways, almost under-promise and over-deliver on that part, too. But it's interesting, because for me, this kind of feeds into the AI bit. Speaking of Hawking, I think a little bit about string theory, or even the idea of M-theory, right? What you're talking about is there's not necessarily one singular way of understanding, like stringing this all along together. 
There are various realities that are kind of justified in coexisting. And with the string theory idea, it's more like, right now, most of VR is sort of on this three-dimensional plane thing. We're on the third dimension here, where essentially VR has the capacity to do six-dimension stuff, seven-dimension stuff, where we're able to traverse various permutations of things that we can't even begin to comprehend because we're very limited in the way that we perceive the world. So, you know, you start thinking about how machine learning can help create these various permutations that we can't imagine. And, you know, as a creator, how do we deal with that, right? Like reality to me is such an interesting topic because it's something that we don't completely understand. But to what you're talking about in terms of the elements, for me, it's also been about you have this kind of questionable objective reality that may or may not exist, but we don't know because we can't tell. And then you have this idea of experience, which justifies existence, and existence justifies kind of the universe. The universe isn't perceived unless we're able to perceive it. Not to say it wouldn't exist objectively, but in terms of that need and want for more enriching experiences that justify our being somewhere. That tension is interesting too.

[00:12:16.371] Kent Bye: So I just wanted to kind of lay out the spectrum between mobile and room scale and what is new about VR from film. So in traditional film, you could only ever have the water and air element, really. You have your ability to pay attention and watch with your mind and think about things and have your emotions move, but you don't have real embodiment or real agency or ability to interact within the experience at all, and that's what is unique about VR.

[00:12:41.141] Jessica Brillhart: That actually works against you more in the film sense, like I'm eating popcorn, I'm checking my phone, I'm talking to my mom, like one of those things.

[00:12:47.065] Kent Bye: Right, yeah, you actually want to turn all that off so you can really just pay attention with your mind and with your emotions.

[00:12:52.009] Jessica Brillhart: Talking kind of about why, you know, on airplanes people cry more or laugh more at movies and it's just because generally you're not really talking to anybody, you're not really eating popcorn, you're kind of like stuck watching that and how much more emotionally engaged we are when we can't do anything else except experience that thing or actually like feel ourselves more connected to it. It's kind of interesting.

[00:13:10.891] Kent Bye: Yeah, and so the mobile VR is replicating that right now because we don't have full expression of our embodiment or any agency that we have is abstracted. It's abstracted through buttons or through trackpads. It's not a true intuitive interaction. I feel like that in the full room scale, you have a full expression of your agency in a way that's natural and intuitive and not abstracted and that your ability to be embodied within a virtual reality experience is also amplified. And so those are the two new elements that we have that we're able to actually create a full experience. And I think that's the difference between receiving a story through film versus giving a full experience, is that you're actually enabling all four of these different levels of presence in a way that we've never been able to do before.

[00:13:51.953] Jessica Brillhart: Absolutely. I mean, well, it's like representation, right, also. One of the things that I definitely look at normally, the things that we're finding from a craftsmanship perspective in a 3DOF space, in a mobile experience, are the same things that need to exist in a positional one. I mean, I think I've found that the room scale stuff is still kind of not there either. Like, one of the pieces actually here that I absolutely love is Rachel Rossin's piece, The Sky is a Gap. It was like this idea of attaching physical concepts to our own physicality, like, taking something like time, attaching it to my ability to move, and seeing what happens when you can change that. Like, that's brilliant. Like, that's a really smart thing to do. And I think that there's something in that that's very compelling.

[00:14:46.198] Kent Bye: Yeah, Superhot is probably the most compelling game on the Oculus Rift, and it does exactly that, is that when you move, time moves, and being able to tie your movements to the progression of time is really compelling, and in Rachel Rossin's piece, she's actually tying that to the progression of some sort of narrative, so you're physically moving around a full room-scale space, and then as you move around, then the story unfolds. And so I think the challenge right now is that in film, or just in life in general, we always have our objective reality and our subjective reality, and they're always happening at the same time. And in life, we always have our free will that we can express our agency and change our objective reality, but in film, you can't change the objective reality. You can only project your subjective reality into the experience you're getting. And in VR, you're now combining the ability to change your objective reality by exerting your will within an experience.

[00:15:36.340] Jessica Brillhart: You know what's interesting? So, you touched on something that's really important in the mobile space. Stuff that I've been really interested in from this idea of mental engagement, right? Which is like, can I understand what you feel compelled to do at any point in time, and can I respond to it? So it gives you sort of a sense that there's interactivity, even though there's absolutely none. Like, you know, like no actual, like, you're not actually deciding, like, okay, now we're going to go to this horse stable. Like, no. Or now I'll respond to, like, what's that over there with, here's the answer to that. And so that's where we need to get to from a 3DOF experience is just to, or a mobile experience, or whatever you want to call it, is a 360 film, 360 video, VR video. You need to create kind of like a faux interactivity thing. You need to kind of understand the nuances of engagement and be able to have that conversation. So at the very least it's an acknowledgement that someone has her own brain and is able to make her own decisions and to interpret the world the way she wants. And I think that's what I really love about that format is because it's harder. And it's hard for anybody making anything in VR, but specifically that, it's so interesting. It's not like a new thing from a film perspective either, like this idea of there's been some really interesting cognitive studies on how someone engages with film. You see a doorknob, what happens there? Even heat map type stuff's done on a frame. You look over there, does something happen over here? It's a lot of similarities between that and what's been going on in the VR space, except, and I talk about this a lot, it's just there are layers of how our brains function, how we interpret the world, from how experiences made us kind of understand the world, how we respond to the world based on our experiences. 
But also our abilities to perceive are how we evolved, you know, to feel about things, the things that are hardwired, that is really hard for us to change, and we can't. So I feel like there's so much depth there that as exciting and as interested as I am in a positional space, I kind of still have a few projects I want to do in the 3DOF space before I go too far out.

[00:17:37.051] Kent Bye: Well, I think that's where the AI is going to come in, because AI, you have conversational interfaces where you're speaking and you're able to exert your will into an experience that's more natural and intuitive. So you're bringing in the fire element in a way that, right now, all the ability to exert your will into an experience essentially comes down to looking at things for a while, gaze detection, maybe tapping something with a button to activate things, but it's not like you're able to actually fully interact. And being able to speak with a character, I think, and be able to make decisions in that way, I think is gonna be able to bring a lot more ability to use the unique affordances of AI, which is the natural language processing and being able to have them understand what you're saying and then you be able to react and have like an actual conversation that's unfolding.

[00:18:20.212] Jessica Brillhart: Yeah, no, I think some of the more interesting stuff though is the stuff that like is what we can't predict. The article that I wrote for Filmmaker is actually taken from a quote from Blaise Agüera y Arcas, who is one of the lead research scientists on our machine learning initiatives at Google. He said, you know, we live in uncomfortably exciting times where, yes, we could do natural language with, you know, virtual characters and so on. And AI is definitely primed for that. But some of the more interesting things, I think, in AI, you see DeepMind, it basically generates its own language based upon understanding and learning tons of other languages. It kind of comes out with this.

[00:19:00.289] Kent Bye: It's like an intermediary language to translate to that and then to other things, right?

[00:19:04.121] Jessica Brillhart: There's a word for it too, and I can't remember what it's called, like interlingo or something like that, but the idea is you can basically have a system that understands all the possible similarities and cadences and cross-sections between all the languages that exist on this planet. And suddenly you have this language that is essentially every language, but no language. And I think that's what's so interesting about VR is also, if you think about embodiment, if you think about speech, if you think about the way we perceive this idea of everything but nothing. Because everyone can relate to it, but it's not anything specific. And it's not anything that is literal. It's not like, that's English, and that's French, and that's Spanish. It's like, no, this is everything. There's also that photo that came out years ago, which was like, this is the average American. It was coming up with the average of all those photos. Like, this is a person in the States. And I think there was one about, this is the world's human. Like, this is what an average human looks like. And it's every person and no one. Average Tabby. That was a Google Brain project. They took a frame from a lot of YouTube clips and did neural brain surgery and tapped into all the different neurons in this neural net that was trained on these images and to identify what it was sensitive to. Certain neurons are sensitive to certain things in the world. And they found one that was particularly sensitive to cats. And they had it output basically what it thought a cat was. And they call that Average Tabby, which is every cat and no cat. But it's compelling because that stuff is like, what do we not have the capacity to get? You know, as a creator, I could probably come up with like 30 permutations of something, maybe a little bit more before I go insane, you know what I mean? 
And having a system also be able to come up with probably 100X more permutations of a possible outcome to a narrative based upon decisions you make, things you look at, directions you go, ways you hold your hands, can you hold your hands? You know, accessibility being a big thing there too. And then you start to think about like, okay, there's going to be things that I'm just not capable of understanding that this thing will. And the one thing that I learned from just working in the limited amounts that I've worked with our AI folks is bringing Deep Dream into VR, seeing how that works is the peanut butter methodology for AI, just AI for AI's sake is not the way it's going to go. It's going to be a conversation between the things that are important to me as a creator, things that I would like to express, mostly parameters, you know, strings I can pull, and then a system coming in and sort of building up on that. So it's sort of this really awesome hybrid between things that I'm thinking about and objectively what's possible. And so into what you're saying about the subjective and objective reality, both of which exist, AI being the representation of a potential objective reality is?

[00:21:48.940] Kent Bye: Actually, I think that AI is actually more of a subjective experiential technology because you have to give the AI an experience of data. And that has more of an ability to make subjective decisions. So I feel like VR and AI are both experiential technologies.

[00:22:02.108] Jessica Brillhart: What I mean to say is, oh, no, for sure. I'll go back. You're right. It's an objective, subjective reality.

[00:22:08.421] Kent Bye: Yeah, and that's a problem. Whenever you try to quantify something that's subjective, there's always going to be some error. So I know Peter Norvig has talked about some of the technical debt of machine learning. And there's a lot of things that you could just add some white noise and have the features that are detected be flipped over. And so that's why, right now, there's a lot of supervised learning that's happening, where it's like you're giving it data that you're tagging. You're saying, this is the input. So you're having the actual humans give their subjectivity objectively. And so it's actually more of a reflection of a human's objective subjective reality, if you understand what I mean.

[00:22:37.618] Jessica Brillhart: Absolutely, I think so, because it's my objective understanding of the subjective reality that the machine is then interpreting, which is then again subjective, because it's by nature a byproduct of a large data set of subjective understanding. Yeah, humans at this point need to be in the loop, because humans need to be the ones that are looking at it. I mean, it's not like AI is like a plastic box or something. It's something that learns based upon our own experiences, collective experiences, right, like you said. And I feel like, you know, one of the big things that we talk about when we think about AI is like, you know, are the data sets right? Like, do they really reflect what's happening? And responsibility in part, too, as a creator in the VR space, bringing machine learning or, again, I haven't done this yet. I just imagine, and I'm postulating what I think would be important. You know, you decide what those data sets are. I mean, I can imagine a future where you train your own neural net, and that neural net is like, the auteur becomes you plus the neural net. And you can decide, like, you know, okay, I want my neural net to be trained on images from this particular continent. So you suddenly have, like, can that change? Can it be like the narrative reflects certain cultural norms based upon this country? So like, you know, we talk a lot about proximity in the VR space. Like some cultures love to be like up close with people. They have no problems with that. Then you go to like Finland and everyone wants to be like a thousand feet away from each other all the time. So you can imagine, okay, if I do a VR experience and I go to Finland with it, and it's about being around other people, I can then have a system understand, based upon its understanding of that country, or maybe that community, to keep people further away, at least in the beginning, and then bring them closer together.
Versus if I bring it to South Africa, where generally it's fine, you know, like you'd basically have people close up right in the beginning. If you imagine like Gabo Arora's Waves of Grace, that last shot where you're on the beach and you have your main character like really close up to you, I was like, it was a little uncomfortable. But some people loved it. So you can imagine what if that character kind of had an understanding of how comfortable you were and was able to move away or closer to you depending on how you felt. Now, I do see that there are issues with that, too. Because some, again, it's the data set. Some countries may not be representative, really, of the country. It may just be based upon 20 people that have decided to keep their data open. And that's how you are able to do it. And the majority of people actually love being close to other people. And that's the creative part. And that's the nuanced part. Where it's not just AI for AI's sake, it's not just slapped-on machine learning and system understanding and data sets, it is literally going to be us deciding the parameters by which we train that system that works with us. Beyond just how much that system affects the creative piece, beyond the ways in which it affects it, beyond the vast permutations, what those permutations may look like, it is what that thing actually is, and what it filters in order to be able to create those experiences, and how it's able to subjectively, objectively, subjectively understand.

[00:25:27.589] Kent Bye: Yeah, the way that I would summarize the role of AI in VR is it's going to be all about plausibility and presence. And I went to a creative AI conference and talked to Michael Mateas and a lot of people at UC Santa Cruz, who I think are kind of the most leading edge people using AI.

[00:25:42.379] Jessica Brillhart: Santa Cruz is pretty leading edge, yeah, it's a good place.

[00:25:45.487] Kent Bye: So I talked to a lot of the students there about their projects, and what I see right now is that for any interaction you have with a character, if you look at Façade, for example, from Andrew Stern, you have natural language input, and they're able to basically get emotional intent, whether or not you're agreeing or disagreeing. It's very high level, and they're able to feed that into an algorithm to have a reaction, but it's at an abstracted high level. In order for you to actually feel like you're present in an experience, and for it to feel plausible, whenever you have an interaction with a character you want to have a conversation that's emergent. On the spectrum of storytelling, you don't want to have an authored experience where you feel like the narrative is being shoved down your throat. You want to feel like you're actually participating, that you have global agency, and that your small decisions are changing the outcome of your experience.

[00:26:27.220] Jessica Brillhart: Absolutely. I mean, that's the crux of VR in general, right? I mean, it's just this like, I don't want to have to keep predicting what's going to happen. And I want to be valid. Like I want to affect my space somehow, or at least give the impression that I'm freaking affecting it. But like, and you're seeing a lot, you know, there are a few things even here that just, and I won't go into what, but I mean, it's just like, there's some that are just like, I'm there, but it doesn't matter if I'm there or not. It's more like I'm being told the thing. And I know it's because it comes from a mind that wants to be a storyteller, which is like, great. There are tons of mediums that are great at that. Go do it. The exciting part and the untapped stuff, the stuff that we're still kind of all figuring out and exploring, is not the bucket of answers. It's not the choose-your-own-adventure story. There is nuance and weird, kind of strange outlier permutations. This is kind of similar but different, but I've been working with this team called Retinad in Montreal, and they're great, and they've been taking my pieces and some footage that I had kind of lying around. And they particularly took my film Go Habs Go, which is the Montreal Canadiens ice hockey piece, and they did a heat map test on it. And the things that I found most interesting were when I saw a little tiny dot, a little tiny heat map dot, kind of go away and wander away. And there was one in particular that was just like kind of like, you know, just like wherever it was going. And all I could think of was that is so much more interesting. Like this other stuff's great and it's nice that that's there, but like the outliers, those permutations, the oddballs, like that's what makes experience so great.
And so whether that be just the randomness in response, whether that be just the random like kind of stuff that happens in the experience, like can you imagine like a rando filter that you just like turn up, or like a knob, and then suddenly like, you know, the system decides, like evaluates the space and maybe like adds a little rat in the back. Or like it adds like bird calls somewhere else. Like it knows that when you've looked in the other direction that you're wandering off, that it creates and permutes on top of that. Like the understanding of randomness, that's huge. And that's also something that people in the machine learning world are thinking about a lot. And that's where creativity is.

[00:28:37.162] Kent Bye: Yeah, I feel like the way that I'm understanding the spectrum is that on one end you have film, which is a completely yin experience. You're just receiving. You're not able to participate. So you're receiving a story, and it's all about empathy and being able to just project your subjective reality into that. And so at the other extreme, you have games, which is a completely interactive experience where you're expressing your agency. And it's about really finding the full expression of what you can do with your will. And that's all about the yang principle. And I think that this tension is where VR is holding, is really in this middle where you're both giving and receiving. You're both expressing the yin and the yang, where you're able to listen, but also participate. Because the listening may actually be a core part of a conversation. You have to listen and then respond. And I think that's what makes an experience. And I think that's where AI is going to help kind of bridge that gap between that authored story and the interactive game, which is all about the agency. That thing in the middle, I think that's going to be where VR and AI sit. And I would call that just experience.

[00:29:37.086] Jessica Brillhart: Yeah, and I guess my argument is it's not a binary thing.

[00:29:40.787] Kent Bye: Yeah, it's always in flux. It's always some combination of some. And it's almost like a quantum collapse of you're doing one or the other. And there's a lot of context switches that happen in games. They're like, OK, now we're going to do the storytelling mode. Now we're going to do the interactive. But it's not able to really combine the two because it doesn't have the dynamic ability to be able to respond to user agency. And that's where AI comes in.

[00:29:59.090] Jessica Brillhart: Yeah, exactly. I mean, I think anything can happen at any time. I think once we get there, we'll be in good shape.

[00:30:07.022] Kent Bye: Awesome. And finally, what do you see as the ultimate potential of virtual reality and what it might be able to enable?

[00:30:12.824] Jessica Brillhart: Oh, come on. How long do I have? 30 seconds? I don't know. I mean, I think I know sometimes, and then it moves. I find the things that we try to block, the things that we try to control and keep at a certain level, those are the aspects that are narrative layers. And I think, actually, you know what? Here's an answer. I've found in my limited experience in this field is the tension points, the points of conflict that we are experiencing, the things that are like, we're not good at this yet, like something about this isn't working. Those are the places where VR is. Those are the points that we need to go for. The places that make us feel really uncomfortable. The places that we're failing or seemingly failing. Because what we're finding is that a lot of people are getting way too comfortable and they're kind of sticking to the comfortability of it. And I believe that those tension points are where the medium lies. And every time I make something, I find a new one. And I imagine every creator feels the same way. And I think the more that we really go for those tension points, the more this thing's going to unfold for us.

[00:31:21.467] Kent Bye: Awesome. Well, thank you so much.

[00:31:22.608] Jessica Brillhart: Yeah. Thanks, Kent. Next year, right?