I interviewed Buried in the Rock creators Shehani Fernando & Anetta Jones at IDFA DocLab 2023. See more context in the rough transcript below.

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality


Rough Transcript

[00:00:05.452] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR Podcast. It's a podcast that looks at the structures and forms of immersive storytelling and the future of spatial computing. You can support the podcast at patreon.com slash voicesofvr. So this is the 6th of 19 episodes in my IDFA DocLab series, and today's episode is featuring an immersive nonfiction piece called Buried in the Rock. It's a VR piece by Shehani Fernando, and I also had a chance to talk to Anetta Jones, who's a senior producer at ScanLab Projects. ScanLab is using a lot of LiDAR technologies to scan these different spaces, which are then transformed into a point cloud representation. This was a documentary piece following a couple of speleologists, so people who study caves, Pam and Tim Fog. The piece scans the inside of a cave and represents it as a point cloud, and you can move around the space, and as you move around you hear a spatial sound capture of what it sounds like to be in this cave. The point cloud representation is sparse, but it's enough to give you a sense of the spatial architecture of these different locations. So, yeah, it's really exploring how you could use LiDAR technologies and a point cloud aesthetic to tell stories. So that's what we're covering on today's episode of the Voices of VR podcast. This interview with Shehani and Anetta happened on Saturday, November 11th, 2023 at IDFA DocLab in Amsterdam, Netherlands. So with that, let's go ahead and dive right in.

[00:01:40.838] Shehani Fernando: So I'm Shehani Fernando. I'm a documentary VR director, if that's a thing, I'm not sure. I've been working in immersive for the last, gosh, nearly seven or eight years. And yeah, we're here with a piece called Buried in the Rock, produced by ScanLab Projects.

[00:01:56.868] Anetta Jones: And I'm Anetta Jones. I'm senior producer at ScanLab Projects. My background is working as a nonfiction producer across different mediums: VR, film, AR, and immersive art installations. And yeah, I've been working in immersive art since 2016, which is when I met Shehani at The Guardian.

[00:02:13.952] Kent Bye: Great. Maybe you could each give a bit more context as to your background and your journey into VR.

[00:02:19.594] Shehani Fernando: Yes, as Anetta says, we met in 2016 at The Guardian. I'd been at The Guardian since about 2006, but largely as a filmmaker, and delving into other things like geolocated audio walks and interactives. The Guardian's always been kind of at the forefront of new media and change, with podcasts, certainly. But in 2016, we managed to get some Google funding to set up an internal lab at The Guardian making purely VR. So we had, looking back now, a rather wonderful couple of years just really experimenting with the tech and all sorts of different things we could do as a way of bringing new kinds of features to audiences, telling stories through the perspective of people. Some of that was embodied, some of it wasn't. But it was a real test bed in terms of how we could do journalism in a new way. It was largely using older tech like the Google Daydream, if you can remember that, and the Oculus Go, but actually we really learned an awful lot. And during that time, we also made a different piece with ScanLab Projects called Limbo, which I directed, about the refugee asylum crisis and what it's like to be somebody who arrives in a land and has to navigate the system in order to be given their leave to remain.

[00:03:39.511] Anetta Jones: Yeah, so I've been working in documentary films since about 2008. I started at a company called Met Film Production, then worked at a company called Archer's Mark, across branded content but mainly documentary-focused features and TV. Then in 2016 I started working at The Guardian on the two-year-long experimental project, which was a VR studio. As Shehani described, looking back on reflection, it was very privileged to work in that space. I mean, at the time it felt like hell, very stressful, because obviously you're constantly innovating and having to experiment with something that hasn't been done before. So you're kind of inventing the wheel every single day. So it was very challenging, but it was great. And then I continued working at The Guardian, not in VR. I went back to producing some documentary film, and then I worked at Anagram and produced Goliath with May and Kirstie and Barry and that whole team, which was brilliant. And then I've been at ScanLab for just over the last two years now.

[00:04:46.876] Kent Bye: Yeah, I remember Limbo had a similar aesthetic to Buried in the Rock, with these point clouds where you were able to essentially take a still-life snapshot, but I recall that the camera is moving through these different spaces. So maybe you could start there, in terms of developing this use of point cloud technologies, and whether there was any project before that, or if that was really the first time that you started to experiment with point clouds as a way of capturing different moments to then tell stories.

[00:05:18.759] Shehani Fernando: Yeah, so I mean, I love the point cloud aesthetic. I know it's been done quite a lot now, but when we first did Limbo in 2016, there hadn't really been anything like it in VR. For Limbo, because of this subject matter around the refugee crisis, it really lent itself to the way we told the story. We didn't want to make a 360 film looking at one case study shot in an apartment block or whatever it was going to be. We did think about that, actually, and went down that road. But then it became clear as we started doing research and working with organisations that what we needed was something much more abstracted, and the story is actually told through a montage of audio from about 15 different people, 15 different asylum seekers across the UK, and we travelled far and wide to record them. We wanted to create a universal experience, not exactly giving you the position of being in that, because, you know, we can never do that, but moving you through these fragmented landscapes. It's a cityscape. You start at quite a high viewpoint, moving through this road, and slowly it winds its way down to a very particular house that's lit up, and you enter that house. Again, it's kind of like an x-ray, like walking through these buildings, but you enter this living room, you see people in the kitchen, you're moved up the stairs into these bedrooms, and all the while, you know, you're hearing these quotes from people, real-life audio, as well as a little narrative voiceover that we managed to get the actress Juliet Stevenson to do for us. So it felt quite powerful as you move through this space. And what was great about the aesthetic was that you're also able to merge worlds. So from the bedroom, the bedroom turns into a ghostly recreation of Aleppo.
We actually managed to get this high-quality 4K footage that we were able to extract depth information from and turn into that point cloud aesthetic. And then it turns into the immigration interview room, where you have quite a visceral experience: you're effectively interrogated by someone from the Home Office. And again, every question was taken from a real transcript. We worked with lawyers on that. So, yeah, I think that black-and-white, fragmentary way of moving through point clouds gives it this slightly liminal quality, this dreamlike sense, but it can obviously create these perfect replicas of spaces at the same time. Anetta can talk a bit more about ScanLab's work, but I do think they are real pioneers in this space, and the attention to detail, the care and craft that they put into it, and the huge post-production workflow are not to be underestimated. It's very hard to make work, actually, particularly on something like the Quest, which we were working with for Buried in the Rock, which isn't tethered. And, you know, there were certain technical challenges in how to really optimize that.

[00:08:14.707] Kent Bye: Yeah, I know I had a chance to see Framerate at South by Southwest in 2022 and talk to one of the co-founders of ScanLab, and then it was showing at Venice 2022. So I'd love to have you elaborate a little bit on point clouds, and particularly the LiDAR scanning technologies that ScanLab is using. Yeah, I'd just love to hear a little bit of your reflections on point clouds and what you're able to do with them now.

[00:08:36.953] Anetta Jones: Yeah, sure. So, as was probably described on the last podcast that Matt Shaw was on, Matt Shaw and Will Trossell were the founding directors and the creative directors of ScanLab Projects. They're trained architects by trade, and that's how they started playing around with this 3D LiDAR scanning technology, using it as surveying equipment for buildings and landscapes. And it's when they started playing with this technology that they realized you could actually get these incredible data sets, which you can then turn into beautiful visuals. Because, briefly speaking, and I'm sure the audience knows this, the LiDAR scanner sends out millions of pulses, which help to measure distances, and it's those tiny little points that create this beautiful point cloud aesthetic. And so for over a decade now, the team has been experimenting with that and creating all different types of visual content, and not just content that you see on screen. They've also been able to create sculptures with it, 3D living sculptures. We've produced work that's been used for projections in theatre, virtual reality, broadcast, all sorts of things. But it's interesting, with Buried in the Rock and Limbo, from when I've been talking to the guys. I've only been at the studio for just over two years, and obviously when I joined, one of the first things I asked the guys was: what made you move into virtual reality? What made you start working with point clouds? I think one of the best ways they described it is that the point cloud is a 3D medium, and prior to virtual reality, it could only ever be shown or seen on a 2D screen, which obviously didn't do justice to what they knew was possible. And so it was a very natural, organic transition into virtual reality when this medium got popularized,
because all of a sudden the way that they were able to spin around and see these 3D models on screen, other people were able to actually immerse themselves in it and move around and explore it.
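For readers new to the measurement Anetta describes, the core of a pulsed (time-of-flight) LiDAR reading can be sketched in a few lines: each pulse's round-trip time gives a range, and the scanner's horizontal and vertical angles at that instant turn the range into one point of the cloud. This is a minimal sketch assuming an idealised time-of-flight scanner; the function names are hypothetical, and the episode does not say which scanners ScanLab uses (many terrestrial scanners use phase-shift ranging instead).

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second (exact, by definition)


def range_from_round_trip(seconds: float) -> float:
    """Distance to the surface: the pulse travels out and back, so halve the path."""
    return SPEED_OF_LIGHT * seconds / 2.0


def to_point(distance: float, azimuth_deg: float, elevation_deg: float) -> tuple[float, float, float]:
    """Convert a range plus the scanner's two angles into an (x, y, z) point."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = distance * math.cos(el)  # projection onto the ground plane
    return (horizontal * math.cos(az), horizontal * math.sin(az), distance * math.sin(el))


# A return after ~66.7 nanoseconds corresponds to a surface roughly 10 m away.
d = range_from_round_trip(66.7e-9)
print(round(d, 2))  # 10.0
point = to_point(d, azimuth_deg=30.0, elevation_deg=5.0)
```

Repeating this millions of times while the scanner head sweeps through its angles is what produces the dense clouds discussed in this episode.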

[00:10:34.113] Kent Bye: Yeah, well, I wanted to ask a follow-up on point clouds, because it turns out they're a core foundation of a new rendering pipeline called Gaussian splatting, which was just presented for the first time in August of 2023, which is probably a little too recent to have been translated into this project. But the core foundation is that point cloud: you take those points and essentially put a disk on top of each one, and then, as with neural radiance fields (NeRFs), you train it, so the rendering ends up adding this neural radiance field dimension to it. It ends up being able to take these huge data sets and produce some of the most photorealistic real-time renderings that I've seen, way better than the photogrammetry artifacts that I get sometimes. Given that you're working with point cloud data, I'm wondering if you've had a chance to experiment a little bit with Gaussian splats as a rendering pipeline, and yeah, just some of your reflections on that.

[00:11:24.221] Anetta Jones: Yeah, I mean, as you said, it's very recent, and it's definitely something that the studio have started talking about and doing very early experiments with. It's not something that we've incorporated into our pipeline just yet. I think they're still very much trying to explore the boundaries and the limitations of it, and how it can be integrated into the work we do and our process. Because, as Shehani says, the studio has been doing this for nearly 15 years, and our process is so meticulous. Everyone in our team is trained not just to do the 3D scanning in the way that ScanLab likes to do it, but we also have our own rendering software, which can manipulate and process all these very heavy petabytes of point cloud data. And so we've got a very tight process and pipeline that we're quite proud of, I think. So, yeah, we've started to discuss and experiment, and it's seeping into the studio for sure, but it's not fully integrated just yet.

[00:12:24.333] Kent Bye: Yeah, like I said, it's really quite recent. But I think there's a lot of excitement about being able to use applications on your phone, like Luma AI, and some others that are able to capture these Gaussian splats and render them out. I was just really encouraged to see how you could take these huge data sets and have real-time rendering at 90 or 100 frames per second. The way it was described is that this is a completely new paradigm for a render pipeline. So that, to me, is really exciting, because there are a lot of projects out there that have been using point clouds as an aesthetic, but now you're able to take those same data sets and actually make them look like a photogrammetry scan, which is really quite exciting. So I don't know if you've looked at it or started to experiment with it at all.

[00:13:07.134] Shehani Fernando: I haven't, but I think it is interesting, as a sort of archival process, that so many museums and archaeologists, you know, are now able to capture these buildings or spaces or objects through this technology, and what that can mean for the future. And actually, if we have some of those data sets, being able to turn them into something very photorealistic is also very exciting, because, as you say, photogrammetry can also have its limitations. But no, I think it's super exciting, and the technology is obviously getting more accessible for makers, you know, like me. I was lucky enough to work with ScanLab a couple of times, but, you know, it's great to be able to put this stuff in the hands of makers, definitely.

[00:13:45.698] Kent Bye: Yeah, well, Buried in the Rock is another point cloud aesthetic piece, and we've been talking about what you're able to do with point clouds. So maybe you could give a bit more context as to this project and how it came about.

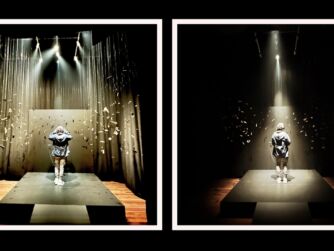

[00:13:57.628] Shehani Fernando: Yeah, so I was brought in by ScanLab Projects for this project when they got some funding from Creative XR in the UK, which sadly has come to an end, but it was a great funding stream at one point for early-stage projects, and it was to make a prototype within about six months, I think. It was just after COVID, everything was just kind of opening up, but, you know, all our meetings were remote. ScanLab had already pitched an idea about creating a kind of portrait of a person through this technology and what that could look like. That was the early guiding principle. And when they brought me on board, we were talking about what that would be like in an environment, and how we could lead a story through an environment but really make it very much about the people who inhabited that environment. I think Matt had met Pam and Tim on a separate job where they had gone down a manhole, apparently, under Rome when they were working on Invisible Cities, which was a BBC history documentary. And Pam and Tim are basically rope access specialists, which really means they enable people to get to extraordinary depths or extraordinary heights using ropes, as it suggests, and it's pretty niche. You know, they've been doing this for over 30 years, probably first just as a hobby, but then they started to work more closely with the BBC's Natural History Unit. They've rigged cameras for David Attenborough and for Disney, for Nat Geo. So this is their bread and butter and they absolutely love it. And what I loved when I first met them, when we were checking them out as potential characters, was that there's absolutely no ego. You know, they're from a generation for whom this kind of work is just a real passion. And security is everything. Safety is everything. There's no nonsense. And what was lovely was that they're a married couple, which, again, is not something you see often.
So I suppose we wanted to tell the story of people who are often behind the scenes in these environments. You see the finished documentary, you see David Attenborough, whatever, but you don't often see how people are getting to these places, and their relationship with the natural world is so specific. You know, they go caving just for fun on a weekend, down the road from their house, almost all the time. So that relationship, and also what they know about caves as these incredible repositories of climate change, of history, of human migration, was the other thing we wanted to encapsulate. So, you know, in Buried in the Rock, you're effectively traversing a cave system, which you see initially as a kind of doll's house version that you can move around, and different areas are slowly lit up. You're hearing their audio about what draws them to these spaces and the darkness. What does that darkness do to someone? What solace or what anxiety do they find? You know, how do they deal with that? And you see this enormous shaft appearing, and the vertical trek of caving, of coming down the rope, and eventually you're actually down at the bottom of the cave at one-to-one scale. The second half is different. You're located at the bottom of the shaft and you're able to freely explore, but Tim and Pam also appear as characters to tell you various stories and anecdotes. So, yeah, I think it was a way of thinking about whether this could be an interesting format or a series idea, really focusing on these ordinary people doing extraordinary things, and a way of connecting audiences to environments that they probably don't need to travel to, or may not want to travel to. It's almost a little bit of virtual tourism, giving people a taste of these locations in a proper 6DoF environment without having to go there.

[00:17:53.643] Kent Bye: Yeah, I'd love to hear, as you're coming out of this project, your perspective from ScanLab and your reflections on Buried in the Rock.

[00:18:01.894] Anetta Jones: Yeah, just to add a little bit to what Shehani said, I think it was also a little bit about what the motivation is for people who do this kind of work. You know, you're not only getting to explore this environment that you normally wouldn't, but you're also getting into the heads of people who explore these environments, and they do it out of, as Shehani said, a real love and a passion and a devotion. Tim and Pam have been doing this for decades. They do it for work. They do it for play. They've kind of devoted their entire lives to this work, and a lot of other things in their lives have maybe been sidelined as a result, you know, and I think that's kind of what we wanted to give a flavor of, because these places are not just hard to reach, they're perilous, they're dangerous. It takes a real personality to want to explore territories that haven't been explored before. They don't necessarily know what's coming next. There's an element of danger to it. So I think it's that. And then the other thing I wanted to add is that I was with Tim and Pam last weekend at the Belfast XR Festival, which is where the work premiered. And, you know, one of the things which really struck me was they said that when they go down into these caves, they wear a head torch, everything is completely pitch black, and all they can see is what the light is shining at. And for them, one of the most incredible things about watching the VR experience was to see the full scale of the cave and its different parts. And it's a cave system that they know really well. It's Toll Yard Cave System, which is their local one. They're from Northern Ireland. And they go there all the time. And to see it visualized in this whole other way, and not just visualized, but accurately visualized, you know, it's a real depiction. You can see all the walls and the textures of it. They really appreciated being able to see that.
And that's something our audiences get too: not only do they get to access a cave system they normally wouldn't, but they also get to see it visualized in a way they would never normally be able to.

[00:19:54.751] Kent Bye: Yeah, that was one of the effects that I really appreciated when I was going through Buried in the Rock. You had mentioned that at the start of the piece there's a tabletop scale where the scale is off, and then as you go into the cave, it keeps getting larger and larger, but once you get to the bottom, you're at one-to-one scale and you're able to move around, and as you get closer to people, you can hear the spatialized audio from them. But there's also this effect of having a spotlight, so that depending on what you're looking at, you're actually seeing more pixels getting rendered, recreating that feeling of a torch on your head that's illuminating different aspects of the spatial context. And for me, what was really striking was to get this real sense of that spatial context at the end, to be able to look up and get this feeling of what it would feel like to be in the cave that they're in. Even though these point clouds are very sparse in terms of the architectural reflection, they gave me enough of that shape to make it feel like I was in a cavern, which I thought was really quite striking, to see how much embodied presence I was able to get out of this point cloud. I've seen a lot of point cloud pieces, but I think this is the first time that I've really felt present. I think part of it was the fact that you have this interactive component where I can move around, but it's also dynamic in the sense that as I turn my head, it's rendering in a higher resolution, whether I'm looking at the people's faces, which is probably one of the most striking things, or just looking around, I get more detail. So I'd love to hear a little bit more elaboration on creating this effect.

[00:21:27.013] Shehani Fernando: So, I mean, I love that you love that effect, Kent. I have a feeling that part of that effect was done because of the limitations of the Quest and the fact that it would be impossible to render all the points in one go. I could be wrong, and Matt and Will might say that's not it at all, but I think that is the reason. And so you do get this effect that the closer you get to something, or the more intently you're looking at their faces, the more detail you can see. But actually, we used the torch effect, I think, slightly differently. We wanted to give people this sense of being in utter darkness, because that was one of the central themes of the piece. You know, what is it like to really step into the unknown, where you don't know where your next foot is going to be? And actually, depending on rocks and depending on water and all sorts of things, there is a constant danger. There are really just the two scales: the doll's house scale, where the only way we could show the entire system was to make it smaller, and then you're plunged into darkness for a few seconds, and Pam is talking about what it's like to be wrapped in this stole, almost, of blackness. And then very slowly you realize that you've got this head torch effect through the headset, and as you move around, the cave starts to be visualized around you. That was just to slow things down in terms of pace and let people imagine that sense, as well as give them that sense of presence. But I'm interested that you felt a great deal of presence. I think partly that's the audio, because we really went to great lengths. We took Axel Drioli, who's a fantastic sound recordist who works primarily in natural environments, and he was just recording everything, you know, every little spot sound, every little drip, all the resonances, which we were then really able to put back into the cave in post.
And we had Pascal Wyse doing the sound for us to give you that real sense of immersion. So even in the doll's house, depending on where your head is, you really get a well-located sense of what the audio is like. And as you move around in that second half at the bottom of the cave, again, the sound shifts around you. And as you get closer to the characters, as you say, their audio becomes louder. And we had some little pinpoint sounds just to make sure that you would turn and see them where they are. So, yeah, it was a really interesting project, both in terms of how we thought about making it and how to present it to an audience who maybe didn't know anything about caving, and also in terms of holding their hand, keeping it very low on the interactivity, I think. We didn't want it to be super complex. We wanted you to have that torch effect and also, if you didn't have enough space to do it fully 6DoF, to be able to just use the controller to navigate. But it's more fun in a big space where you can just free roam, I think.
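The two behaviours discussed above, the head-torch reveal with more detail up close and voices getting louder as you approach, can be approximated with simple per-frame tests. The sketch below is hypothetical and not ScanLab's implementation: it assumes a point is drawn only if it falls inside a cone around the gaze direction and is thinned out with distance, and it uses a clamped inverse-distance rolloff (a common spatial-audio model) for voice level. The cone angle, distances and function names are all illustrative assumptions.

```python
import math
import random


def torch_visible(point, eye, gaze, cone_deg=25.0, full_detail_m=2.0, rng=random):
    """Hypothetical head-torch test for one point of the cloud.

    A point is drawn only if it lies inside a cone around the gaze direction;
    beyond full_detail_m it is kept with probability full_detail_m / distance,
    so nearby surfaces (e.g. a face you walk up to) look denser.
    """
    offset = [p - e for p, e in zip(point, eye)]
    dist = math.hypot(*offset)
    if dist == 0.0:
        return True
    gaze_len = math.hypot(*gaze)
    cos_angle = sum(o * g for o, g in zip(offset, gaze)) / (dist * gaze_len)
    if cos_angle < math.cos(math.radians(cone_deg)):
        return False  # outside the torch beam: the point stays dark
    keep_probability = min(1.0, full_detail_m / dist)
    return rng.random() < keep_probability


def voice_gain(listener, source, ref_m=1.0, max_m=20.0):
    """Inverse-distance rolloff: full volume within ref_m, 0.0 from max_m onward."""
    dist = math.dist(listener, source)
    if dist >= max_m:
        return 0.0
    return min(1.0, ref_m / max(dist, 1e-6))


eye, gaze = (0.0, 1.6, 0.0), (0.0, 0.0, 1.0)  # standing, looking along +z
print(torch_visible((0.0, 1.6, 1.0), eye, gaze))   # True: in the beam, 1 m away
print(torch_visible((0.0, 1.6, -1.0), eye, gaze))  # False: behind the viewer
print(voice_gain((0.0, 0.0, 0.0), (0.0, 0.0, 4.0)))  # 0.25
```

Dropping far points probabilistically keeps the per-frame point count bounded, which is the kind of headset limitation Shehani suspects motivated the effect; a production renderer would more likely use precomputed level-of-detail tiers than per-point random tests.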

[00:24:16.435] Kent Bye: Yeah, I did notice that as I was moving around, the audio would shift, and it would get modulated if I got too far away. So, yeah, there's a lot of spatialized audio in this piece as well. But I did want to ask, from ScanLab's perspective: Framerate was a time-lapse with point cloud data, which is an incredible amount of data that ended up being rendered out onto a 2D screen, so it wasn't a real-time rendering of all that data. Here in this project, it's more of these snapshots, so it's a bit less computationally intense than a time-lapse, but at the same time it's running on the Quest. I don't know if you were using your own rendering engine or bundling it within Unity. I'd love to hear a little bit more about the technical details, and whether this is the first time that you've dived into real-time rendering within VR, or if there are previous projects where you were experimenting with this as well.

[00:25:07.940] Anetta Jones: Yeah, there are definitely other projects that we've experimented with. In terms of this particular project, yeah, it was created in Unity, and we also used our rendering software, Renderella. It's our own proprietary, bespoke software that we've developed in-house. We've got a developer who frequently works on it and updates it. And so that's how we created this. But we have another project that we did in collaboration with an artist called Pierre Huyghe. It was a collaboration for the Kistefos Museum in Norway, and with that, we did a lot of real-time 3D rendering of point clouds. We essentially scanned this entire island, and you can see all the flora of the island. We mutated it all in real time, and the artist, Pierre Huyghe, showed that on big screens. It was created for VR. It probably gives an indication of the highest-quality visual fidelity that we're able to produce. Whereas, as Shehani says, for the Quest we really had to scale back our resolution, just because of what the Quest is able to handle for this particular project. And for Framerate, you know, again, because it's all 2D on screens, we were able to really push the visual fidelity. So it's very high quality. We currently play it on 4K screens, and we've been talking about upgrading that to 8K. And yeah, we don't feel like we've had to make any compromises with Framerate at all.

[00:26:29.076] Kent Bye: Yeah, and one thing I noticed when I was looking at the two main protagonists of this piece is that you're doing these portraits of them, and when I had the torch effect and was looking away from them, they would kind of dematerialize in a certain way. But the resolution of their faces was seemingly a lot more dense, or higher, than the world around them, because you wanted people to really see all the details of their faces. So I'd love to have you reflect on whether you think about it in terms of resolution, because it seems like the people have a higher resolution than the world around them, or if that was just a natural effect of the scanning technology showing them at the highest resolution it could. Yeah, I'd just love to hear a little bit more elaboration on making sure the people had a rendering that could actually give you this sense of them as people.

[00:27:16.480] Shehani Fernando: Yeah, I mean, I think it's all about compromise, basically, when you're optimising for something like the Quest. So, you know, we wanted to make sure, particularly towards the end, where you've got that final moment looking almost directly into their eyes, that it felt like a meaningful exchange where you're sitting across from someone. Whereas I think parts of the cave become less important as the piece progresses, so, you know, the very highest bits, for instance, or some rocks in the background and things. So I think what the team were able to do was really focus attention on the areas where we felt the viewer would be, to make those as high resolution as we could for those moments. But obviously, because you're often scanning from one angle, maybe two angles, if you were able to go behind some of the characters in some situations, you know, you wouldn't see all of them. I think there's a tiny scan of Tim on the rope actually coming down, which was very difficult to do because he literally was on a rope, you know, in a shaft. We set up some lights, but you'll notice that you don't get much detail on the face. So I think there were frustrations. There were always things that you see after you've captured them that there just wasn't time to do, or the setup just made it impossible to do better. I think in a perfect world, had we had more budget or a bigger team, we might have experimented with scanning them actually moving, to give it a bit more sense of movement and aliveness. And that's something that the team have done. I've seen scans of, I think, a beautiful owl that was captured once in flight. So it's definitely possible, but ideally you would do that in a very controlled environment.
And I guess it comes also then back to this discussion about what is documentary, you know, are you capturing things on the fly as you do in TV, which is sort of how we made this project, or are you recreating, you know, if we didn't do movement, we would probably have to bring Tim and Pam into a studio, re-rig them, have them wear the same clothes, and effectively fake it to put it back into the piece, which, you know, might have been fine, but this is a particular way of telling the story.
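Shehani's point about compromise, spending the Quest's limited point budget on the faces in the final scene rather than on distant rocks, can be illustrated with a weighted budget split followed by decimation. This is a hypothetical sketch: the region names, weights and budget below are invented for illustration, and uniform random subsampling stands in for whatever decimation ScanLab's in-house tools actually perform.

```python
import random


def allocate_budget(region_sizes, weights, total_budget):
    """Split total_budget points across regions in proportion to their weights.

    A region never receives more points than it contains; any surplus is
    redistributed among the remaining regions. Weights must be positive.
    """
    budget = {}
    remaining = total_budget
    pending = dict(weights)
    while pending and remaining > 0:
        weight_sum = sum(pending.values())
        capped = [name for name, w in pending.items()
                  if remaining * w / weight_sum >= region_sizes[name]]
        if not capped:
            for name, w in pending.items():
                budget[name] = int(remaining * w / weight_sum)
            pending = {}
            break
        for name in capped:  # small, important regions are kept in full
            budget[name] = region_sizes[name]
            remaining -= region_sizes[name]
            del pending[name]
    for name in pending:  # budget ran out before these regions were served
        budget[name] = 0
    return budget


def subsample(points, keep, seed=0):
    """Uniform random decimation: keep `keep` points (all of them if keep >= len)."""
    if keep >= len(points):
        return list(points)
    return random.Random(seed).sample(list(points), keep)


sizes = {"faces": 200_000, "cave_walls": 5_000_000, "far_rocks": 3_000_000}
weights = {"faces": 5, "cave_walls": 3, "far_rocks": 1}
print(allocate_budget(sizes, weights, 1_000_000))
# {'faces': 200000, 'cave_walls': 600000, 'far_rocks': 200000}
```

With these made-up numbers the faces keep every scanned point, and the remaining budget is shared 3:1 between the cave walls and the distant rocks, mirroring the trade-off described in the interview.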

[00:29:22.351] Kent Bye: It's got this still-life aesthetic where you take these little snapshots of moments in time, and you end up allowing the audience to locomote and move around. So I'd love to hear a little bit more about that decision to allow people to move through this space, because at the beginning I was instructed that the closer I got to people, the more the way I heard them would change. As I was going through it, I didn't know if that meant there were different choices I was going to be able to make, but after I went through it, it didn't seem like there were any choices. It was mostly about being able to really appreciate the spatialized sound at different distances from people, giving this deeper sense of embodied presence. But yeah, I'd love to hear about that decision to add locomotion, because in Limbo you're controlling where the camera is going, and so it becomes much more of a passive experience for people, whereas this one is much more interactive, allowing people the agency to move around with a certain boundedness. You can't go off the edge, so you create this fixed space where they can move around. But you're also creating these opportunities for movement and agency for the user while still telling a story. So I'd love to hear about that decision and some of the challenges.

[00:30:31.637] Shehani Fernando: Yeah, absolutely. I mean, I think because it was very much a story about this particular environment, we really wanted to give people the ability to move around it and discover and notice things, and sort of stick their head into tunnels or look at it from the outside and get that full sense of its 3D nature. Because it's the sort of thing, you know, you could almost cast it in bronze or something, but it is this extraordinary shape, which all starts from this tiny crevice in the rock just behind where they're sitting in the first scene. So I think seeing it emerge mattered. What happens is the ground level shifts up as you see more and more of the cave, and seeing it as this kind of doll's house shape, you realise in terms of scale how deep it really is in relation to what's above ground. So I think that was really important for us, but at the same time we wanted to keep it very simple for the user, so that you're very much guided by the audio of Tim and Pam, who are talking through the experience. They give you some little snapshots and insights into other jobs they've done and other experiences they've had, but effectively you're free to move around. And I think the benefit of maybe not having them moving through as animated characters, if you like, was that you are really able to focus, which is something I still find difficult. You know, having seen the program even here at IDFA, watching VR sometimes you just feel there's so much going on, even if it's quite subtle, that you think, God, what was that line I just heard? I can't quite remember what they just said. And it's that grappling with the balance between information, particularly if you've got something that's speech-driven, and your surroundings. We just wanted to make sure people had time and space to take all of that in, and to enjoy the movement and what that gives you with the sound.
So I think that was definitely the point. And then obviously in the second half, when you're down at one-to-one scale, you just have that ability to roam around. So if you want to listen to the story but you don't necessarily want to look at them, you can move back. You can kind of whiz around the cave and potentially hear other bits of the sound effects of the cave itself. So it gave you a little bit more freedom. I mean, I think Matt in the early days was describing it almost like a 3D podcast. You know, it was one of the things where we were like, is there something in this idea of a 3D podcast, which could be like a series idea? And it's an interesting thing, because it's heavily, beautifully audio designed, but it's got that extra element of movement around it.

[00:33:00.288] Kent Bye: And is it ambisonic audio that you're using, or are you just getting that spatialized effect?

[00:33:04.312] Shehani Fernando: Yeah. So we actually recorded more spot sounds, and then, because we're building it in Unity, you get that ambisonic effect by placing them in specific places. We did record some ambisonic sound, but in the end we've ended up using more spot sound recordings.

[00:33:18.945] Kent Bye: Yeah, because with ambisonics you're usually fixed to one location. So to be able to actually move around them, that makes sense.

[00:33:24.909] Anetta Jones: Yeah, and I would also say that the sound when you're actually in the experience, as Shehani said, really is incredible. It's so evocative. And I think there's this almost uncanny feeling you get, because you're in this space and you know that it's a real replica of a space, but the way it's visualized has this point cloud, ethereal sort of aesthetic, a quite ghostly, apparition-like look. But then the audio itself is so real. It's true documentary audio, and you hear the tiny little drips. So there's this interesting thing where, I guess, if you were going to go into a cave yourself and have this kind of experience, yes, of course, it would be amazing to hear from the experts and have them talk about what their experience is like and why they like to go in and everything. But one of the things that you would really want to do is just have time and space to walk around and explore for yourself. And I think there's something about the audio, and just how realistic it is, that feels a little bit spine-tingly and really makes you feel like you're in the cave. And that, combined with the opportunity to move around and explore, makes it, I like to think, quite a multilayered experience in that sense.

[00:34:32.412] Kent Bye: And did you have a chance to actually go into the caves as well?

[00:34:35.571] Shehani Fernando: Oh yes. I wasn't 100% sure, I have to say. You know, we organised the trip, I was definitely going on the trip, I was going to do all the audio interviews, but I was saying, you know, I'm just not 100% sure about the cave bit. Do I definitely have to go down? Anyway, as a testament to Pam and Tim, they are so fantastically reassuring. We had a crash course the day before: they have some scaffolding poles outside, near where they live, and they put us in the caving gear, showed us how the ropes work, went through all the safety instructions, and they're right there all the time. And I thought, yeah, of course I've got to do it, this is crazy, I can't come here and not go down the cave. So yeah, it was an absolutely amazing experience, and definitely not something I would ordinarily choose to do, but I loved every minute of it and it was so fascinating. And I think it's hard to make a piece like this, where you're trying to give people the experience of doing it, without having done it yourself to some extent, so it was really important for me to have done it. And I really enjoyed it. We had a really fantastic two days. It was like a cloud had lifted after COVID. It was really like being back out in the world after being shut up for months and months. So personally, it was a fantastic moment.

[00:35:49.460] Kent Bye: Well, I know that LiDAR scanning is usually from one perspective, so you have this occlusion on the back side, where the LiDAR scanner can't see. As I was looking at some of the innovations in Gaussian splatting, they have 4D Gaussian splats, but they end up using a camera array, very similar to the Lytro scans, where they were trying to capture a light field; in order to get a light field, you actually have to have multiple perspectives. So I'd love to hear if Scanlab has thought about capturing multiple perspectives and synthesizing them, or if you're already doing that, to address the parts that may be occluded in some of these different point cloud captures.

[00:36:35.745] Anetta Jones: Yeah, we definitely do that. Right now we're actually working on a new version of Framerate, which is a commission from the Desert Botanical Garden in Phoenix, Arizona. We're scanning at multiple sites all across Arizona, in the desert and within the botanical garden itself. And when we're selecting those sites within one particular landscape, we'll choose multiple points where we can place the scanner, and then we merge those scans together. Yeah, that's something that we've been doing for some time.

[00:37:03.670] Shehani Fernando: Just on Buried in the Rock, we did scan from multiple angles, because we really had to work out how we could get as much of it captured as possible. It was actually the wonderful Tom Brooks who came on that trip and did all the scanning, and he was constantly calculating what angles we needed here and how many scans we needed. So it's very labor-intensive in terms of thinking about all sides of what you need to capture. With the spaces, it was definitely easier. With the people, it was a little bit harder because, you know, they've got to be quite still for a long time, and there were some angles inside the cave that we couldn't get to. But certainly for all that area above ground, we had lots of scan locations and then merged them all together in Unity.

[00:37:44.375] Kent Bye: Okay, so you do have some shots of multiple perspectives of the people or not?

[00:37:50.407] Shehani Fernando: Yes. I think with some of the people, like at the bottom of the cave, that scan I think we had from maybe a couple of angles. The ones on ropes were harder to get, and the ones outside the cave I think we probably did from a couple of angles, because you can see a little bit more than 180 degrees. So I can't remember exactly, but yeah, and certainly with the locations we had to do...

[00:38:14.042] Kent Bye: Yeah, I guess as we start to wrap up, I'd love to hear from each of you what you think the ultimate potential of virtual reality and immersive storytelling might be and what it might be able to enable.

[00:38:25.494] Shehani Fernando: Gosh, big question, Kent. Yeah, I mean, I guess for me, I still feel like there's so much potential in the documentary space that we don't hear as much about. You know, we hear so much about games and healthcare and, you know, fantastic other ways that VR is working. But actually in the documentary space, I think there's just this new language that can be played with in terms of space and in terms of storytelling. And coming to IDFA is always exciting. I really enjoyed the piece Close, which is the sort of dance piece, where actually it felt almost like a new form of documentary. You know, you can sort of hear these different audio layers about this very traditional art form, and yet you get very different experiences from it. And that's something that just isn't possible in 2D documentary. So I think we're still just scratching the surface of what's possible. And as the technology becomes easier and cheaper, and effects are easier to create, and worlds are easier to create, you know, I do think there's potential to tell these kind of bigger stories. And I also think in the education context, I mean, again, this sort of piece, you know, we'd love to see in kind of museums or in galleries or in schools, where people can kind of really learn about these environments and see them for themselves in 3D. I think the infrastructure isn't quite there in terms of how governments and education are kind of seeing the potential of the technology still. So while there's been huge moves forward, I think there's just still so far to go in terms of what's possible and how we can get people excited about learning about these places and spaces. And that's really what the spatial storytelling can do.

[00:40:08.404] Anetta Jones: Yeah, and I think my background's in narrative non-fiction, and when it comes to virtual reality, I mean, there are so many great pieces out there, and every year when you go to festivals, the curation and the selection always feel like they're improving so much year upon year. But I think the thing that I would personally really love is for there to be more of an emphasis on the storytelling. So I think what we need for that is something like documentary film, where you'll have the director, you'll have the shooter, you'll have the producer, but you'll also have the edit producer. And the edit producer is so essential to helping craft that story and that narrative, because I'd love to be able to say to friends and family who don't work in virtual reality, you have to check out this one unbelievable, incredible piece. And there are definitely works where I'm like, no, this is really excellent, you have to try it. But I would just love to be able to champion one piece. I mean, we used to talk about it ages ago as the Serial, you know, the Serial podcast of virtual reality. And I think we're getting closer. We're definitely getting closer, you know, with the way that people are pushing the boundaries of storytelling and what's possible. But I would love there to be just a bit more emphasis on importing storytellers, even more of them. I know there are already people coming in from theatre and scriptwriting and everything, but I kind of want to flood this space with even more of those who can really help to carve out very engaging narratives, compelling characters, story arcs: the kind of piece where you watch it and you're just blown away by that story and you want to tell everyone you know about it. Yeah.

[00:41:52.182] Kent Bye: Awesome. Yeah, looking forward to the Serial of XR, or whatever that ends up being. I think The Morning You Wake to the End of the World is getting close, but I don't know if it has reached the kind of universal storytelling that the Serial podcast achieved at such a mass scale. So yeah, looking forward to what that moment might be. And I'd love to hear if there's anything else that's left unsaid that you'd like to say to the broader immersive community.

[00:42:14.994] Shehani Fernando: Well, I mean, I think what's interesting at the moment is just seeing how AI is coming into this space. You know, already this year, there have been so many interesting pieces that are really playing with what's possible in terms of large language models and visual aesthetics. I'm starting to get a little bit tired of the Stable Diffusion look now because it's sort of everywhere. But I'm really excited to see where that's going, how it might evolve, and how it can come into the VR space. You know, Shadow Time has got a little bit of that here at IDFA this year. And equally on AI, there's a great piece here called Voice in the Head, which is playing with this idea of your virtual assistant in your ear, which I think is super fun and exciting. So that's a kind of game changer, I think, and so is how we use those technologies to challenge storytelling, and to challenge some of the darker stuff that's happening in the tech landscape as well. I think that's the role of artists in this space, and we're starting to see some really exciting pieces commenting on that.

[00:43:18.237] Anetta Jones: So, as I mentioned, I went from documentary film to virtual reality. And since working at ScanLab, we've been able to work on many projects that use 3D LiDAR scanning and immersive principles but aren't strictly virtual reality. And I guess I'd love to see more of that. I don't think that it's one or the other. I think these things can coexist. You know, for example, Framerate is a multi-screen installation right now, but there are definitely ways in which we could make it a virtual reality piece as well and use some of those visuals. And most recently we did a piece of work called Felix's Room, which was a digital hybrid theatre play. It was telling one man's true story of the Holocaust; it was a documentary piece. Felix Gans was our writer-director's late great-grandfather, who passed away in 1945; his life sadly ended in Auschwitz. But prior to that he had an incredibly illustrious career and life, traveling loads; he had several businesses, and he was a collector of art and music and furniture and all sorts. And so we retold his life through documentary material, letters, photos, drawings and all sorts of visuals, and we rebuilt a replica of his room, 3D LiDAR scanned it, and projected it onto the stage. And we had the opera, with a live orchestra and live actors, so I think we really were able to push the boundaries of what immersive storytelling is. I really enjoyed producing it, and I feel like audiences really enjoyed it; the response was pretty amazing as well. And I think there are so many works that are adjacent to virtual reality and use the same materials, storytelling techniques or technology, that we can continue to play with. And yeah, it's the same with Framerate.
As I said, we've just finished creating our first Taiwanese chapter of Framerate, which Will Trossell is actually showing right now at the TCCF Festival in Taiwan. And Matt Shaw, our other director, is creating our first American version of it, for the Desert Botanical Garden in Phoenix. So I think, yeah, I would love to see people not just thinking about virtual reality, but thinking about how their stories, these assets, techniques and everything around them can be repurposed for other mediums as well.

[00:45:41.843] Kent Bye: Awesome. Well, these are very interesting first looks at point clouds as a storytelling medium, and I've seen lots of different projects, like you said, over the years. The spatialized sound on this one in particular really stood out. And especially as we get new ways of rendering these point clouds moving forward, I'm really looking forward to seeing all the data sets captured over the last 10-plus years rendered out in new ways. I feel like there are going to be a lot of really interesting developments over the next couple of years, so there might be new renders of this project as that continues to develop. Yeah, thanks for taking the time to share a little bit about your own story and journey, and to reflect on where immersive storytelling is at right now and where it might be going in the future. So thanks for taking the time.

[00:46:20.023] Anetta Jones: Thanks so much, Kent. Thank you. Thanks for your support, Kent.

[00:46:23.049] Kent Bye: So that was Shehani Fernando, the director of Buried in the Rock, and Anetta Jones, who's a senior producer at Scanlab Projects. I have a number of different takeaways from this interview. First of all, a big thing that I'm taking away is the spatial audio of this experience, where they were able to record individual pieces of sound in this cave. As you move around the location, you get a real acoustic imprint of what this space sounds like. But there's also the sparse representation, with the point cloud aesthetic using LiDAR scans from multiple locations. There's a lot of occlusion when you use something like a LiDAR scanner, because it captures from a single point and can only see what is visible from that point, so you need multiple scan positions to get a full capture. Also, as you move up into different spaces, they're slowly revealing more fidelity, because there's just so much data that it's impossible to run it all on a mobile chipset like the Oculus Quest 2 or even the Quest 3. And so they had a little flashlight mechanic, so that when you shine the flashlight around, you get a lot more detail in each of those different scenes. We talked a little bit about Gaussian splats, which were originally presented at SIGGRAPH back in August of 2023. That's something that could completely transform these captures of point cloud data, so that they actually look more like a photogrammetry scan, but at an even higher level of fidelity. I expect to see a lot more Gaussian splat rendering techniques within the next year or two, as they've already started to percolate into these different scanning applications.
I think it's a completely new render pipeline, and I think there are going to be a lot of innovations using Gaussian splats, which are like a combination of neural radiance fields and point clouds. Scanlab Projects already has their entire pipeline for this type of point cloud because they're dealing with these huge amounts of data, so I'm really looking forward to seeing how they continue to evolve it to potentially include high-resolution techniques like neural radiance fields, Gaussian splats, or other things that are coming forth in the context of artificial intelligence. There seem to be a lot of promising new render pipelines coming down the pike, but this piece just shows the potential for using point clouds as a form of documentary: not only as an aesthetic, but also giving you as the viewer a little bit of agency to move around, with some interactive audio components as well. So that's all I have for today, and I just wanted to thank you for listening to the Voices of VR podcast. And if you enjoy the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, and so I do rely upon donations from people like yourself in order to continue to bring you this coverage. So you can become a member and donate today at patreon.com. Thanks for listening.