I interviewed Squeaker: The Mouse Coach creator Jiabao Li at IDFA DocLab 2023. See more context in the rough transcript below.

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality

Rough Transcript

[00:00:05.452] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR Podcast. It's a podcast that looks at the structures and forms of immersive storytelling and the future of spatial computing. You can support the podcast at patreon.com slash Voices of VR. So continuing on my series of looking at different pieces from IDFA DocLab 2023, this is the fifth of 19 in that series, and today we're going to be talking with Jiabao Li about her piece called Squeaker: The Mouse Coach. This is an interactive installation and art piece that explores what would happen if you took a pet mouse and used it as a running coach. Every time the mouse runs, it's tracked by a number of IoT devices, and that data is sent out to iOS and Android apps, where people have this running coach application called Squeaker that tells you how much the mouse has run. So you basically have to run more than the mouse each day, and in doing so you're essentially training yourself for a half marathon. I talked to Jiabao last year about her piece called Once a Glacier, which was a VR piece looking at climate change through animation. It also had a different iteration as a dance piece that used motion capture. But this piece explores the dimension of interspecies collaboration, which is one of her research interests. So we talk about all that and the variety of different immersive projects that she's working on. She also happened to work at Apple for four years on the Apple Vision Pro. She alluded to it last year before the Apple Vision Pro was even announced, and this year she was able to talk a little bit more about it, though not too much, obviously, because there's only so much that she can share. I went ahead and made a pre-order for my Apple Vision Pro, which is arriving on February 3rd, 2024, the day after it launches.
So I'm going to get my hands on the device and start to play around with it and test it out. I'm looking forward to seeing Apple's entrance into the XR industry, or what they refer to as spatial computing. So that's what we're covering on today's episode of the Voices of VR podcast. This interview with Jiabao happened on Sunday, November 12th, 2023 at IDFA DocLab in Amsterdam, Netherlands. So with that, let's go ahead and dive right in.

[00:02:18.267] Jiabao Li: I'm Jiabao Li. I'm an artist, designer, and creative technologist. A lot of my work addresses climate issues and interspecies co-creation. The mediums vary from AR and VR to immersive projections and installations.

[00:02:34.357] Kent Bye: Yeah. Maybe give a bit more context as to your background and your journey into working in this space.

[00:02:40.749] Jiabao Li: I studied design and technology at Harvard and the MIT Media Lab, and then I was at Apple for four years working on products including the Apple Vision Pro. After that I came to the University of Texas at Austin. I've been an assistant professor there for three years now, and I run a lab called the Ecocentric Future Lab, where we try to tap into the intelligence of different species. There are three pillars of research: ecocentric design, design for health, and technology activism. And we embrace all kinds of media.

[00:03:13.858] Kent Bye: Great. Maybe you could quickly recap some of the other immersive projects that you've worked on ahead of this one.

[00:03:18.747] Jiabao Li: There's Once a Glacier, which you interviewed me about last year here at IDFA. That's about the story between a girl and a piece of glacier ice. As for other immersive works, for the Apple Vision Pro there's a lot on the side of designing the headset itself. And a lot of the others are three perceptual machines that give you the feeling of deconstructing and defamiliarizing reality. One is about social media amplification: it creates a feeling of being allergic to the color red. That's very immersive. There is a headpiece. The second one is about tactile vision, and it's around the discussion of search engines. The third one is a commoditized vision, where you earn money by looking at advertisements and you spend money to live in an ad-free world. That one is about targeted ads. Yeah.

[00:04:15.447] Kent Bye: Maybe you could talk a bit about how the project that you have here at DocLab 2023 came about.

[00:04:21.648] Jiabao Li: Yeah, so here I'm showing Squeaker: The Mouse Coach, where the mouse becomes your running coach. How it works is there's a smart running wheel, and whenever the mouse runs, you receive a notification telling you to run. If you run and your running distance passes the mouse's running distance, then you both get a reward. For the mouse, it's a treat or a toy. For you, you get to scroll on social media, and the distance that you can scroll on social media is the same distance that you ran together with the mouse. It's cue, action, reward. There's this behavior loop in behavior change theory, where cue, action, and reward are essential to forming healthy habits. So here the cue is the mouse running, the action is you running, and the reward is that the mouse gets a treat or toy and you get this dopamine rush of scrolling on Instagram, on social media. Where did it come from? Good question. Mice have been used in scientific studies so much. Every year there are millions of mice that get depressed or fat, or glow in the dark, or grow an ear, all in the name of learning more about our own health, human health. So what if we switch control and let the mouse control our health? I tried a bunch of different things. First, what if the mouse controlled my living environment, like changing the thermostat or changing the lights? And then I realized, oh, how about the mouse just directly controls me? So I started with me. It's almost like an endurance performance: I run as much as the mouse every day. And to my surprise, in the process, I learned that the mouse runs four to 20 kilometers every day. That's a half marathon. So in order to run like a mouse, you're basically training for a half marathon. It becomes harder and harder, and some of my friends probably see me use Instagram less and less because I can't outrun the mouse every day.
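The reward rule Jiabao describes is simple enough to sketch in Python. This is a hypothetical illustration, not Squeaker's actual code: the `DailyTally` and `evaluate_day` names are invented here, the distances are assumed to arrive as kilometers from the running wheel and the runner's phone, and treating the scroll allowance as the distance both of you covered is an interpretation of her description.

```python
from dataclasses import dataclass


@dataclass
class DailyTally:
    mouse_km: float  # distance from the smart running wheel (hypothetical feed)
    human_km: float  # distance from the runner's phone or activity tracker


def evaluate_day(tally: DailyTally) -> dict:
    """Sketch of the cue -> action -> reward rule described in the interview."""
    # Reward only when the human strictly passes the mouse's distance.
    surpassed = tally.human_km > tally.mouse_km
    return {
        "mouse_gets_treat": surpassed,
        "social_media_unlocked": surpassed,
        # Assumption: the scroll allowance is the distance both of you covered,
        # i.e. the shorter of the two runs; zero if the mouse was not passed.
        "scroll_allowance_km": min(tally.human_km, tally.mouse_km) if surpassed else 0.0,
    }


if __name__ == "__main__":
    print(evaluate_day(DailyTally(mouse_km=12.0, human_km=13.5)))
```

Requiring a strictly greater distance reflects her wording that the runner has to "surpass" the mouse; a tie leaves social media locked.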

[00:06:26.313] Kent Bye: So the idea is that Squeaker is the coach, and it's basically creating behavioral loops and new habits where you're trying to run as far as or further than the mouse. If you do that, then the mouse gets the reward. Does that mean that if everybody has the app, then the mouse is getting lots of rewards from lots of people? Is that the idea?

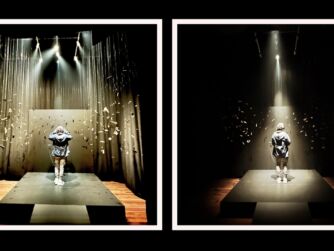

[00:06:48.299] Jiabao Li: That's such a good question. Then the mouse would get tons of rewards. I limit it, because there's only a certain amount of treats he can get; it's not very healthy to get too many treats. But now that a lot of people are doing this, they're helping me achieve the reward, so the mouse can get a treat almost every day now. And here at the exhibition, in the interactive installation, we have a treadmill, the projection-mapped version of the mouse, and also the live stream of the mouse, who is living in Austin in the US right now. The speed of the treadmill is controlled by the mouse, so we are recreating the feeling of the whole scene of the mouse living in his own home. And if we all collectively outrun the mouse every day, then we open the champagne here every night. To my surprise, even on the first day, Caspar's boy ran the whole afternoon and had already surpassed the mouse.

[00:07:50.468] Kent Bye: So there's a QR code you can scan here at IDFA DocLab, and that'll take you to one of the app stores, either iOS or Android. You download the app, and when I downloaded it, there was an indication of how far I'm walking each day, I guess tracked by my phone. I'm not running, but it's at least an activity tracker that says how far I've walked. The mouse's count starts at zero, and after I download the app, it tracks how much the mouse is running. So when I check in on it, if the mouse has run more than I have, then it's up to me to either walk or run more than the mouse. And if I do, I get a reward. So then what happens?

[00:08:32.107] Jiabao Li: So we integrate this with the Screen Time API, and you can choose which social media app you want to limit your screen time on. For me, it's Instagram. If I can't outrun the mouse, then I can't open it. It's basically like when you set a Screen Time limit and the icon turns gray. Only when I surpass the mouse can I open Instagram.

[00:08:54.380] Kent Bye: I see. So you have to run more than the mouse. And then once you do, then you can actually look at Instagram.

[00:08:58.553] Jiabao Li: Yeah. Yeah. The reason you don't see it in your version is that there's a permission we need to apply for through the App Store, and they have a bug in their system, so they haven't granted us access. But my version, which is outside the App Store, has it.

[00:09:14.397] Kent Bye: Oh, OK. So yeah, there is a difference between your app and my app. And so I guess it has to get fixed in order for everyone to get that feature.

[00:09:20.860] Jiabao Li: Yeah. Anyone who uses the Screen Time API needs to apply for Family Controls access. And they have a problem in their system: they don't send you a confirmation email, and they don't give you approval. It's been about three weeks and they still haven't approved it, so we can't enable that feature in the App Store version right now.

[00:09:39.157] Kent Bye: OK, so it sounds like that's more of an issue of the delays of submitting applications to Apple to approve it, and then you're here at a festival context, and people are showing it, but the latest app isn't coming down for folks yet.

[00:09:51.398] Jiabao Li: Yeah, only that part. OK.

[00:09:53.855] Kent Bye: Okay, so all the other stuff is there. In the installation there are actually multiple videos playing. There's one of you running, there's a little ad playing on another screen, and there's some sort of controller with switches, like a SwitchBot. There's also the live stream of the mouse when it's running, and then there's a treadmill. Did you say that the treadmill is somehow directly connected to what's happening with the mouse as well?

[00:10:18.023] Jiabao Li: So you see the projection mapping of the mouse running on the running wheel, and that is timed together with the SwitchBot. The SwitchBot clicks plus or minus to change the speed of the treadmill. When you see the mouse run faster, the treadmill runs faster. When you see the mouse stop, the treadmill gets very slow.
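The treadmill link can be pictured as a small control loop. A minimal sketch, assuming the mouse's wheel speed is available as a number and the treadmill has discrete speed levels that change one button click at a time: `target_level`, `button_presses`, the 0.5 km/h-per-level scale, and the 1 to 10 level range are all hypothetical, and no real SwitchBot API is called.

```python
from __future__ import annotations


def target_level(mouse_kmh: float, min_level: int = 1, max_level: int = 10,
                 kmh_per_level: float = 0.5) -> int:
    """Map the mouse's current wheel speed to a treadmill speed level.

    All scaling values are hypothetical placeholders.
    """
    level = round(mouse_kmh / kmh_per_level)
    # When the mouse stops, fall back to the slowest setting instead of stopping.
    return max(min_level, min(max_level, level))


def button_presses(current_level: int, wanted_level: int) -> tuple[str, int]:
    """Return which treadmill button a SwitchBot-style pusher should click, and how often."""
    delta = wanted_level - current_level
    if delta > 0:
        return ("plus", delta)
    if delta < 0:
        return ("minus", -delta)
    return ("none", 0)


if __name__ == "__main__":
    current = 3
    for speed in (2.0, 0.0):  # mouse sprinting, then mouse resting
        wanted = target_level(speed)
        print(speed, button_presses(current, wanted))
        current = wanted
```

Clamping to the lowest level rather than zero mirrors her description of the treadmill getting very slow, not stopping, when the mouse rests.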

[00:10:38.547] Kent Bye: Okay, okay. I think someone said that the mouse likes to run a lot at night, which ends up being, I guess, the morning here in Amsterdam, because there's something like an eight or nine hour time difference. Every time I've been there, the mouse has basically been asleep or hasn't been running, so I haven't seen the mouse running yet when I've visited the installation. Maybe you could talk about how that works: if the mouse is a nocturnal animal doing its running at night, which would line up better for people in Europe than in the United States, how does trying to run further than the mouse work if the mouse gets all its miles in at night?

[00:11:13.946] Jiabao Li: Yeah, so mice are most active from sunset to sunrise, so during the whole night. The perfect exhibition setup would be the mouse in the United States and the exhibition in Asia. That's the best time difference. Here, the most active time is right around when we wake up, starting around 8 a.m. This morning I woke up and received lots of notifications that the mouse was running. I think the most popular time over here is the afternoon or evening, and that's already like midday in Austin, so the mouse is sleeping that whole time.

[00:11:51.707] Kent Bye: Yeah, and you said that you're really interested in creating these relationships between humans and animals. Maybe you could elaborate on any other inspirations that you're drawing from in trying to create these immersive experiences that forge closer relationships between humans and animals.

[00:12:07.229] Jiabao Li: Yeah, so to zoom out a bit, I have this research thread around interspecies co-creation, where humans co-create with non-human species such as animals, plants, mycelium, artificial intelligence, and ecosystems. I've been co-creating with mice, squid, octopuses, bats, and glaciers; glaciers are ecosystems. Through co-creation, we can step out of this human shell, try to recognize their intelligence, and shift our perspectives. In doing that, we interrogate ourselves, and we also cultivate curiosity. For example, when we talk about climate change, there's a lot of climate fatigue going on; collectively, people are over it. But through the lens of animals, there's something you can hold on to, a way to talk about the climate issue or the animal cruelty issue through the wonder of the animal world. For example, with mice, there's a huge stereotype that they're dirty and filthy, like the New York subway is full of rats. Same with bats, especially after COVID: a lot of people think they're dangerous and carry a lot of disease. And a lot of people are afraid of octopuses. A lot of these stereotypes were created by literature in the past, but they are amazing creatures. If you learn about their Umwelten, their sensory experience, all these superpowers they have that we don't have, they're such amazing creatures. And once you tap into that wonder, you totally change how you think about them and how you treat them.

[00:13:42.783] Kent Bye: Yeah. Can you talk a bit about what other interspecies co-creation projects you've done with squid and octopus and other mycelia? What are some of the other things that you've done in terms of this type of interspecies collaboration?

[00:13:56.096] Jiabao Li: So for squid, while I was working with a marine biology lab in Hawaii, I noticed the squid living in the lab were just sitting in these boring white tanks all day long. So I wondered if I could make a playground for them and make their lives more interesting. I collected white and black sand from the Hawaiian islands and made it into the shapes of China and the U.S. The squid lived there for a month, carrying the sand around and reshaping the borders and the maps of the two countries, swimming between China and the U.S. and crossing the borders without a visa or a passport. There are three layers to this. One is that watching the squid reshape the borders and cross between the countries reminded me of myself as an immigrant, moving between China and the U.S., carrying the baggage of two cultures and trying to blend in. I found myself in a situation like the squid's: he sat on a patch of white sand in the middle of the black sand, with a lot of white sand around him. It totally changed the map, but he felt perfectly camouflaged. Another is that we build border walls between countries that can block natural animal migration, and these animals, who don't care about the delineation of borders and countries, are forced to take a side. And after a month, the squid had completely reinterpreted this human-made map and border from the squid's perspective: this map that we have fought wars over for thousands of years, seen through the eyes of the squid. It's drawn on our own terms. As for octopuses, I created a work called Solucene, set after climate change, when the world is under water and the octopus has become the next dominant species, and we imagine what this world would be like. There's a dance, and also a morphing between an octopus and the two dancers. Together the two dancers have eight limbs, and they try to become an octopus, to behave like one and embrace distributed intelligence, since an octopus has eight arms and basically nine brains. So what is it like for humans to have such distributed intelligence? Yeah.

[00:16:05.412] Kent Bye: Interesting. And you also said that you've been working with ecocentric design. So maybe we could talk about whether other aspects of ecocentric design have been integrated into the project you have here this year, Squeaker.

[00:16:18.312] Jiabao Li: So ecocentric design is basically a fundamental paradigm shift from egocentric, where humans are at the top of the pyramid of everything, to ecocentric, where we are intertwined with other living systems. We've been talking about human-centered design for the past decade, and that's obsolete. I think in the next stage we need to embrace ecocentric design. With everything we design, we need to think about the environment and the other species living around us, and that ranges from small things like the products you use, to the built environment, to landscape architecture. And a lot of it is also biodesign: bioplastics, biodegradable materials, all of that.

[00:17:03.919] Kent Bye: Yeah, it reminds me of the philosopher Alfred North Whitehead, who talks about process-relational philosophy, which emphasizes our relationality to the world around us, and how the deepest core of the nature of reality is relationships and potential. It moves away from just focusing on the user and the object, toward thinking about how things are in relationship to the world around us. That's the sense that I get from ecocentric design.

[00:17:31.536] Jiabao Li: Yeah, and even though human-centered design is obsolete, a lot of the new framework we're building around ecocentric design is also sort of looking back to human-centered design, but changing the word "human." There's a lot of understanding the stakeholders, who are not just humans but also the other non-human species around them. Then you design, you prototype, you let them try it, and you gather their feedback. Even though they can't speak, there are certain ways you can gather feedback. And then you iterate on that. And of course, when we talk about this, there are questions around the rights of nature, the rights of more-than-human species. How do you talk about rights when they can't even speak? Things like that.

[00:18:15.405] Kent Bye: Nice. And so what's next for you?

[00:18:17.368] Jiabao Li: Yeah, I just mentioned bats. I'm working on a new audio-visual experience with a sound artist, Matt McCarco, and we are making interesting sounds for the different environments of bat habitats. There's an Israeli researcher who used AI to categorize the different sounds of bats, their different language. They recorded them over several months and used AI to try to understand what they are talking about, and even whether, when you speak back to the bats, they talk back. So just like we have ChatGPT, can we have a BatGPT that lets us speak with the bats? Or can AI help us communicate with the bats? They learned that mother bats have a kind of mom-baby talk that is similar to how we talk to babies, like, "Oh baby, you're so cute." They talk in a similar tune, but at a lower frequency. So we're getting all this sound data. They also have a kissing sound. They have different sounds if they are fighting or mating, if they're in love or they are enemies. So what does a baby bat lullaby sound like, or a love song made from bat sounds, or a war song, or an erotic song? So we're working with these different sounds and the immersive environments of their different habitats, like caves, trees, and leaves. There's the famous Austin bat bridge, where two million bats fly out from under the bridge every night; that's what Austin is famous for. We're also working together with bat researcher Merlin Tuttle, who is like the most famous batman, to talk about bat conservation and the environmental impact of bats.

[00:19:59.007] Kent Bye: Wow, so do you foresee an immersive project that's going to come out of this, then?

[00:20:02.889] Jiabao Li: Yeah, yeah. We'll build an environment, and then it can be any format. It can be VR, it can be a dome projection, it can be an interactive piece. It can even be a DJ set, where we DJ up front with different bat call elements and have a fly-through of the visuals.

[00:20:20.488] Kent Bye: It was interesting to hear you put artificial intelligence into the same bucket as non-human, interspecies communication. So I'd love to hear how AI is coming into all of the work that you're doing, and how it fits into ecocentric design in that context.

[00:20:37.085] Jiabao Li: We realized that when we talk about non-human species' agency and rights and the problem of consent, you can swap every word about the animal for AI and you have the same problem. And when we talk about the non-human, we always ask: is artificial intelligence conscious? What is consciousness? It's a similar kind of question. So that's one thing. The other thing is, I love this phrase that Neri Oxman mentioned: Nature GPT. I like the idea of AI being the middle agent that helps us communicate with nature or helps empower nature. In a way, it's a mutualistic system. The bat project is about understanding them and whether we could even talk to them, and maybe even a world where AI can talk to the animals in a way that we can understand, and they communicate in some way, and who knows what will happen after that. Yeah, and I'm making another work in collaboration with Steve, where we are making an audio-to-video system. It basically chains audio to text, then ChatGPT to expand the text, and then feeds that into Stable Diffusion to turn it into a series of videos with certain cuts. We also take in emotion. For example, we played the news to our system, and it generated a bunch of videos where a lot of people are screaming, in black and white; it totally feels like the energy of the news. Then we played the Republican debate, and it became pop art, with a lot of Supermen in a comic book style with speech bubbles and a lot of people shouting, because it was a Republican debate. So a lot of the emotion and feeling of the conversation goes into the video. We're going to show it in December in China, where people can come and play a game: people each say a sentence, together they form a story, and the story can be generated really fast into a short film. Anybody can come and generate their own short film like that.

[00:22:49.225] Kent Bye: Wow, so it sounds like you've got a lot of projects going on. And since the last time we had a chance to speak at IDFA DocLab 2022, there's been the announcement of Apple Vision Pro. So I don't know if there's anything that you can talk about in terms of what you were working on, or any other reflections on what this new spatial computer might be able to enable in the larger XR ecosystem.

[00:23:10.842] Jiabao Li: Hmm, wow. So I was at Apple for four years. I joined in 2017, at the beginning of the development, and it's really great to see how the headset has progressed. Oh my god, there were so many prototypes, so many designs that we tried. I worked on some of the body tracking and body representation, how you go from reality to the virtual part, and a lot of the technical specs, like latency and SLAM, and what the thresholds are that go beyond human perception, and what the canonical use cases are, and even answering the question of why we would want to slap this thing onto our face. Of course there are the apps that exist in the current iPhone ecosystem, but what are the things that don't exist there, that are unique to 3D and immersive environments, that make it make sense? Yeah, I'm very excited to see what people will create when it's in the hands of so many people.

[00:24:23.418] Kent Bye: Yeah, well, there's the eye tracking with the gesture tracking, which I haven't had a chance to try out yet. But from people who have tried it, I think that fusion of new human-computer interfaces that tie together both eye tracking and gesture recognition is something I'm personally really excited about, to see how it's going to push forward these new human-computer interaction paradigms. A lot of XR up to this point has been very much reliant on controllers, and I think the controllers give a lot of very specific input for pushing a button and having very low latency interactions, or for integrating into more game-like components. But I want to see how the lack of controllers and this fusion between eye tracking and gesture tracking is going to open up all sorts of new paradigms that push forward the future of XR, especially augmented reality and virtual reality. At the same time, a lot of the existing XR community has been very much reliant on those controllers, so it'll be interesting to see how all those things come together. But in particular, that integration between eye tracking and gestures is something that I think is going to be best in class and help push forward the overall industry. So, yeah.

[00:25:40.321] Jiabao Li: The interaction is magical. No other interaction can compare to it. I think just like multi-touch revolutionized the smartphone with the iPhone, this input can revolutionize the immersive world. Although I think when it comes to use in film festival and museum settings, it's a bit hard, because it's a very personalized device. It's tuned to your eye tracking, so every time you put it on, you need to calibrate. And people with glasses have to have lens inserts. So at this kind of festival, it's hard to pass it from one person to another quickly.

[00:26:19.484] Kent Bye: Yeah, I know that even the Magic Leap 2 has lens inserts. Similarly, Apple Vision Pro requires specific lens inserts from Zeiss. When they exhibited it at WWDC, they had all those lens inserts and a way of measuring your glasses prescription, and that's not something a lot of location-based experiences are going to be able to do. So it does sound like it's going to be a very personalized device that isn't necessarily meant to be passed around at an event like this, where many people are coming through. They had the Magic Leap 2 at The Shed in New York City, where you could get some inserts, but they actually said to be sure to wear contact lenses, because it's not very friendly for people who need glasses: you can't really use the Magic Leap 2 with glasses because it messes up the tracking. So anyway, I think a similar thing is going to happen with Apple. It's possible, but it's going to add friction to just putting it on people's heads. So anyway, I'd love to hear what you think the ultimate potential of XR and immersive storytelling, and this kind of interspecies collaboration and ecocentric design, might be and what it might be able to enable.

[00:27:30.809] Jiabao Li: Wow, that's a much bigger question. I think for interspecies co-creation and the ecocentric design world, hopefully we can see that, essentially, battling the climate issue is not just about carbon footprint and carbon sequestration but about a fundamental perception change: realizing that all the other species are fellow travelers with us on this planet. Only through that can we really make these things happen, and hopefully the immersive world can be a tool, a medium, to help with that. That was what Once a Glacier was trying to bring, and what the next bat project is also trying to bring. And for Apple Vision Pro, I'm actually really excited to use it on airplanes. I travel so much, which I also feel quite guilty about because of the carbon footprint, but it's one thing that can take you into another world, away from the heaviness of travel. Awesome.

[00:28:35.358] Kent Bye: Is there anything else that's left unsaid that you'd like to say to the broader immersive community?

[00:28:40.211] Jiabao Li: Well, keep experimenting. Every year I come here, it's a surprise. And I'm very excited and curious about what will happen with these new products. Yeah.

[00:28:51.317] Kent Bye: Awesome. Well, Squeaker: is this a project that's going to continue? Can people keep using it? Are you planning to carry on with the one mouse that you have, or maybe expand if you need to have multiple rewards? I'd love to hear if you have any plans to continue this, or if this is more of a festival run to show this experience.

[00:29:09.168] Jiabao Li: Oh, it's going to be a lifelong project. Personally, I'm going to run a half marathon in Austin in February to test the mouse's training on me, so I need to keep running for that. And we'll further develop the app: I have multiple mice at home, and people can choose based on their physical capability. If you can run more, you can choose a mouse that runs more. And if you identify with a certain mouse, whether it's the color, the look, or its personality, then you can choose that one as the mouse coach you feel attached to. Just after showing it here, I got different suggestions: maybe you can put a tag on a toddler, or on your dog, or your cat, and let all of them be your personal trainer. And they could train you on things beyond running as well. It's on the App Store, and it will be updated from time to time as this development happens. And it's actually a funny story: I run a startup called Endless Health. It's about cardiovascular health, and we also use an AI coach and a human coach to help people build healthier habits. So this is kind of a humorous or sarcastic joke about it: we have the AI coach, we have the human coach, and now we have the mouse coach. We'll compare all of them and see which one works best. It would be so funny if the mouse coach worked best. And we have running events here. We did one run yesterday morning and another one tonight. During the run, we did a loop, which is three kilometers, and we saw, oh, we beat the mouse, we ran more than the mouse. And then the mouse started running, and people were like, no, the mouse started running, we need to run another loop. So we ran another loop and finally passed the mouse again. Then on round three, the mouse started running again. Some of us are so ambitious and wanted to beat the mouse, so they ran a third loop. That's nine kilometers in the morning.

[00:31:22.365] Kent Bye: Wow. And just one other quick follow-up: you mentioned the process of cultivating new habits. I know there's a book called Atomic Habits, and I'd love to hear any other reflections on what it takes to cultivate and develop new habits. What research or insights are you gathering to help with the design of this project?

[00:31:38.239] Jiabao Li: Yeah, so there's Atomic Habits, there's Tiny Habits, and there's a bunch of podcasts that are discussing this kind of thing. I think one is positive reinforcement. That's where the cue-action-reward loop comes in: you do a small thing, and then you immediately get a reward. That's why there's the reward from the mouse: you get to scroll on social media. And also, because you run, there's dopamine, so you don't need the dopamine of social media, so there's something there as well. Another is to meet people where they are and make things small and bite-sized, so it's really achievable, instead of a grand goal that's hard to achieve over a long time. I think the habit loop is like the golden rule of habit formation. And it takes time; three weeks, 21 days, is a good count. Yeah, and maybe find something that can make you accountable, whether it's your friend or the mouse, and doing it together with other people is more fun. Building a community is what we are trying to do here with this running community. When we ran in the park, a lot of strangers, just random people in the park, joined, and a running group of like 15 people also joined us. So you build community, and people running past us were like, what the heck is a mouse coach? What's the badge there? And we have those running badges, like the marathon badge. So you build a community with that. It's always more fun to do it together with other people. Yeah. And having social media as the reward is also a comment on the attention economy. When they studied the attention economy, a lot of the experiments were done on mice. And so that's another loop: you have this reward system, and when Facebook designed the like button that gives you the dopamine rush, it was based on the same theory of reward systems studied in mice. So that's why I have this system where the mouse trains you and you get to scroll on social media.
And it's also a play on control: who is controlling whom? What does it mean when the mouse can control everything? And another important thing we talk about with interspecies co-creation is how you give agency to the animals, so you are not just using them as paint or clay. They really have agency, they get to have a say in the work, and you respect their intelligence and behavior. The mice here are doing whatever they're doing during the day, and they like to run on the running wheel. There's nothing they are forced into. They are living happily in the environment there.

[00:34:06.438] Kent Bye: Awesome. Well, lots of really exciting projects that you have here, and it's really interesting to hear all the different ways that you're doing this type of interspecies collaboration and co-creation, and yeah, the habit forming, the habit loop, and also the ecocentric design and all the different creative coding projects that you have going on, and how you'll be taking this type of research and feeding it into immersive interactive experiences for people to have these direct embodied experiences, as well as forming community with these different AI trainers, or mouse trainers, and all these other projects. So thanks again for taking the time to share a little bit more about your project and some of the other things that you have coming down the pike. So thank you.

So that was Jiabao Li. She created an interactive art installation and mobile application called Squeeker: The Mouse Coach. I have a number of different takeaways about this interview. First of all, there's this idea of interspecies collaboration and moving away from human-centered design into ecosystem design, really thinking about the broader relational dynamics that go beyond just focusing everything on the human, and seeing how humans are related to the broader ecosystem. And on this foundation of interspecies collaboration: what are the ways that we can be in closer relationship to these animals and actually give them agency in different ways? So lots of really provocative thoughts for an experience like this. The actual installation at IDFA DocLab had a number of different components.
There's a treadmill with these different IoT devices that are trying to control how fast the treadmill is going based upon how fast the mouse is going. You have a mobile application that you can download, and it sends you lots of notifications, so that at the end of the day you either have or haven't run more than the mouse. If you have, then you can tie that to your screen-time applications and set up conditionals, so that if you do run far enough, you're rewarded by being able to look at different social media applications, and if you don't, then they're basically locked down. And then the mouse is also getting different rewards, which obviously means that as more and more people use this, they're either going to have to have more mice or find ways to throttle it. So it's going to be a little bit difficult to scale up to lots and lots of users if the mouse is also getting rewarded based upon everybody's individual or collective behaviors. So yeah, it's really fascinating to dig into some of the deeper research interests behind these different types of interspecies collaboration prototypes. And the actual installation had a treadmill, a physical installation of what looked like a mouse cage with a projection-mapped mouse running on it, a big widescreen showing a video, and a live stream of the mouse showing whatever's happening in real time. So lots of different components in an interactive media art installation that has lots of different technological integrations to create this experience of turning a mouse into a running coach. So, that's all I have for today, and I just wanted to thank you for listening to the Voices of VR podcast. And if you enjoy the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon.
This is a listener-supported podcast, and so I do rely upon donations from people like yourself in order to continue bringing you this coverage. So you can become a member and donate today at patreon.com slash voicesofvr.