Kevin Cornish is an LA-based VR filmmaker who teamed up with AMD to do some interactive narrative experiments using gaze-based content in Believe VR. Depending on which character you look at, different moments are triggered, giving up to 32 different variations within this one-minute experience. He's using Unreal Engine mixed with live-action footage to achieve this, and he hints that additional post-production tools will be released that make this type of experience easier. I had a chance to interview Kevin at GDC, where he shared his ideas about writing interactive narratives, first-person storytelling for mute characters using the reluctant protagonist as a guide, stories where characters empathize with you, and the role of emotion and eye contact in immersive experiences.

Theme music: “Fatality” by Tigoolio

Rough Transcript

[00:00:05.452] Kent Bye: The Voices of VR Podcast. My name is Kent Bye and welcome to The Voices of VR Podcast. Today I talked to Kevin Cornish who is a film producer from Los Angeles and he was working on a lot of different interactive narrative experiments with AMD doing gaze activated content and so I'll be talking to Kevin about his BelieveVR experiment that premiered at VRLA in January, as well as some of his other ideas about trying to create an engaging VR narrative using constructs like the Reluctant Protagonist and his ideas around reversing the equation of empathy within narratives. So that's what we'll be covering today in the podcast. Today's episode is sponsored by the Virtual World Society, and they are an emerging organization that is starting to recruit members to become the Peace Corps of VR. They really want to find the ultimate potential of VR and how it can transform society. So they're starting to recruit members as well as content creators to be able to use VR to help change the world. So you can find out more information at virtualworldsociety.org. So with that, just to set the context as to where this interview happened, it was at GDC, and AMD was having their launch event for the dual Radeon Pro, which was their new GPU, and I was in the back room of the VIP press area, and I had just done an interview with AMD's Roy Taylor, and at the end, Roy was like, oh my gosh, you have to really do an interview with this guy named Kevin Cornish, and right as Roy walked away, Kevin actually came up to me and introduced himself and that's how the interview came about and Roy was right He had lots of really amazing ideas about narrative in VR. And so with that, let's go ahead and dive right in My name is Kevin Cornish.

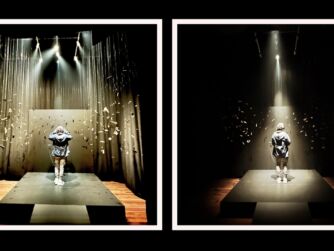

[00:02:03.159] Kevin Cornish: I'm a filmmaker and My background is in features and I was really fortunate about a year ago I got connected with Taylor Swift through Haley Steinfeld who was in a movie that I produced and Taylor wanted to do something that was in 360 and so we just shot something just to play around and see what it would be like. So the idea was doing something that was like, what's it like if you're friends with somebody like Taylor Swift? So we did something that was like at a birthday party for one of her friends and you're like there and like you're with her and then as like they're bringing in the cake, it feels like you're one of the girls. Well, the really interesting thing, once we got that into the headset, was that because nobody was paying attention to you, it feels like you're there, but it also feels like you're being ignored. And that alienation is really sad and really painful. And then there was another moment later on where Taylor speaks into the camera and you watch that and you're like, oh my god, this is incredible. It's like, I'm right here with this person. So pretty much since then I've been obsessed with first person storytelling in VR. The next thing that I shot after that was something at a Star Wars celebration and there we did something really cool with some stormtroopers where you're surrounded by stormtroopers and they're all like getting mad at you and questioning you and asking why you're there and why are you looking for these drones. You know, all the stuff that you want to hear stormtroopers yell at you about when you get caught by them. And there are great things about shooting Stormtroopers, which is their mouths don't move, so you can actually ADR, which in most 360 production, the challenge is there's no cheats. You know, you screw something up in writing in a normal film, you just cut to the back of somebody's head, you give them the line that you should have figured out the first time, and now the scene works. 
So that was kind of a cool thing. But there are a couple things that I've learned as far as the first-person storytelling. As I was first shooting tests, I was thinking, OK, you basically have to write for a character who can't speak, which is quite a challenge, but not impossible, because there's the great narrative convention of the reluctant protagonist, which is what you get in a movie like Casablanca, where the whole movie is driven by Rick not giving over the transit papers. So every time that he says no or he doesn't act, it moves the story forward. So I was playing around thinking about that kind of thing in different scenes. So one of them was, you're at dinner with your boyfriend or girlfriend. There's a phone in front of you. The phone rings. They ask you who it was. And since you're silent, they respond to your silence, and that makes them suspicious. And then they express their suspicion and they're like, why aren't you telling me who it was? And then when you don't respond, now the suspicion escalates and now you've got to go to anger. So creating this kind of scenario where, writing for a character who can't speak, you can still move the scene forward in a way that's fully first person and fully immersive without the cost of having an AI avatar that's photorealistic that has to be built. I've seen some amazing ones and that's gonna be incredible, but that's a whole nother thing. So that was kind of one direction that I was going. Another thing that I noticed in my VR watching: there are a couple of really interesting first-person narrative VR experiences where they put the words of the character that you're playing basically in the voiceover. So when your character says a line, you hear the line that you're saying, and then there's the back and forth. And I was actually shocked at how well it worked and how well my mind just totally accepted, oh, that's me saying those words.
And there's an incredible one called I Am You, which I don't know if you know that one, but it's awesome. It's very Charlie Kaufman, very Being John Malkovich. And the idea is there's a couple and they kind of get this device that allows them to switch consciousness. So the guy can be in the girl's body and the girl can be in the guy's body. And you're seeing it from the guy's perspective but with the girl's consciousness. And it's this really incredible thing. Well, as far as all the first-person storytelling, when you're inside an experience, what I think is the most powerful thing is when somebody's expressing an emotion towards you. You know, the Chris Milk thing is that VR is the ultimate empathy machine. It's kind of the opposite, where what I really like is the idea of being in there and somebody is feeling empathy for me. I mean, it's clearly a very narcissistic thought, but that's something that I'm really trying to figure out, is how to get people that feeling. So, it's almost like emotional porn, where you're going into this VR experience and you're getting attention that's very important on a human level that you may not be getting somewhere else in your life. But biologically, we need that attention. And so being able to get that from VR, I think, is an amazing thing. So all of this is kind of going towards a piece that I did with Roy Taylor at AMD for VRLA. And the idea was showing off how gaze-activated content could work. And so I wanted to do something where you're inside of a scene and your emotional reactions to the things that are happening in the scene change the way that the actors in the scene behave. So the way that the scene that I did works is there are two girls in the scene. One of them's your girlfriend, the other's a girl who's telling you that your girlfriend's cheating on you. And the way that it works is whoever you're looking at speaks to you and pleads their case.
So if you start, if you're looking at your girlfriend, she's there and she's telling you, this isn't true, whatever you've heard, don't believe them. And while she's saying that, another voice is trying to interrupt her. And this is the other girl who's kind of sitting kind of right on the other side of the table. So when you look to the girl that's interrupting, then she starts telling you all the terrible things about your girlfriend that your girlfriend didn't want you to hear. And then your girlfriend tries to interrupt you and get your attention back. So it's this kind of cool thing where you've got a two-character scene where they're both fighting for your attention.
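
The "whoever you're looking at speaks to you" mechanic can be sketched as a simple angle test between the headset's forward direction and the direction to each character. This is only an illustrative sketch, not how Believe VR was built in Unreal: the function names, the character positions, and the 30-degree threshold are all assumptions made for the example.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def gaze_target(head_pos, head_forward, characters, max_angle_deg=30.0):
    """Return the name of the character nearest the gaze direction.

    Returns None when no character lies within max_angle_deg of the
    head's forward vector. All names and values here are hypothetical.
    """
    forward = normalize(head_forward)
    best_name, best_angle = None, max_angle_deg
    for name, pos in characters.items():
        to_char = normalize(tuple(p - h for p, h in zip(pos, head_pos)))
        # Clamp the dot product so rounding error cannot break acos.
        cos_angle = max(-1.0, min(1.0, sum(f * t for f, t in zip(forward, to_char))))
        angle = math.degrees(math.acos(cos_angle))
        if angle < best_angle:
            best_name, best_angle = name, angle
    return best_name

# Hypothetical layout: two characters seated across a table from the viewer.
characters = {"girlfriend": (-1.0, 0.0, 2.0), "friend": (1.0, 0.0, 2.0)}
```

Calling `gaze_target((0, 0, 0), (-1, 0, 2), characters)` would pick the girlfriend, and that result could then decide whose lines play next while the other character's interruption runs underneath.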

[00:08:35.316] Kent Bye: Yeah, I think that the thing that's really striking about that to me is that a lot of times in 360 video you don't have the ability to necessarily change the action. You're kind of set. And you do actually have some capability to do positional audio. There were some experiences at Sundance where, depending on what you were looking at, you could listen to the different conversations, but it's still kind of on rails, like the story isn't changing. So it sounds like you're creating this interactive dynamic environment where, based upon who you're looking at, you may get a completely different story or ending. So is there any branching behavior here? Are there different outcomes and endings depending on how much attention you're dividing between these two people?

[00:09:12.627] Kevin Cornish: Yeah, so the way that this one worked is depending on where you look over the course of it, there are 32 different versions of the scene.

[00:09:18.629] Kent Bye: 32, is that what you said? Yeah.

[00:09:22.063] Kevin Cornish: Yeah, and so basically it started with the decision tree, and then I shot all the different lines that could come up over the course of the scene based on all the different scenarios of the different points when the user could turn their head from one direction to another. What was really interesting about it and what's really exciting to me is it was really difficult, and I did this in January, and from what I understand, sometime in the next couple of months it's going to be really easy. So the way that we did it was we shot them on green screen, and then the first version that I did, I did with image sequences and an alpha matte, and then brought the image sequences into Unreal. The image sequences worked pretty smoothly. Luckily, I was doing it with AMD's help, so I was doing it on their Tiki, and the AMD card really is pretty strong. So that 20 gig file was able to play pretty smoothly, but that wasn't something that could really travel. So after doing it to show it at VRLA, I was like, if I want anybody else in the world to see this, I've got to figure out some kind of way to optimize it. So the only real option in Unreal at the time was Windows Media files, which are not conducive at all to any kind of a convenient workflow. There's no sync sound. They don't play smoothly going one to another. But it kind of was what it was. We were able to get the minute-long scene down to 180 megs, and I'd want to figure out a way to get it onto Gear VR. But the thing that was really cool is talking to the guys at Unreal about the future of their media player. And I don't know how much they've announced. I haven't really been following it. But basically, the rumor is that sometime over the next couple of updates, they're switching it over to VLC and also allowing for H.264 compression.
So what that'll mean is that doing bigger scenes with more characters will be easy, and doing longer experiences can still be done in a way that gives you an interactive experience at a file size that could potentially play on the Gear VR.
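
One way to picture how a decision tree arrives at 32 versions is to assume five points in the scene where the viewer's gaze is sampled, each with two possible targets, since 2^5 = 32. The interview does not say how the tree was actually laid out, so the five checkpoints, the target labels, and the clip naming below are all hypothetical.

```python
from itertools import product

TARGETS = ("girlfriend", "friend")  # hypothetical labels for the two characters
CHECKPOINTS = 5                     # assumption: five binary gaze checks, 2**5 == 32

def all_versions(targets=TARGETS, checkpoints=CHECKPOINTS):
    """Enumerate every possible sequence of gaze choices through the scene."""
    return list(product(targets, repeat=checkpoints))

def clips_for(path):
    """Map one gaze path to hypothetical clip names, one per checkpoint.

    Each clip is named after the gaze history so far, so the clip played
    at a checkpoint depends on every earlier choice.
    """
    clips = []
    for i in range(len(path)):
        history = "".join(target[0] for target in path[: i + 1])  # e.g. "gfg"
        clips.append(f"clip_{history}")
    return clips

versions = all_versions()
distinct_clips = {clip for path in versions for clip in clips_for(path)}
```

Under these assumptions there are 32 paths but 62 distinct clips in a fully branching tree, which gives a sense of why shooting every line for every scenario was a lot of work. A real production would likely reuse clips across branches to bring that number down.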

[00:11:40.182] Kent Bye: I'm trying to get in my mind what this scene is actually looking like. Is this something that is 360 video that is somehow getting spliced based upon your gaze? Or is this something that is being rendered in a CGI environment with somehow translating the green screen characters into actual digital characters and avatars?

[00:11:57.411] Kevin Cornish: Yeah, so basically what we did is we did a cheat. It's in a 3D environment because it's Unreal. If you imagine setting up playing cards perpendicular to the viewer, and then we'd put some volumetric lighting around them so that that lighting matched the lighting that I had set up on the stage, so that kind of created a little bit of feeling of 3D. And then the other thing, so much of making this thing feel like you're really living in 3D space is the parallax. So I put a table in front of them that was actually a 3D asset. So I staged it such that they're sitting at a table, and the table that they're sitting at, you're sitting at. So as you're moving your head around, there is a true six degrees of freedom. As you're moving your head around, the table is interacting with them with a parallax that would be the same as you would get if you had motion captured them and done the whole thing in a 3D environment.

[00:12:52.186] Kent Bye: So how much content is there from end to end? I imagine there might be a range since there's like 32 different versions, but on average, what's sort of like the shortest and longest this experience would be?

[00:13:01.492] Kevin Cornish: Well, so I guess the other thing is it was something that we made in about 72 hours. So there were a lot of cheats that went into the way that it currently exists. So right now, it kind of goes about the same length no matter what. So depending on how fast you move your head back and forth, you end up skipping one character's lines. You don't hear all of their lines. I don't know if that makes sense.

[00:13:29.441] Kent Bye: Yeah, but how long would it take to kind of watch it?

[00:13:31.681] Kevin Cornish: Oh, it's a minute-long experience. The first thing that I saw in VR was the Volvo experience, which I thought was amazing. You look out of the sunroof and you're like, oh my god, this is incredible. This is what 360 can be like. And then you're like, OK, that was the moment that the train drove at the crowd in the theater. Now what's next? So one of the things whenever I'm developing an experience, the first question is, what's the feeling that I want the audience to feel when it starts, and then what's the feeling that I want them to feel when it ends. And the difference between a story and something like the Volvo experience is that those two moments have a different feeling. So with this one, it was like, you started off, like, casting doubt on the two characters, and then the viewer goes through an experience where different levels of trust and credibility are built between you and the characters. And then at the end you decide which one you trust more and then there's twists.

[00:14:27.042] Kent Bye: Nice. And so given this, what's sort of next with, you know, building this out in terms of like, did you want to tell longer stories like this and have many more like branches and variations?

[00:14:37.426] Kevin Cornish: Yeah, so I think cinematic VR I think is incredible, the possibilities. One thing I'm working on right now is doing something that's like this, except with multiple users in the same experience. So this one's actually a car chase where it's you and then whoever your friend is, wherever their headset is, are both in a car in the middle of a car chase and the driver is like, I don't know who you guys are, I don't know what you did, I don't know how you got me into this, but they're the ones, they're like freaking out and you're the one to blame and you're being chased and there's gunshots and it escalates and it's cool.

[00:15:24.137] Kent Bye: So when you were talking about this to me before you were saying it was you're kind of reacting to the emotional states of the viewer and so how are you translating gaze into being able to deduce from what they're looking at what their emotional state may be?

[00:15:37.785] Kevin Cornish: Well, so that's a good question. I mean, basically, at this point, I've been mostly working with really big, broad emotions like attention. Somebody's yelling at you. You pay attention to them, like in the car chase. Kind of the way that that'll work has to do with when you're in such extreme chaos, the actual effect that one person's words or minimal actions have on the scene becomes very small. So the scene kind of stays going in the same direction. It's like a train. Regardless of where people walk around on that train, the train's still going to the same place. So there it's a little bit of a cheat. In Believe VR, the characters ask specific questions that garner emotional responses, and then your answer is determined by where you look. And then they respond to where you looked with emotions of their own. It's very elementary.

[00:16:37.751] Kent Bye: So if you were to kind of map out the emotional arc, would you have a distinct, like, this is the path of, like, fear and doubt, and this is the path of excitement and lust, or, you know, I'm just trying to figure out, like, how you think about these emotional beats as you're generating a story like this.

[00:16:54.353] Kevin Cornish: Well, I don't want to say too much about this one because I don't want to reveal too much, but I'm doing Romeo and Juliet right now, and basically the way that that one works is you'll have the choice between playing the role of Romeo or playing the role of Juliet. And there it's one where it starts with a feeling of awe and wonderment, and then an exhilaration at the moment of the first kiss, and then ending with the thing that you want from Romeo and Juliet, which is two lovers who can't be together. It can only end with loss and sadness.

[00:17:29.002] Kent Bye: Yeah, and the thing that is really interesting to me about this and what you're doing here is that it's starting to be some of the first real examples that I've heard that is really blending a lot of this local agency and global agency so that your small actions don't just flavor your experience, but they actually change the outcome. A lot of the 360 video experiences up to this point have been pretty passive, even if you're a character that's being addressed within the plot, or you may be addressed and talked to, and you're kind of mute, you can't really say anything, or like you said, you may talk, but it's not coming from your own free will and your own agency, and so you're starting to get into this realm where I think is really the sweet spot of VR, being able to get this dynamic interactions and give people maybe a shorter experience, but more variation so that it has a lot more replayability.

[00:18:14.368] Kevin Cornish: Yeah, one of the main things that I'm trying to figure out and go for is interactivity where you're interacting on a subconscious level and you don't consciously know that you're interacting. Because I think that there's an inverse relationship at a certain level between presence and interactivity if you know that you're interacting. So one of the things that I try to figure out and think about in the concept stage of a project is what do we do to get the deepest level of immersion and the deepest level of presence. And there's one right now that I'm in development on and this is a question that comes up a lot because there's a desire for it to be interactive. And I'm like, the more you tell people they need to complete this puzzle to get to the next level, you're making them consciously think. And it's kind of like, you know, in developing movies, there's that, is it the heart or is it the mind? Like, where is this beat trying to hit? And interactivity is very much in the mind where what I think the deepest levels of presence really hit you in the gut.

[00:19:29.492] Kent Bye: Yeah, and talking to Eric Darnell of the Baobab Studios, you know, he made this point of, like, there could be a trade-off between empathy and interactivity, and really argued that the more that you're interactive and engaging in an experience, it becomes more about your completing a goal of trying to figure out what the rules, the boundaries of your interaction space may be. It takes you out of that place of really being able to receive the story. So, it sounds like you've come independently to this same conclusion that the more that you're becoming interactive, the less you are able to be as a storyteller, tell something that people are able to really receive and empathize with.

[00:20:05.699] Kevin Cornish: Yeah, I mean, the thing that I want to do really right now is figure out, and I've got a few that may be this project, but what's going to be the first tentpole VR experience? And what's that going to look like? And I feel like it's going to be like a 10-minute type of a film. But there are definitely different levels of interaction that can still work in a way that feels like a passive narrative experience. A very interesting format is the procedural, if you were to imagine what the VR version of an episode of Law & Order or an episode of CSI would be. When you think about what those shows are and how those stories work, it's basically you're going into a room, you're going to ask somebody a bunch of questions. Based on their answers, you're going to go to the next scene and ask somebody else a bunch of questions based on the previous answers that you got. A story structure like that allows for different scenarios to be shot in a very choose-your-own-adventure kind of a way that doesn't necessarily require moments that take you out of the experience by asking you to make the choice about the next place that you're going to go on the adventure.

[00:21:28.573] Kent Bye: Great. And for you, what do you really want to experience in VR then?

[00:21:32.396] Kevin Cornish: Well, I'm obsessed with love stories. So, the feeling of feeling loved, I think is the most powerful thing that somebody can get in VR. So, figuring out experiences where people go in and they can have that feeling.

[00:21:48.966] Kent Bye: Yeah, I feel like, you know, one thing that's come up a few times as I'm listening to you talk is that one thing that happens in 360 video is that whenever you get eye contact and it's pre-recorded, there's this kind of weird feeling where you don't feel like you're really there, you're kind of a ghost. You kind of talked about it a little bit. Having this gaze-based interaction where you have different scenes that are playing out is one way to address that, but how do you expect that to work out with using something with film? Do you foresee that eventually you'll be moving into more computer-generated and dynamic interactive avatars that may be able to give a little bit more body language and social cues, or is this something where you feel like you're going to stick with the actual actors in real capture?

[00:22:29.238] Kevin Cornish: Well, what I think is one of the most powerful things is what you said, eye contact. When it comes to the headsets, your head is not always in the same place, your eyes are not always in the same place, so with live performance capture there's an eyeline issue, where when you're directing actors, you're telling them where you want them to look. When you're shooting that in 360 and it's going to be a 360 experience, they're always going to be looking directly into the eye of the user. When you're in an experience where, if it's for the Oculus or the Vive, and they can move around, where their eye is looking is not always directly into the eye of the user. The other part of this is, for me, the most powerful stuff is the stuff that's in the near field, which, when you think about blocking a scene for VR, is the equivalent of the close-up in film. That's like your money shot, when your actor is right there. When you're at like the medium shot, if you as the user move your head six inches one direction, and the actor that you're talking to or that's talking to you is six, seven feet away, and they're looking at you, you're not really going to notice that they're not quite looking at you, but you will notice that in the close-up. I think there will be photorealistic avatars not very far off. I think it's still a couple few years away. I've seen one that is incredible. There's a lot that I want to say about it, but I'm not allowed to. But it definitely exists. It's only kind of at a head stage right now, but as far as photorealistic facial expressions, it's right there. The challenge with that is that having the eyes follow the user is more than just the eyes, in that the way that the chin moves, the way that everything moves, there's a lot that has to be done to maintain that eye contact in terms of what kind of rigging you have if it's a 3D model.
I don't know, that was kind of a rambling non-answer to the question that you asked, but the question that you asked is, like, a very big question that needs to be solved.
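
The point about eyelines being forgiving at a distance but not in the close-up comes down to simple trigonometry: the same sideways head movement produces a larger angular miss the nearer the actor is. A rough sketch follows, using the six-inch head movement and six-foot distance from the answer above. The 1.5-foot close-up distance is an assumption for illustration, as is the function name.

```python
import math

def eyeline_error_deg(head_offset_ft, actor_distance_ft):
    """Angle between where a pre-recorded actor looks and where the viewer's
    eyes actually are, after the viewer moves sideways by head_offset_ft."""
    return math.degrees(math.atan2(head_offset_ft, actor_distance_ft))

medium_shot = eyeline_error_deg(0.5, 6.0)  # six inches off, actor six feet away
close_up = eyeline_error_deg(0.5, 1.5)     # same movement, assumed 1.5 ft close-up
```

With these numbers the miss is a little under 5 degrees at six feet and a little over 18 degrees in the assumed close-up, which is consistent with the mismatch being hard to notice in a medium shot and obvious in the near field.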

[00:24:47.086] Kent Bye: Yeah, it sounds like it's still quite a big open problem, and that's probably the reason why I ask, because of the type of experiences that I think are in the future. You know, last year at GDC, I talked to Rob Morgan about narrative design in VR, and he made that point that when you're in a VR experience, you really need to have a lot of that body language with the avatars and the characters, and if you don't have that, it breaks presence because you know that it's not actually there. You don't buy it. And so a lot of these things we're kind of dealing with. In order to have that highly dynamic interaction, though, you kind of have to move towards the more CGI experiences, because the more that you do the live 360 video and it doesn't match all of your expectations of what social behavior people should be doing, then it also breaks presence. And so you have to make these trade-offs, it sounds like.

[00:25:30.853] Kevin Cornish: Yeah, one thing that I think will work, for a little bit at least, as far as the 360 video, is this idea of making it a truly passive experience, but giving your character pre-scripted lines that you're hearing. So, when you sit down and watch a movie, you associate with that main character, and the things that that main character says, you kind of feel like those are words that you're saying. Doing that, where there's a voice inside of your head that's speaking the words that your character is saying in first-person VR storytelling, I think can actually work pretty well. I mean, the other thing is a matter of cost. So, what I really like about the idea of shooting an actor and putting them into a game engine and then making a scene out of it is I can do it for really, really, really cheap. So, the cheaper that I can do it, the more I can make, and the more fun that that is.

[00:26:25.397] Kent Bye: And it sounds like there might be other solutions of like locking down the camera and stuff like that. So taking away positional tracking or the ability that people would move around, you can also do a lot of cheats.

[00:26:33.992] Kevin Cornish: Yeah, well, I'm really interested to see what Lytro is doing. Like, there's a version of capturing performance capture with like an array light field camera that solves the problem that I was talking about. Because now you have the data of wherever your head is moving. So there's no need to adjust for it.

[00:26:58.745] Kent Bye: And finally, what do you see as kind of the ultimate potential of virtual reality and what it might be able to enable?

[00:27:04.946] Kevin Cornish: Well, a big key to getting to the ultimate potential is all the work that you're doing. So before answering that question, I've really got to thank you for everything that you're doing, because these podcasts are an incredible education, and they are invaluable for everybody who gets a chance and everybody who's smart enough to listen to them. As far as kind of storytelling going, like when I think about movies, and I think about Instagram, and I think that Instagram is a story that never ends, where you follow a person that's a character in a story, and every time they post, there's another chapter to their story, and there's something really addictive about that. The way that the brain processes different imagery has to do with this. So when you see an environment, those shapes that you're seeing are processed in a certain place in your brain that's only mildly stimulating. When you see a face, because of the way that we've developed as far as evolution, that goes to a different part of your brain because we need to know what are the eyes telling us, what are the facial expressions telling us. So that part of the brain is more stimulating. When you see somebody who's familiar, like a family member, that goes to a third part of the brain that's actually the most stimulating part of the brain. And this is the reason why celebrities have such power, because when you see a celebrity, your brain is processing that in the same place that it processes you seeing your mom. That's why when you see those faces on a magazine, it goes to a different place than where it would go if you were seeing a stranger on that magazine. So what I feel like what can happen in VR is something where you're seeing the same faces and the same characters experience after experience after experience so that your brain stops registering them as strangers and starts registering them as people that are familiar. 
And that combining that with the emotional aspect of these characters expressing emotions towards you, I think can be really, really powerful.

[00:29:13.452] Kent Bye: Wow. That was really deep. Thank you. Is there anything else that's left unsaid that you'd like to say?

[00:29:19.614] Kevin Cornish: I should probably stop talking before I go too far down the Spike Jonze rabbit hole.

[00:29:25.576] Kent Bye: Great. Well, thank you so much. Thank you, man. So that was Kevin Cornish. He's a film producer from Los Angeles who has been collaborating with AMD on a number of different interactive experiences. The last one was called Believe VR, and he'll be working on some more content, and I'm really looking forward to seeing the other stuff that he's doing. Kevin's got a lot of really interesting ideas about narrative in VR, how to drive stories when you are a passive ghost essentially, and you have no ability to speak into the narrative, at least at this point before the AI machines are able to do natural language processing. And so he's starting to experiment with just being able to use gaze-directed controls to be able to imply different emotional reactions and help drive the story forward in that way. And so it's really interesting to see where this goes because it's starting to go into that magical quadrant of interactive narratives where you have a little bit of local control within your experience where you're making decisions and you're able to potentially change the outcome of the narrative. And so I would point people back to episode 292, which was four different types of stories in VR with Devon Dolan, where we start to explore the different dimensions of narrative in VR. This is moving in the direction of having those interactive dramas. And one of the interesting points that it reminds me of is from episode 335 with Valve's Chet Faliszek. Chet is a writer. And the challenge, he said, is once people go through these interactive experiences, at the end of it, do they know that they were actually making choices? And do they know that their choices were having any impact or consequence? Or do they just believe that that was the experience that they got?
So I'm just imagining that if this type of Believe VR experience was carried off so well, then you may actually feel like it was just kind of timed to your reactions rather than you actually feeling like you're a part of something that was driving the story forward. So this whole dimension of interactive narratives and going down these different branching behaviors starts to point to a lot of open problems with interactive narrative in the sense that if you do it really well, then the audience may not even know that they were making decisions and acting. And it sounds like that's a lot of the direction where Kevin is trying to go, to create these situations where the viewer is not even necessarily conscious that they are making the decision, that it's more from their heart or from their sense of presence of just reacting rather than thinking about it and thinking about the implications of their consequence. And so when you're thinking about trying to create a narrative and whether or not you're putting your viewer into their mind or into their heart or their body and just getting into a little bit more deeper levels of presence, I think what he's saying is that if you are focusing on the heart and the body, then you can actually achieve more presence and have more engaging interactive experiences. And the person may not even realize that they're interacting. And if that's the case, then that's great, because you've essentially just created such a deep sense of presence and realism that they forgot they were in a virtual simulation. So just a couple other points. It's interesting to me that there's going to be better and better tools for this. This is the early wild west days. And so anything that people are doing that is innovative and cutting edge like this is going to take a lot of work and they have to get through a lot of limitations in the hardware and the technology and file sizes.
And so it sounds like the tool set is going to get better for more of these experiences, and I'm really curious to see from a story perspective where writers and content creators are able to take narrative in the future. Also, just this idea of reverse empathy of, you know, instead of going into an experience and empathizing with another character, what would it feel like to be in a narrative experience where the characters are empathizing with you? Now, on the surface, it's hard to see what they might be taking as input, but I can imagine a time in the future where there's all sorts of different biofeedback where you're looking at heart rate and all sorts of other biometric feedback to be able to feed within the VR experience so that it can actually start to react to your reactions. And so it'll be interesting to see where that goes in the future, where the characters are actually responding to some of your emotional responses and to perhaps be able to use some artificial intelligence to create these narrative experiences that do feel really real. So with that, I just want to send a quick shout out to my Patreon campaign. I am getting ready actually to travel out to San Jose for the Silicon Valley Virtual Reality Conference. And I'll be speaking there about highlights from 400 different interviews of the Voices of VR podcast. I'll be speaking at 11am on Thursday. And the SVVR Conference and Expo is actually kind of my two-year anniversary for The Voices of VR. I started the podcast on May 4th of 2014, which is, we're coming up on that, and I'll be nearing episode 360 by then. So if you've been enjoying the podcast and would like to help contribute to its future, then please consider becoming a contributor to my Patreon at patreon.com slash Voices of VR.