Tom Furness has been pioneering virtual and augmented reality for the past 50 years, which is longer than anyone else in the world. He has an amazing history that started back in 1966 while he was in the Air Force building some of the first helmet-mounted displays, visually-coupled systems, and eventually The Super Cockpit. Furness eventually left the military to beat his swords into plowshares and bring these virtual reality technologies to the larger public by starting the Human Interface Technology Lab at the University of Washington, which has been doing original research to validate the efficacy of VR for everything from medicine to education and training. He also helped invent the virtual retinal display technology in the early 90s, which is being used as some of the basis of Magic Leap’s lightfield display technologies. Tom has continued to be a virtual reality visionary, and has some pretty inspiring ideas about the future of the metaverse and education through the Virtual World Society.

Tom Furness is an incredible visionary and pioneer of virtual and augmented reality. He talks about the early days of virtual reality, when he was one of three people who were involved in helping to create this new immersive medium that’s now known as virtual reality. Morton Heilig’s Sensorama was one of the first immersive multimodal experiences, and Ivan Sutherland’s The Sword of Damocles was being developed at the same time that Tom was working on some of the first helmet-mounted displays for the Air Force from 1966 to 1969. Tom was serving in the military as the Chief of the Visual Display Systems Branch, Human Engineering Division of the Armstrong Aerospace Medical Research Laboratory, Wright-Patterson Air Force Base in Ohio.

Tom says that he had different motivations for getting into virtual reality than Ivan. He has always been much more focused on solving real problems with VR. It started in the late sixties with trying to help fighter pilots cope with the increasing complexity of fighter jet technology. Some of the specific problems that he was trying to solve included how to use the head to aim while shooting, how to represent information from imaging sensors on virtual displays, and how to make the systems less complex to understand and operate. There was limited space in the cockpit to monitor and control both the flying and fighting, and so Tom turned to creating augmented reality systems to display more information to the pilots in a virtual environment. This resulted in the first “Visually Coupled Airborne Systems Simulator” system that he helped develop in 1971.

A lot of the history and development of virtual reality from the late 60s to the early 90s has been fairly dark and hidden. Tom is the first person that I’ve interviewed who has been involved with the evolution of virtual reality from the very beginning. He points out that a lot of the virtual and augmented reality programs he was working on were being developed primarily to help fighter pilots in the cockpit first, and that the flight simulations and other training applications came after that.

Because of this, it’s highly likely that there has been a continuation of AR and VR technology used by fighter pilots that we don’t know a lot about due to its sensitive and likely classified nature. We have to rely upon various trade magazine reports and books to start to piece together some of the details of some of these programs. In a book titled Virtual Reality Excursions with Programs in C, the authors say:

In the 1970s, for the first time, the capabilities of advanced fighter aircraft began to exceed that of the humans that flew them. The F-15 had nine different buttons on the control stick, seven more on the throttle, and a bewildering array of gauges, switches, and dials. Worse, in the midst of the stress and confusion of battle, perhaps even as they begin to black out from the high-G turns, pilots must choose the correct sequence of manipulations.

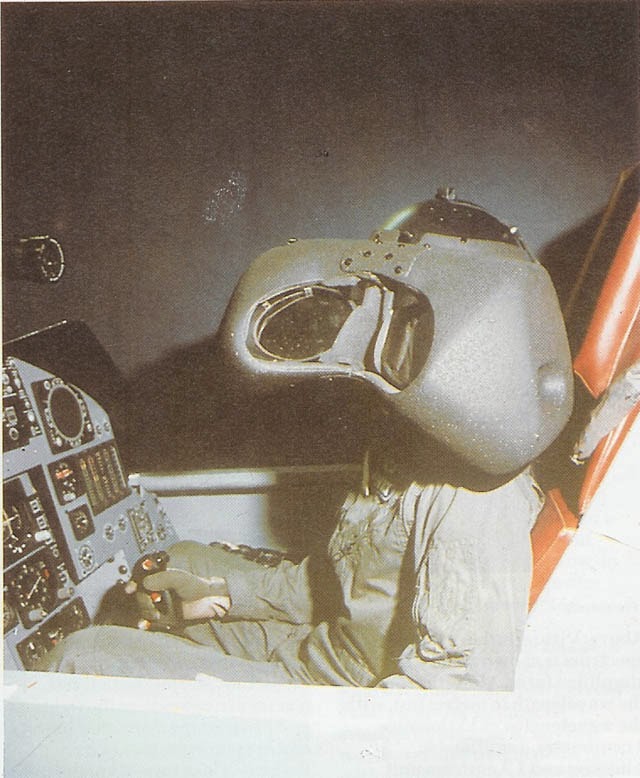

Thomas Furness III had a background in creating visual displays dating back to 1966. He had an idea for how to manage the deluge of information provided to pilots. He succeeded in securing funding for a prototype system to be developed at Wright-Patterson Air Force Base in Ohio. The Visually Coupled Airborne Systems Simulator (VCASS) was demonstrated in 1982. Test Pilots wore the Darth Vader helmet and sat in a cockpit mockup.

VCASS included a Polhemus tracking sensor to determine position, orientation, and gaze direction in six degrees of freedom. It had one-inch diameter CRTs that accepted images with two thousand scan lines, four times what a television uses. It totally immersed the pilot in its symbolic world, a world which was created to streamline the information to be presented to the pilot.

The VCASS system eventually led to the development of The Super Cockpit program in 1986, which Tom describes as a system where you “put on a magic helmet, magic flight suit, and magic gloves” and then you were imported into an immersive virtual world. The Super Cockpit was the main project that Tom worked on all throughout the 80s, but there’s not a lot of direct information about it on Tom’s website beyond some old press articles that aren’t online. This brief academic article submitted to the Proceedings of the Human Factors Society in 1986 gives some of the most specific details I could find on how it included features such as “head-aimed control, voice-actuated control, touch sensitive panel, virtual hand controller, and an eye control system.”

The Encyclopedia Britannica has the following to say about The Super Cockpit:

From 1986 to 1989, Furness directed the air force’s Super Cockpit program. The essential idea of this project was that the capacity of human pilots to handle spatial information depended on these data being “portrayed in a way that takes advantage of the human’s natural perceptual mechanisms.” Applying the HMD to this goal, Furness designed a system that projected information such as computer-generated 3D maps, forward-looking infrared and radar imagery, and avionics data into an immersive, 3D virtual space that the pilot could view and hear in real time. The helmet’s tracking system, voice-actuated controls, and sensors enabled the pilot to control the aircraft with gestures, utterances, and eye movements, translating immersion in a data-filled virtual space into control modalities. The more natural perceptual interface also reduced the complexity and number of controls in the cockpit. The Super Cockpit thus realized Licklider’s vision of man-machine symbiosis by creating a virtual environment in which pilots flew through data.

Tom left the Air Force soon after The Super Cockpit program, and started to bring some of these virtual reality technologies to the wider world in what he characterizes as beating his swords into plowshares. One of the technologies that he helped to invent was the Virtual Retinal Display technology where photons are scanned directly onto the retina. Because the patent has now expired, this is some of the technology that Magic Leap is partially based upon.

In an article in Aviation Today from 2001, Tom says that they licensed out the virtual retinal display technology to Microvision in 1993 so that they could commercialize it for the next-generation Super Cockpit called the “Virtual Cockpit Optimization Program.”

John R. Lewis, an employee from Microvision, suggests in this IEEE Spectrum article that Tom was working on similar scanned-beam technology while working on the Super Cockpit program and gives some more details as to what became of the Super Cockpit program:

The military gave scanned-beam technology its start in the 1980s as part of the U.S. Air Force’s Super Cockpit program. Its team, led by Thomas A. Furness III, now at the University of Washington, Seattle, produced helmet-mounted displays with an extremely large field of view that let fighter pilots continuously see vital data such as weapons readiness. The displayed information moved with the pilot’s head, giving him an unobstructed view of what was going on in front of him and helping him to distinguish friend from foe.

There are a number of Microvision press releases about the continuation of the “Virtual Cockpit Optimization Program” into the early 2000s, but it’s unclear whether the virtual retinal display or augmented reality helmets ever moved beyond the prototype and proof-of-concept stage into full operational use. It’s likely that the military uses of augmented and virtual reality have continued in training, but it’s unclear to what extent the technology has continued to develop and potentially be used within operational systems.

Tom talks about how he thinks that the virtual retinal display technology has the potential to solve some of the problems around the vergence-accommodation conflicts in pixel-based virtual reality head-mounted display technologies. He also shared his surprise that shooting photons directly into the retina can actually allow some people with impaired vision to see, and so he started to develop some virtual retinal display peripherals designed for people with impaired vision.

Finally, Tom talks about his vision for turning living rooms into classrooms with the Virtual World Society. He imagines a subscription-based program that could be used to fund the development of free educational virtual world environments that families could explore with their children and potentially help solve some of the world’s most intractable problems. Tom says that “When you put people into a place, you put the place into people.” If you design a good enough virtual world, then it’s possible that the people who experience it will never forget it, because VR allows for a whole-body learning experience, and it is amazing what can be retained.

Tom is a visionary of virtual reality, and it was really inspiring to be able to learn more about the history and what he sees as the ultimate potential of VR to be able to appreciate what we have in reality and to continue to learn, grow, and collaborate with each other to make the world a better place.

Theme music: “Fatality” by Tigoolio

Rough Transcript

[00:00:05.412] Kent Bye: The Voices of VR Podcast.

[00:00:11.935] Tom Furness: My name is Tom Furness, and currently I'm, among other things, I'm a professor at the University of Washington in the College of Engineering. But prior to that time, I spent 23 years working for the Department of Defense. And that's where we really birthed the early days of virtual reality. Really, there were sort of three initial guys that were involved in it, including Mort Heilig that did the Sensorama. And this was in the early 60s. And then Ivan Sutherland, who did some work with 3D displays that were head-mounted and could be immersive. And then I was working on fighter cockpits. So each of the three of us had different motivations for getting into it, but I was there at the beginning, in the early days, and probably spent more time continuously working on VR than anybody else in the world, because I've been at it for 50 years, and seen various ups and downs as we went along, and that's me.

[00:01:02.267] Kent Bye: Great, so how did you first hear of, say, like, the Sword of Damocles? And, you know, tell me about your experience of first experiencing that.

[00:01:09.113] Tom Furness: Okay, the Sword of Damocles actually happened about the same time I was working on virtual cockpits. We actually met up at a conference. One of Ivan's students with the Sword of Damocles, they were explaining what they were doing at the University of Utah when I was explaining what I was doing in the U.S. Air Force. And it turns out there were a lot of similarities, although different motivations for doing it. Certainly, Ivan's motivation had more to do with how do you interface with computers. Mine was solving problems in airplanes, in fighter airplanes, and how we needed to tap this three-dimensional capability of humans in order to do that. And in the process, invented this whole family of helmet-mounted sights, tracking systems, and displays. And so that I did for 23 years, and then since that time, as an academic, I've continued this work here at the University of Washington for another 26 years now, and we've also done a family of technologies, both the hardware, software, and human factors kind of things to help create this field.

[00:02:08.977] Kent Bye: Yeah, when I talked to Henry Fuchs at IEEE VR, he talked about how flight simulations were actually a very big driver of virtual reality in terms of creating these immersive environments. And so, what was it that you were actually working on with the Department of Defense with these head-mounted displays?

[00:02:25.037] Tom Furness: Well, certainly simulation was a big promoter of using synthesized worlds, you know, to train people. But I was working on the real airplanes, you know, what was actually going in the air and helping pilots to do the interfacing. And we had maybe four different problems we were trying to solve. The first problem had to do with how do you aim the systems on the airplane without aiming the whole airplane? So there was an aiming problem. Then there was a problem having to do with how do you represent information from sensors, especially imaging sensors, where you had a low light level television or a forward-looking infrared and you're trying to somehow communicate that to the pilot, but there wasn't enough space in the cockpit to put a display that was large enough so you could actually see the image. So there was a problem with displays, and then a problem with complexity, with all the controls and displays in the cockpit, and how do you make sense of them, operate them, especially when you're busy. And so there are these kind of problems I was trying to solve, and that's when, in 1966 and 67, 68, there was this family of control systems, head-tracking systems, and head-mounted displays that I developed that we demonstrated and tested in real aircraft to see how we can use the head position of the pilot to aim things, aim systems on the aircraft, how we can use these virtual displays to take the place of any kind of physical display in the cockpit, because you can't make them large enough. But you can make the virtual display any size you wanted to. So this gave us a better way of coupling with the systems on the airplane. And then eventually we put these two together, the tracking along with the display, and that was basically VR. And then we now stabilized information in space. And then we started using that later as training simulators.
It actually was engineering simulation for the real airplane first, and then later on became part of the training simulations.

[00:04:12.201] Kent Bye: Yeah, and one of the other interesting things that I found at the IEEE VR is that, you know, there was kind of like an explosion of VR in the 90s, but yet there's kind of like the history of what was actually happening with VR. It's kind of dark, you know, either in industry or within the military where we don't have a lot of visibility into what was actually happening. So, what can you tell us was happening from the late 60s into the 90s with VR?

[00:04:35.016] Tom Furness: Well, one of the big programs that I was involved in, the military side, was the so-called Super Cockpit. This is a cockpit that you wear, and basically when you put this cockpit on, a magic helmet, magic flight suit, magic gloves, you're imported in a virtual world. And we were doing this in the late 70s and early 80s. We were actually demonstrating these kind of concepts. So I worked on that systematically, the visual, the acoustic, the tactile, all these things, eye tracking, all these things throughout that period of time through the 80s. But most of it was in the military and when I left the military, I was hoping that I could beat my sword into a plowshare and take these technologies and use them for other applications, and education, and medicine, and business, and entertainment, and things like that. And that's when I started the lab here at the University of Washington, the so-called HIT Lab, Human Interface Technology Lab. And so there was a lot going on with government funding behind the scenes in the military. And then I left that and then to start my own lab to do things on the outside. And we were booming for a while through the 90s, a lot of other stuff going on. But it was clear that it wasn't really practical for consumer use because it's too expensive. Furthermore, the technologies for generating the content were really sort of lame and expensive and slow. So the real-time graphics that we needed to create usable virtual environments just didn't exist. And the ones that we could get to work reasonably well were very expensive. You know, hundreds of thousands of dollars. So the reason that we arrived at this sort of winter time for VR, as we approached 2000 and up until the Oculus Rift really appeared, was because of really we just didn't have the constituencies we needed of commercial off-the-shelf technology. We didn't have a killer application either, which would pull this.
You know, we thought, well, gaming surely will pull this along, but it didn't because the game developing companies said, you know, there's not going to be enough of those units out there for anybody to use, and they're too expensive anyway. And so that was, we didn't have that pull, significant pull, the killer app for it. So there was a downtime there, but with the birth of this new resurgence that was led by the Oculus Rift, I mean, there's nothing new there. I mean, the technology is basically the same as what we were playing with 20, 30, some cases 40 years ago. But what we do have now is the computational technology. So we can now render things on cell phones that we weren't able to do in a $1 million Silicon Graphics machine. So that's been the big enabler that we can now do this. And people are ready for it. People are ready for VR, I think. Certainly there are early adopters that are chomping at the bit. But it will happen. And we see this young generation of kids, you know, that already are so technology savvy with their phones and their iPads and, you know, the games that they're playing on the game platforms that they want it. And that's going to be the driver now, I think. People really want it. And it's affordable and pretty good. The technology is pretty good.

[00:07:54.116] Kent Bye: Since you started the HIT Lab, what would you say are some of the big contributions to the field of virtual reality that you've been able to make?

[00:08:02.824] Tom Furness: We worked in sort of all aspects of VR. Certainly the hardware side, in terms of the display technology, the acoustic technology, the software in terms of operating systems. For example, we built the first AR toolkit for augmented reality and started that company. A lot of applications in medicine, demonstrated in medicine and in education, we proved that it works. It really worked well. But probably if we look at the biggest contributions, that would fall into maybe three or four, I would think, that are the transformative ones. Number one is the virtual retinal display. The virtual retinal display transformed display technology. That's where we scan an image directly on the retina of the eye. You aren't looking at a screen through optics. It is going directly to the retina. And that gives you this unprecedented quality of the image in terms of resolution and luminance and color saturation, things like that. Just really amazing. But then again, that patent was issued in '95, and now it's expired. And so it's been around for a while, but it was a huge contribution, and we're going to see more and more of that now. As a matter of fact, we are seeing it. We're seeing it emerging in things like the Magic Leap technology. And Magic Leap is fundamentally based upon that original work in the virtual retinal display. So there's hardware contribution. I think the virtual retinal display is one of those. One of the things that happened with the virtual retinal display is we discovered, by accident, that people who have certain forms of low vision can see with it, whereas they couldn't see otherwise. And this was another breakthrough and took us off in an entirely different direction of exploring how we can use the virtual retinal display as a prosthesis for people who have low vision. And that's a stumble upon kind of thing. Another stumble upon kind of thing was pain.
We got into using virtual reality as a way to distract patients while they're undergoing treatment for wound care and burn victims, things like that, and for PTSD. I don't know if you've interviewed with the DeepStream people and things like that. That all came out of the, those are my students, and they developed that technology. Then, of course, another area was in education. We found in experiments we did in schools, with elementary schools, secondary schools, as well as university students, a profound impact in the way you learned in virtual reality. And one of the main mechanisms for doing that is that when you put people into a place, you put the place into people, like you never forget it. So if you go into a virtual world, a good virtual world, you'll never forget it. And for educational applications, having that sort of that connection to the brain, it's like writing on the brain with indelible ink, permanent ink. It's a very powerful tool, and furthermore it allows students a whole body learning experience, because the pedagogy we use today doesn't work for all the students. The students are able to get in and manipulate things and touch things, even though they're abstract, like atoms in an atom building world. It's amazing how they learn and what they can retain. So I would think that those are sort of the transformative things. A few of the transformative things, certainly we did a lot of work in motion sickness, simulator sickness. That was a big deal and things having to do with surgical simulation. A lot of medical applications for the technology.

[00:11:28.068] Kent Bye: Yeah, this virtual retinal display is kind of like shooting photons directly into the eyeballs. How did that originate? Is that something that was funded by the government, the military, or what was the application of that technology originally?

[00:11:42.221] Tom Furness: Okay, well the virtual retinal display came from my desire to solve some problems with traditional displays. The problem with traditional ways of generating virtual images, you started off with a real image to begin with, and then somehow you magnified it and collimated it and projected it so it appeared to be a virtual image. But it's all based upon that original image. Now, if you wanted to make the picture big, immersive, that kind of thing, that means you're expanding or magnifying all those individual pixels, making them big. You are indeed able to get wide field of view, but you sacrifice resolution. So the image isn't very clear. In order to make it clear, you have to pack in more and more picture elements if you use that kind of paradigm. So it was clear that there were real limitations of how many pixels you could pack into an LCD device or an OLED device or something like that. Furthermore, the more pixels you packed in, the less contrast you could get and the less luminance you could get out of them. So, we were basically throwing out the baby with the bathwater. With matrix element displays, we were sacrificing a lot to get the field of view. So, I was trying to come up with a solution for that. And I realized that, you know, all we're trying to do is get photons onto the receptors in the retina. There are other ways to do that. Why don't we just shoot them directly and not start off with a screen to begin with and just have a little micro scanner that takes modulated lasers and you just pipe them directly to the retina. Now these are very low energy lasers. I mean we're not talking about any kind of damage to the retina at all. I mean it's like less energy than you have when you walk outside in daylight. But, we did manipulate them in such a way that could be very wide field of view, high resolution, and high luminance all at the same time.
With a fraction of something that's very small that you can ultimately run on a hearing aid battery. So that was my impetus for doing it and a lot of people said, it'd never work. This will never work because there's no persistence. You know, you're scanning a beam across these receptors and since there is no persistence like you'd have with a liquid crystal display or something like that, they won't be able to see it. Well, they were wrong. It worked and worked very well because there is persistence in the eye itself that we're using. So, I think that the motivation again was to solve some problems with traditional displays and this worked. And I don't think it's been, you know, it's not really been adopted from a widespread standpoint because we have all these screens now that are on cell phones. And they're getting pretty good in terms of resolution. But still we're going to run out of picture elements if we want to go to really wide field of view. Because we need to get way beyond what we even see today on the floor here in terms of the instantaneous field of view of these displays.
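
The trade-off Tom describes between field of view and resolution on a pixel-based display can be put in rough numbers. The sketch below is illustrative and not from the interview: it assumes pixels are spread evenly across the field of view, it uses the common rule of thumb that 20/20 vision resolves about one arcminute (roughly 60 pixels per degree), and the panel width and fields of view are made-up examples.

```python
def pixels_per_degree(horizontal_pixels: int, fov_degrees: float) -> float:
    """Average angular resolution, assuming pixels are spread evenly across the field of view."""
    return horizontal_pixels / fov_degrees


def pixels_needed(fov_degrees: float, target_ppd: float = 60.0) -> int:
    """Horizontal pixels per eye needed to reach target_ppd across the given field of view."""
    return round(fov_degrees * target_ppd)


if __name__ == "__main__":
    # Hypothetical panel: 1080 horizontal pixels per eye, magnified to two different fields of view.
    for fov in (40.0, 100.0):
        print(f"{fov:.0f} deg FOV: {pixels_per_degree(1080, fov):.1f} px/deg")
    # Pixel count required to hold roughly 20/20 sharpness over a wide field of view.
    print(f"Pixels for 60 px/deg at 100 deg: {pixels_needed(100.0)}")
```

Under these assumptions, magnifying the same panel from 40 to 100 degrees cuts the angular resolution by more than half, which is the "sacrifice resolution" effect described above.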

[00:14:28.656] Kent Bye: Yeah, have you tried out the Magic Leap technology then?

[00:14:31.670] Tom Furness: I have not, personally. Although they're using some of my patents, I have not seen it. But my colleagues, and even some of my former colleagues at the HIT Lab who are now working on it, tell me about it and say it's really, I'm hoping to have a visit to Magic Leap soon. Have you? No. Yeah. But the light wave technology, which pretty much originated with the virtual retinal display, is what they're using. Yeah.

[00:14:56.780] Kent Bye: Yeah, digital light fields. How does that actually work in terms of capturing a light field?

[00:15:02.513] Tom Furness: Well, I mean, basically what you have are ways to channel light. And when you talk about doing things with coherent light, you can manipulate that coherent light a lot easier in terms of creating the shape of a light wave front. One of the problems that we have in traditional virtual reality displays is that all the picture elements are collimated to some image distance, some viewing distance. Typically, it's optical infinity. So that means you're putting a lens in front of your initial image source to make it appear as if it is at optical infinity. So if you have a stereographic display or a binocular display, you're creating vergence cues between the two eyes in addition to the accommodative cues that are coming from the focus of each eye individually. Now, when you put objects up close to you, what's happening, the light rays are coming in parallel. So one part of your brain says, it's in the distance. But the other part of the brain says, no, it's not because I have these vergence cues between the way the eyes are focused and close and the way they're turned in toward the object that's closer to you. So there's this conflict between the visual accommodative cues and the binocular cues, which basically cause problems. It's not right. So the light wave technology lets you manipulate that light wave front so you can put the pixel at any distance. And oh, by the way, you're using the same retinal scanning technology that I talked about before. And in this case, using scan fiber. You're scanning a fiber that's being manipulated instead of a mirror that's actually steering the beam around, but it's the same effect. One advantage of going with the fiber is you can get very wide fields of view with that approach, but you can also manipulate the light wave front.
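
The conflict Tom describes between vergence and accommodative cues can be expressed with two standard optics quantities: the vergence angle between the eyes and the focus demand in diopters (one over the distance in meters). The following is a minimal sketch under stated assumptions, not anything from the interview: the function names are hypothetical, the 63 mm interpupillary distance is a typical adult value chosen for illustration, and the display optics are assumed to be collimated to optical infinity unless another focal distance is passed in.

```python
import math


def vergence_angle_deg(distance_m: float, ipd_m: float = 0.063) -> float:
    """Angle between the two eyes' lines of sight when fixating a point at distance_m."""
    return math.degrees(2.0 * math.atan(ipd_m / (2.0 * distance_m)))


def focus_demand_diopters(distance_m: float) -> float:
    """Accommodation needed to focus at distance_m; optical infinity is 0 diopters."""
    return 0.0 if math.isinf(distance_m) else 1.0 / distance_m


def cue_conflict_diopters(object_distance_m: float,
                          focal_distance_m: float = math.inf) -> float:
    """Mismatch between where the eyes converge and where the optics make them focus."""
    return abs(focus_demand_diopters(object_distance_m)
               - focus_demand_diopters(focal_distance_m))


if __name__ == "__main__":
    # A virtual object rendered at several distances on a display collimated to infinity.
    for d in (0.5, 1.0, 2.0, 10.0):
        print(f"object at {d:>4} m: vergence {vergence_angle_deg(d):.2f} deg, "
              f"conflict {cue_conflict_diopters(d):.2f} D")
```

In this model the mismatch grows as the virtual object gets closer, and it drops to zero when the focal distance matches the object distance, which is the effect Tom attributes to being able to place each pixel at any distance.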

[00:16:50.169] Kent Bye: Do you foresee this digital light wave technology with the virtual retinal display being more of a sustainable solution in terms of eye strain or being in virtual reality for an extended period of time?

[00:17:00.978] Tom Furness: I believe so. I think it solves some problems. And one of them I just mentioned, you know, the accommodative cue versus the vergence cues. And so therefore, it's a more natural display. But at the same time, I think there's things we don't know. You know, if you have people wearing these things eight hours a day, seven days a week, don't know because it's not been done yet. And so some of the longevity side of things, as in the case of regular VR, you know, we really just don't know when you have a persistent virtual environment you're in day after day, what kind of effect that's going to have. So, I think that there are some attributes of it. In the end, the cost can be substantially less than some of the other approaches. But with the economy of scale that we have with the screens that are used in phones, you know, they're getting down to a fairly low cost for a reasonable resolution and luminance, and you look at that through some optics, both reflective and refractive optics, it's not too bad. But still you have the problem of the conflict between the cues. So it depends upon what people are willing to put up with in terms of these kind of visual abnormalities in order to experience a virtual world.

[00:18:13.561] Kent Bye: And so what is it that you're working on now with the virtual worlds?

[00:18:17.695] Tom Furness: Okay, actually there are a number of projects. I'm still interested of course in the virtual retinal display and there are a number of companies who are actually working on that technology that I'm consulting with to help them along the way. One of the things that fascinates me is that we need to have a better operating system for virtual reality. We're typically running on Windows platforms or maybe even a Mac OS. And those were never intended to be real-time. You know, they are pretty good. You know, they have graphics processors, multi-core CPUs and things like that. So that's pretty good. But what we really need is a robust real-time system. And one of the companies I'm working with is Envelop VR. And I'm a senior advisor there because I believe that that is a key to getting a lot of these applications really running better and fast. Envelop VR is putting together that wrapper, that super glue, that brings the computational resources that are available in a platform into something that will run in real time. And that's what we're going to really need. And I see that as sort of a seminal advancement. It sort of underlies a lot of the things we see here that everybody can use, more a universal kind of addition to other operating systems that will make VR more viable. So that's another area I'm interested in. Another thing I'm trying to do is explore the far reach of this, particularly deeply coupling. How do we deeply couple the human to these incredible machines, and especially looking at other physiological signals at the same time? Looking at brainwaves, looking at eye movement, looking at combination of speech, things like that. And I think that deep coupling is an area where we're going to get this fusion of the brain that's truly an unlocking of intelligence as we're intimately connecting with these machines. And when you add AI to that, it can be a wonderful tool.
I'm also exploring these applications that are going to help heal. I think that VR can do a lot to heal, not only that we see in pain, but for diagnostics, for medical simulation, and for reducing stress. I mean, a lot of disease is a result of stress that people have, and I think that we can build hollow beds that are places for people to recover from some of these traumatic things that have happened to them in their lives. Now, all of these things are, I think, leading up to my Virtual World Society, which is really what I want to do and am in the process of doing. The Virtual World Society is a not-for-profit society that really creates a community of people who want to work together to solve these pervasive problems. And in particular, what I want to do is link these independent developers who really are fascinated and, if nothing else, addicted to virtual reality because they experience it and they say, this is what I want to do. This is the future. This is exciting. And a lot of these folks, albeit they have a lot of energy and a lot of smarts, they don't necessarily know what to do with it. And what I would like to do is point them in a direction toward these educational and medical applications with funding that helps them actually fulfill these things. Now, another group that goes along in parallel with this are the young people, kids that are in their homes. I would like to turn living rooms into learning rooms, into classrooms, where families learn together about these pervasive problems and become part of the solution. Rather than the kids going upstairs in their room and playing Grand Theft Auto, that they actually are able to go into worlds with their parents and figure out how we're going to solve the problem of AIDS in Africa, how we're going to look at the problems of renewable energy, global warming, all these things, desolating scourges that we see. 
And I believe that it's not too early to get kids in elementary school thinking about those things and giving them experiences within their homes, because that's where most learning takes place anyhow, is in the home. Give them those kind of experiences and have the family learn together. The Virtual World Society is intending to do that, where we'll have members, subscribers, that together, let's say a dream, this is my dream, 10 million families around the world that are members of the Virtual World Society who are willing to pay a subscription of $30 a year to be a member. And for that, one of the member benefits is getting access to these worlds that have been developed by these indie developers. Well, you multiply 30 times 10 million, and you get $300 million, right? What could you do with $300 million with a lot of talented people who want to help and help the world be a better place in providing this content and this community to come together and work on it? So it's really bringing together a lot of little fish to solve these problems. It's a grassroots kind of thing. I don't want to be an old guy that's standing in the way of this, but I want to try to help provide a bridge so that these young folks who really want to do it have a chance to do it and also are able to eat at the same time. And I believe that this is a formula that will work, and we have a lot of interest and attention. So it's just beginning. So if people are interested in this, they ought to Google virtualworldsociety.org and get involved.

[00:23:45.022] Kent Bye: So you've been involved in virtual reality for over 50 years. As you said, probably the person that's been working on it the longest in the world. You've had a lot of VR experiences, and maybe you could tell me what has been some of the most compelling experiences you've had in VR.

[00:24:01.297] Tom Furness: Ah, the most compelling experiences. Wow. I have to say that when I turned on that virtual cockpit display for the first time, this was the so-called VCASS, we call the Visually Coupled Airborne Systems Simulator, where we generated a 120 degree field of view, four degrees of which in the middle was in stereographic. It was all head stabilized and we had the virtual cockpit projected and all the stuff in space. It blew me away. I said, oh my goodness, what have we done? I mean, it was profound. And chills just ran up and down me. I said, oh my goodness, we're on to something here that is really big. And this was like in, you know, 79, 80, 81 time period that that happened. I mean, we'd been playing around with it before. We'd been playing around with head-stabilized, with virtual reality, but not until that time did we have the impact of field of view. And it was a big deal because now, instead of looking at a picture that was projected on a head-mounted display, we were in a place. It's like a hand reached out of it and pulled us inside. And now you didn't realize you were sitting in a cockpit. You were in this other space, and it was interactive. So even as it was a little crude at the time, you know, it told me that this was going to be the future. That was an epiphany. I knew that it was going to be neat, but I didn't realize how neat it was going to be. So that was one of those things. Another time was when this guy came to my lab and looked at my virtual retinal display, and he told me he could see with his blind eye. And I said, what have we done here? And it turns out, you know, that the way that we were structuring this light, piping it into the receptors, was getting through a lot of scar material, scar tissue, and things like that, and getting into receptors that could still operate, but were obscured by some other problem. 
And also, even patients who had degenerative diseases of the retina, age-related macular degeneration, retinitis pigmentosa, these kinds of things, diabetic retinopathy, and they were able to see, and not, you know, perfectly, but they were able to see better than they would otherwise. That was another profound experience. But probably the most fun one was to see the kids. You put them into a virtual world and they just jump up and down. And, wow, this is so cool. And that is the term we look for. This is so cool.

[00:26:25.724] Kent Bye: Great. And finally, what do you see as kind of the ultimate potential of virtual reality and what it might be able to enable?

[00:26:33.631] Tom Furness: Well, the ultimate reality is reality. You know, nothing takes the place of the real world. And what virtual reality will help us realize is how incredible the real world is. We can appreciate its parts better than we could otherwise. But there's some things you can't do in a real world, like fly and walk at the speed of light and turn yourself into a teapot and visit with people that are around the world that you wouldn't be able to touch or see otherwise. Go to places you wouldn't be able to go in the world. So I believe that this transportation system for our senses is really what it's going to be, and it will unlock intelligence. It will unlock intelligence and link minds. And I see this future as making the world more a village and more a community of cooperation. And with this recognition and sharing, I mean, we find out that people from other cultures, other ethnic backgrounds, anywhere in the world, when it comes right down to it, we're the same. In terms of our motivations, what we really want out of life, what we want out of our children and their futures, we find out they're pretty much the same. And that will help us realize that boundaries aren't important. Governments may not even be that important, especially if they get in the way of that. And so I think we'll see a new world because of this connectivity, a new world emerging, which is good. I think it's much better than what we have now in terms of these arbitrary boundaries we place around things. You know, astronauts tell us when they're in a space station, they don't see those boundaries on the Earth. They just see this beautiful blue orb, right? And, wow, that's cool if we could dissolve those boundaries and work together as an earth and as a world of so many billion people, you know, that are cooperating in solving these problems. 
If one of our brothers is having a problem, let's get together, no matter where you are in the world, and let's work on that problem. Yeah.

[00:28:31.627] Kent Bye: Great. Well, thank you so much.

[00:28:33.328] Tom Furness: Thanks for the opportunity.

[00:28:35.106] Kent Bye: And thank you for listening! If you'd like to support the Voices of VR podcast, then please consider becoming a patron at patreon.com/voicesofvr.