I interviewed The Golden Key co-directors Matthew Niederhauser & Marc Da Costa remotely during SXSW XR Experience 2024. See more context in the rough transcript below.

Here is all of my coverage of projects in and around SXSW XR Experience 2024:

- #1360: Sneak Peak of SXSW XR Experience Projects, Events, & Lounges with Programmer Blake Kammerdiener

- #1362: “The Tent” Tabletop AR Mixes Photogrammetry, Volumetric Capture, & Theatre in Modern-Day Fairytale about Unhoused Crisis

- #1363: DIY 360 Video for Perspective-Taking and Investigating Murder of Trans Woman in “Her Name Was Gisberta”

- #1364: Step into the Movies with “The Vortex Cinema” Blending Cinematic Storytelling, Gaming, & Escape Room Mechanics

- #1365: “We Speak Their Names in Hushed Tones” Explores Impact of Migration on Families Left Behind in Poetic Immersive Still Life & Audio Documentary

- #1366: Electric South’s Ingrid Kopp on Increasing Access to Immersive Production Resources to African XR Creators + Tribeca 2019 Program

- #1367: “Escape to Shanghai” Immersive Doc Tells the Story of Jewish Refugees who Fled to China to Escape the Holocaust

- #1368: “Walk to Westerbork” Immersive Doc Shares Remarkable Story about a Dutch Jewish Holocaust Survivor Who Defied the Odds

- #1369: Interactive UN Doc “Dreaming of Lebanon” Blends Interactive Oral History, 360 Video, and Speculative Worldbuilding

- #1370: “Madame Pirate: Code of Conduct” Blends Spatial Representations to Tell the Story of Most Powerful Pirate in History

- #1371: “Pirate Queen: The Forgotten Legend” Fuses Escape Room Mechanics with Environmental Storytelling & Embodied Gameplay

- #1372: “Last We Left Off” 360 Video Plays Switches Between D&D Imaginal Realms with Physical Reality, & Exhibiting with Apple Vision Pro

- #1373: Interactive Biopic Doc on Opera Singer “Joseph Rouleau: Final Encore” that Mixes Modalities

- #1374: Telling Stories of Indigenous Leaders with OurWorlds.io’s “Chief” on Apple Vision Pro

- #1375: Unique 2D Hand-Drawn Animation Technique with “Tadpole” Leads to a Provocative Immersive Story

- #1376: Indie Musician Roman Rappak’s Annual Mixed Reality Performance Experiments & Expansion into “Detachment” Immersive Story

- #1377: Immersive Producer Katayoun Dibamehr’s Journey to Becoming an Award-Winning Producer at Floréal

- #1378: Anagram’s Mental Health Series Continues with Preview of “Impulse” Mixed Reality Story about ADHD

- #1379: “Maya: The Birth of a Superhero” Evolves Storytelling Grammar with Magical Realism, Dream Logic, & Interactive Embodiment

- #1380: “Reimagined Volume III: Young Thang” Adapts a Nigerian Folktale while Refining the Grammar of Spatial Storytelling

- #1381: “Soul Paint” Wins SXSW Special Jury Prize for Innovative Body Mapping Technique to Spatially Draw Your Emotions

- #1382: Interactive Generative AI Storytelling Installation “The Golden Key” Wins Top Prize at SXSW Leveraging Archetypal Folklore Motifs

- PREVIOUSLY COVERED PROJECTS

- [PART 1 from Tribeca Immersive 2023 – Part 1 + Part 2 debuts at SXSW] #1244: “Maya: The Birth” Animation Uses Mythic Symbols & Magical Realism to Explore Menstrual Taboos

- [from Venice Immersive 2023] #1272: Kickoff of Venice Immersive 2023 Coverage with Winner “Songs by a Passerby” and Atmospheric Storytelling

- [from Venice Immersive 2023] #1276: Beautiful “Emperor” Explores Aphasia Communication Gaps with Compelling Interactions

- [from Venice Immersive 2023] #1287: “Letters from Drancy” is an Incredibly Emotional and Powerful Story About the Holocaust

- [from Venice Immersive 2023] #1292: Pioneering the VR Essay with “Shadowtime” Critiquing Sci-Fi Dystopic Aspirations of VR

- [from Venice Immersive 2023] #1293: The Personalized AI-Driven “Tulpamancer” VR Sandpaintings with AI Text to Audio & VR Workflow

- [from Venice Immersive 2023] #1303: A Deep Dive into Breaking Down the Experiential Design of “The Imaginary Friend”

- [from DocLab 2023] #1331: Recreating Spatial Presence in Caves with Point Clouds & Spatial Audio in “Buried in the Rock” Documentary

This is a listener-supported podcast through the Voices of VR Patreon.

Music: Fatality

Podcast: Play in new window | Download

Rough Transcript

[00:00:05.452] Kent Bye: The Voices of VR Podcast. Hello, my name is Kent Bye, and welcome to the Voices of VR Podcast. It's a podcast that looks at the structures and forms of immersive storytelling and the future of spatial computing. You can support the podcast at patreon.com slash voices of VR. So this is the final episode of my series of looking at different immersive experiences from South by Southwest 2024. And this is actually with the Grand Jury Prize called The Golden Key, which is a piece by Matthew Niederheiser and Mark DaCosta, which they've previously showed a piece called Topalmancer that I covered at Venice Immersive back in episode 1293. So they're using a lot of AI technologies from both large language models and generative AI. In the case of Topalmancer, which they had another iteration there at Southwest Southwest this year that had a little bit more streamlined interface and kind of a self-service model where Topelmancer was essentially like you sit down at this old 1980s era computer, you answer a bunch of questions about your past, present, and future, and then it creates a very specific virtual reality experience just for you. It's translating your inputs into these prompts back into audio narration, tied in with different visuals from prompts. So it's like taking stable diffusion and creating these echo rectangular images that are being put into this whole VR experience. And it's basically an experience just for one person. So lots of cutting edge technologies to show what's possible with generative AI and large language models. So when they were at Venice, they were really thinking about, okay, geez, it'd be really nice to be able to have like a lot more than like 150 or 300 people to be able to see Total Bombing Answer and just get like a lot more throughput to see what's possible with some of these different immersive technologies. And so they had a three screen installation with Golden Key that was essentially like this never ending story that was being told. And so it's starting with a corpus of a lot of fairy tales and trying to dive into a lot of the archetypal signatures and patterns and motifs within these corpus of different fairy tales and they have two kiosks where you can go up and answer a prompt we have like 60 seconds to answer a question and from that prompt within the course of like the next five or seven minutes it gets integrated into this kind of ongoing never-ending story that has these like three different panes And there's a whole audio narration that's also taking this kind of like Ouroboros of like different generative AI systems kind of feed into each other with different versions and whatnot. We go into a lot more of that type of architecture in the previous conversation in 1293. In this conversation, we get a little bit more of an update and also what they were able to achieve with the Golden Key, which ended up winning the Grand Jury Prize this year at Southwest Southwest. So that's what we're covering on today's episode of the Voices of VR podcast. So this interview with Matthew and Mark happened on Wednesday, March 20th, 2024. So with that, let's go ahead and dive right in.

[00:02:54.978] Matthew Niederhauser: My name is Matthew Miederhauser. Always a joy to be back on with you, Kent. I am an artist who especially works in experiential and immersive mediums. I've been creating a lot of work in this realm for, I guess, almost the past 10 years at this point, and most recently have been focusing on these type of projects that incorporate artificial intelligence and all these new machine learning tools. And I am also the technical director of Onassis Onyx in New York, an XR studio and production space. and otherwise also co-founded a experiential studio called Sensorian with John Fitzgerald, through which we've done a lot of XR projects in the past and continue to do work to this day.

[00:03:53.098] Marc Da Costa: Great. And my name is Mark DaCosta. It's great to be back together with you, Ken. So I'm an artist and anthropologist. A lot of my artistic practice has been interested in the questions about the relationship between emerging technologies, archives, and human experience. So over the last year or so, Matthew and I have been collaborating on a lot of these projects sort of at the intersection of generative AI and, you know, what is broadly called immersive or XR media. But, you know, I've sort of come to this artistic practice also from as I said, the context of anthropology, in which I did a PhD some years ago. And consequently, I'm always very interested in thinking through and contextualizing these new technologies within sort of the broader social and cultural contexts from which they have emerged. So.

[00:04:52.581] Kent Bye: Great. I'm wondering if you could each give a bit more context as to your background and your journey into the space.

[00:04:58.070] Matthew Niederhauser: So I first started taking apart computers in the 80s when I was a kid to desperately get out of the humdrum of life living on a farm in West Virginia and put on my first VR headset in the mid 90s. But I actually, in college, veered into anthropology as well, and essentially journalism and storytelling, and spent about 10 years in China actually working as a photojournalist. And even back then, I was still doing computational photography, other early visual cameras, back when I had an 8 megabyte SD card with my 2 megapixel camera. But it wasn't until I came back to New York after living in China and joined New Ink in its first year that I was re-exposed to specifically immersive media, especially with a large cohort of other really amazing creators who were at New Ink at that time. And so essentially, I had a very big jumpstart on knowledge about and how to build custom 360 cameras. And so I was immediately very into camera-based XR production, how to do 360 stereoscopy, photogrammetry, volumetrics. And that was my way into this world, along with John Fitzgerald with Synthorium, which was sort of my main focus back then. And we created projects, especially social VR projects, where people would interact with each other. We got to produce Rachel Rawson's piece, The Sky is a Gap, that was at Sundance. Worked with Gabo Aurora on Zicker, which was like a four-person VR documentary interactive piece. And a bunch of other different pieces that took us to Tribeca, Sundance a third time with Wesley Allsbrook and Elie Zanonieri and John again. And. you know, when the pandemic hit, we just had started building Onassis Onyx, which is this really amazing artist space in New York. And that was actually about the time that I ran into Mark again and immediately struck up this conversation about our mutual interest in artificial intelligence. And I think that was sort of the Google deep mind was emerging. We were interested in potentially using those tools, although they were very sort of nascent and the results we're getting were still not amazing. Plus, you couldn't do it on your GPU sitting on your desk. And that's when we started coming up with a lot of these ideas in terms of using generative machine learning tools in order to create interactive experiences. And then, especially with the early releases of ChatGPT and a lot of all these other sort of neural network based machine learning models. It's been a bit off to the races and the golden key, what we just did at South by Southwest was the fourth piece that Mark and I have now done in the past, let's say 18 months. But before that, I will hand it back over to Mark and dive more into his past.

[00:08:14.107] Marc Da Costa: Yeah, absolutely. So yeah, I suppose I have sort of a bit of a roundabout and circuitous route into this XR world. So, you know, as I mentioned, I was doing a PhD in cultural anthropology where I was spending a lot of time studying climate computer modeling and also Antarctic research science and sort of the ways in which this world of big computers and this world of, you know, human beings going to the end of the planet and drilling ice cores and doing things like that converge together to help us you know, really have a sense of the planet and where we are in it and how all the pieces fit together. And while I was in the midst of doing this academic work, I wound up co-founding a data analytics company called Enigma. And this was and continues to be a reasonably large data company in New York City that really in its first moment was very interested in, you know, asking the question, why is there no Google for public data? So there's a tremendous universe of databases out there that government agencies publish that tell us a lot about the world, but which are really difficult to dig into and see the connections and build pictures out of it. And for me there was a very strong connection between the academic, the sort of tech, and additionally the artistic work in this interest of what is data, how does it kind of function in our society, and how does it inform the way in which we go about our everyday lives and experience the world around us. And so, you know, sort of continuing to live with many hats on as an academic, as an entrepreneur, and as an artist, I continued to develop a lot of sort of experimental artworks that were derived from archives and data, and sort of had developed a practice along those lines. However, as Matthew was saying, actually, I think the first, it depends how we define our terms, but, you know, certainly for me, my then really arrival and entrance into the XR world was really when we met in Venice over the summer. You know, I think perhaps I would recast some of my earlier work as immersive in different ways. But it's, I think, I'm sure you would have much more to say about that than I, but it's a sort of interesting, capacious and moving concept from my perspective. And yeah, so I don't know, in any event, that's a little bit of backstory of a rather convoluted life journey to get to this point.

[00:10:51.072] Kent Bye: Yeah. And at Venice, we had a chance to really dig into some of these prior projects that you had a chance to work on and wanted to get a couple of updates, both on Parallels and Topomancer, just because Matt, I know you had a chance to take Parallels to Filmgate International Film Festival in Miami and actually took home a tech prize there. And I had a chance to check it out as well. And then also Topomancer was at Venice, but then you also had a chance to bring it to South by Southwest. So maybe we can start with parallels and if you made any changes to the original iteration of it, when you showed it at Filmgate International and just maybe give a bit more context to that project.

[00:11:28.048] Matthew Niederhauser: Yeah, totally. I can dive into Parallels first. And yeah, it was different. Parallels is a piece where we actually adapt it to make it site-specific for each location in which it's installed. And not necessarily in its hardware or format, which is usually a 2.5 meter by 2.5 meter LED screen with a camera mounted behind it. that sort of creates this slice of the world that the software consistently interprets through prompts that we engineer specifically for that location that dive into the history, local artists, things that we feel reflect even like the current social and political climate in that area. So we were super fortunate to have it on the porch of the Perez Art Museum. and sort of focus it on these like architectural elements there and then moved it across the way to the Frost Science Museum and even did a little bit of some updates overnight to make sure that we had some aquarium features from the Frost Museum and and other pieces. There's certainly some that are more research-based and obviously some looks that are more crowd-pleasing. But it still is like a super fun, impactful piece for people to interact with. And yeah, it was a lot of fun to take it down to Miami. And it's definitely one that we're going to continue to develop. Mark, I don't know if you want to jump in on that one as well about how that project's been advancing.

[00:13:11.951] Marc Da Costa: No, I mean, I think that covers it. I mean, just to kind of put a bow around it and just sort of to say that, you know, for us, you know, Parallels, just to kind of get it back on the table, as Matt said, it's two and a half meter screen installed in a site specific way. And effectively, what it's doing is with this camera that's on the backside, effectively doing sort of a continuous reinterpretation of the landscape and of the sort of people that are passing in front of this camera. And the point of it is to present audiences with an opportunity to sort of have an experience of and be able to interact with how machines are sort of viewing and reinterpreting the landscape. So this was sort of in many respects, an emblematic piece of the kind of work we've been doing insofar as it's trying to connect publics in a sort of interactive and critical way with the logics of these emerging machines.

[00:14:07.780] Matthew Niederhauser: And it should see some new iterations as well, hopefully later this year. It's been sort of a really popular accessible outdoor installation that has been something in terms of like a format that Mark and I have been like super interested in.

[00:14:27.497] Kent Bye: Yeah. And so Topomancer I first saw at Venice, and I'm wondering before we start to dive into the golden key, if there were any other changes that you made to the architecture or how you presented or showed Topomancer because, you know, as you work with these technologies, you create something that works. My understanding was that it was a little bit of like trying to hold it all together and not make too many changes. But yet at the same time, once even you pick it up a number of months later, let's say from like six months later, let's say from September until March, if everything was still working or if you had to make any changes, just because if you put it on the shelf for six months, you know, it may require some changes because of just how quickly everything is moving. And it's always like a little bit of a moving target. So I'd love to hear a little bit of an update of Topalmancer and what you had to do to get it ready for South by Southwest.

[00:15:16.472] Marc Da Costa: Yeah, I'm happy to jump in and kick that off. So South by Southwest, it's interesting, it's actually the third time that we've publicly showed it. So we had it at Venice when it premiered, and then there was another showing of it at the Geneva International Film Festival in November, and then we had just brought it this month in March to Austin. you know, just a kind of a high level there. I think one of the things that was very interesting for us is, you know, Topamancer is a very language-driven project insofar as participants are sitting down, typing out memories and dreams and things into a computer, and then going into a headset, having a VR experience that, you know, is very much so carried by the voiceover also that's sort of pushing it. And it was very interesting for us having it at South by Southwest, because this is the first time we're really showing it to a native English-speaking audience. Certainly in Venice and in Geneva, a ton of people fluent in English, but because it is such a language-driven piece, it was interesting to see how people responded to it in their native language. And, you know, we don't have any idea really like what people are typing or what they're hearing, but we do have sort of high-level ideas or metrics about how long people are spending on sort of various parts of it. And it was very interesting for us to see, you know, I think far and beyond what we had in Venice and Geneva, people taking like the full-time with the questions and really giving more of themselves to it in this sort of way. So this is a very interesting takeaway in terms of how the work has technically evolved. It's interesting, you know, the The first project in this series of AI experiments Matt and I have been working on was called Ekphrasis, and this was an exploration of the poetry of Constantine Cavafy, who was one of the most famous contemporary Greek poets. And as part of that project, we created a sort of artist compendium book. that was very much so focused on the process of making that piece from our dialogues about it, screenshots of the UI, really trying to capture the state of the art in early 2023 of what it meant to work with these things. And I think, you know, as we've been talking about this, We really decided that, you know, Tulpamancer should be more or less like a kind of a locked piece in terms of the looks and the kind of logic of it and something that's very much so a product of its time in a way. That said, of course, in festivals and in public settings, the throughput for how many audience members you can serve is a very important thing for programmers. And frankly, for us as artists, because we want people to actually be able to experience what we make. And so we did make some changes to the kind of like backend infrastructure for Tulpamancer that allows us to support, you know, multiple terminals. You know, as you may remember, there was an old 1980s computer. We bought a bunch of those on eBay and have sort of gotten the ability to kind of scale the staging of it a bit. And we also, you know, as it was presented both in Venice and Geneva, there was sort of a two-room setup where you sit at the old computer, you go into a next room, which is like the VR room. At South By, we had both the headset and the computer sort of on the same desk, and certainly There's a lot of practical benefits to that in a festival context, because it made things much lighter on the docents. Many people could self-serve putting on the headset if it was something they were familiar with, and of course has a smaller footprint as well in terms of space. And you know, it is interesting. I think with these projects, we are increasingly interested in trying to think about how we can remove the external API dependencies. So one thing that did change technically is we now are running the text to speech server locally in addition to the image generation servers. So as we've mentioned before, we use chat GPT for that. project, and so you still have to go to the internet for that. But in terms of thinking about how we even archive and sort of lock these works in a broader way, you know, I think in the future as we do other projects and as the technology develops and it's already, I think there are interesting ways, but we haven't fully explored. I think it would also be interesting to think if there are ways for us to have language generation models also locally, so that it's sort of a complete bounded piece in that way. I don't know, Matthew, if you want to tack on to that.

[00:19:58.154] Matthew Niederhauser: Yeah. I mean, well, according to NVIDIA, we're going to be running 3.5 Turbo on our phones next week. So that might happen. But yeah, as Mark said, I think especially with this move to the other text-to-language model that was local, I think we will and can sort of keep the text-to-text locked with TPT 3.5 Turbo, which is sort of the realm that we were working in at that time. So there is definitely a path forward to keeping that look and feel consistent to how it was built. The tools being made available for us are just coming so quickly right now, and we joked about it a lot. Tulpamancer is so 2023, but it still had I think it's still a clear favorite, still, even at South by Southwest. The Golden Key is amazing, completely different piece and context and setting. But we had four setups of Tulpamancer running at the same time, and we had super impactful reactions to that piece. But yeah, as Mark was saying, we have been playing, well, first of all, harvesting Compaq 3 computers. So, you know, stay away from them on eBay. Everybody out there, they're ours. But yeah, we're hopefully, you know, trying to find ways to do longer term, more substantial installations of the work with cultural institutions. And, you know, at Venice, we definitely had this idea of like, you know, well, there was like the dark laboratory room with the desk, and then you got moved almost into this like bright lab, you know what I mean? And I still do like that format, but it was actually sort of fun combining the rooms in one place, you know? So the headset was sort of like sitting there next to you as you're on this 1980s computer. So there's like this weird juxtaposition already sort of occurring in the first place. And we still have those lighting changes, you know what I mean? So when it prompts you to put on the headset, like the bulb suddenly goes green and I don't know. I've done it a few times where like the lighting changes suddenly and then you're like, Oh, I'm just going to put this on now. So it's almost your VR experience. It's almost like it hits you that much more quickly. And if, especially if you know how to put on a headset and this time we actually moved to a Vive Pro headset just because they are a lot more stable in festival environments, especially low light, you know, where we're doing lighting control. It's not using inside out tracking. but lighthouses. I don't know. I was sort of into that. I mean, in terms of thinking about its next iteration, we're going to hopefully have an opportunity to like build out the scenic even more. You know what I mean? And there's a lot to be done with that, but fundamentally we're trying to keep Tulpa, Mansure in the same space, very 2023, but it was, um, it was really fun to show it at South by Southwest and, and sort of bring it to, uh, Texas. And as I said, like a lot of like. Very impactful, you know, experiences for people and that a lot of amazing feedback as well.

[00:23:24.858] Kent Bye: Great. And so I guess it's a good time to shift into talking about the golden key, which picked up the top prize at South by Southwest XR experience selection. And so Matt, you had a chance to zoom me in a little bit through your phone and cause I was not able to make it out to South by Southwest. So I saw a little bit of it from afar in terms of having like these three big screens and have a couple of different stations where people could enter in text prompts. And then you have this never ending story that's being shown images, like three different iterations of these images, kind of like a modern day fairy tale that's being told, but taking input from the audience. And so maybe you could take me back to where the idea for this began and how you wanted to continue to push forward what was possible with not only increasing the throughput for having lots of people to see these technologies, but also what you wanted to do from the story perspective of, you know, what you were able to achieve with Topalmancer of creating these primed prompts that were able to create a character or at least, you know, have a consistent story throughout the course of Topalmancer. But this is like more of a much broader time scope in terms of continuous story that's being put together. So very curious to hear where you began on the Golden Key.

[00:24:36.485] Matthew Niederhauser: Yeah. I mean, The Golden Key as an idea really actually started at Venice. And we were there setting up Tulpamancer for the first time, stressing out, you know, making sure it was running. And I was also still pondering the absurdity of, you know, the amount of work we've done for a VR experience to be seen by maybe 150, 200 people over the course of a week. But it was maybe more at Venice. It was probably closer to 500. But my initial thought about it was, how can we open it up? How can we use these tools in a new context, large visuals? And even harkening back to some of the stuff I was talking about in my own creative past, I love making social experiences. And don't get me wrong, Philpa Mancer is still this super impactful personal journey. I really do love that piece, but there is a part of me that was about how can we break it open, have people interacting with the piece at the same time. As we were talking there at Venice, this idea of creating this never-ending story that people could move in and out of, which also has some tailspins into Tulpamancer in terms of it being like recombinant in terms of the storytelling, like participatory. Tulpamancer is more of like a reaction, like it is fundamentally different in terms of how it ingests processes and responds to you. And that was sort of, I think, like the first step came out of that week. And then this fall, we essentially started figuring out what the backend could look like, how it could be, you know, what the interface could look like. And I'll kick this back over to Mark, because then he sort of, as he's want to do, went off on a deep research spin and came up with a lot of the elements that sort of factored into the prompt engineering for how the story unfolds itself.

[00:27:01.260] Marc Da Costa: Totally, yeah. I mean, you know, and I think at a high level, you know, so the Golden Key, the name actually comes from a Brothers Grimm story that would often appear at the end of their collections, and it's a very strange story. It's very short, and it's like about a boy out in the forest as the sun is going down. He's out there collecting firewood. There's a little foreboding-ness. And then, you know, he stops to light a fire to warm up before going home, sees a golden key on the ground, digs a little bit more, finds a lock that the key goes in, puts the key in, turns it, and then the story ends. And it's this sort of like kind of open-ended bookend, in a sense, to these brothers' grim collections of, you know, which were these sort of European, largely kind of folk oral history tales and things. You know, and I think a big interest in this project as well for us is, you know, kind of exploring this question of like myth making and what are the myths that AI is writing? What are the myths that we're telling about AI? You know, what will it mean when we live in a world where you can't turn around without running into a story that is artificially generated? through this sort of technology. So we were really interested in kind of thinking about what would like a myth machine look like. And so we sort of, you know, as we were talking about it, we kind of imagined like, you know, oh, what would it mean if we were in some kind of post-climate collapse world? And the only thing that was left of this whole sort of mythological past that we're so steeped in right now was this like weird myth machine that people came on. So in the process of building it and thinking about how do we actually architect something like that, we dug pretty deeply into the academic literature around kind of folklore studies and this is a whole academic discipline, sort of came up in the 19th century when industrialization was happening and all these sort of cultures, village life, kind of rural culture was dying off and there was an interest in collecting it and systematizing it and classifying it. So we found some databases, you know, one of which is like a database of story motifs. So you can think of, you know, the magic castle, the enchanted wand, the cursed tortoise shell, whatever it might be. You know, basically a huge database of these things. And then a smaller database of kind of story archetypes from folklore. So you can think about, you know, little girl walks in the woods, goes home, sees her grandmother, turns out it's a wolf, eats her. You know, this is like a archetype, if you will. and basically kind of, you know, structured all of these sort of input streams, and as Matthew was saying, created basically like a context for those to be recombined in kind of a random reshuffling way with what people are typing into these kiosks. And in the South by Southwest, there were two kiosks on the floor. And this is something I think we may continue to evolve and kind of think about. But the way it was structured at South by was that these kiosks basically took these story motifs, made questions out of them, and posed these questions to the audience and put them within like a one minute time box to sort of answer it so it's not like some the interaction wasn't that you could go up and pound through these things and just keep hitting the enter key but was sort of inviting people to pause and think about how they wanted to sort of be included in the story yeah and to

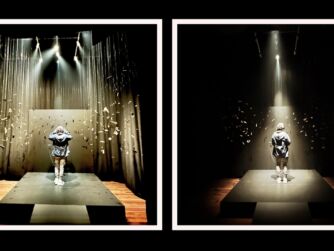

[00:30:33.270] Matthew Niederhauser: continue diving in. And I would actually be completely remiss if I also didn't mention our amazing technical director, Aaron Santiago, who also worked so closely with us to move all of this information around on this really complex back-end system that was then, as Mark was explaining, recombining these different indexes and academic databases of both the motifs and the archetypes, the folktale types. And we then, in terms of its presentation, routed it through TouchDesigner in order to create this massive three-projection mural. And that, in some ways, almost felt like, I almost think of it like as panels on a church or even a graphic novel strip type format. where we were actually at one point thinking about, okay, like let's prompt each one of these windows differently, but we actually ended up just sending the same prompt to all three every single time. And it just, insofar as the way these generative machine learning models work, every single time you ask it, especially in text to image, it's going to give you something different back. It is fundamentally stochastic and I don't want to say completely random. You can get close to recreating the same image if you use the same seed every single time, but there was this really amazing, not always immediately appreciated sort of sitting there, but you're literally looking at the machine learning taking the exact same prompt and then seeing the slight variations. And sometimes there would be, you know, it would look repetitive, but sometimes it would look like motion playing out or different perspectives on the same scene. And even in some crazy ways, we've had some, we're almost created like a practically, I feel, look like a seamless three-paneled sort of window. And we were once again using some of the tools in Stable Diffusion so that we could create like a slight parallax movement as well that sort of gave it this like a little bit of a mesmerizing look to it. And once again, we were working like with a set of looks that we engineered as well. A lot of them based off of 19th century sort of classic illustrators who worked with folktales that gave it some of these like classic looks that we are used to. And the combined effect really like created this Revenant type space, especially with the constant sort of voiceover going. So as Mark mentioned, I think we're still thinking a little bit about the UI and like how, you know, we prompted people to sort of engage with the story, you know, as with all festivals, we were like, turn it on. And this is our first time seeing a massive amount of people interact with it for the first time. And it was a lot of people, you know, we were having over a thousand people coming through that room per day at South by Southwest, obviously a much larger throughput than Tolkienmanster. But, you know, it was sort of amazing watching people, I'd say even sometimes the joy of seeing how their input in the stories got recombined into these motifs and archetypes. And we didn't want to make it one-to-one, like, oh, I'm going to type something and boom, it's up. And I'm going to type something and boom, it's up. But encouraging them to answer three questions or do three different prompts, we had this 60-second timer that would let you input, and then it would take your input away and incorporate it into the next story. So we would encourage people to input two or three different ideas or a continuous idea, and then to sit down and then watch it sort of emerge with this never-ending story. Obviously, there are some people who didn't have the patience for it. And we're like, oh, cool. It's like a video. And then other people who really got into it. And what I did for you, Kent, was insofar as we were really playing with it for the first time, obviously learned how to jam it up real good which would basically mean if you really sat there and typed out three full paragraphs in a row like a story and I think for you is about how you were an otter wrestler and you loved the otters you had this great relationship and then you wanted to take a rocket ship to the moon But then you realize how much you missed the otters and decided to come back and all the otters missed you too. So I jammed it up for you and then it caught an entire story where that became the real dominant elements. And there are some other factors as well that probably came in from the mix of the archetypes and indexes, but the amount you put in, the amount you could get back out. And so it was really fun doing that with people. And when, when people like sort of figured out, you know, they'd go and type and then they'd watch and they came back and they would sort of reinsert themselves into the story again. There's just like a really large spectrum in terms of how people could interact, appreciate it, and start also to sort of understand or at least bring a little bit of a critical edge about how these technologies work with you in terms of potentially telling stories, how you can shape them, and how it might try to reinterpret your input. So there's, I don't know, there's a ton of implications we feel like about the piece itself, but it was a real sort of joy to like see it come alive at that scale, you know what I mean, in Austin. So it was an amazing week.

[00:36:44.764] Kent Bye: Yeah, I think both Topalmancer and The Golden Key are pointing towards this holodeck type of future where you're able to speak out with natural language and say, I want this type of experience or this type of story. And then you're able to, with Topalmancer, have a very personal story that's kind of architected in a way that looks at your past, present, and future. And The Golden Key seems like more of a endless folk tale, fairy tale, folklore type of integration. I'm wondering if you maybe elaborate on the back end, because I know last time we talked, you were talking about, OK, things moving forward, we want to take stuff that maybe is in the cloud now, move it into more local models. You have stable diffusion, which is obviously local, and you had some cloud rendering in the past, but now being able to render out stuff locally. There's also the potential of having some of the large language model stuff move locally. Sounds like you're still using chat 3.5 turbo with the Topelman server. I'm not sure if you switched your large language model. Also just the Ouroboros feeding these systems into each other to be able to create Topomancer. And if there was any of that continuation of that multimodal kind of swapping or being able to feed it into itself. And also just if there's a kind of a cyclical nature of like, if you have a set of prompts that then repeat after a certain amount of time, or if it really is this generative system that can just kind of get started and have a variation forever. So I'd love to hear a little bit more about some of the backend and pipeline for generating the golden key.

[00:38:07.667] Marc Da Costa: Totally. I'm happy to jump in there and have to echo what Matthew said and give a huge shout out to Aaron Santiago, who is our technical director, without whom none of this would have come together in the way that it did. And I am very in awe to be able to report that we actually had zero technical problems the entire time we were at South by Southwest, which always feels very nice and is a testament to Aaron's work in that regard. But to dig more directly into your question, Kent, I think it's interesting. I mean, in many respects, the backend stacks for both Tulpamancer and the Golden Key are very similar, at least in terms of some of the core services that are required. So it was actually a very interesting experience for us because, you know, we showed up in Austin with like a dozen very powerful computers, and this was our first time deploying really like a cluster of computers that could service multiple experiences at the same time. And so, you know, we've had issues in the past with the text-to-speech cloud services going down, you know, at times and really being quite problematic. And so this really became a very high priority for us, you know, in a festival context to be able to have all of that locally. And I guess it's sort of interesting in a way. I mean, you've got companies like OpenAI that are really super well funded, really at the top of the stack in terms of tech sophistication. You know, even they go down and have problems sometimes. But when you start looking at companies like Play.ht or Eleven Labs, which are the two big text-to-speech services, this is still a very experimental technology, and these are companies that have outages sometimes at very inconvenient times. For us, thinking about what is the balance in between doing stuff on the cloud, doing stuff locally became important. Another interesting aspect to that is to the extent to which these things are deployed at festivals, there's often a big range of internet reliability and connectivity at festival sites. South by Southwest, for instance, you know, it's in a conference hotel. It's extremely expensive to get dedicated internet lines there. I mean, it adds a lot of sort of complexity in terms of how much you feel comfortable relying on what you have in-house and, you know, what you have up in the cloud. So these are certainly some things we were sort of thinking about. You know, it's kind of interesting because in the process of developing these artworks, we're also developing these kind of technical products or infrastructures that are then very kind of flexible for us going forward. So being able to scale horizontally the number of stable diffusion servers we have and being able to queue those up to generate more images has given us a lot of flexibility, I think, when we're thinking about future projects and how we can extend all of these things. And yeah, no, so it's been very interesting when the pace of change that we're trying to incorporate, I would say really has sped up quite a bit. I mean, it's crazy, Matt. I remember when we were working like more in the ekphrasis zone in the beginning of 2023, everything was just like focused on the stable diffusion image generation. It was like a pretty tight aperture of stuff, but now it feels like with the video and the 3D modeling and all of the other stuff that's going, that the threads that you have to kind of follow to stay on top of stuff are proliferating a bit as well.

[00:41:48.679] Matthew Niederhauser: And I would say sort of the possibilities for new creative works of which I think we do have some tricks up our sleeves still, you know, we're just getting started. But yeah, I mean, just to build off of what Mark was saying, there's something to be said about having everything local. And there could be some text to text models out there that could work. But the big thing about ChatGPT, which is for some people they consider a bad thing, but also for us in terms of having a public facing machine learning installation that we don't intercede with, that we sort of build and let people interact with. ChatGPT does a very good job of trying to help with not safe for work type content. For people who would be, you know, trying to potentially input inappropriate things into the installation itself. So, you know, when you're working on like an API level with chat, CPT, you do have the ability to request very specific models. And we did, you know, sometimes we actually do use four and 3.5 differently, depending on. different parts of that stack that is sometimes talking back to itself, especially in terms of deriving the stable diffusion prompts based off of essentially the script and text-to-text generation. That can always be recreated, I feel, going forward as well in terms of locking the exchange into a certain level of technology, even if the text-to-text is cloud-based. But yeah, we had to get a custom power drop into that room, be a 200 amp power drop because of the number of computers we were running. I think we had to ship down nearly 600 pounds, 700 pounds of gear to Austin to get this working. And, you know, we were really, the infrastructure was much more advanced compared to anything we did at Venice and Geneva, and trying to prove that we could also start working at scale. And all of these works at Frasis, Parallels, you know, Topamancer, the Golden Key, speak to each other, you know, and they're using a lot of the same tools, even if in very fundamentally different contexts and forms of interactivity and aesthetics, presentation. But, you know, we do see them as a group of works that we're also trying to find ways to show together, you know, further down the line, hopefully. And that infrastructure in terms of being able to run them stably is important. So, you know, that definitely would not have been able to move as fast as we did without the wizardry of Aaron. And, you know, I think we're very excited to continue working together as a group, you know, to start addressing, especially some of these newer, more interesting tools that are creating really like text to 3D in a more responsive fashion and even just digging deeper on the possibilities for interactivity and responsiveness that are continuously just being amped up right now. So it's going to be a very interesting decade coming down the line in terms of how we continue to seek to use these tools to create compelling art. And this is a really, as I said, I put on my first headset in the 90s and you know, now it's really starting to work now. It's an exciting time to be interacting with these tools. And we didn't have the best delivery in terms of our award speech at South by Southwest, but we definitely got up there and we were excited and we were like, We used to be cinematographers and photographers too, but you guys need to engage with these tools because they're coming. Think about them. You know what I mean? And it was like silence. But it's something that we think artists need to work with and discover, and ideally, audiences to engage with and think critically about. And so we also hope to further that end as well.

[00:46:18.115] Kent Bye: So I know at South by Southwest, there was the film festival and then the technology conference. And I know that before some of the film festival screenings, they were showing clips from the technology conference. And on Tuesday, they showed a bunch of AI hype clips. Someone from OpenAI is saying that AI is going to make us more human. And then the film audiences were actively booing a lot of these social claims that were coming from the technology side. So it's the first time that I've seen like the peak of the hype cycle on the one side with South by Southwest having over 160 different panels on AI. And then on the film side, you have this immediate backlash or tech lash or profit disillusionment, very skeptical of some of these social claims that are being made. And so when I think about four or five years from now, what if every single project was using the same model, What does that do for cultural production? Does it stifle our creativity if all the voices that are coming forward are of stuff that is synthesizing the voice and the perspectives? I know that you can prompt it, but I'm just wondering of like the implications of like, as we move forward and we're moving more and more of our cultural production into these types of synthesized AI models, if you think that the diversity of voices can still come through with how you're framing it, or if there's like these deeper implications of what's it mean to have more and more of our cultural production being synthesised into these models of AI?

[00:47:37.147] Marc Da Costa: Yeah, it's a thought-provoking question. I mean, from my perspective, You know, there's a lot to unpack there. I mean, I think first and foremost, and it was, you know, I think very interesting. And certainly I think when we last spoke and met Kent in Venice, we're in the middle of the Hollywood strikes, you know, we're very much so tied to AI and sort of the issues around it, you know, that we're coming down the pipe of A, having these massive social capital kind of labor issues that are going to spread across the society and probably reinforce the sort of power structures and inequalities that are already here. I think it's something that's super important for people to be tracking and paying attention to. I mean, I think also, you know, we can't forget where we were 10 years ago, or a bit more at this point, when the social media and the internet went from a fun, cool new place to what we now call surveillance capitalism. And we really realized through the Edward Snowden disclosures and a slew of other things, the ways in which the profit motive swooped into this potentiality and really weaponized it in a way that isn't necessarily in the best interest of all of us. And I think we cannot but look at the current situation with AI, where we have all of this exciting capability coming out, and not, as a society, be super concerned and super focused on what the outcomes of all of this is going to be writ large. And when we sort of narrow the aperture, I think, into the realm of culture and art, and when we start to think about, you know, will we lose the diversity of voices? Is this going to kill creativity? And these things, I mean, I think, from my perspective, These things remain tools that artists can use, and I think there will always be how human creativity is applying them and creating from them. There is certainly the concern of like, we're going to run out of training data because it's all full of synthetic stuff that's like derived from these machines. And then, but I'm sure some clever tech capitalists will figure out a good path forward to keep the train moving, but it's going to be a wild ride that we need to keep a close eye on, I think.

[00:49:57.952] Matthew Niederhauser: Yeah, this is, this is something that we're thinking about a lot. I would say, especially there's, you know, insofar as we've gone very deeply into the world of prompt engineering and it's, you know, the representations that come out of it. you know, we run into and see the biases in the data very quickly as well. And you can also imagine that when we're trying to create this never-ending story that, you know, quite frankly is based off of indexes of these archetypes and motifs that, yes, are largely like North American and European, you know what I mean? There are Asian and African motifs in there. And Quite frankly, the diversity of it is strangely very human in terms of these storytelling archetypes that have appeared across all cultures. It's something that is insofar... I've sort of been obsessed with folktales and especially the role of the trickster. It's something I sort of wrote my MFA thesis about. And we felt that there are some universals present in terms of the recombinant data that exists there. The biggest biases we definitely see are in the text to image. You know what I mean? Especially if we're looking at like 20th century like illustrators of consistently having dewy white fairy tale princesses appearing on the screen. And we definitely tried, I don't say just tried, I definitely found some ways in which to work with the prompts to try to create images that had racial diversity in them. And I think we're successful with some of them. But there's also a question in terms of how we're using this Where, you know, that doesn't just solve the problem, you know what I mean, as well. And so it was almost this gray area that we're playing, not I would say playing with, but thinking about as artists, you know, where, yeah, like you can get in there. and try to basically sidestep or force the system to create, for Mark and I, what we think is a more equitable representation. And does that make everything all right? I don't think it does. You know what I mean? We were trying to continue to figure out that role mainly by also talking to other artists working in this realm. I mean, I always especially love to point to the work of Stephanie Dinkins, who is somebody who's really interrogating existent biases in large language models and in other places. I would say that we draw a lot of inspiration from in terms of our own practice. But it is something we encounter consistently. But I personally still sort of stand by what I was saying earlier. Awareness comes from engagement and also understanding. And this technology is coming in across the board. As we're talking about within the lens of culture and art, It is going to be as impactful and manifest in so many different ways ever since the assembly line. It's, I feel, going to herald in a new fundamental shift in economics and industry and in media consumption, you know what I mean, in a very major way. I think that we can approach this with greater criticality than even me signing up for Facebook in college and posting dumb photos of myself. You know what I mean? We're living in a substantially different world in terms of our awareness of media and its implications and how the medium is the message in so many ways. So, I don't know. We're trying to walk this balance, you know, where there might not be an overt criticality in the piece itself. And it's something as artists, I think we're thinking about, do we try to create a harder edge within the piece itself? You know what I mean? But they're not like slick. You know what I mean? You can see where it goes off. It's like, it's mesmerizing, but it's also uncanny. And I think we try to tread that line a bit in terms of, you know, the works make you interact, but also don't make it easy. You know what I mean? And hopefully we leave an audience member thinking more deeply about the implications of the technology and where it's going.

[00:54:43.825] Kent Bye: Right. And as we wrap up here, I'm curious if you have any final thoughts or any sense of where things might be going in terms of the interactions of XR and AI as you've been working on this for the past year plus now looking at this intersection and really being at the cutting edge of where things are at and where they're going. So I'd love to hear any final thoughts as we move forward.

[00:55:03.848] Marc Da Costa: I think it'll be super interesting as we start getting more into the video and 3D world, that there is sort of a level of, I think there's another boundary that will be crossed in terms of, I don't know, sort of liveliness of the kind of assets that are generated from all of this. There's a sense in which the text stuff has gotten very, very good. And then the kind of like 3D visual aspects I think are trailing behind, but I think are posed for for a jump ahead as well.

[00:55:35.283] Matthew Niederhauser: I would say, even just saying it, like seeing this technology starting to infiltrate all sorts of different, especially like mobile app touchpoints that we interact with on a day-to-day basis, you're just going to see this type of media, I mean, obviously already growing on social media, but also in terms of how it mediates actual news sources, podcast, video. I mean, we already see the freak out that's occurring just by seeing some clips coming out of Sora and OpenAI. It's going to become more and more. Man, one of the craziest things I did see at South by Southwest, it was really unfortunate. Obviously, during the XR experience, during the exhibition, We were just really upstairs focused on our piece, and especially watching how people were interacting with the Golden Key, thinking about how we were going to iterate on that moving forward, tending the data farm, if you will. And I didn't get to see a lot of the other experiences, which is always disappointing because, you know, one of the most influential creative moments for me have always been, A, to be fortunate enough to participate in the festivals, but also to see other work and the community of other creatives and artists in this world, I think. are some of the most interesting out there. But I did go over to, after everything closed down, we still had an extra day or two to see some music and actually went to the formal floor of the convention center. Man, the Korean metaverse pavilion blew my mind. And I was like, what is it? Everything was just like, boom, we are creating the K-pop metaverse right now, and you are going to love it. And it was text to speech, all of like automatic 3D model movement, haptics, like the stuff I saw in the Korean metaverse pavilion. And there was at least like 20 to 30 different startups in there. almost all of them touching on generative AI and machine learning. I was like, they are off to the races over there. And the golden key was cooler, I still think. But it was all these things I talked about, like the foreshadowing is coming. I was like, whoa, here's a very dedicated industry that is going digital and gen AI very quickly. And so my eyes are often toward South Korea and also Japan for that matter, in terms of very quick implementation, iteration and, you know, applications within like a pop cultural context. I thought that was fascinating, but yeah, lots of great work this year. I heard to the grapevine, the oral tradition of experiential media. I heard, this is what happened to me. It was actually my first South by Southwest ever. And it was a really amazing experience. And if anything, I could also like really thank the South by Southwest team for like giving us the opportunity to show the work at that scale. And we're looking forward to trying to continue to push forward in this medium. And it's a medium that's still a minefield, you know, access to most of the world right now. But yeah. We're going to keep engaging and it's a super important time to be aware and try to prepare yourself for what I feel is going to be a dramatically different, especially media landscape coming our way.

[00:59:14.895] Kent Bye: Awesome. Well, I know Matthew and Mark, you were able to really push forward the possibilities for the intersection of XR and AI with both Toplmancer and the Golden Key. And I think it's in terms of the overall ecosystem and the novelty of where the future of narrative is going to be going. really looking at this high level architecture of the story arcs, but also I think in the future, getting more into like the character based stuff that like nworld.ai is doing in terms of having individual NPC characters that are playing out that and how the individual characters are playing into the overall arc will be a very interesting areas as we move forward. I'm sure as we see more and more folks starting to look at these intersections and kind of building upon the ideas that you've been proving out in both Topalmancer as well as the Golden Key, the ways that these generative systems can start to feed into much larger worlds, much larger stories as people go forward. So very much a look into where things might be going here in the future and very much appreciate the time to talk about your process and your journey and a little bit more context for how you put together both Topalmancer and all the other projects as well as the Golden Key. Thanks again for joining me here to help break it all down.

[01:00:18.194] Marc Da Costa: Amazing.

[01:00:18.614] Matthew Niederhauser: Thank you. Always a pleasure. Talk to you soon.

[01:00:22.455] Kent Bye: So thanks again for listening to this interview. This is usually where I would share some additional takeaways, but I've started to do a little bit more real-time takeaways at the end of my conversations with folks to give some of my impressions. And I think as time goes on, I'm going to figure out how to use XR technologies within the context of the VoicesOfVR.com website itself to do these type of spatial visualizations. So I'm putting a lot of my energy on thinking about that a lot more right now. But if you do want a little bit more in-depth conversations around some of these different ideas around immersive storytelling, I highly recommend the talk that I gave on YouTube. You can search for StoryCon Keynote, Kent Bye. I did a whole primer on presence, immersive storytelling, and experiential design. So, that's all that I have for today, and I just want to thank you all for listening to the Voices of VR podcast. And if you enjoy the podcast, then please do spread the word, tell your friends, and consider becoming a member of the Patreon. This is a listener-supported podcast, and so I do rely upon donations from people like yourself in order to continue to bring you this coverage. So you could become a member and donate today at patreon.com slash voicesofvr. Thanks for listening.